Algorithm puts Cascadia earthquake timeline into question

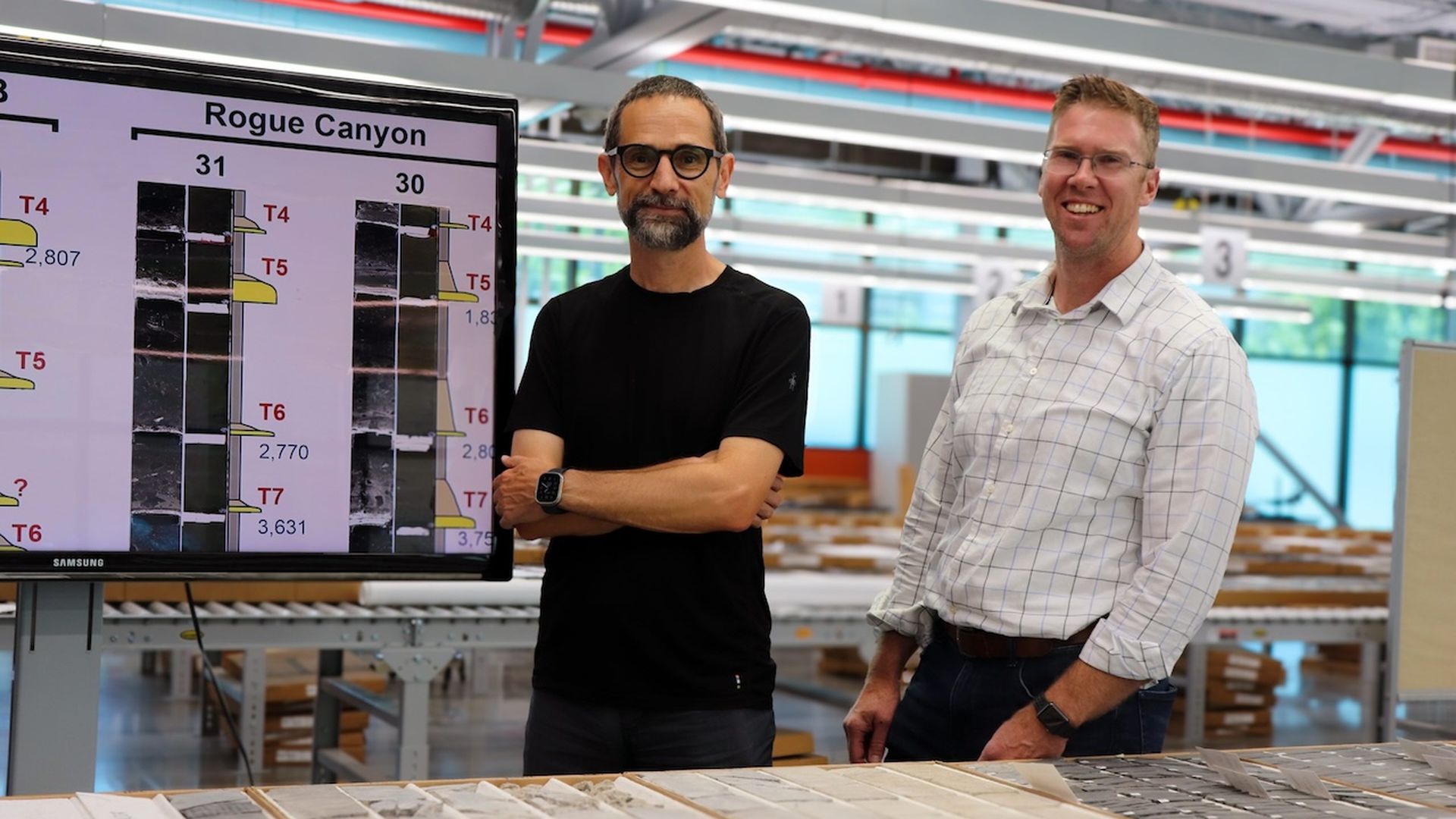

Research professors Zoltán Sylvester (left) and Jacob Covault (right) are co-authors of a new study on the Cascadia earthquake timeline. Photo: Courtesy of The University of Texas at Austin/Jackson School of Geosciences

A new algorithm used to analyze sediment layers of the seafloor is helping scientists pin down the timing of past Cascadia Subduction Zone earthquakes and better estimate when the next big one might come.

Why it matters: The Pacific Northwest is at risk for a massive, once-in-500-years earthquake, and many of our buildings and bridges won't be able to withstand it.

- While the timing of future quakes is uncertain, new research could help communities along the fault line better prepare their infrastructure, Joan Gomberg, a geophysicist at the U.S. Geological Survey, told Axios.

Context: The 700-mile Cascadia Subduction Zone runs along the coast of the Pacific Northwest — from northern California to southern British Columbia. It's located roughly 100 miles off the Pacific shoreline.

- The last earthquake that occurred here was a magnitude 9.0 quake in 1700, which also caused a tsunami to hit Japan, historical records show.

- For decades, scientists have been looking for signs of past earthquakes by analyzing rocks, sediment and topography to better understand the subduction zone's timeline.

- Right now, scientists estimate a major earthquake in the subduction zone takes place every 500 years or so.

Yes, but: "It doesn't happen precisely every 500 years to the dot," Gomberg said. That's what the new algorithm puts into question, she added.

What they did: Gomberg, along with researchers at the University of Texas at Austin, analyzed a selection of turbidites — sedimentary deposits found on the seafloor formed by earthquakes or underwater landslides — taken from the subduction zone region dating back 12,000 years.

- Previous turbidite research to correlate past earthquakes relied on visual comparisons to assess how similar each layer was to another, Zoltán Sylvester, a professor at UT Austin and co-author of the study, told Axios.

- The algorithm, on the other hand, can tell "the probability that two seemingly similar layers are actually the same" by analyzing X-ray-computed images of turbidite samples and corresponding magnetic logs.

What they found: Based on these characteristics, turbidites collected across large distances did not correlate as reliably as previously assumed, Sylvester said. Instead, the correlation was no better than random.

- This means the gaps between past earthquakes in the subduction zone may fall outside the previously estimated 500-year window. However, both Sylvester and Gomberg said more data is needed to further understand the fault's timeline.

- Unlike eyeballing turbidite samples, though, the algorithm can give future researchers a repeatable result, Sylvester said.
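
How it could work: The idea of testing whether two layers match "no better than random" can be illustrated with a simple permutation test. This is only a minimal sketch, not the study's algorithm, which analyzes X-ray-computed images and magnetic logs. The function names and the short number lists standing in for sediment logs are made up for illustration.

```python
import random
from statistics import mean


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def match_p_value(log_a, log_b, trials=2000, seed=0):
    """Share of random shuffles of log_b that correlate with log_a
    at least as well as the real pairing does (a permutation test).
    A small value suggests the match is unlikely to be chance."""
    rng = random.Random(seed)
    observed = pearson(log_a, log_b)
    shuffled = list(log_b)
    hits = 0
    for _ in range(trials):
        rng.shuffle(shuffled)
        if pearson(log_a, shuffled) >= observed:
            hits += 1
    # Add one to both counts so the estimate is never exactly zero.
    return (hits + 1) / (trials + 1)


# Made-up readings for three hypothetical layers (not real data).
layer_a = [1.0, 1.4, 2.1, 3.0, 2.2, 1.5, 1.1, 0.9]
layer_b = [0.9, 1.5, 2.0, 3.2, 2.1, 1.4, 1.2, 1.0]  # resembles layer_a
layer_c = [2.0, 0.9, 1.6, 1.1, 2.4, 1.0, 2.2, 1.3]  # unrelated pattern

print("a vs. b:", match_p_value(layer_a, layer_b))
print("a vs. c:", match_p_value(layer_a, layer_c))
```

Because the shuffles use a fixed seed, anyone running the code on the same inputs gets the same number, which is the kind of repeatability a visual comparison cannot offer.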

The bottom line: Knowing more accurately when earthquakes can strike "has real-world implications," Gomberg said.

- As this new tool develops, Gomberg said, it could assist stakeholders in the Pacific Northwest with crafting building codes and designing long-lasting, earthquake-resistant infrastructure, as well as with deciding where to place underwater fiber-optic cables used for telecommunication and energy production.