Study finds ChatGPT reflects stereotypes of U.S. cities and countries

Illustration: Sarah Grillo/Axios

Researchers have found that large language models (LLMs) like ChatGPT are just as biased as the rest of the world.

Why it matters: Last year, over 50% of adults in the U.S. reported using LLMs. As more people rely on the information these platforms deliver, geographic, racial and economic stereotypes are perpetuated further.

Driving the news: Internet scholars at the University of Oxford and the University of Kentucky recently examined how ChatGPT compares 91 U.S. cities with populations over 250,000 across a range of categories, including social and physical attributes, food quality, governance, politics and business climate.

Zoom in: The authors define the "silicon gaze" as "shaped by the positionalities and power asymmetries of its training data, designers, and platform owners." For AI models, those sources tend to be predominantly male, white and Western.

The other side: "ChatGPT is designed to be objective by default and to avoid endorsing stereotypes," a ChatGPT spokesperson tells Axios in a statement.

- "Research based on forced-choice prompts and older models doesn't reflect how ChatGPT is typically used or how current models behave today. We continue to improve how ChatGPT handles subjective or non-representative comparisons, guided by real-world usage, ongoing evaluations, and user feedback."

How we did: Chicago ranked in the top 10 U.S. cities for being fashionable and was rated smarter than all but 14 other cities. Chicago also ranked high for "nurturing creative minds."

- On a less positive note, ChatGPT thinks we're "smellier" than a lot of cities. In fact, only 12 cities are smellier. (Sorry, New Orleans, but you stink, apparently!)

- Our food scene still reigns supreme, coming in No. 5 for overall food quality.

Whoa … whoa … whoa: Apparently, we're not friendly, falling in the bottom 10. Who the heck you calling not friendly?!

Try it out: You can spend hours digging into dozens of qualities ChatGPT attributes to Chicago, comparing it to other cities in those same areas.

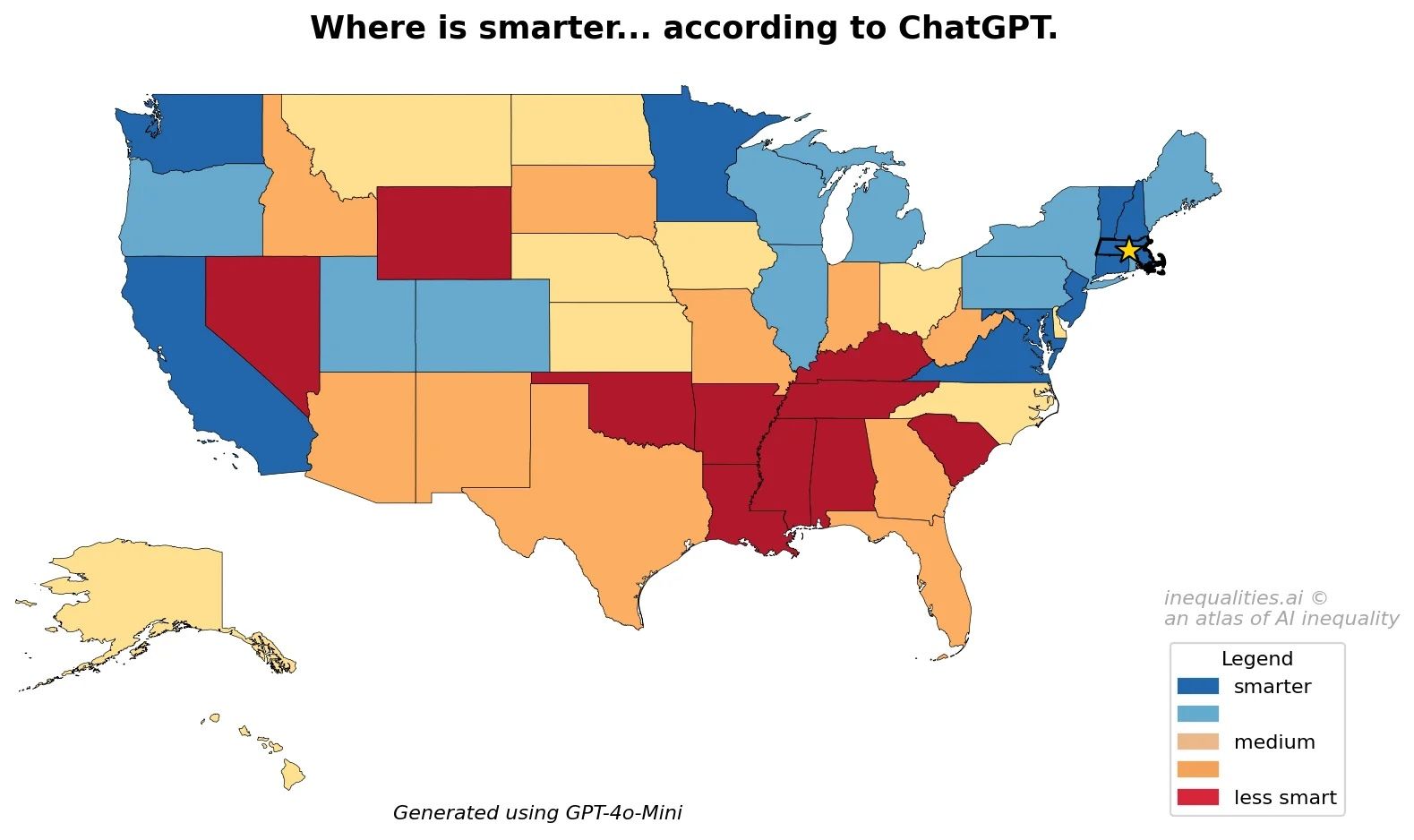

Yes, but: LLMs train on what's already on the internet, so stereotypes ranging from the benign, like "southern hospitality," to the more damaging, like "southerners are lazier and less intelligent," will prevail. In the case of Chicago, harsh winters will push us down in quality-of-life comparisons. Let's be honest, "polar vortex" is just not inviting.

- Questions and comparisons based on very subjective criteria such as likability, attractiveness, or intelligence favored higher-income areas.

The bottom line: The bias from LLMs can extend into policies and decision-making if their output is not viewed with a critical and nuanced lens. In other words, do your research and don't limit your sources.