How AI is supercharging cybercrime

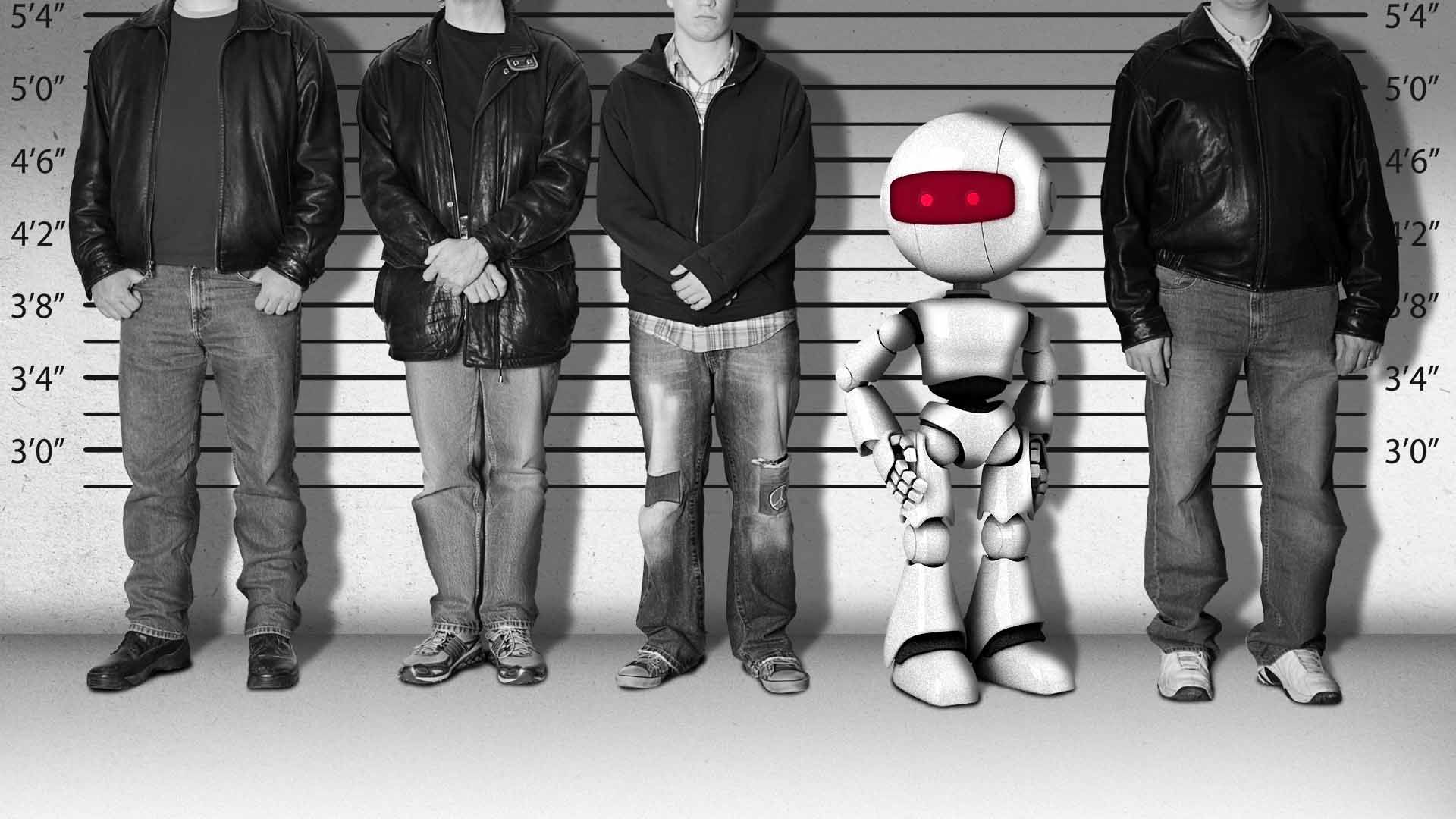

Illustration: Sarah Grillo/Axios

Artificial intelligence is quickly rewriting the playbook for crime, from cheap deepfake scams and AI-written ransomware to mass identity hijacks and critical-infrastructure hacks.

Why it matters: AI-supercharged crime is putting lives and financial systems at risk, vastly increasing the threat from cybersecurity incidents, such as those that targeted the Port of Seattle and Seattle Public Library last year.

Zoom in: While the recent attacks in Seattle don't appear to be explicitly AI-driven, they hint at the devastation possible if the same ransomware playbook is supercharged by AI — making intrusions faster, cheaper and harder to stop.

How it works: Off-the-shelf AI lowers the skill level and cost of carrying out attacks, enabling small crews to execute schemes that previously required nation-state resources.

- Crimes can now hit millions at once with voice clones and account takeovers, while local agencies are trained and funded to chase one case at a time.

Catch up quick: When Rhysida ransomware hit the Port of Seattle in August 2024, the attack disabled airport kiosks, baggage systems and Wi-Fi, while exposing data for roughly 90,000 people.

- About two months earlier, the Seattle Public Library suffered a ransomware attack that wiped out its catalog, computers, Wi-Fi and e-books for roughly three months.

- The port said it refused to pay ransom and had restored most operations within a week. The library said recovery cost about $1 million.

Zoom in: AI can create automations to "lock pick" into a system millions of times per second, something humans can't do, futurist Ian Khan tells Axios.

- Once inside, hackers can use AI to steal identities, pump and dump stocks, and wreak havoc on water plants, smart homes and hospitals.

- The attacks can come from anywhere, whether across the street or the other side of the world, said futurist Marc Goodman, author of "Future Crimes: Inside the Digital Underground and the Battle for Our Connected World."

- Deepfake voices can persuade victims to hand over money, and stolen identities could lead to voter fraud and false arrests.

The latest: Chinese state-backed hackers used AI tools from Anthropic to automate breaches of major companies and foreign governments during a September cyber campaign, the company said this month.

- "We believe this is the first documented case of a large-scale cyberattack executed without substantial human intervention," the company said in a statement.

State of play: Generative AI has increased the speed and scale of synthetic-identity fraud, especially across real-time payment rails, according to the Federal Reserve Bank of Boston.

- A deepfake attack occurred every five minutes globally in 2024, while digital-document forgeries jumped 244% year-over-year, the Entrust Cybersecurity Institute found in a report.

- U.S. losses from fraud that relies on generative AI are projected to reach $40 billion by 2027, according to the Deloitte Center for Financial Services.

What's next: Federal and state lawmakers — including in Washington — are scrambling to catch up, rolling out new laws to curb AI-driven cybercrime.

- Washington state now criminalizes harmful deepfakes and requires labels on synthetic political ads.

- Congress, meanwhile, has passed the TAKE IT DOWN Act and is weighing broader protections, including a bill backed by U.S. Sen. Maria Cantwell (D-Washington) that would require a traceable marker on AI-generated content.