Axios AI+

February 28, 2024

Ina here, reminiscing about Lowly Worm and the other, more enduring apple car. Today's AI+ is 1,272 words, a 5-minute read.

1 big thing: AI software bugs are different

Illustration: Brendan Lynch/Axios

Generative AI is raising the curtain on a new era of software breakdowns rooted in the same creative capabilities that make it powerful, reports Scott Rosenberg.

Why it matters: Every novel technology brings bugs, but AI's will be especially thorny and frustrating because they're so different from the ones we're used to.

Driving the news: AT&T's cellular network and Google's Gemini chatbot both went on the fritz last week.

- In AT&T's breakdown, a "software configuration error" left thousands of customers without wireless service during their morning commute.

Google's bug was very different. Its Gemini image generator created a variety of ahistorical images: When asked to depict Nazi soldiers, it included illustrations of Black people in uniform; when asked to draw a pope, it produced an image of a woman in papal robes.

- This was a more complex sort of error than AT&T's, at the boundary between engineering and politics, where it looked like a diversity policy had gone haywire. Google paused all AI generation of images of people until it could fix the problem.

- Google CEO Sundar Pichai sent a memo to staff Tuesday, saying of Gemini, "I know that some of its responses have offended our users and shown bias — to be clear, that's completely unacceptable and we got it wrong."

Conservatives shouted, "Woke!" But Google was taking precautions against bias much like those every AI company has learned to apply after years of embarrassing missteps — particularly in the realm of human images.

- Those problems have dogged the field because the data used to train generative AI models, typically culled from mountains of web pages, is full of the biases of the humans that created it.

- AI firms could have reduced these problems by spending a lot of time and money up front to curate their models' training data. Instead, they are now applying spotty Band-Aid patches to the problems after the fact.

The big picture: In both the AT&T and the Google incidents, systems failed because of what people asked computers to do.

- AT&T's wireless service crashed when it tried to follow new instructions containing an error or contradiction that caused the system to stop responding.

Yes, but: Most AI systems don't operate by commands and instructions. They use "weights" (learned numerical values that set the probabilities of possible outputs) to shape what they produce.

- Developers can put their fingers on the scales — and clearly they did with Gemini. They just didn't get the results they sought.

Zoom in: Gemini isn't able to "think" clearly about the difference between historical research and "what if?" creativity.

- A smarter system — or any reasonably well-informed human — would understand that the Roman Catholic Church has never had a pope who wasn't male.

Zoom out: Making things up is at the heart of what generative AI does.

- Traditional software looks at its code base for the next instruction to execute; generative AI programs "guess the next word" or pixel in a sequence based on the guidelines people give them.

- You can tune a model's "temperature" up or down, making its output more or less random. But you can't just stop it from being creative, or it won't do anything at all.
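For the technically curious: here is a minimal sketch of what turning the "temperature" dial can look like, assuming a toy model that has already scored three candidate next words. The candidate words, their scores and the function names are hypothetical, chosen only for illustration. This is not how Gemini or any specific product is implemented.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max so exp() stays numerically stable
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_word(candidates, logits, temperature, rng=random):
    """Pick one candidate at random, weighted by its tempered probability."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical candidates and scores, invented for this example.
candidates = ["robes", "uniform", "crown"]
logits = [2.0, 1.0, 0.1]

cool = softmax_with_temperature(logits, 0.2)  # low temperature: less random
warm = softmax_with_temperature(logits, 2.0)  # high temperature: more random
```

With these made-up scores, the top candidate gets roughly 99% of the probability at a temperature of 0.2 and roughly 50% at 2.0. Even at the low setting, the program is still choosing among guesses instead of looking up an answer.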

Between the lines: The choices AI models make are often opaque and even their creators don't fully understand how they work.

- So when developers try to add "guardrails" to, say, diversify image results or limit political propaganda and hate speech, their interventions have unpredictable results and can backfire.

- But not intervening will lead to biased and troublesome results, too.

What's next: The more we try to use generative AI systems to accurately represent reality and serve as knowledge tools, the more urgently the industry will try to place boundaries around their "hallucinations" and errors.

- Over time, as the field learns from experience, AI programmers could succeed at taming and tuning their models to be more factual and less biased.

- But it could turn out that using generative AI as the fundamental interface for knowledge work is just a very bad idea that will continue to bedevil us.

2. OpenAI's Sora isn't the only AI movie maker

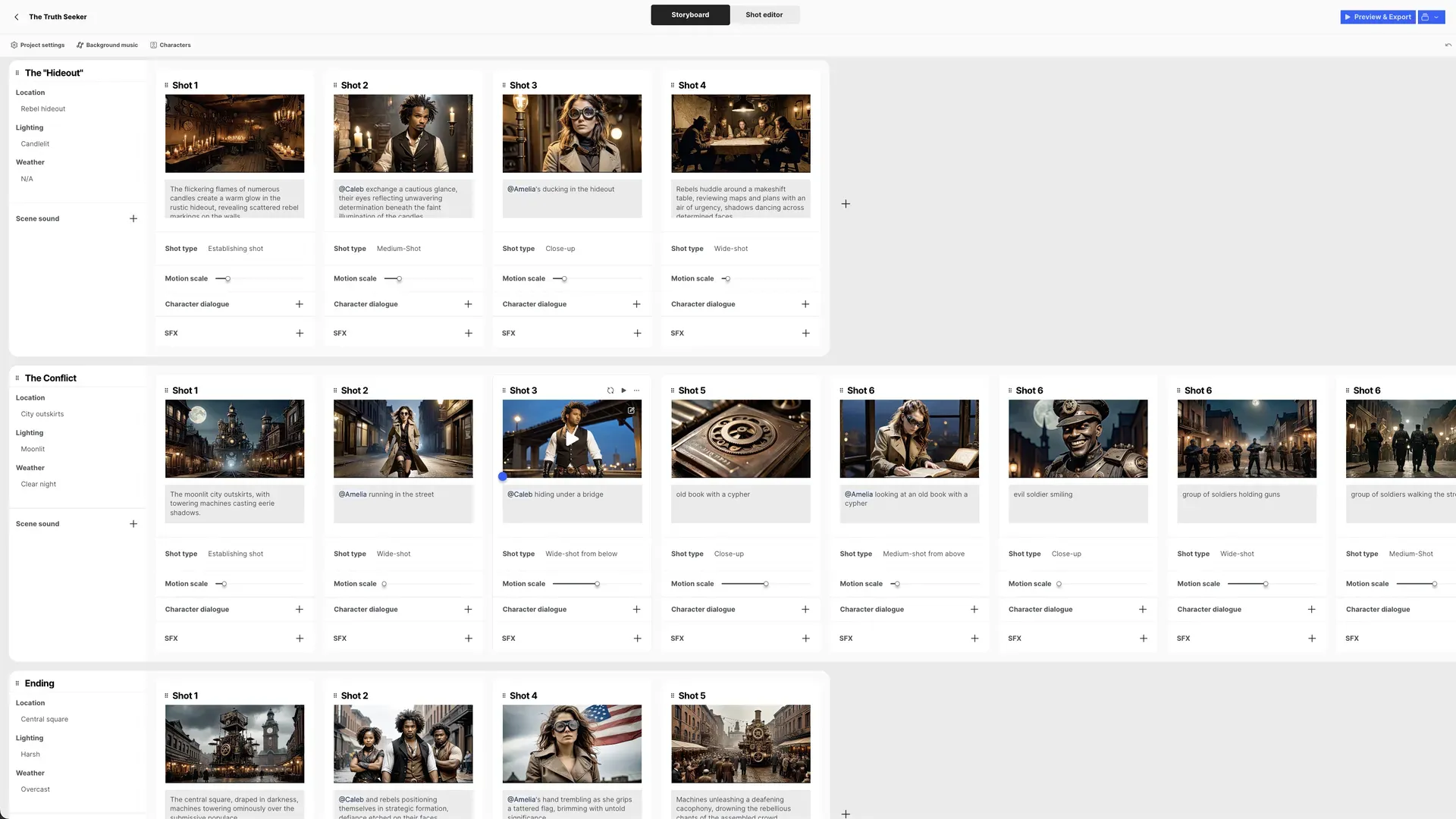

A screenshot from LTX Studio, a forthcoming AI video editing tool from Israel's Lightricks. Image: Lightricks

Lightricks, the Israeli startup behind Facetune and Photoleap, announced a new AI video tool called LTX Studio that can generate characters, scenes, storyboards and even entire movies using only text descriptions.

Why it matters: Proponents argue such tools can help democratize the filmmaking process, while some creative professionals worry that generative AI tools will eliminate jobs and hurt the arts.

The big picture: There are a number of products that create individual video scenes from prompts, including from Runway and the forthcoming Sora from OpenAI. LTX Studio is designed to allow a greater degree of editing and control across a longer production.

How it works: Unlike Lightricks' other tools, LTX Studio is browser-based, which means it should work across a wide range of devices, including PCs, tablets and smartphones.

- Users write text descriptions that generate characters and plot and then choose from a variety of camera angles and styles, like "cinematic" or "anime." Music and audio can be added and many of the elements can be changed and customized, with characters remaining consistent from scene to scene.

- The product won't be available until an event on March 27, but customers can join a waiting list to gain access after launch.

- Lightricks said the product will initially be free. CEO Zeev Farbman says the company will eventually charge, but hopes not to have a price for each edit made with generative AI, as some other tools require. "It just doesn't lend itself to the creative process," he said.

Between the lines: Lightricks expects the first version of the software to be used more for smaller efforts or commercial storyboarding and pre-production than for creating finished movies.

- Over time, Farbman expects software like LTX Studio will lead to a greater range of film projects being produced. "Very small crews will be able to create some amazing stuff," he said.

Lightricks has been working on LTX Studio since last year, with about a quarter of the company now devoted to the project.

- Longer term, Farbman has said he expects many other areas of software will be disrupted.

- AI is a "game-changer, meaning that we completely need to revisit our technological stack because it's obsolete," Farbman told Axios in December. "It's true for us. It's true for Adobe, Autodesk, any toolmaker."

Zoom in: Lightricks used LTX Studio to create a video trailer for Axios, in a steampunk style based on the prompt: "An intrepid journalist tries to find love and truth in a world dominated by a brainwashed populace and machines that confidently spew gibberish."


3. Training data

- OpenAI is asking a federal court to throw out the New York Times' lawsuit, maintaining the newspaper had to "hack" ChatGPT in order to get the system to spout full Times articles. (Axios)

- Sources told Bloomberg that Apple is shutting down its electric car division and transitioning those working on it to the AI team. (Axios)

- Ukraine's national security adviser warned the U.S. and the U.K. that Russia is using new AI-powered tools to interfere with elections at a new, "exponentially greater" scale. (The Times)

- U.S. school leaders struggle to craft AI policies around plagiarism, bias, ethics, deepfakes and other conundrums. (Axios)

- Tumblr and WordPress.com are planning to sell user data to OpenAI and Midjourney, but Automattic (which owns both services) says it will let users opt out. (404 Media)

- Google is paying some independent publishers a "five-figure sum" to publish content using its generative AI tools for journalists, according to documents seen by Adweek.

4. + This

I have a feeling we are going to see a lot more real-life experiences that don't live up to their AI-generated marketing materials.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter.
