Axios AI+

March 14, 2024

Hi, it's Ryan. Today's AI+ is 1,268 words, a 5-minute read.

1 big thing: Generative AI's privacy problem

Illustration: Aïda Amer/Axios

Privacy is the next battleground for the AI debate, even as conflicts over copyright, accuracy and bias continue, Ina reports.

Why it matters: Critics say large language models are collecting and often disclosing personal information gathered from around the web, frequently without the permission of those involved.

The big picture: Many businesses are wary of execs and employees using proprietary information to query ChatGPT and other AI bots — either banning such apps or opting for paid versions that keep business information private.

- As more people use AI to seek relationship advice, medical information or psychological counseling, experts say the risks to individuals are growing.

- Personal data leaks from AI can take a variety of forms, from accidental information disclosure to data gained via deliberate efforts to break through guardrails.

Driving the news: Several lawsuits seeking class-action status have been filed in recent months alleging Google, OpenAI and others have violated federal and state privacy laws in training and operating their AI services.

- The FTC issued a warning in January that tech companies have an obligation to uphold their privacy commitments as they develop generative AI models.

"With AI, it's this big feeding frenzy for data, and these companies are just gathering up any personal data they can find on the internet," George Washington University law professor Daniel J. Solove told Axios.

- The risks go far beyond just the disclosure of discrete pieces of private information, argues Timothy K. Giordano, partner at Clarkson Law Firm, which has brought a number of privacy and copyright suits against generative AI companies.

Between the lines: While AI is creating new scenarios, Solove points out that many of these privacy issues aren't new.

- "A lot of the AI problems are exacerbations of existing problems that law has not dealt with well," Solove told Axios, pointing to the lack of federal online privacy protections and the flaws in the state laws that do exist.

- "If I had to grade them, they would be like D's and F's," Solove said. "They are very weak."

The big picture: Generative AI's unique capabilities raise bigger concerns than the common aggregation of personal information sold and distributed by data brokers.

- In addition to potentially sharing specific pieces of data, generative AI tools can draw connections, or inferences (accurate or not), Giordano told Axios.

- This means tech companies now have, in Giordano's words, "a chillingly detailed understanding of our personhood — enough ultimately to create digital clones and deepfakes that would not only look like us, but that could also act and communicate like us."

Building AI so that it respects data privacy is complicated by how generative AI systems work.

- Typically, they are trained on huge sets of data that leave a kind of probability imprint in the model, but they don't save or store the data afterward. That means you can't simply erase information that's been woven in.

- "You cannot untrain generative AI," said Grant Fergusson, a fellow at the Electronic Privacy Information Center. "Once the system has been trained on something, there's no way to take that back."

Reality check: In some cases, people can choose not to have their data used for AI training, though such policies vary and data sharing settings can be confusing and hard to find.

- Plus, even where users do offer consent, they might be sharing data that could impact the online privacy of others.

The other side: An OpenAI representative told Axios it doesn't seek out personal data to train its models and takes steps to prevent its models from disclosing private or sensitive information.

- "We want our models to learn about the world, not private individuals," an OpenAI spokesperson told Axios. "We also train our models to refuse to provide private or sensitive info about people."

- The company said its privacy policy outlines options for people to delete certain information as well as to opt out of model training.

What's next: AI companies could do more on their own, but Solove said that expecting companies to protect privacy without mandating it through law is probably unrealistic.

- "You're kind of telling sharks, 'Please sit down and use utensils,'" Solove said.

2. Here comes the humanoid robot revolution

Agility's robots are on trial assignment at an Amazon warehouse south of Seattle. Photo courtesy of Agility Robotics

Envisioning a day when hundreds of humanoid robots can be deployed at the touch of a button, Agility Robotics has announced its first fleet management platform, Axios' Jennifer Kingson reports.

Why it matters: There's intense competition among humanoid robot manufacturers to get their products into the production lines of companies like Amazon and BMW.

Driving the news: Agility Arc is a new cloud-based tool that'll be able to command a robot army for tasks like moving bins to a conveyor belt.

What they're saying: "The ability to control fleets of robots is something that everybody in the robotics business needs to do," Damion Shelton, president of Agility Robotics, told Axios.

Walking, dexterous robots are gradually making the leap from the science lab to the workplace, requiring more sophisticated management systems.

- Agility's Digit robot is being tested by Amazon and GXO Logistics, which recently deployed it at a Spanx warehouse in Georgia.

- See a video of an Agility VP telling Digit to "pick the box that's the color of Darth Vader's lightsaber and put it on top of the tallest box in the front row."

The other side: A competing robot maker called Figure garnered a massive investment from Jeff Bezos and OpenAI, and is staffing a BMW production line.

- The Figure 01 is an OpenAI-powered dexterous robot that can have full conversations, per a demonstration video released yesterday.

Yes, but: The Occupational Safety and Health Administration and other federal agencies are still working out how to set safety rules governing the new metallic workers.

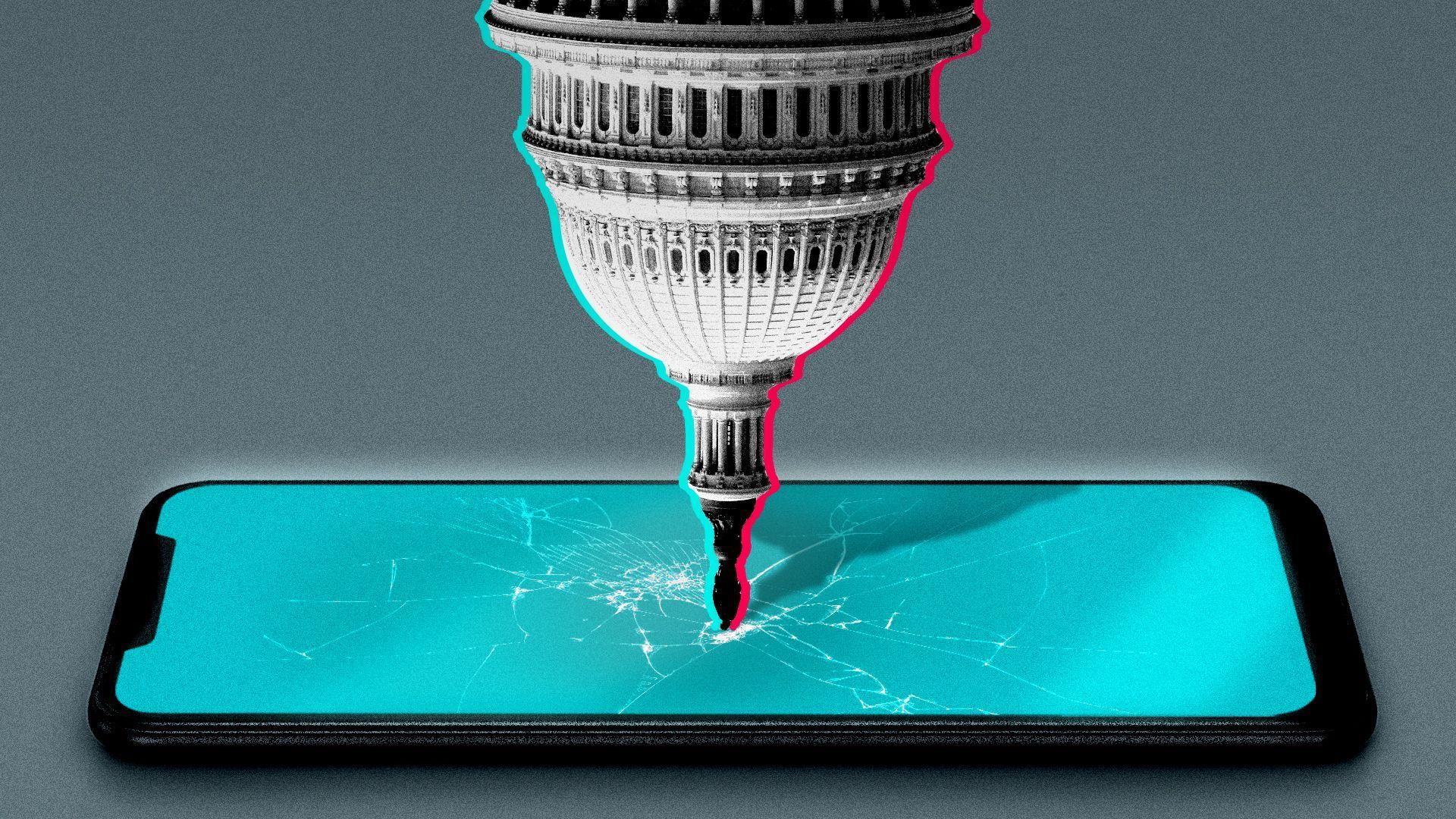

3. Washington's TikTok crackdown: What's next

Illustration: Shoshana Gordon/Axios

The House overwhelmingly passed a bipartisan bill yesterday to force China-based ByteDance to sell TikTok or face a ban in the U.S., Axios' Ashley Gold, Maria Curi and Sareen Habeshian report.

Why it matters: Lawmakers decisively passed the bill in a 352-65 vote, and moved with unusual speed — the bill didn't exist eight days ago.

The big picture: While President Biden, whose campaign recently joined the app, has vowed to sign the legislation if it reaches his desk, it's unlikely the app is going anywhere anytime soon.

- The bill faces an uphill climb. There is no Senate companion bill, and any bill will likely face a filibuster.

Zoom in: Opinions in the Senate are varied.

- Sens. Mark Warner (D-Va.) and Marco Rubio (R-Fla.) endorsed the legislation after the vote, despite having their own legislation to address national security concerns.

- Senate Commerce Committee Chair Maria Cantwell (D-Wash.) also has her own TikTok bill, the GUARD Act, and is not considering co-sponsoring the House bill.

- "We need curbs on social media, but we need those curbs to apply across the board," Sen. Elizabeth Warren (D-Mass.) told Axios Pro's tech team.

- Sen. Lindsey Graham (R-S.C.) said he's conflicted: "I would like to protect the data, keep the website up if we could," he said, but the U.S. has to "make sure our data is not used by China and they can't influence elections."

4. Training data

- The EU's parliament formally approved the bloc's comprehensive AI Act by a margin of 523 to 46. (CNBC, Axios)

- Sam Altman's AI-related empire stretches well beyond OpenAI. (Business Insider)

- Chamath Palihapitiya fired two Social Capital partners — Jay Zaveri and Ravi Tanuku — over an AI investment, in a dispute likely to head to litigation. (Axios)

- The U.K.'s Advanced Research and Invention Agency will hand out more than $50 million to researchers in a push to cut AI computing costs by 99.9%. (uk.gov)

5. + This

How to create an AI-tainted communications mess without even using AI.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and to Carolyn DiPaolo and Caitlin Wolper for copy editing it.
