Axios AI+

October 08, 2025

Attention Buckeye State readers: Join Axios in Cincinnati on Tuesday, Oct. 14, at 8am ET for an event exploring ways to prepare the workforce for new tech, AI and manufacturing jobs. Today's AI+ is 1,144 words, a 4.5-minute read.

1 big thing: Welcome to AI's mega-blob era

The edges of companies that make AI and those that make AI infrastructure are blurring as the industry coalesces into a handful of corporate mega-blobs linked by investments, partnerships and shared supply chains.

Why it matters: The AI world is moving into a new era of corporate entanglement with OpenAI's latest megadeal, a "tens of billions of dollars" agreement with AMD that has OpenAI buying mountains of AMD's microprocessors and taking up to a 10% stake in the firm.

How it works: AI's leading companies still compete — sorta. They also work together at a large scale in increasingly esoteric ways.

You could call that an ecosystem. You could also call it, as AI critics have, a shell game.

- Either way, the AI business is beginning to function like one giant dollar-eating, energy-sucking entity that makes chips, trains models and sketches utopias to justify its runaway costs.

- All this is happening well before the takeoff of a "superintelligence" that always seems to lie just over the next ridge.

What they're saying: "We are in a phase of the build-out where the entire industry's got to come together and everybody's going to do super well," OpenAI CEO Sam Altman told the Wall Street Journal as the AMD deal was unveiled.

- "You'll see this on chips. You'll see this on data centers. You'll see this lower down the supply chain."

Indeed, everyone seems to be singing "Come Together" with Altman and OpenAI.

- Nvidia announced a massive deal last month in which the chipmaker plans to invest up to $100 billion in OpenAI in stages, with OpenAI using the money to build data centers chock full of Nvidia systems.

- Nvidia also recently cut a deal with Intel to invest $5 billion in the troubled U.S. chipmaker.

- OpenAI has pulled in additional billions from Oracle and SoftBank to fund its ambitious Stargate data center project in the U.S., with more billions from the UAE to fund a data center in Abu Dhabi.

- These partnerships all follow OpenAI's foundational relationship with Microsoft, forged in the company's early days and restructured last month.

Meanwhile, OpenAI competitor Anthropic has taken big investments from both Google and Amazon.

- Oh, but OpenAI itself also has a deal for services from Google Cloud.

- And Microsoft is powering some of its products with AI from Anthropic.

Between the lines: The U.S. government itself has become a stakeholder in the AI mega-blob.

- The Biden administration and Congress had already gotten into the business of funding domestic chipmaking via the CHIPS Act.

- Then the Trump administration decided that in return for CHIPS grants aimed at helping once-dominant U.S. chipmaker Intel recover its manufacturing capacity, the company should give the U.S. 10% ownership.

Flashback: Historically, as with the build-out of railroads in the late 19th century, eras of massive growth and speculation have led to corruption and scandal that then provoke regulation and prosecution.

- But today, the government has become one more player in the game, and the fast-dealing, anything-goes climate of Trump's second term makes a Progressive Era-style pushback look unlikely for now.

Yes, but: The AI boom's massive dollar amounts and hints of investment circularity, where company A supplies company B with the cash to buy A's products, create their own kind of risk.

- Just as AI technology has an "interpretability problem," where researchers can't always understand or explain what AI models are doing or why, the financial engineering behind the technology is also getting harder for most investors to map, track and grok.

- That troubles veterans of the dot-com bust and the 2008-09 financial crisis, both of which featured opaque, unconventional financing mechanisms that went haywire and caused a ton of collateral damage.

The bottom line: The more entangled AI firms get with one another, the more likely any setback to one will turn into a calamity for all.

- Right now, OpenAI — a company with enormous potential that's also raising enormous sums to place enormous bets — is propping up much of the AI industry, and the industry is propping up much of the U.S. economy.

- If it falters, or investors lose faith, everyone else will be on the hook, too.

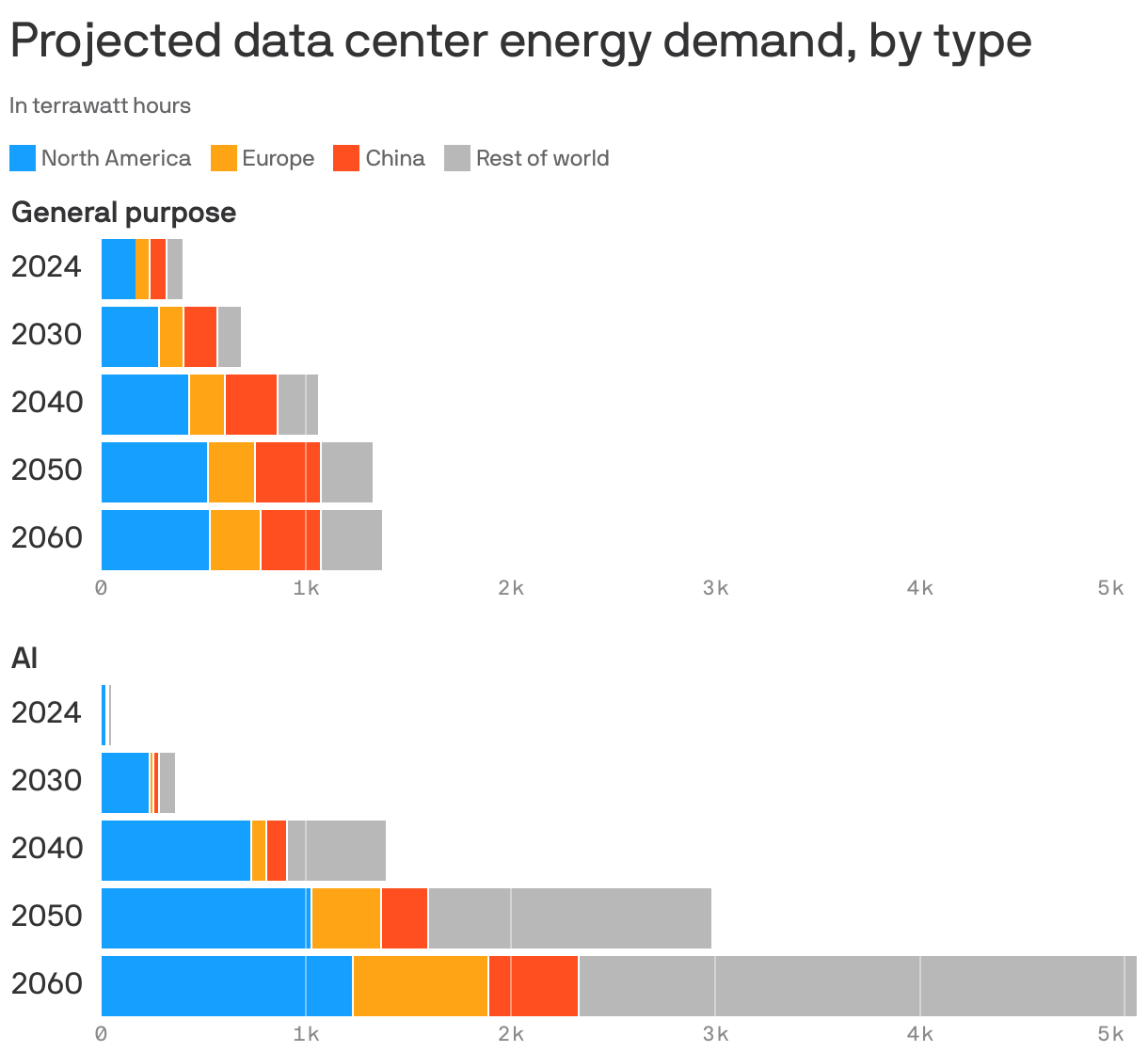

2. AI power demand to grow tenfold by 2030

Data centers built for AI may demand 10 times more power over the next five years, according to a new report from global risk firm DNV.

Why it matters: How much energy the world will need to power artificial intelligence is a hotly debated topic.

- It has giant implications for the billions of dollars going into AI startups and electricity infrastructure across the U.S. and the world.

Driving the news: The firm forecasts that North America — especially the U.S. — will lead global demand among data-center energy consumers over the coming decades.

- DNV estimates that by 2040, U.S. and Canadian data centers will account for 16% of electricity use, with 12% of that tied to AI applications.

Reality check: The report argues that even with this growth, AI's share of global electricity is unlikely to exceed 3% by 2040, staying below demand from sectors such as electric vehicle charging and building cooling.

The big picture: The report from Norway-based DNV covers a range of topics.

- Its topline projection finds that even sweeping policy reversals under President Trump would have only a "marginal impact" on the broader global energy transition.

- Much like an International Energy Agency report out yesterday, this study finds that within the U.S., Trump's moves do "slow that nation's transition markedly," though they do not reverse it.

- Emission reductions are delayed by about five years, it finds.

The bottom line: DNV analyzed over 50 publications with global data center energy estimates. It finds itself on the lower end of such projections, so its own forecast may turn out to be an understatement.

3. Training data

- Elon Musk's xAI is increasing the size of its current funding round to $20 billion with a new investment infusion from Nvidia that will help xAI lease more Nvidia chips for its data centers, sources tell Bloomberg.

- Treasury Secretary Scott Bessent will keynote an AI forum Oct. 21 hosted by the newly formed Prometheus Initiative, a group aimed at promoting AI's social benefits. (Axios)

- Anthropic is planning to open an office in India as it seeks additional partnerships in a key market. (TechCrunch)

- IBM will integrate Anthropic's Claude into its software products for business customers. (WSJ)

4. + This

I typically leave the Roblox playing to Harvey, but this new "Sesame Street" experience (which lets you create your own Muppet avatar) had me searching for my account credentials.

- Meanwhile, keeping on the "Sesame Street" theme, this sums up my week pretty well.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing.
