Axios AI+

January 10, 2025

Nintendo teased a new console, but it's not the next Switch. It's a Lego version of its Game Boy handheld. Today's AI+ is 1,108 words, a 4-minute read.

Situational awareness: The Supreme Court holds arguments at 10am ET today on ByteDance's appeal of the law banning TikTok from the U.S.

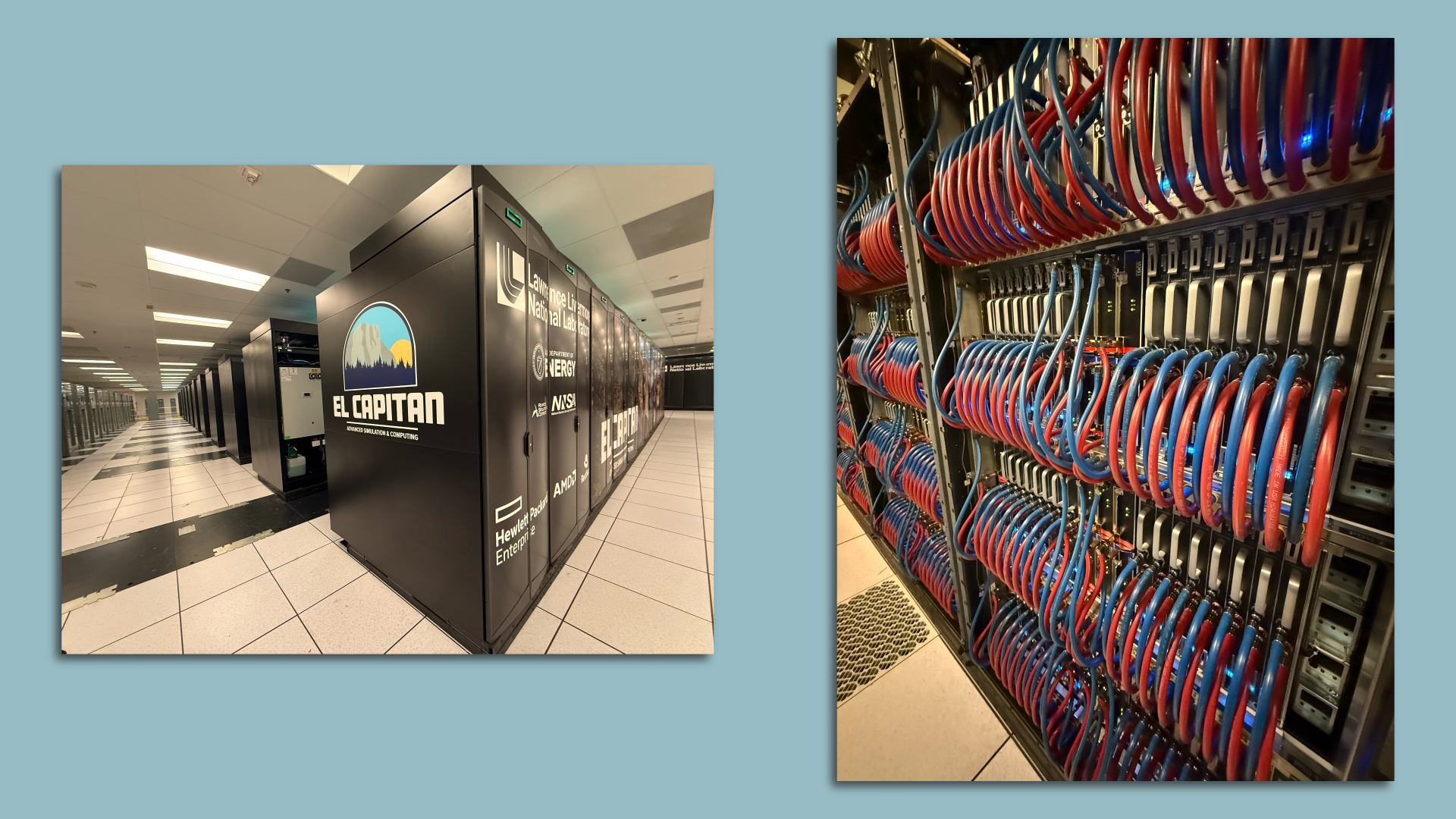

1 big thing: $600 million supercomputer

The world's most powerful supercomputer was officially dedicated in California yesterday, with the CEOs of Hewlett Packard Enterprise and AMD on hand to celebrate their handiwork.

Why it matters: El Capitan — as the $600 million supercomputer is known — will handle an array of classified tasks aimed at securing the U.S. stockpile of nuclear weapons and run a variety of other unspecified simulations.

Zoom in: El Capitan and a smaller sibling designed for nonclassified work sit in a large data center at Lawrence Livermore National Laboratory, roughly 30 miles northeast of Silicon Valley.

- That smaller sibling, Tuolumne, is similar in design to El Capitan, but just one-tenth the size. It's still powerful enough to rank 10th among the world's most powerful supercomputers.

- Reporters yesterday were able to get a peek at the giant computer, which has been running test code since last year.

Although the two computers weren't designed solely for AI work, officials expect them to make significant use of the emerging technology.

- "While we're still exploring the full role AI will play, there's no doubt that it is going to improve our ability to do research and development that we need," said Bradley Wallin, a deputy director of the Livermore lab.

By the numbers: El Capitan has a peak performance of 2.79 exaflops, or 2.79 quintillion floating-point calculations per second.

- That's equivalent to the processing power of about 1 million of today's high-end smartphones working simultaneously.

- Its 87 computer racks and accompanying infrastructure weigh 1.3 million pounds. That's about the same as four blue whales or 100 African elephants.

- El Capitan draws 30 megawatts of power from the local grid, though the lab did have to arrange for more power to be delivered to the data center that houses it.

Zoom out: For HPE, El Capitan is yet another notch in its belt. The company, which acquired supercomputer maker Cray in 2019, also built the supercomputers at Oak Ridge and Argonne national labs, the next two most powerful such machines.

- For AMD, it's another sign of just how far the chipmaker's fortunes have risen. Long Intel's distant rival, AMD has not only grabbed a significant share of the PC and server markets but also emerged as a key player in supercomputing.

- AMD also aspires to grab a larger share of the AI training market from Nvidia.

- "I'm smiling from ear to ear," CEO Lisa Su told reporters yesterday.

The big picture: Both Su and HPE CEO Antonio Neri said the knowledge gained building El Capitan will directly benefit their AI efforts.

- "There is complete leverage," Neri said, noting the marked similarity between machines like El Capitan and those used to train AI systems.

- "It's basically the same building blocks, as Antonio said, configured in a different way," Su said.

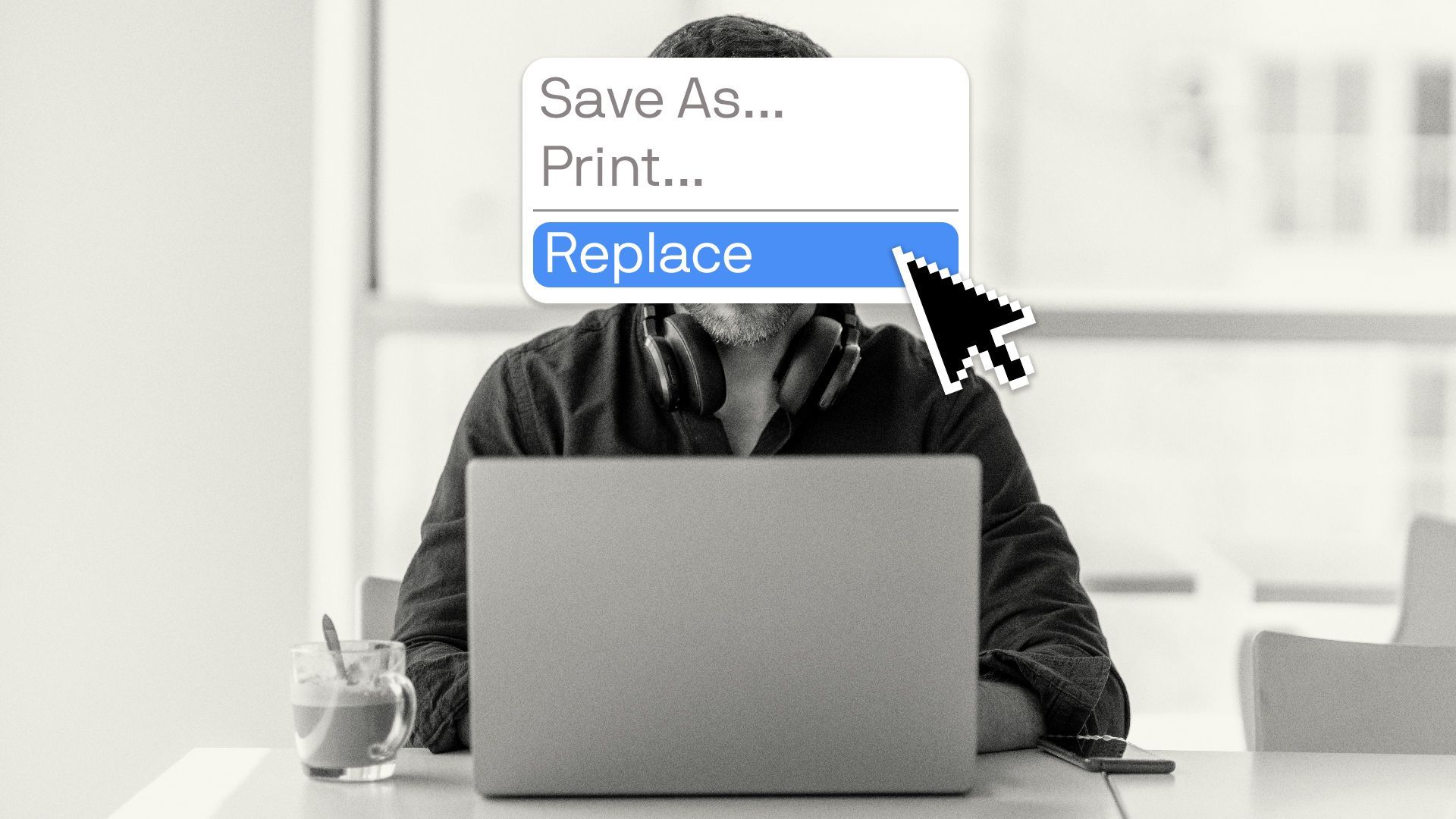

2. AI agents are coming to a workplace near you

AI technology is advancing rapidly, and if you're not already using it at work, brace yourself.

Why it matters: That was Sam Altman's message, buried in a recent blog post.

- "We believe that, in 2025, we may see the first AI agents 'join the workforce' and materially change the output of companies," writes the OpenAI CEO.

State of play: The possibility of using AI agents to do work instead of expensive humans has some companies super excited. It's making many workers super anxious.

For example, a scientist could use a chatbot to help conduct research and possibly even design an experiment.

- But an AI agent, when prompted, can act as a research assistant: it can not only do the research and design an experiment but also conduct it and compile the results. (In a recent paper, scientists at AMD and Johns Hopkins University described how they successfully had an agent do just that.)

Zoom out: Altman, of course, has a vested interest in a future where AI plays a bigger role at work. And it's not yet clear what will happen to U.S. workplaces in 2025.

- But the idea of AI agents in our workplaces is hardly just an AI entrepreneur's fantasy, researchers and experts say.

Zoom in: Some companies are already experimenting with AI agents in limited pilot programs, using them for drug discovery, project management and the design of marketing campaigns.

The big picture: The key question: What happens to people's jobs? Most experts agree that agents will change the nature of work over the coming years, particularly for those who work at a desk in front of a computer.

That could mean an agent starts doing some of your work. "In an ideal world, this is a multiplier of effort where I delegate the worst parts of my job to AI," says Ethan Mollick, a management professor at Wharton who studies AI.

- Altman has said something similar. He doesn't think about "what percent of jobs AI will do, but what percent of tasks will it do," he said on Lex Fridman's podcast last year. AI will let people do their jobs "at a higher level of abstraction."

- AI has made workers more efficient, but there's still plenty of work left for humans to do. "The one thing I'm not worried about is that we're running out of work," GitHub CEO Thomas Dohmke told Axios.

Yes, but: While humans will still absolutely be needed to supervise the AI's work, agents will start replacing humans over the next two years, says Anton Korinek, an economics professor at the Darden School of Business at the University of Virginia and a visiting scholar at Brookings.

- "Any job that can be done solely in front of a computer will be amenable to AI agents within the next 24 months," Korinek said in an email, assuring this reporter that he was not himself an AI. (He also agreed that he could be replaced by one.)

Between the lines: Humans are moving more slowly than the technology. Companies have to figure out how to adjust operations to accommodate AI workers, says Lareina Yee, a senior partner and AI expert at McKinsey.

- And that can be a costly endeavor.

- The biggest challenge to moving AI agents into the workplace isn't the tech; it's the people, she says. "This is not a technology strategy moment, it's a business strategy moment."

3. Training data

- Meta allegedly allowed its team to train its large language models on copyrighted works. (TechCrunch)

- A lawyer for Elon Musk called on California and Delaware officials to allow outside investors to place bids for OpenAI's assets, as the company prepares to transfer its technology from nonprofit board control to a for-profit. OpenAI has no plans for such an auction. (The Financial Times)

4. + This

Today I learned that Livermore is home not only to two national labs but also to the world's longest continuously burning light bulb. And yes, there's a "bulb-cam."

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing it.
