OpenAI touts ‘scientific approach’ to measure catastrophic risk


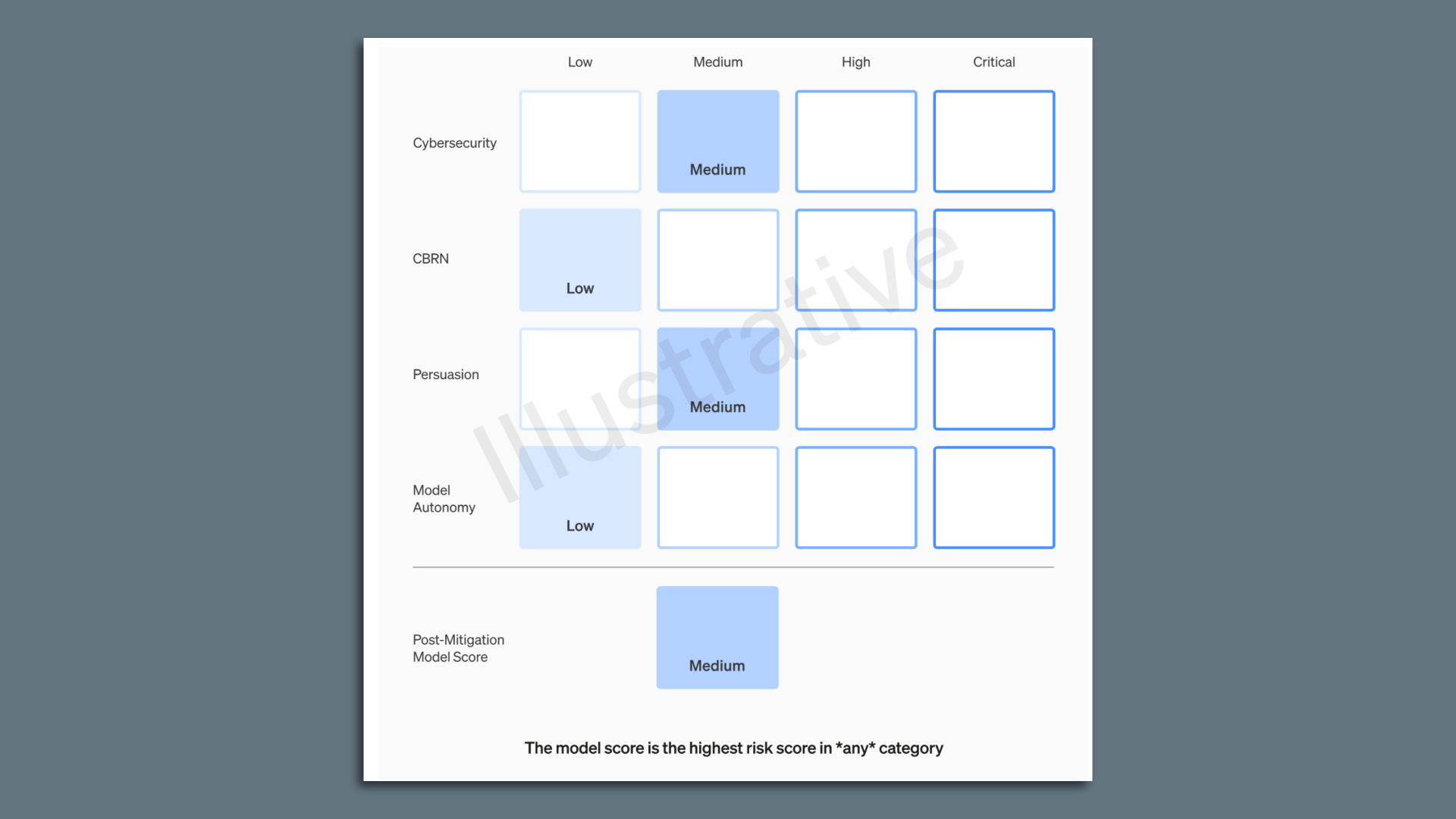

An example of an AI-preparedness score card. Image: OpenAI

OpenAI on Monday launched what it hopes will form a more scientific approach to assessing catastrophic risks posed by the most advanced AI models.

Why it matters: While there has been a great deal of hand-wringing and fear about the potential for life-threatening risks, there has been far less discussion of just how to prevent such harms from emerging.

"We really need to have science," OpenAI head of preparedness Aleksander Madry told Axios.

How it works: OpenAI's 27-page preparedness framework proposes a matrix approach that documents the level of risk posed by frontier models across several categories, including the likelihood that bad actors could use a model to create malware, carry out social engineering attacks, or spread harmful information about nuclear or biological weapons.

- OpenAI will score each risk as low, medium, high or critical both before and after implementing mitigations across each category of risk.

- Only models with a post-mitigation score of "medium" or below can be deployed, and the company will stop development on any model whose risk it can't reduce below "critical."

- While OpenAI's CEO will be in charge of deciding what to do on a day-to-day basis, the board will be informed of the risk findings and can overrule the CEO's decisions.
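To make the gating rules above concrete, here is a minimal Python sketch. It is not OpenAI's code: the `Risk` enum, the category names, the `decide` function and its return strings are hypothetical, and treating a model's overall score as its worst post-mitigation category score is a simplifying assumption.

```python
from enum import IntEnum


class Risk(IntEnum):
    """Risk levels, ordered so that a larger value means a more severe risk."""
    LOW = 0
    MEDIUM = 1
    HIGH = 2
    CRITICAL = 3


# Hypothetical scorecard: category -> (pre-mitigation, post-mitigation) score.
Scorecard = dict[str, tuple[Risk, Risk]]


def overall_post_mitigation(card: Scorecard) -> Risk:
    # Assumption: a model is rated by its worst post-mitigation category.
    return max(post for _pre, post in card.values())


def decide(card: Scorecard) -> str:
    level = overall_post_mitigation(card)
    if level <= Risk.MEDIUM:
        return "deploy"  # "medium" or below may be deployed
    if level < Risk.CRITICAL:
        return "develop, do not deploy"  # below critical, but too risky to ship
    return "halt development"  # risk could not be reduced below critical


# Illustrative scores only, not real OpenAI findings.
card: Scorecard = {
    "cybersecurity": (Risk.HIGH, Risk.MEDIUM),
    "persuasion": (Risk.MEDIUM, Risk.LOW),
}
print(decide(card))  # deploy
```

The pre-mitigation scores are recorded but only the post-mitigation ones drive the decision in this sketch. The board's ability to overrule the CEO sits outside this logic.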

Between the lines: OpenAI divides the people working on safety issues into three teams. A safety team addresses the risks posed by the current crop of tools, while a superalignment team looks at issues posed by future systems whose capabilities outstrip those of humans.

- Madry's team, a relatively new one, covers the period between those two efforts, evaluating the most powerful models as they are being developed and implemented.

- The team currently has only four people, including Madry, though he is actively hiring and expects it to grow to 15 to 20 people, on par with the safety and superalignment teams.

- In addition to better assessing and documenting risk, OpenAI is also trying to improve its governance systems in the wake of the events that led to the firing and rehiring of CEO Sam Altman last month.

The big picture: Madry hopes that other AI companies will adopt a similar approach to assess the risks of their own models.

- "The hope is that, yeah, everyone will do some form of this," Madry said, adding that it could also be a model for regulation.