A convincing AI-made video that tells on itself

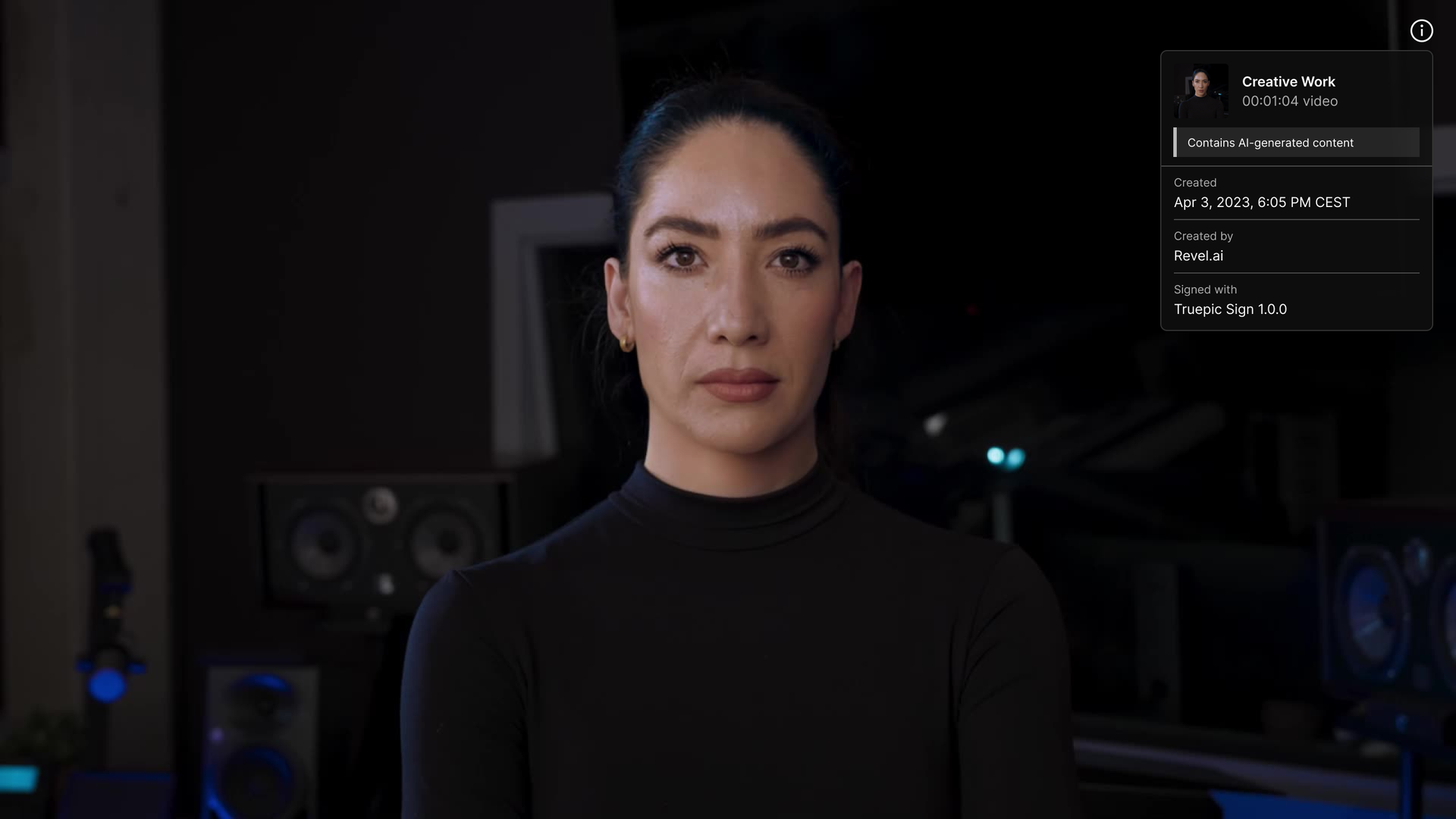

Image: Truepic

Several leaders in AI have teamed up to create what they are calling the first transparent deepfake — a video that looks convincingly real, but is both synthetically created and makes use of an emerging standard to label itself as such.

Why it matters: Many in the field say concerns over synthetic media are no longer hypothetical and that now is the time to start clearly labeling how content was created or altered.

Driving the news: A new synthetic video from Revel.ai features a convincing fake of AI author Nina Schick, made with her permission.

- The video is labeled using commercially available technology from Truepic to identify itself as being synthetically generated and having come from Revel.ai.

- The labeling is based on the latest version of a standard, from the Content Authenticity Initiative led by Adobe and others, that is designed to show how images and video were produced. Adobe is also using similar tools to identify content created using its new Firefly generative AI tools.

What they're saying: Truepic CEO Jeffrey McGregor told Axios that momentum is building around the need to label synthetic content, with fear of regulation among the motivations.

- Last week's letter calling for a pause in some AI development also underscored the urgent need for a system of content verification as existing AI tools are widely adopted.

- "I think the conversation has changed even in the past two weeks," McGregor said. "People are starting to look at this as a best practice for implementing responsible AI."

Yes, but: Labeling content that is synthetically created doesn't help if most legitimately captured video is not labeled.

- More than anything, you want to know that real content is real, rather than just have some portion of fakes admit that they are fakes.

McGregor said labeling synthetic content can help educate consumers and incentivize other good actors, but acknowledged that the bulk of all content needs to be transparently labeled in order to minimize the impact of deepfakes.

- Truepic already has a business helping create secure pipelines that media organizations and others can use to prove their images and video were legitimately captured, along with any editing that has taken place.

- McGregor says the company is working with more than 170 organizations, including a pilot with Microsoft to authenticate images coming out of Ukraine.

The bottom line: The era of deepfakes is already here and people should be suspicious of any video that can't prove where it came from. "You should not trust digital video at this point," McGregor said. "You really shouldn't."