Axios AI+

November 10, 2025

FYI, we will be off tomorrow for Veterans Day, but back in your inbox on Wednesday. Today's AI+ is 1,152 words, a 4.5-minute read.

1 big thing: The sleeping giant awakes

Axios asked the top AI executives for their private take on the American rival they fear most. Without pause, they all coughed up the same name: Google.

Why it matters: The search giant has been somewhat sleepy so far in the race for AI dominance.

- But Google's combination of scientific brain power, deep access to data, and lucrative income streams has rivals worried.

The big picture: The company with the most to lose (and fear) is OpenAI, the early leader in the race for consumer AI adoption and dominance.

- The two companies are increasingly in direct competition to conquer the next generation of search — one where AI curates smarter, faster, better answers without the hassle of digging and clicking.

- The prize is the generational business of being America's — and much of the world's — front door to just about everything.

Zoom out: When OpenAI upended the market in late 2022 with the launch of ChatGPT, much of the Silicon Valley buzz was that Google had taken its eye off the ball.

Yes, but: Not anymore. Google has been quietly — and successfully — pursuing all the buzzy AI trends: touting AI agents, offering enterprise subscriptions and putting chatbots everywhere.

- Gemini went viral in August after the company released its Nano Banana image generation model and won praise for the realistic physics underlying its latest Veo video generation model.

- Now there are reports Apple may shift gears on its own AI ambitions and turn to Google to power the long-awaited next generation of Siri — a concession that, for all of Apple's technical might, Google just does this better (right now).

Between the lines: Perhaps as important as those recent gains, Google has a large and profitable business to support its aggressive training and development pace, while cash-burning rivals like OpenAI must constantly find fresh sources of capital.

- Google also has a leg up on OpenAI when it comes to distribution, thanks to its ubiquitous search engine, Chrome browser and Android operating system.

- Google can leverage those diverse income streams in multiple ways, while OpenAI works to build a business that generates enough revenue to justify funding $1.4 trillion in infrastructure over the next eight years.

The intrigue: Venture capitalist Josh Wolfe has argued that Google could use its search profits to offer Gemini for free, or near-free, thus causing many ChatGPT users to switch.

- How that would work as a business remains murky. Google has suggested it has plans to bring advertising to AI results, though no one yet knows what that really looks like.

The bottom line: The long-term race is still about which company reaches artificial general intelligence first. More immediately, what will matter is who turns today's AI into a sustainable business model.

2. Dimming job market's bright spot: AI skills

Hiring is slowing, but demand for AI skills is spiking.

Why it matters: Business leaders are beginning to see an emerging gap between workers who embrace AI and those who use it only for basic tasks or not at all.

By the numbers: Mentions of AI skills in job postings rose 16% in three months, even as overall tech hiring is down 27% year-over-year, per ManpowerGroup's Work Intelligence Lab.

The other side: Greenhouse data shows that 32% of job seekers have claimed AI skills they don't actually have.

- "Application volumes are up 239% on average since ChatGPT launched, flooding hiring teams with low-intent spam generated in seconds," Daniel Chait, CEO and co-founder of Greenhouse, told Axios.

The big picture: The fastest-growing AI jobs focus on wrangling data: data labeling, data annotation, data analysis, data science.

- Businesses say they're looking for employees who can interpret AI output, spot bad data, and integrate machine insights into business decisions.

- Demand for data-mining and management freelancers grew 26%, and demand for AI and machine learning (ML) skills increased from September to October, according to Upwork, a work marketplace.

- More than half (55%) of businesses say they expect to hire data analysts and data scientists in the next three months, per Upwork.

Between the lines: Learning platform Simplilearn says math, statistics and programming languages — specifically Python — are also key.

Yes, but: Human skills still matter, says Cormac Whelan, CEO of software company Nitro.

- In particular: "curiosity, ability to learn fast and to adapt fast," Whelan, previously CEO of an AI startup sold to Apple in 2020, says.

- Upwork COO Anthony Kappus told Axios that he's seen "a rapid rise in demand for talent who can pair hard skills like design, video editing, and marketing with uniquely human skills like creativity, strategic thinking, and judgment to deliver work built with AI tools."

- "The AI landscape is evolving so fast," Whelan says, "that how someone learns matters more than whether they have a Ph.D. in generative AI."

The bottom line: Still, with so much weakness in the job market, a Ph.D. in generative AI certainly can't hurt.

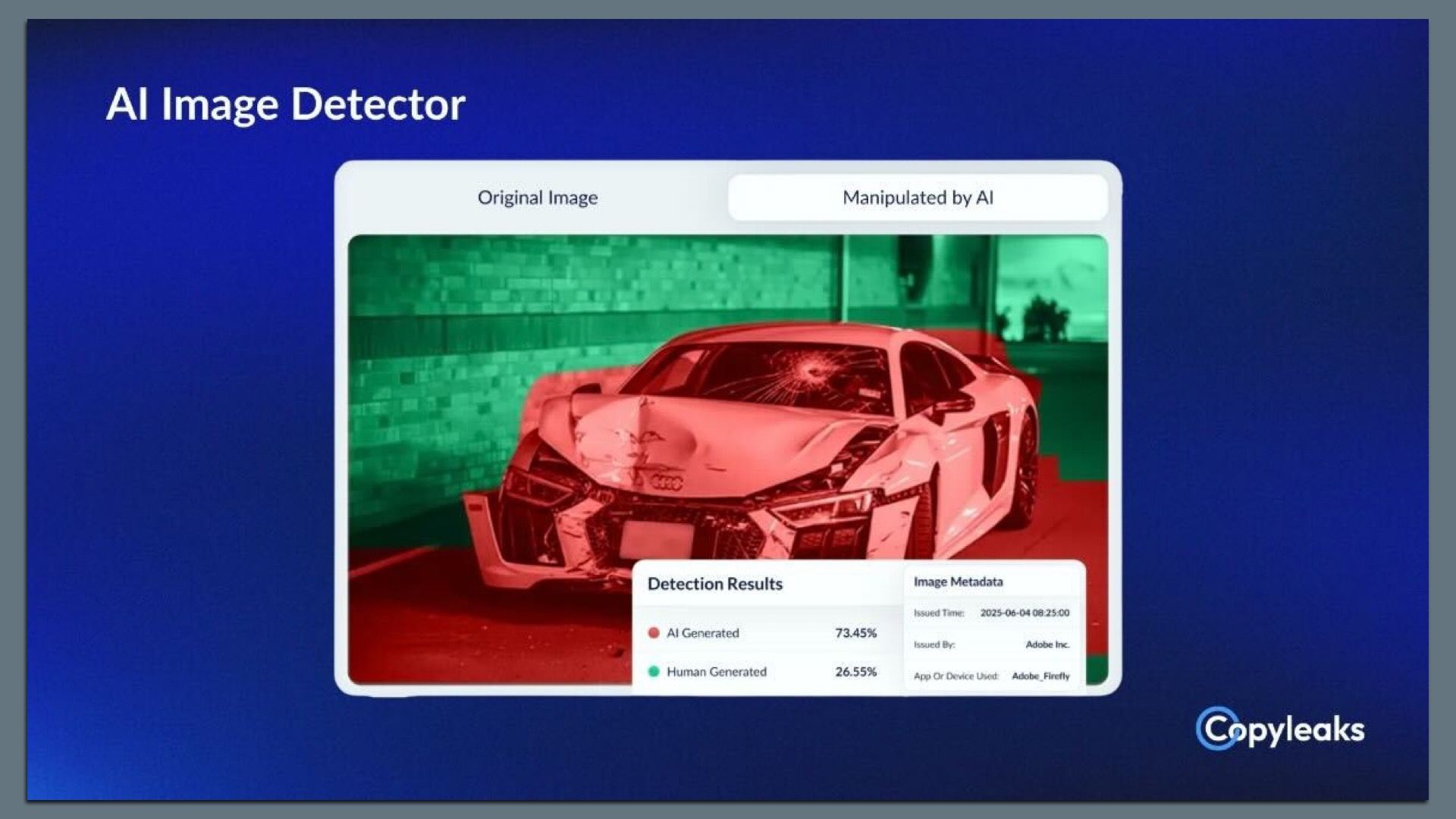

3. Exclusive: Copyleaks scans for AI in images

Copyleaks, known for its software to detect plagiarism and AI-generated text, is expanding into identifying AI use in images, the company shared first with Axios.

Why it matters: Beyond misinformation, AI image tools are fueling fraud — from fake receipts to doctored insurance claims.

Zoom in: The new Copyleaks detector assigns each image an AI-use probability score and shows where AI was likely applied on an image.

- There's a public demo of the tool.

Zoom out: CEO Alon Yamin told Axios the company sees a broad range of markets for the new tool, including education, financial services and publishing.

- "It used to be 'seeing is believing,'" Yamin said. "It's not anymore the case. So how are you making sure that you're able to really authenticate the content and understand its source?"

- As it does with its text-based detection tools, Copyleaks will charge institutions using its image detector based on volume.

Yes, but: Detecting AI-generated content is tricky; detecting whether AI is used for fraud is even trickier.

- Some phone cameras today use AI to combine multiple exposures for a "best take" or they use AI to fill in details on a zoomed-in shot.

- Limited Axios tests found mixed results — the tool flagged some AI-created images of official documents but missed others. It also returned false positives on images that weren't AI-generated.

What's next: The company says it plans to expand into identifying the use of AI in audio and video in 2026.

4. Training data

- The big AI firms are homing in on India as a key market for their chatbots. (Bloomberg)

- Google sent a letter to Sen. Marsha Blackburn (R-Tenn.) saying that the AI hallucination problem gets worse when consumers use models meant only for developers and researchers. (Axios)

5. + This

There is a guy named Scott pushing an AI technology called Devin and a guy named Devin selling an AI technology called Scott. What a world.

Thanks to Megan Morrone for editing this newsletter and Matt Piper for copy editing.
