Axios AI+

May 29, 2025

Exercise is very important. Each Wednesday I have it on my calendar to go ice skating. (And yesterday, for the second time this year, I actually made it.) Today's AI+ is 1,194 words, a 4.5-minute read.

1 big thing: Keeping AI secret

More employees are using generative AI at work, and many are keeping it a secret.

Why it matters: Absent clear policies, workers are taking an "ask forgiveness, not permission" approach to chatbots, risking workplace friction and costly mistakes.

The big picture: Secret genAI use proliferates when companies lack clear guidelines, when favorite tools are banned or when employees want a competitive edge over coworkers.

- Fear plays a big part, too — fear of being judged and fear that using the tool will make it look like they can be replaced by it.

By the numbers: 42% of office workers use genAI tools like ChatGPT at work and 1 in 3 of those workers say they keep their use secret, according to research out this month from security software company Ivanti.

- A McKinsey report from January showed that employees are using genAI for significantly more of their work than their leaders think they are.

- 20% of employees report secretly using AI during job interviews, according to a Blind survey of 3,617 U.S. professionals.

Catch up quick: When ChatGPT first wowed workers over two years ago, companies were unprepared and worried about confidential business information leaking into the tool, so they preached genAI abstinence.

- Now the big AI firms offer enterprise products that can protect IP, and leaders are paying for those bespoke tools and pushing hard for their employees to use them.

- The blanket bans are gone, but the stigma remains.

Zoom in: New research backs up workers' fear of the optics around using AI for work.

- A recent study from Duke University found that those who use genAI "face negative judgments about their competence and motivation from others."

Yes, but: The Duke study also found that workers who use AI more frequently are less likely to perceive job candidates who use AI as lazy.

Zoom out: The stigma around genAI can lead to a raft of problems, including the use of unauthorized tools, known as "shadow AI" or BYOAI (bring your own AI).

- Research from cyber firm Prompt Security found that 65% of employees using ChatGPT rely on its free tier, where data can be used to train models.

- Shadow AI can also hinder collaboration. Wharton professor and AI expert Ethan Mollick calls workers using genAI for individual productivity "secret cyborgs" who keep all their tricks to themselves.

- "The real risk isn't that people are using AI — it's pretending they're not," Amit Bendov, co-founder and CEO of Gong, an AI platform that analyzes customer interactions, told Axios in an email.

Between the lines: Employees will use AI regardless of whether there's a policy, says Coursera's chief learning officer, Trena Minudri.

- Leaders should focus on training, she argues. (Coursera sells training courses to businesses.)

- Workers also need a "space to experiment safely," Minudri told Axios in an email.

The tech is changing so fast that leaders need to acknowledge that workplace guidelines are fluid.

- Vague platitudes like "always keep a human in the loop" aren't useful if workers don't understand what the loop is or where they fit into it.

- GenAI continues to struggle with accuracy, and companies risk embarrassing gaffes, or worse, when unchecked AI-generated content goes public.

- Clearly communicating these issues can go a long way in helping employees feel more comfortable opening up about their AI use, Atlassian CTO Rajeev Rajan told Axios.

- "Our research tells us that leadership plays a big role in setting the tone for creating a culture that fosters AI experimentation," Rajan said in an email. "Be honest about the gaps that still exist."

The bottom line: Encouraging workers to use AI collaboratively could go a long way toward removing the secrecy.

- Generative AI works best when it's combined with human intelligence, says Elliot Katz, co-founder of mixus.ai, a collaborative AI business platform.

- "One person's dirty little secret," Katz told Axios in an email, "can be a tool that teams are excited to use together daily."

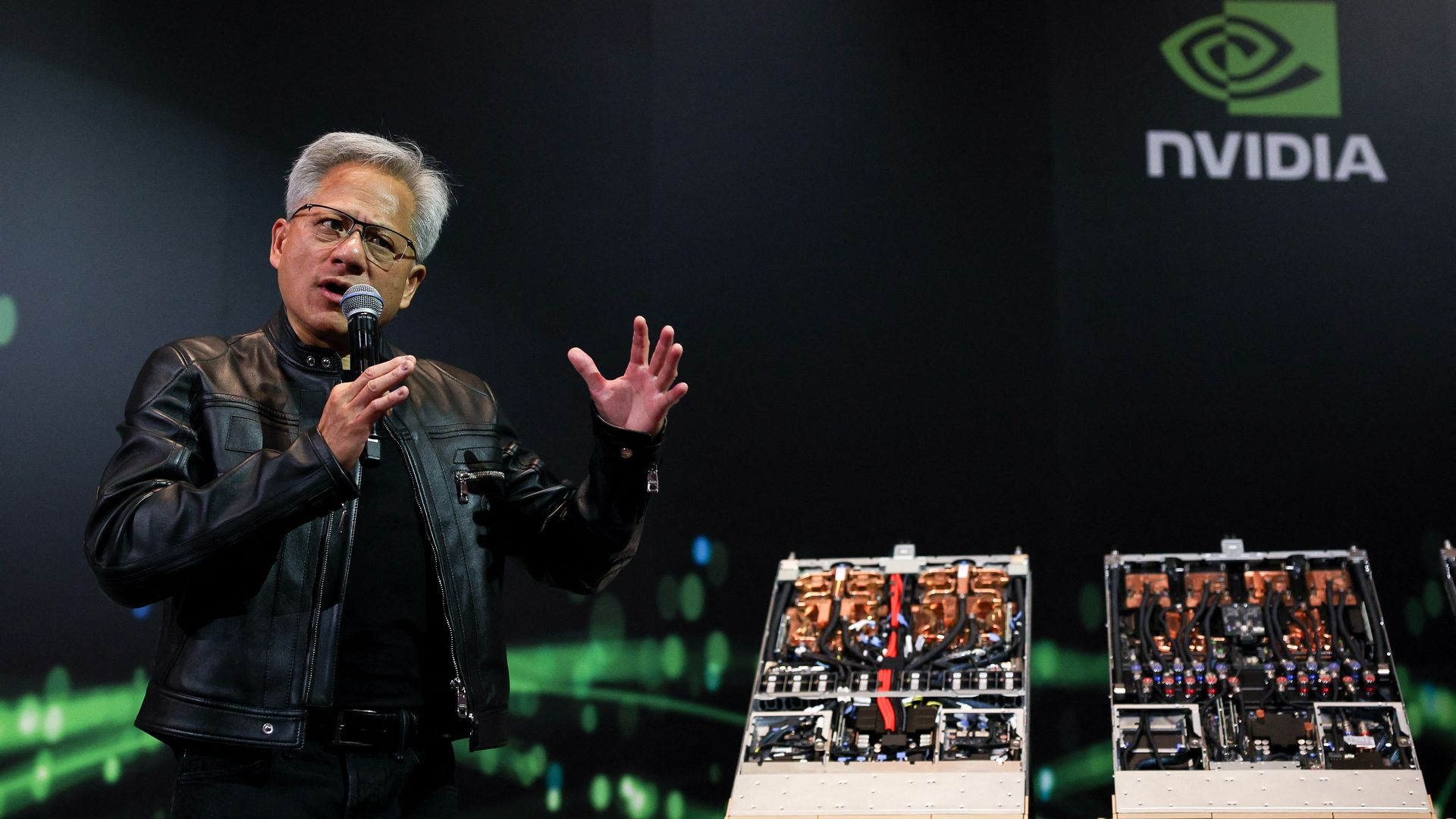

2. Chip restrictions dim Nvidia's strong quarter

Nvidia topped earnings expectations in its most recent quarter as AI chip demand continues to fuel growth for the company despite concerns about mounting U.S. restrictions on chip exports to China.

Why it matters: Nvidia has become a bellwether stock for the AI economy as tech companies invest heavily in product development and data center capability, powering Nvidia's rise.

By the numbers: The company yesterday posted revenue of $44.1 billion for its first quarter, up 69% from a year ago and beating S&P Global Market Intelligence expectations of $43.2 billion.

Threat level: Nvidia warned that it expects to lose about $8 billion in second-quarter revenue from H20 chip sales blocked by U.S. export control restrictions.

- The company incurred a $4.5 billion charge in the first quarter from excess H20 inventory due to the restrictions.

Catch up quick: Nvidia CEO Jensen Huang argued last week that U.S. chip export controls have been "a failure," with their biggest impact being the erosion of Nvidia's competitive position.

- Nvidia's once-dominant position in China has been significantly dented, Huang said — its market share in the country falling from 95% four years ago to only 50% today.

3. Good fences make good robots

AI-driven creatures — whether they're autonomous vehicles, delivery bots or humanoid robots — aren't ready to be unleashed freely into the wild.

- That's why robotaxis today only operate in certain neighborhoods and humanoids are being tested inside factory cages where they can't hurt anyone.

Why it matters: Unlike chatbots, which can learn to talk simply by scraping information from the internet, AI robots are expected to move fluidly through unstructured environments, communicate with people, manipulate things and make reasoned decisions.

- That's a far bigger challenge, one that requires much more training data and real-world experience.

The big picture: Tesla CEO Elon Musk is among the most bullish about how generative AI will reshape autonomy and robotics.

- He envisions 1 million driverless Teslas by the end of 2026 and 1 million Optimus humanoids doing useful work by the end of the decade.

- Tesla is working on a generalized solution for self-driving cars: Instead of coding step-by-step instructions for every street in every city based on high-definition maps, it's using AI to teach cars how to drive virtually anywhere.

Yes, but: It all depends on whether there's sufficient training data available.

4. Training data

- A federal judge late yesterday ruled that President Trump lacks legal authority to impose across-the-board tariffs. (Axios)

- Meanwhile, Secretary of State Marco Rubio announced plans to revoke visas for Chinese students. (Axios)

- Opera announced plans for a browser that can also act as an AI agent, performing tasks in the background. (The Verge)

- Humain, Saudi Arabia's state-backed AI company, is seeking U.S. investors. (Financial Times)

- Elon Musk tried to block OpenAI's Middle East data center deal unless xAI was also included, according to the Wall Street Journal.

- A new startup is recruiting doctors to build and refine AI models to improve their accuracy for health care use. (Axios Pro)

5. + This

Lego and Nike are today revealing the first fruits of a partnership announced last year, including brick-themed shoes and shoe-themed bricks. The initial shoe model and Lego set arrive later this summer, and a broader lineup of shoes, apparel and Lego sets will come in the fall.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing.
