Axios AI+

June 21, 2024

Greetings from sunny Orange County, where I spent the first part of my post-college career, reporting for the Orange County Register and the Orange County Business Journal.

Today's AI+ is 1,181 words, a 4.5-minute read.

1 big thing: Researchers built a BS detector for AI

A new algorithm, along with a dose of humility, might help generative AI mitigate one of its persistent problems: confident but inaccurate answers.

Why it matters: AI errors are especially risky if people rely on chatbots and other tools for medical advice, legal precedents or other high-stakes information.

- A new Wired investigation found AI-powered search engine Perplexity churns out inaccurate answers.

The big picture: Today's AI models make several kinds of mistakes, some of which may be harder to solve than others, says Sebastian Farquhar, a senior research fellow in the computer science department at the University of Oxford.

- But all these errors are often lumped together as "hallucinations" — a term Farquhar and others argue has become useless because it encompasses so many different categories.

- Farquhar is also a senior research scientist at Google's DeepMind.

Driving the news: Farquhar and his Oxford colleagues this week reported developing a new method for detecting "arbitrary and incorrect answers," called confabulations, the team writes in Nature. It addresses "the fact that one idea can be expressed in many ways by computing uncertainty at the level of meaning rather than specific sequences of words."

- The method involves asking a chatbot the same question several times, for example, "Where is the Eiffel Tower?"

- A separate large language model (LLM) grouped the chatbot's responses — "It's Paris," "Paris," "France's capital Paris," "Rome," "It's Rome," "Berlin" — based on their meaning.

- Then they calculated the "semantic entropy" across those groups, a measure of how much the responses vary in meaning. If the responses land in different groups (Paris, Rome and Berlin), the model is likely to be confabulating.
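How it works: The steps above can be sketched in a few lines of Python. This is a simplified illustration, not the Oxford team's implementation. The `MEANING_OF` lookup table is a hypothetical stand-in for the separate LLM that groups answers by meaning, and the entropy here is computed from simple answer counts, which is an assumption made to keep the sketch short.

```python
import math
from collections import Counter

# Hypothetical stand-in for the meaning-grouping step. The article says a
# separate LLM does this; a lookup table is used here only so the sketch runs.
MEANING_OF = {
    "It's Paris": "paris",
    "Paris": "paris",
    "France's capital Paris": "paris",
    "Rome": "rome",
    "It's Rome": "rome",
    "Berlin": "berlin",
}


def semantic_entropy(responses, meaning_of=MEANING_OF):
    """Shannon entropy (in nats) over the share of answers in each meaning group.

    Assumption: each sampled answer counts equally.
    """
    groups = Counter(meaning_of[r] for r in responses)
    total = sum(groups.values())
    # p * log(1/p), summed over groups; zero when every answer shares one meaning.
    return sum((n / total) * math.log(total / n) for n in groups.values())


# Answers that disagree in meaning vs. answers that only differ in wording.
mixed = ["It's Paris", "Paris", "France's capital Paris", "Rome", "It's Rome", "Berlin"]
consistent = ["It's Paris", "Paris", "France's capital Paris"]

print("mixed:", semantic_entropy(mixed))
print("consistent:", semantic_entropy(consistent))
```

The three differently worded "Paris" answers fall into one group and score zero, while the mixed set scores higher, which is the signal the researchers treat as a likely confabulation.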

What they found: The approach can determine whether an answer is a confabulation about 79% of the time, compared with 69% for a detection measure that assesses similarity based on the words in a response. Two other methods performed about as well as that word-based measure.

Yes, but: It will only detect inconsistent errors — not those produced if a model is trained on biased or erroneous data.

- It also requires about five to 10 times as much computing power as a typical chatbot interaction.

- "For some applications, that would be a problem, and for some applications, that's totally worth it," Farquhar says.

What they're saying: "Developing approaches to detect confabulations is a big step in the right direction, but we still need to be cautious before accepting outputs as correct," Jenn Wortman Vaughan, a senior principal researcher at Microsoft Research, told Axios in an email.

- "We're never going to be able to develop LLMs that are perfectly accurate, so we need to find ways to convey to users what mistakes might look like and help them set their expectations appropriately."

Vaughan and other researchers are looking at ways to have AI systems communicate the uncertainty in their answers — to get them, in effect, to be more humble.

- But "figuring out the right notion of uncertainty to convey — and how to compute it — is a huge" open question, she said, adding it will likely depend on the application.

- In a new paper, Vaughan and her colleagues looked at how people perceived a model's expression of uncertainty when a fictional "LLM-infused" search engine answered a medical question. (For example, "Can an adult who has not had chickenpox get shingles?")

- Participants were shown the AI response and asked to report how confident they were in it. They then answered the question themselves and said how confident they were in their own answer.

They found that people who were shown AI answers with first-person expressions of uncertainty, such as "I'm not sure, but...", were less confident in the AI's responses and agreed with its answers less often than participants who saw no expression of uncertainty.

- That suggests "natural language expressions of uncertainty may be an effective approach for reducing overreliance on LLMs, but that the precise language used matters," they write.

- The researchers note their study has several limitations, including that people had single interactions with the system, and they didn't look at more complex tasks — like writing an article — or explore cultural or language differences.

- Conveying uncertainty needs to center on the needs of the users, Vaughan says. "How do we empower them to make the best choices about how much to rely on the system and what information to trust? We can't answer these types of questions with technical solutions alone."

Between the lines: While giving an algorithm extra training data can make it "more accurate on things that you know you care about," people may want to ask much more of AI, stretching it beyond the data it is trained on and opening up the possibility for errors and fabrications, Vaughan says.

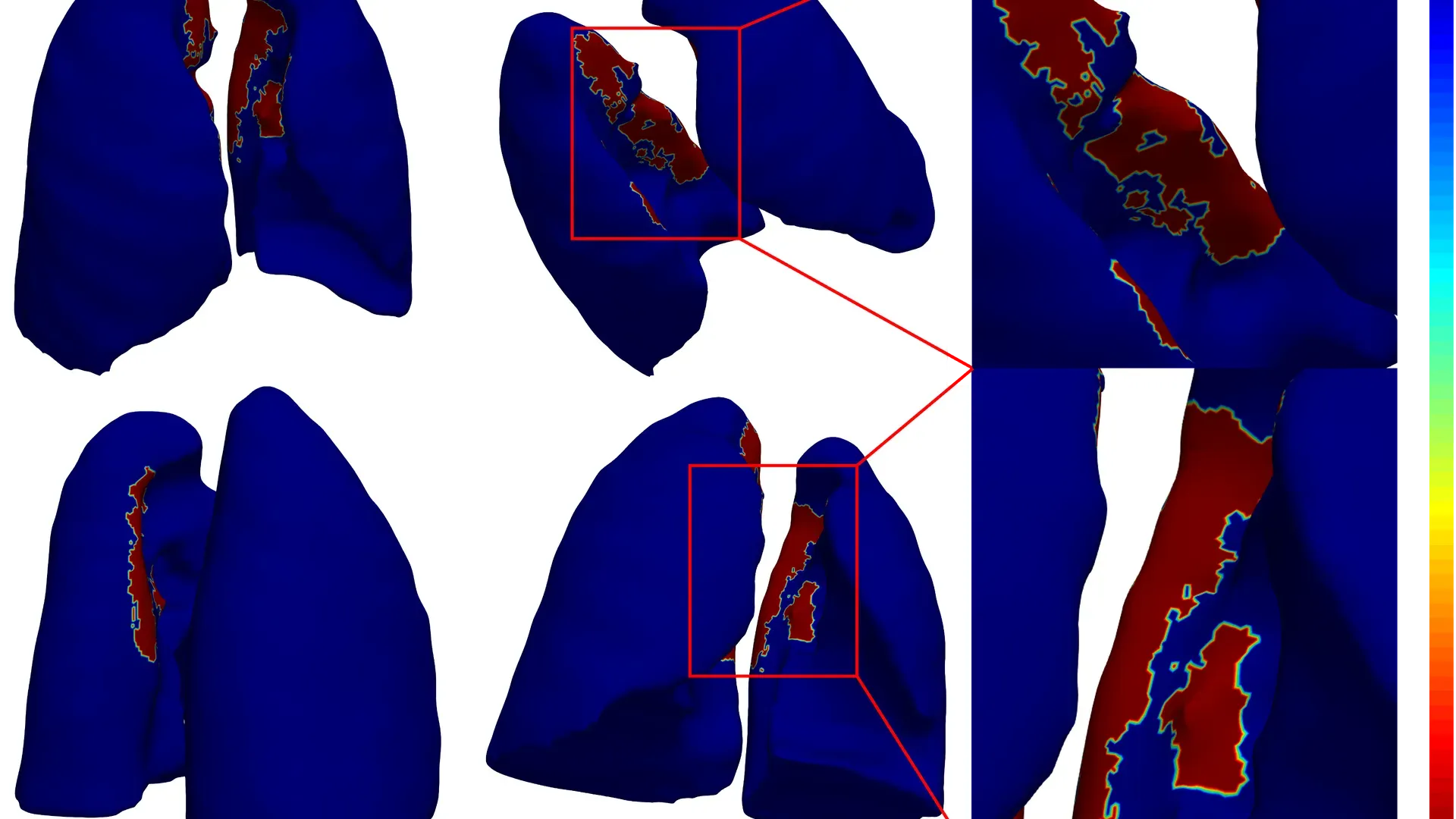

2. AI helps detect long-term COVID-19 effects

An AI analysis found deformations on the lungs of patients with severe COVID-19, according to an Emory University-led study published June 10.

Why it matters: Scientists are investigating long COVID's effect on the body. The study's lead researcher said in a press release that the AI analysis found damage with possible enduring consequences.

What they did: Emory University's AI health institute collaborated with researchers from North America, Europe and Asia to analyze CT scans from more than 3,400 patients across multiple institutions.

- They created 3D AI models of the lungs of people without COVID-19, patients with mild COVID-19, and those whose severe COVID-19 cases required ventilators.

What they found: Significant lung shape differences were observed along areas between the lungs across all severity levels of the disease.

- Differences were also seen on basal surfaces of the lung when comparing healthy, non-COVID-19 and severe COVID-19 patients.

Threat level: Researchers suggest the deformations could impair lung function, affecting one's quality of life and potentially increasing overall mortality.

- Experts have already found that COVID-19 can cause pneumonia, severe lung damage and blood infections possibly resulting in lung scarring and chronic breathing issues. Some people fully recover. Others may suffer permanent damage.

What they're saying: Understanding how COVID-19 affects the lungs early on can help us understand and treat the disease, study author Amogh Hiremath said in a press release.

Caveat: The study has limitations. For instance, the authors describe it as "retrospective in nature."

- The clinical practicality of the AI used needs to be validated by following patients until discharge, the study states.

What's next: Future studies will explore lung shape differences among COVID-19 patients as a biomarker in the context of long COVID, researchers say.

Go deeper: AI tool forecasts new COVID variants

3. Training data

- HeyGen, a startup that helps users create their own personal deepfakes, has raised $60M in Series A funding, led by Benchmark. (Axios, HeyGen)

- Trading places: HeyGen also announced it has hired former Asana CMO Dave King as chief business officer, former HubSpot engineering VP Rong Yan as chief technology officer, and Lavanya Poreddy, formerly of Match Group and Meta, as its head of trust and safety.

- AI is changing warfare — in ways that go way beyond killer robots. (The Economist)

4. + This

Check out these black bears tearing through a mock campsite at the Oakland Zoo. The zoo aimed to show what can happen when people fail to make their campsite bear-proof.

Thanks to Megan Morrone and Scott Rosenberg for editing this newsletter and to Caitlin Wolper for copy editing it.
