Axios AI+

November 16, 2023

Hi, it's Ryan, just landed in London on the heels of Web Summit in Lisbon. Today's AI+ is 1,206 words, a 4.5-minute read.

1 big thing: More data makes AI less safe

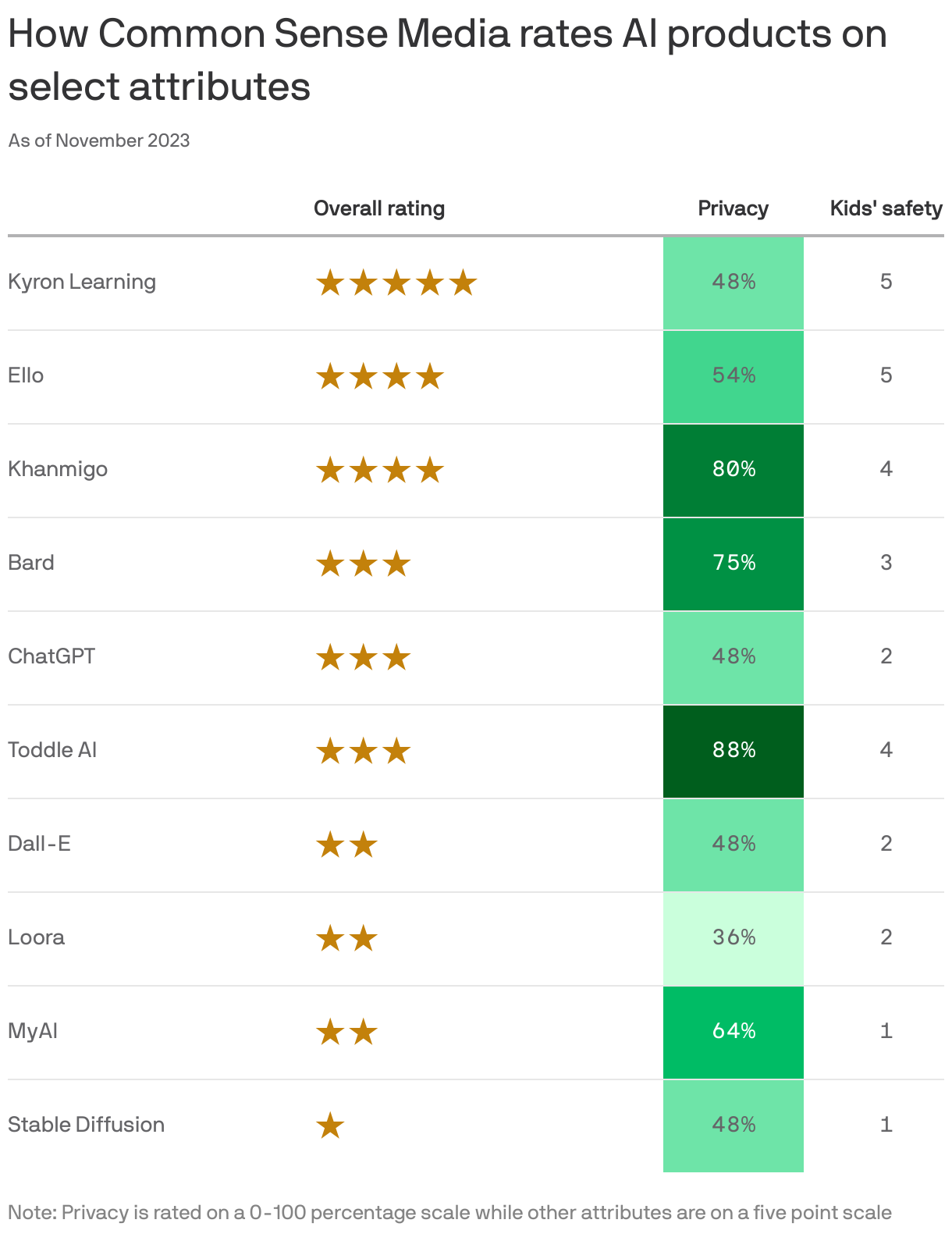

The more data used to train a particular AI tool, the less safe it's likely to be, according to a detailed study from Common Sense Media that rated 10 of the most popular AI products for privacy, ethical use, transparency, safety and impact, reports Axios' Megan Morrone.

Why it matters: Leaders in AI like Google and OpenAI are racing to build ever-bigger models trained on mountains of data that also keep getting bigger, but Common Sense's finding suggests that might be a risky plan.

The big picture: While governments and courts debate AI regulation, people using generative AI tools, especially parents and educators, need guides on how to prevent harm, including protecting their privacy and avoiding misinformation, bias and other harmful content.

- Common Sense calls its reviews "nutrition labels" for AI. They outline a product's potential benefits as well as its limitations and risks.

What they found: The AI products trained on the most selective data sets were the safest and those trained on the broadest data were the riskiest, the review team concluded.

- Google's Bard and OpenAI's ChatGPT scored only three out of five stars overall and scored 75% and 48% respectively for privacy.

- The product that scored the highest, with five out of five stars overall, was Kyron Learning, an AI-powered math tutor for fourth graders.

What they're saying: Tracy Pizzo Frey, who leads the Common Sense AI ratings and review programs, tells Axios it's notable that Kyron isn't using generative AI: "They're using conversational AI, and then something called dialog modeling, where they're retrieving very specific videos that were created by teachers."

- Common generative AI products like ChatGPT and Bard do the opposite. "Large language models aren't built by adding data or specific websites or aspects of the internet over time," Pizzo Frey says. "They're scraping large amounts of data from common datasets. We don't know the specifics of most of them anymore."

- If the big companies had focused more on what was included in the data sets as opposed to what was excluded, their products would be less likely to generate harmful content, she says, "but that would require starting over."

What's next: While these first ratings are designed more for a "thought leader audience," the idea is to learn from them in order to give future audiences the guidance they need. "We hope that they will be useful for parents and educators," she says.

- Pizzo Frey, a former Google employee who founded and led Google Cloud's responsible AI work, said that while a lot of AI companies tout transparency, it's "often deeply technical in nature." Giving non-technical people "a better understanding of how these products work is increasingly important in a more AI-enabled world," she added.

Big picture: Common Sense, which helps parents choose media products for their kids and lobbies around related issues, isn't the only organization trying to put labels on AI to increase transparency.

- Among businesses, Twilio and Salesforce both have labeling efforts to make it clear to clients how their generative AI products use customer data. IBM also just launched its own "nutrition label" effort.

Yes, but: Labeling isn't a simple matter of input and output, but of how people and businesses are using these tools.

- Generative AI is "really best for creative use cases, as opposed to being depended on for factual accuracy," Pizzo Frey said. "And all of those companies are saying that, but they don't necessarily say that clearly upfront all the time for users as they're using the product."

2. AI is already great at faking media, experts say

Nearly every respondent (95%) in a new Axios-Generation Lab-Syracuse University AI Experts Survey described AI's audio and video deepfake capabilities as "advanced," Axios' Margaret Talev reports.

- 68% said the capabilities are moderately advanced; 27% said they are highly advanced.

The big picture: A plurality of respondents (39%) in our national survey of computer scientists from top universities said digital authenticity certification is the most effective strategy to offset AI deepfakes. That's followed by public education on deepfakes (29%), improved detection technology (18%) and stricter regulations (13%).

What they're saying: "This is a really authoritative alarm here: 'Hey, we have a deepfake problem,'" Generation Lab CEO Cyrus Beschloss told Axios.

- 62% said misinformation will be the biggest challenge to maintaining the authenticity and credibility of news in an era of AI-generated articles.

Transparency about AI-generated news (33%) and independent fact-checking (32%) topped the list of ways to ensure trustworthy news reporting going forward, respondents said.

- Editorial oversight (19%) and improved media literacy (16%) were also seen as important protections.

What we're watching: 56% of respondents said they believe AI can help journalists produce and disseminate local news, whether a little (39%) or a lot (17%). One in four respondents said AI could instead hurt local news.

How it works: The survey includes responses from 216 professors of computer science at 67 of the top 100 U.S. computer science programs, as defined by SCImago Journal rankings.

This Axios-Generation Lab-Syracuse University AI Experts Survey was conducted Oct. 25-30, 2023.

3. Microsoft debuts its own chips to power AI

Image: Microsoft

Microsoft announced two homegrown chip designs Wednesday that will be put to work in its data centers alongside processors from Intel, AMD and Nvidia, Ina reports.

Why it matters: Cloud powerhouses, including Amazon, Google and others, are increasingly offering their own silicon options in addition to those created by traditional chipmakers.

Details: At its Ignite conference on Wednesday, Microsoft announced the Microsoft Azure Maia 100 AI Accelerator along with the Azure Cobalt 100 CPU, an ARM-based chip for other cloud work.

- The Maia chip is designed to "power some of the largest internal AI workloads running on Microsoft Azure," Microsoft said, adding that it is also working with OpenAI to use the chip with that company's technology.

- The chips are being made for Microsoft by TSMC using its 5-nanometer manufacturing process.

Between the lines: Microsoft made clear it isn't looking to the new chips to replace existing suppliers, but rather to offer customers another choice.

The big picture: Microsoft also used the Ignite conference to announce Copilot AI Studio and to rebrand Bing Chat as Microsoft Copilot.

4. Training data

- Sen. John Thune (R-S.D.) has released a bipartisan Artificial Intelligence Research, Innovation and Accountability Act, which would require the National Institute of Standards and Technology to develop content provenance and detection standards. (Axios Pro)

- Meta said it is moving most of its Responsible AI team into its Generative AI unit run by Ahmad Al-Dahle, with a smaller number becoming part of its AI infrastructure team. Meta told Axios there will be no layoffs and it is adding resources to the Responsible AI team.

- Americans are flocking to TikTok for news amid a growing preference for digital over television news. (Axios)

- Ed Newton-Rex quit his job as VP of audio at Stability AI, saying he disagrees with the company's view that training generative AI models on copyrighted works is fair use. (Music Business Worldwide)

- Scientists behind GraphCast say they've used AI to dramatically improve 10-day-out weather forecasting. (Science)

- On tap: The Senate Special Committee on Aging will hold a hearing on "Modern Scams: How Artificial Intelligence is Fueling Fraud & How We Can Fight Back" at 10am ET, with testimony from victims and experts.

5. + This

Check out this 3D reconstruction of Tenochtitlan, capital of the Aztec Empire, by Thomas Kole.

Thanks to Megan Morrone and Scott Rosenberg for editing this newsletter.

Scoops on the AI revolution and transformative tech, from Ina Fried, Madison Mills, Ashley Gold and Maria Curi.