Axios AI+

February 17, 2026

Ina here, excited that the Canadian and American women's hockey teams won their semifinals yesterday, setting up a battle for gold between the top rivals. Today's AI+ is 1,182 words, a 4.5-minute read.

1 big thing: Pentagon threatens to punish Anthropic

Defense Secretary Pete Hegseth is "close" to cutting business ties with Anthropic and designating the AI company a "supply chain risk" — meaning anyone who wants to do business with the U.S. military has to cut ties with the company, a senior Pentagon official told Axios.

- The senior official said: "It will be an enormous pain in the ass to disentangle, and we are going to make sure they pay a price for forcing our hand like this."

Why it matters: That kind of penalty is usually reserved for foreign adversaries.

Chief Pentagon spokesman Sean Parnell told Axios: "The Department of War's relationship with Anthropic is being reviewed. Our nation requires that our partners be willing to help our warfighters win in any fight. Ultimately, this is about our troops and the safety of the American people."

The big picture: Anthropic's Claude is the only AI model available in the military's classified systems, and is the world leader for many business applications. Pentagon officials heartily praise Claude's capabilities.

- As a sign of how embedded the software already is within the military, Claude was used during the Maduro raid in January, as Axios reported on Friday.

Breaking it down: Anthropic and the Pentagon have held months of contentious negotiations over the terms under which the military can use Claude.

- Anthropic CEO Dario Amodei takes these issues seriously, but is a pragmatist.

- Anthropic is prepared to loosen its current terms of use, but wants to ensure its tools aren't used to spy on Americans en masse, or to develop weapons that fire with no human involvement.

The Pentagon claims that's unduly restrictive, and that there are gray areas that would make it unworkable to operate on such terms. Pentagon officials are insisting in negotiations with Anthropic and three other big AI labs — OpenAI, Google and xAI — that the military be able to use their tools for "all lawful purposes."

2. AI concentration is everywhere

AI is concentrating stocks, bonds, private credit — and even the broader economy — around a single bet.

Why it matters: Tech companies are projected to issue over $1 trillion in debt to fund their AI goals this year. Yet there's little evidence the technology can be monetized at a scale that justifies the bet.

Hyperscalers — the AI data-center giants — could spend up to $700 billion from their balance sheets on AI this year, while issuing eye-popping debt to access even more capital, according to UBS.

- The eight largest companies, all tech firms with AI ambitions, make up nearly half of the S&P 500.

- More than half of invested venture-capital dollars went to AI firms in 2025, according to PitchBook.

Threat level: If AI can't be monetized effectively, investors will quickly move to reprice the value of Big Tech, reverberating across:

- The stock market: AI stocks were responsible for about 70% of the S&P 500's 2025 gains, according to JPMorgan. If those stocks fall, so will the market.

- The economy: AI capex drove over 90% of economic growth in the first half of 2025, Harvard economist Jason Furman says. If investors pull back, executives may slow spending, which could then weigh on GDP.

- Entire financial systems: Private credit — a booming Wall Street industry that lets companies bypass traditional banks to borrow money — has bet heavily on AI. Moody's warns that because banks, insurers and other investors are exposed to those funds, a hit to AI could cascade outward and trigger wider financial "contagion."

3. AI's big biosecurity blind spot

Researchers from Johns Hopkins, Oxford, Stanford, Columbia and NYU are calling for guardrails on certain infectious disease datasets that could enable AI to design deadly viruses.

Why it matters: Once high-risk biological data hits the open web, it can't be recalled — and regulation won't matter if the knowledge itself is already widely distributed.

Driving the news: An international group of more than 100 researchers has endorsed a framework to govern certain biological data the same way we handle sensitive health records.

What's inside: The proposed framework isn't meant to slow science. The authors argue that most biological data should stay open.

- Only a narrow band that materially increases potential misuse should be protected, they say.

- "Responsible governance and scientific progress are not contradictions," according to the framework.

How it works: Training models on datasets that link viral genetics to real-world traits — like transmissibility or immune evasion — could lower the barrier to designing dangerous pathogens.

Zoom in: The concern isn't about off-the-shelf versions of ChatGPT and Claude, says Jassi Pannu, assistant professor at the Johns Hopkins Center for Health Security and one of the authors of the framework.

- Some AI models for biological research use architectures similar to large language models — but trained on DNA instead of text. Researchers found that systems built to understand human language can also learn the "language" of genetics.

- Some developers voluntarily decided not to train their models on virology data because they were worried about putting that capability into the world.

Zoom out: If the data exists on the web, third parties who may not follow the same safeguards can take those models and fine-tune them on the data that's out there.

- "Legitimate researchers should have access," Pannu said. "But we shouldn't be posting it anonymously on the internet where no one can track who downloads it."

The intrigue: "Right now, there's no expert-backed guidance on which data poses meaningful risks, leaving some frontier developers to make their best guess and voluntarily exclude viral data from training," Pannu says.

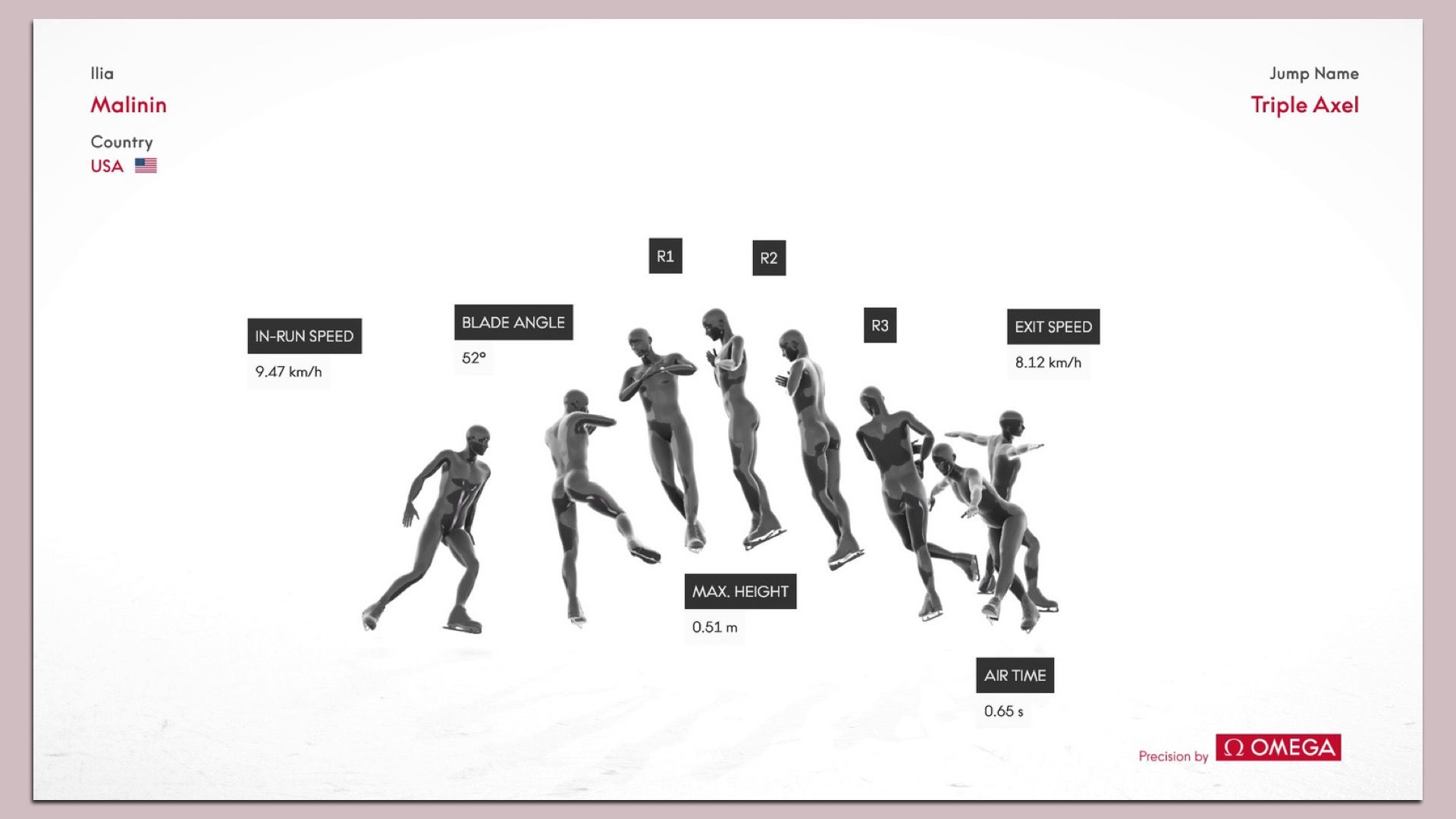

4. AI could help judge Olympic figure skating

An AI innovation making its Winter Olympics debut could help figure skating judges determine whether an athlete landed a fast-twirling move.

The big picture: Omega, the official Olympic timing and measurement provider, has installed an array of 14 cameras to track athletes in motion.

How it works: Omega uses its camera data to create a heat map of where skaters are concentrating their moves and to measure jump height, length and rotation.

- "We're down to millimeters in the detection of the blade," said Alain Zobrist, CEO of Omega's timing unit. AI can detect movements that "couldn't be seen with the naked eye," he added.

- Omega's capabilities could eventually support judging decisions.

Zoom in: For now, Omega is providing this information to broadcasters. Judges at international competitions could get access later this year.

Yes, but: There's a limit to how much technology can aid in sports like figure skating, where a significant portion of the score comes down to the judge's discretion.

5. Training data

- OpenAI hired Peter Steinberger, the creator of the viral, open-source AI program OpenClaw. (Bloomberg)

- Meta largely fails to protect kids from its own chatbots. (Axios)

- ByteDance said it would curb its AI-powered video tool after receiving a cease-and-desist letter from Disney. (The Guardian)

6. + This

I've been trying out a fisheye lens at the Olympics. Here's a shot from the women's hockey semifinal between Canada and Switzerland.

Thanks to Megan Morrone for editing this newsletter and Matt Piper for copy editing.
