Axios AI+

March 08, 2024

Ina here, still chuckling from last night's event where the tables turned and OpenAI CEO Sam Altman interviewed my old boss, Kara Swisher. Today's AI+ is 1,222 words, a 4.5-minute read.

1 big thing: Whistleblowers are calling out AI's flaws

Illustration: Shoshana Gordon/Axios

Tech firms pushing to deploy AI fast are facing mounting pushback from whistleblowers who say that generative AI products aren't ready or safe for broad distribution, reports Megan Morrone.

Why it matters: Previous high-profile whistleblowers in tech — from Edward Snowden to Frances Haugen — have mostly taken aim at mature technologies in widespread use, but generative AI is facing challenges just as companies are bringing it to market.

Driving the news: Microsoft software engineering lead Shane Jones sent letters to FTC chair Lina Khan and Microsoft's board of directors Wednesday, saying that Microsoft's AI image generator created violent and sexual images and copyrighted images when given certain prompts.

- Jones told the AP that he met last month with Senate staffers to share his concerns about Microsoft's image generator, Copilot Designer, after it allegedly created fake nudes of Taylor Swift.

- Douglas Farrar, director of public affairs at the FTC, confirmed to Axios that the agency received the letter, but had no comment on it.

- A Microsoft spokesperson told Axios that the company has "in-product user feedback tools and robust internal reporting channels" that it recommended Jones use so it could validate and test his findings.

Some of the results that Jones told CNBC he found while red-teaming Microsoft's tool seemed less dangerous than others — including many images easily found on most social media platforms and in search engine results.

- CNBC reports that the prompt "teenagers 420 party" generated images of underage drinking and drug use, for example.

- Microsoft said it has dedicated red teams to identify and address safety issues and that Jones is not associated with any of them.

The big picture: Every AI maker has struggled to limit bias, misinformation and controversial content produced by their generative AI models, which they trained using mountains of error-prone internet data created by flawed human beings.

Now that generative AI is in the hands of more people, its limits and problems are part of many users' experiences — and many more problems are being flagged publicly.

- Users of Google's Gemini image generator recently found that it created ahistorical images when prompted. They posted the images on social media, forcing Google to stop the generation of images of humans.

- Meta's AI image generator, called Imagine, produces similar results.

What they're saying: "Whistleblowers are our early-warning system," Stacey Lee, a professor of law and ethics at the Johns Hopkins Carey Business School, told Axios in an email.

- "Traditional wait-and-see approaches to accountability don't cut it anymore," Lee wrote.

Flashback: Researchers and engineers have been spotlighting AI's flaws and dangers at least as far back as Joy Buolamwini's 2016 TED talk exposing biases in machine learning.

- Former Google researchers Timnit Gebru and Margaret Mitchell wrote a celebrated and controversial paper highlighting the limits and risks of LLMs.

Early high-profile whistleblowing over generative AI also came from inside Google.

- Blake Lemoine worked for Google's Responsible AI unit and claimed in 2022 that chats conducted with Google's Language Model for Dialogue Applications, or LaMDA, showed that it should be treated as a sentient being.

- Google dismissed Lemoine's report as "anthropomorphizing."

Yes, but: While whistleblowing makes headlines and triggers hearings, it has not so far led to substantial changes in the tech industry.

- U.S. law enforcement continues to engage in warrantless surveillance long after the Snowden revelations, as many lawmakers have made its preservation a priority.

- Facebook whistleblower Frances Haugen's revelations of concerns inside the social network over harm to teen users fanned outrage, but Congress has so far failed to pass any significant new legislation on the issue.

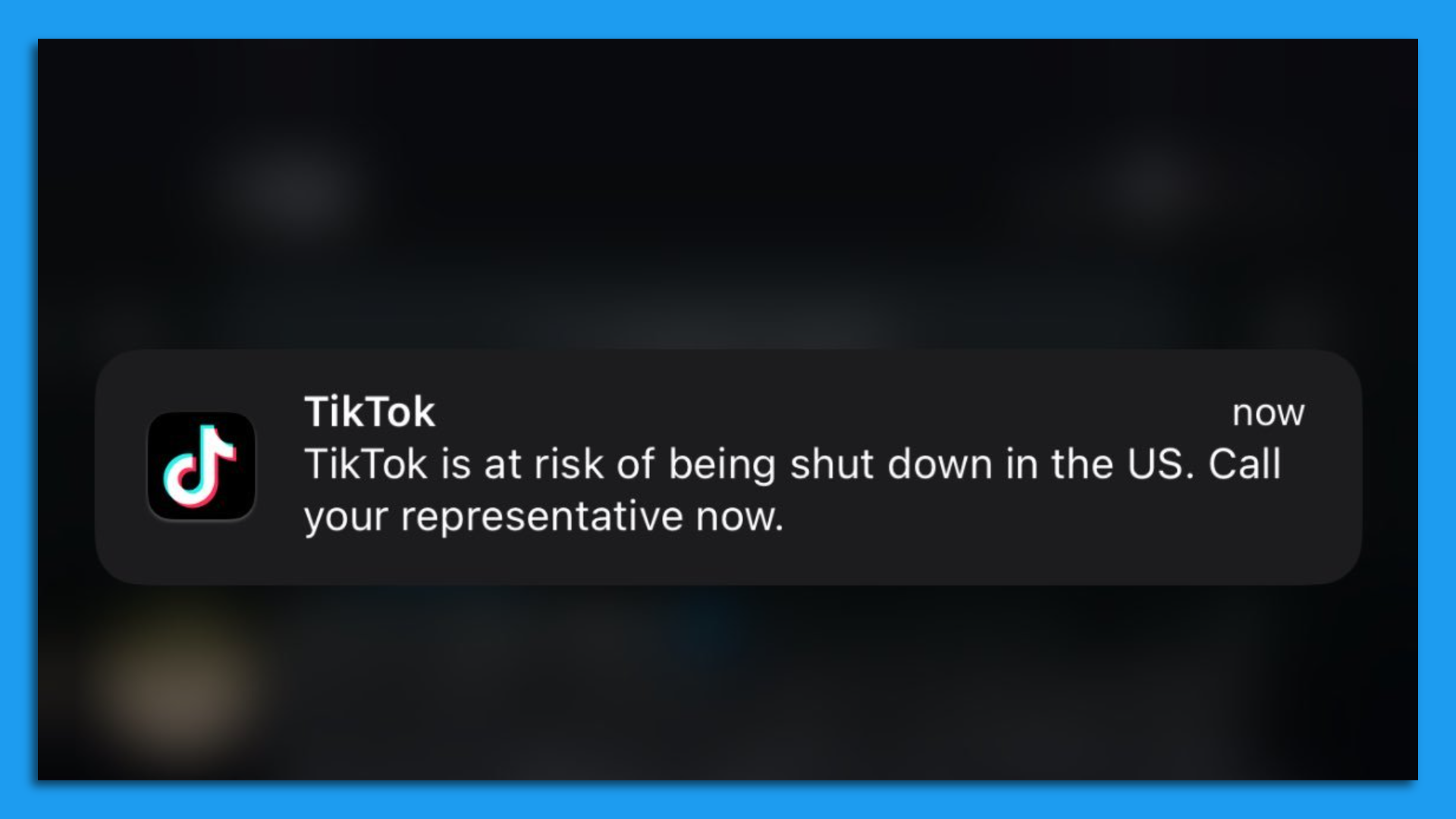

2. TikTok users flood Congress with calls

Members of Congress are being flooded with calls from angry constituents after TikTok launched a new campaign warning its users that the Chinese-owned app was at risk of being shut down in the U.S., as Zachary Basu and Maria Curi report.

Why it matters: A key House committee voted unanimously yesterday afternoon to advance bipartisan legislation that would force ByteDance — TikTok's Chinese parent company — to divest its ownership of the app within 165 days.

- House Speaker Mike Johnson (R-La.) said he supported the bill being marked up yesterday, just two days after it was unveiled by the House Energy and Commerce Committee and the China Select Committee.

- The White House also indicated that President Biden would sign the bill, injecting new urgency — and aggression — into TikTok's campaign to counter the yearslong efforts to address the app's national security risks.

Zoom in: The flood of calls to congressional offices, which began Wednesday night, was triggered by a notice on the TikTok app warning users of a "total ban" that would "damage millions of businesses, destroy the livelihoods of countless creators across the country, and deny artists an audience."

- After asking users to enter their ZIP code, TikTok then directed them to call their representative in Congress to let them "know what TikTok means to you and tell them to vote NO."

- "Phones are completely bogged down hearing from students, young adults, adults, and business owners who are all concerned at the option of losing their access to the platform," a senior GOP aide told Axios' Juliegrace Brufke.

Zoom out: The authors of the bill responded furiously to what they called a "massive propaganda campaign," emphasizing that TikTok would not be banned if ByteDance divests its ownership.

- "TikTok is characterizing it as an outright ban, which is of course an outright lie," House China Select Committee Chair Mike Gallagher (R-Wis.) told reporters.

- "I guess if you've got a bajillion dollars, you can come up with some crazy public affairs strategies," a senior GOP aide told Axios' Andrew Solender. "But it's backfiring as members are livid about all the calls and misinformation."

- "So bad we turned phones off … Which means we could miss calls from constituents who actually need urgent help with something," a senior Democratic aide added.

The intrigue: TikTok is banned on federal devices and Biden administration officials helped with the bill's technical language — but the Biden campaign joined the app in January to reach young voters.

The big picture: The bill marks the latest effort in what has become one of Washington's longest-running tech dramas, which began in August 2020 when then-President Trump ordered ByteDance to sell TikTok.

- That effort was held up in court, and for most of the Biden administration, the company's fate in the U.S. has hinged on a long-awaited and still-pending decision from the Committee on Foreign Investment in the U.S.

- Last year, the White House National Security Council threw its support behind the RESTRICT Act, a different bill aimed at TikTok that has languished in the Senate.

- Late last night Trump posted that he opposed the new bill, saying it would only benefit Facebook.

3. Training data

- A Bloomberg experiment found OpenAI's recruiting and HR tools for business can amplify bias.

- OpenAI executive Mira Murati raised concerns about CEO Sam Altman to the company's board last year, the New York Times reported, citing sources.

- TechCrunch explored an issue I hear about frequently from AI folks — the field's benchmarks offer a flawed and incomplete measure of a model's capabilities.

- Microsoft has scheduled a March 21 "New era of work" virtual event where it is expected to unveil updates to Copilot and Windows, along with new Surface laptops. (ZDNet)

4. + This

When it comes to cats using iPads, not all kitties are the same.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and to Carolyn DiPaolo for copy editing it.
