Axios AI+

February 14, 2025

Happy Friday. It's also the start of the NBA's All-Star Weekend. Since it's taking place in San Francisco, I'll be doing a bunch of behind-the-scenes reporting on how AI and other tech are being adopted. We'll be off Monday for Presidents Day and back Tuesday. Today's AI+ is 1,215 words, a 4.5-minute read.

1 big thing: Axios interview — Google's Hassabis warns against AI "race"

The more artificial intelligence becomes a race, the harder it is to keep the powerful new technology from becoming unsafe, Google DeepMind CEO Demis Hassabis told me in a wide-ranging interview at this week's AI Action Summit in Paris.

Reality check: Rules to control AI only work when most nations agree to them, Hassabis said, and that's only getting harder — as made clear by the summit's inconclusive outcome.

"It seems to be very difficult for the world to do," said Hassabis — who won a Nobel Prize last year and now leads Google's AI work. "Just look at climate. There seems to be less cooperation. That doesn't bode well."

- Indeed, at the Paris Summit, the U.S. and U.K. refused to sign on to a communique on AI safety that had already been criticized for lacking enforceable commitments.

The big picture: Hassabis said the need for norms and rules grows as the world gets closer to so-called artificial general intelligence (AGI), meaning advanced AI systems that can do a broad range of tasks faster and better than humans.

- "But it has to be international," Hassabis said. "Otherwise you'll get nations competing and other things like that."

Hassabis doesn't have a specific recipe for creating that international cooperation, but he said it will need to involve governments, companies, academics and civil society.

- "It is too important for it only to be one set of people working on this," he said. "It's going to require everybody to come together — hopefully, in time."

Between the lines: Hassabis also stressed the need for a diverse collection of people to be involved in the development of AI, even as companies, including Google, move away from their programs to diversify their workforces, which remain largely white and male.

- "Research advances are better with a big diversity of thinking in your team," he said. "That's kind of well-proven in science and in research."

- Having a diverse set of voices in the room when it comes to deploying technologies is even more critical, he said, "because that's when it affects people's lives."

- "I think that's where you know you want the people that are being affected to have a say as to how those technologies get deployed," he said.

Open source AI has become linked in the public mind with both Meta and China, but Hassabis said Google is a "huge proponent of open science and open source."

- "We've open sourced many, many, many things in the past and obviously published almost all of our innovations, including transformers and AlphaGo, and all of the things that the modern industry is built on," he said. "Clearly that makes progress go faster."

- But he warned that the spread of open source AI only sharpens the technology's root ethical dilemma: "How do you stop bad actors repurposing general purpose technology for harmful ends?"

- "Powerful agentic systems are going to be built, because they'll be more useful, economically more useful, scientifically more useful. ... But then those systems become even more powerful in the wrong hands, too."

The bottom line: Hassabis said it's going to take effort to manage societal change even if the tech industry does manage to develop AI safely.

- "I think there needs to be more time spent by economists, probably, and philosophers and social scientists on what do we want the world to be like, even if we get everything right, post AGI," he said. "I'm surprised there's not very much discussion about that, given the relatively short timelines."

2. Hassabis on Google's military AI use shift

While Google has changed its position to allow greater military use of its AI technology, Hassabis told me the company will tread carefully.

- "The benefits have to substantially outweigh the risks of harm," Hassabis said during our interview at the AI Action Summit.

Driving the news: Google recently altered its AI principles to remove a section that prohibited military use.

- "Our values and our intent has not changed," Hassabis said. "We're the same people who we were yesterday."

What has changed, he said, is that large language models are now widely available rather than just in the hands of companies like Google and OpenAI.

- "Things are very different today than they were 8 to 10 years ago," he said. "There are tons of models available now — Chinese models, Western models, open source models, proprietary models. A lot of that is commoditized now."

- Also, he said, the geopolitics have shifted. "Western democratic values are under threat," Hassabis said. "We're duty bound to be able to help where we are uniquely suited and capable of helping."

- Specifically, Hassabis said that Google could play a role in helping ensure better defenses against AI-enabled cyber and biological attacks. "I think there are possibilities if research is done to accelerate defensive capabilities, sort of asymmetrically ahead of offensive capabilities."

Yes, but: Hassabis said he remains opposed to the notion of automated warfare.

- "I've been on record saying I don't think we should have lethal autonomous weapons, but some countries are building them," he said. "That's just a reality."

The bottom line: Hassabis said Google's policies remain committed to following international law and ensuring risks aren't taken lightly when Google engages in military-related applications.

- "I'm very comfortable we have the right setup and the right committees and things and the right people actually in senior positions to thoughtfully think those tricky tradeoffs through," he said.

3. Publishers sue Cohere for copyright violations

More than a dozen major U.S. news organizations yesterday said they were suing Cohere, claiming the enterprise AI firm illegally repurposed their work and tarnished their brands.

Zoom in: The federal lawsuit alleges Cohere engaged in unauthorized use of content from News Media Alliance publishers to power its AI products, which the publishers say violates copyright law.

- It argues Cohere will reproduce full articles, sometimes with reporters' bylines included, when prompted for a specific article, thus competing directly with the news publishers.

- As the New York Times did when it sued OpenAI and Microsoft in a similar case in 2023, the NMA lawsuit alleges Cohere served summaries from paywalled articles.

- The lawsuit also alleges Cohere violated publishers' trademark rights by passing off "inaccurate," "substandard" articles its AI had created as belonging to news publishers, tarnishing their brands.

Between the lines: The lawsuit represents the first official legal action against an AI company organized by the News Media Alliance, the largest news media trade group in the U.S.

The other side: "Cohere strongly stands by its practices for responsibly training its enterprise AI," company spokesperson Josh Gartner told Axios.

- "We have long prioritized controls that mitigate the risk of IP infringement and respect the rights of holders."

What's next: As part of the lawsuit, the publishers are seeking a permanent injunction prohibiting Cohere from using their works.

4. Training data

- The U.K.'s AI Safety Institute is changing its name to the AI Security Institute, to signal that AI is a technology "we need to supercharge, not suppress." (Axios Pro)

- TikTok is back in Google's app store and Apple's, too, but the company is still in legal limbo. (Axios)

5. + This

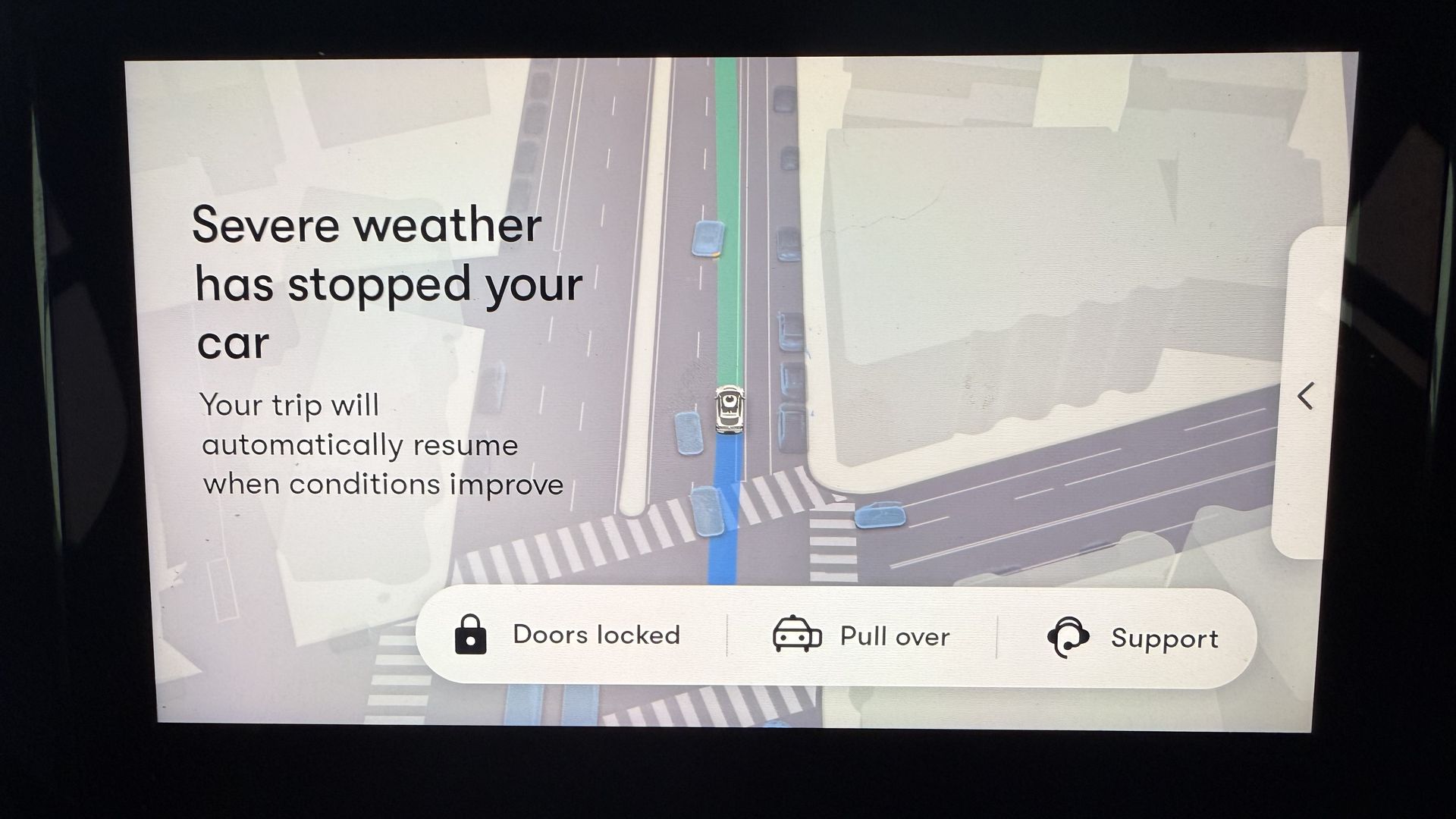

I've never had a Waymo pull over because of rain — until yesterday. I was headed to Moscone Center and it started raining heavily. So the Waymo pulled over for a bit, resuming after the rain had eased up. Good for it to know its limitations, I guess.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing it.
