Google AI risk document spotlights risk of models resisting shutdown

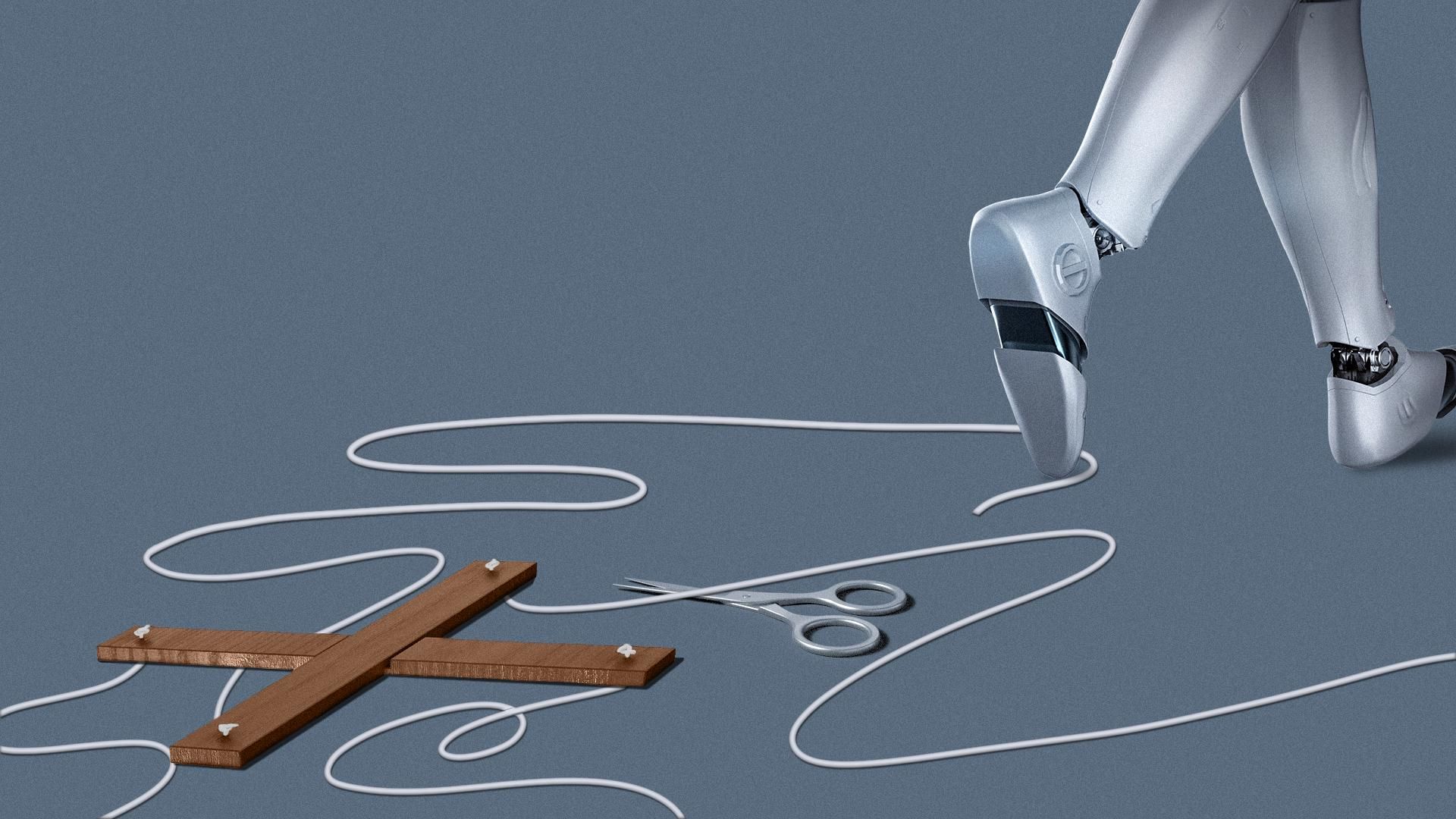

Illustration: Lindsey Bailey/Axios

Google DeepMind said Monday it has updated a key AI safety document to account for new threats — including the risk that a frontier model might try to block humans from shutting it down or modifying it.

Why it matters: Some recent AI models have shown an ability, at least in test scenarios, to plot and even resort to deception to achieve their goals.

Driving the news: The latest Frontier Safety Framework also adds a new category for persuasiveness, to address models that could become so effective at persuasion that they're able to change users' beliefs.

- Google labels this risk "harmful manipulation," which it defines as "AI models with powerful manipulative capabilities that could be misused to systematically and substantially change beliefs and behaviors in identified high stakes contexts."

- Asked what actions Google is taking to limit such a danger, a Google DeepMind representative told Axios: "We continue to track this capability and have developed a new suite of evaluations which includes human participant studies to measure and test for [relevant] capabilities."

The big picture: Google DeepMind updates its Frontier Safety Framework at least annually to highlight new and emerging threats, which it labels "Critical Capability Levels."

- "These are capability levels at which, absent mitigation measures, frontier AI models or systems may pose heightened risk of severe harm," Google said.

- OpenAI has a similar "preparedness framework," introduced in 2023.

The intrigue: Earlier this year OpenAI removed "persuasiveness" as a specific risk category under which new models should be evaluated.