Video-making AI tools are headed into general use

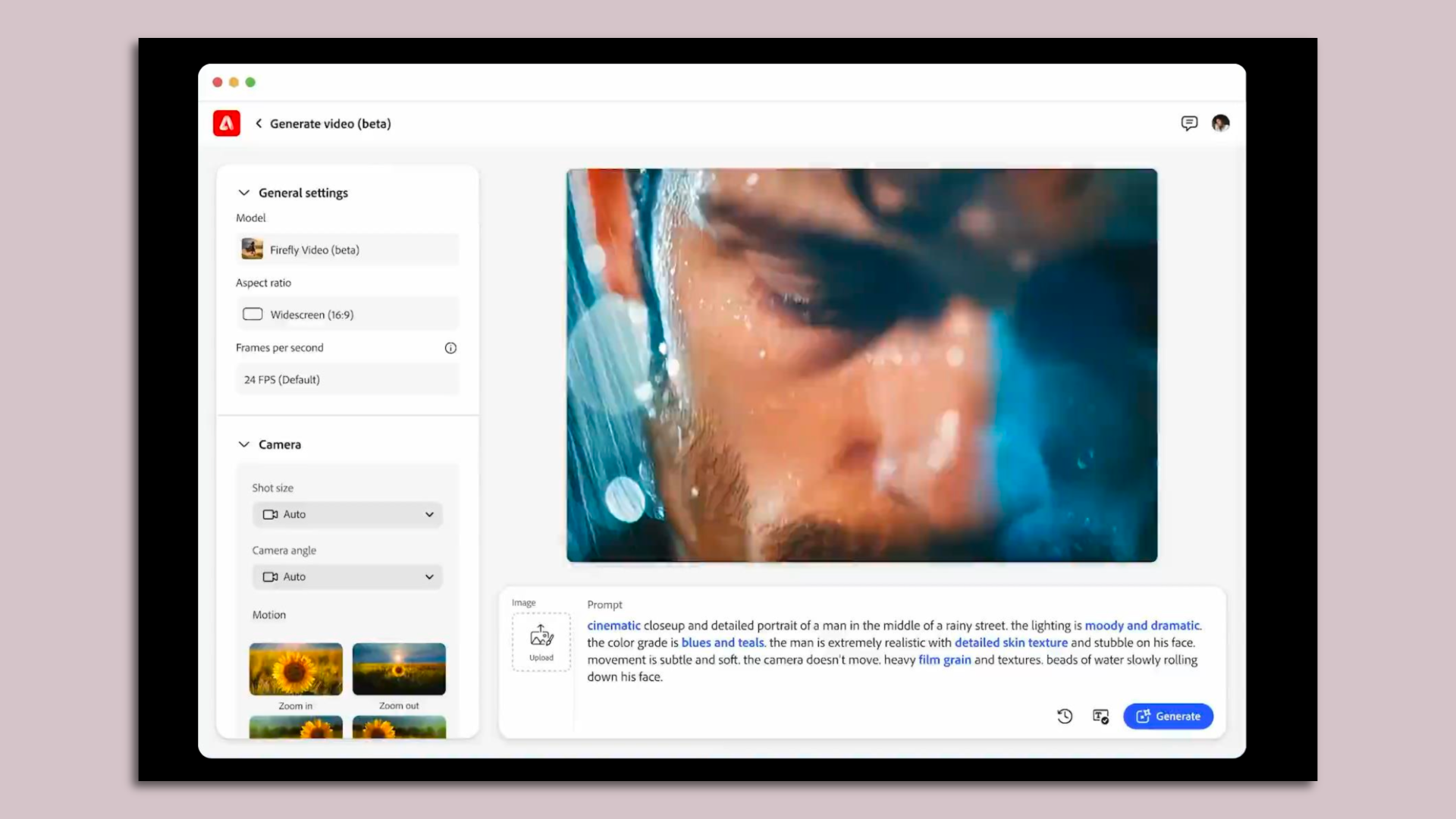

Adobe's Firefly Video Model in action. Image: Adobe

For years, tech companies have been showing off tools that use AI to create short video clips, but now far more people are going to get their hands on them.

Why it matters: The ability to turn a photo or a prompt into several seconds of video opens up new creative avenues, while also offering millions an opportunity to create lifelike deepfake videos.

Driving the news: Adobe on Monday released a public beta of its Firefly Video Model, allowing its Creative Cloud subscribers to turn ideas and photos into short video clips.

- Adobe also added a Generative Extend feature to a beta version of Premiere Pro that uses generative AI to lengthen footage that has already been captured.

The big picture: Meta recently unveiled Movie Gen, a more powerful AI engine, with the aim of bringing it to customers next year.

- OpenAI's demo of its Sora video-making tool wowed observers earlier this year, and last month Google added AI tools based on its Veo model to YouTube Shorts.

Zoom out: As video turns into the next big frontier in AI content creation, the industry is racing into a new competitive brawl over capabilities and speed.

- At the same time, the industry is searching for tools and technologies to limit the havoc a flood of AI-made videos could wreak through misinformation, intellectual property theft, nonconsensual pornography and more.

Case in point: Startup Truepic, which specializes in verifying the legitimacy of photos and video, announced Tuesday that it has uploaded the first video to YouTube that includes end-to-end content credentials verifying its authenticity.

- The video itself features Truepic CEO Jeff McGregor recreating the first video uploaded to the site 19 years ago — a scene filmed at the San Diego Zoo.

- For the authenticated video, Truepic used its Lens secure camera software and then applied content credentials developed by the C2PA consortium, which emerged out of Adobe's Content Authenticity Initiative.

Yes, but: Video creation services are expensive for AI companies to run and pose added safety risks.

- Adobe is limiting use of the Firefly Video Model to paid Creative Cloud customers, which helps manage demand. Among other restrictions, it also prohibits generating videos of children and public figures, in an effort to minimize harmful uses.

Even with those limits, Adobe acknowledges that by enabling a video clip to be generated from a single image, it is putting a powerful ability in the hands of many creators.

- "It's a new level of responsibility we're putting in creatives' hands," Adobe CTO Ely Greenfield told Axios. "The onus is on the creator to make sure that they have permission from the actor to do that, that they're behaving responsibly."

- Meanwhile, Truepic, Adobe and others are trying to spur the adoption of content credentials as a way to both label AI-generated content and verify that content captured via cameras has not been altered.

Between the lines: Applying labels to content made using popular AI tools is important, says Truepic's McGregor, but not sufficient, "because bad actors will use open source models that do not have this [technology]."

- That's where authentication technology built into cameras becomes necessary. Leica and a handful of other hardware makers have adopted it.

What we're watching: The biggest impact would come if Apple and Google built this technology into the default iOS and Android camera apps, since that's where the majority of photos are taken.