YouTube tightens hate speech policies

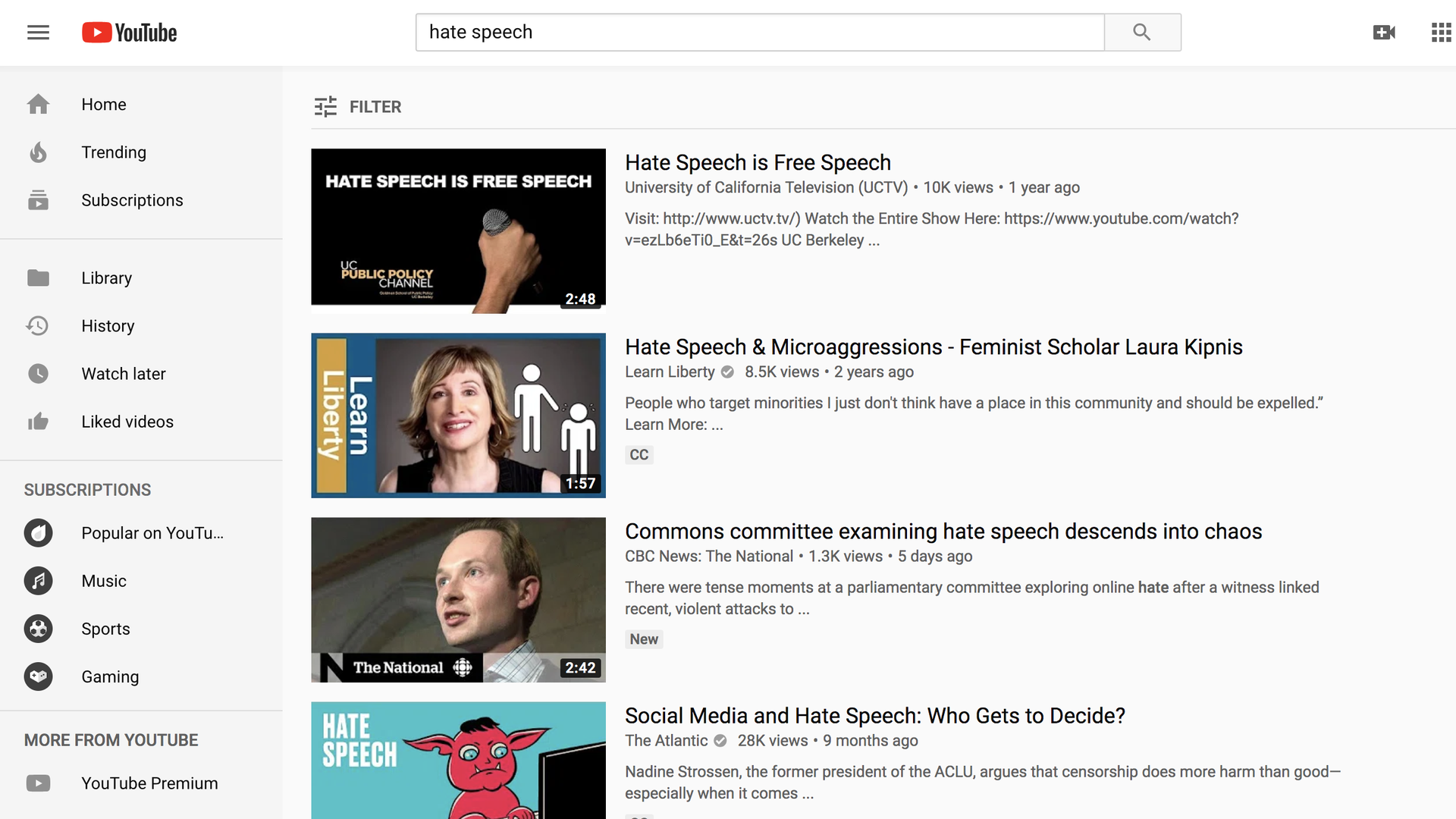

Screenshot of a search for "Hate Speech" on YouTube.com

YouTube today is announcing three changes designed to limit the posting and spreading of hate speech, even as the Google-owned video site faces fresh complaints that it is allowing such content to flourish.

Why it matters: YouTube has been promising to improve both its policies and recommendation algorithms, but big problems persist.

Prohibiting claims of group superiority: The site now specifically bans videos alleging that one group is superior "in order to justify discrimination, segregation or exclusion based on qualities like age, gender, race, caste, religion, sexual orientation or veteran status."

- YouTube says this will prohibit, for example, videos that promote Nazi ideology.

- It also says it will bar videos that deny the existence of "well-documented violent events" like the Holocaust or the Sandy Hook shootings.

- The company expects that thousands of videos and channels will be taken down as a result of this change.

Changing which videos get recommended: YouTube says it will classify more content as "borderline," which excludes it from recommendations and monetization.

- When someone is watching borderline content, YouTube says its algorithm will start to recommend more authoritative content as the next video.

- The move aims to remedy the problem of "rabbit holes" — recommendation sequences of videos with ever more extreme perspectives that some critics say YouTube's algorithm promotes and that can have the effect of radicalizing viewers.

Tightening the ad revenue spigot: YouTube says it will turn off monetization options for creators who "repeatedly brush up against our hate speech policies."

Meanwhile: Supporters of Carlos Maza, a video producer at Vox, are furious with YouTube for not taking action against popular conservative creator Steven Crowder, who has directed a number of slurs and insults at Maza over the years.

- YouTube has also come under fire after a New York Times report detailed how its algorithm has been recommending unrelated, individually innocuous videos of children in sequence, effectively creating a highlight reel for pedophiles. (YouTube says it is taking separate action to address the problem.)

The bottom line: How effective these moves prove to be will depend on how YouTube enforces its policies, especially in cases that aren't as clear-cut as explicit Nazi propaganda.

- Reality check: YouTube says nothing in its new rules would have changed its decision in the Maza/Crowder case.