Axios Science

April 25, 2024

Thanks for reading Axios Science. This week's newsletter is 1,627 words, about a 6-minute read.

- Send your feedback and ideas to me at [email protected].

1 big thing: AI chips in space

Illustration: Annelise Capossela/Axios.

A raft of startups, companies and governments are trying to develop new chips to unlock AI's power in space.

Why it matters: The harsh conditions of space have so far limited the use of AI on board satellites that play a critical role in the space economy.

- AI could help fuel growth in the space industry, which some predict will be worth as much as $1.8 trillion by 2035 — on par with the semiconductor industry.

But right now the space industry is in "the Dark Ages," says former NASA administrator Dan Goldin. "Instead of doing edge computing, it is done in data centers and mission control."

- "If we want to have a real space industry, not a foo-foo space industry, we've got to put AI up there," he says.

The big picture: AI and machine learning algorithms have for years helped to analyze astronomical data, optimize operations, control satellites and rovers, and perform other space-related tasks.

- Some satellites can process data on board with AI, but for the most part, they download it to Earth for analysis — a process limited by available, and expensive, bandwidth.

- But the number of satellites in space, and of the sensors they carry, is multiplying fast. That generates more data and increases the risk that satellites could collide with one another or with dangerous space debris.

There are going to be "tens of thousands of satellites orbiting Earth and [we] can't possibly count on human intervention for them to avoid each other, to pair up with each other or to de-orbit," says Bill Weber, CEO of Firefly Aerospace.

- Putting AI devices on satellites could give them more autonomy to navigate using data they collect in real time.

- On-board AI processing can also help researchers better leverage the streams of data satellites collect to take the pulse of Earth's forests and fields, monitor methane emissions, and track illegal fishing and other activities and events.

- "Sensor data collection is growing exponentially, not only on Earth, but in space, whereas communication downlink technology is only growing linearly," says Paul Quintana of Untether AI. "You can't send all the data from space down anymore. You have to do on-orbit processing."

Zoom out: Space presents a few big challenges for computing.

- Radiation — a cumulative dose or an acute one — can fry digital circuits. The threat of potential space-based weapons that use radiation to jam satellites is adding to the pressure to develop systems that can withstand radiation.

- The vacuum of space makes it difficult to dissipate the heat generated by processors.

- Chips have to be able to withstand the high temperatures of a rocket launch and the low ones of space.

There's also limited power in space.

- A GPU in a data center on Earth has one kilowatt of power available to it versus less than one watt for an entire satellite in space, says Brandon Lucia, CEO and co-founder of Efficient and a professor at Carnegie Mellon University.

- Adding batteries and solar panels adds weight, which comes at a cost in space.

"Whenever you have technologies converging, it takes a while to build it out," says Tony Trinh, who leads advanced packaging technologies at Mercury Aerospace, one of the handful of places with facilities to test devices for space.

2. Part II: Seeking a space-worthy chip

Engineers are taking a range of approaches to tackling these problems.

- Untether, which is focused on processing large amounts of visual data, is making a chip that combines processors and memory. The design reduces how much data has to be moved in and out of the device, allowing it to perform more operations with less power, the company says.

- Efficient is developing a chip that can handle different types of computation: it can process other data for the satellite as well as run machine learning models. Lucia says it uses up to 100 times less energy to run the same program than an off-the-shelf CPU.

- Firefly offers a digital platform that can support data processing in real time in orbit. The company expects to launch a test of the platform for autonomous AI-enabled satellite navigation later this year.

- Still others are working on analog approaches, which are energy-efficient but noisier and prone to errors.

Between the lines: Chips in space today are decades-old technology. "Anytime you're designing a chip today [it's] for applications 20, 30, 40 years from now," says Heather Pringle, CEO of the Space Foundation and former commander of the Air Force Research Laboratory.

- She points to Voyager 1, a spacecraft that's now more than 15 billion miles from Earth running on nearly 50-year-old technology developed around the time when Atari was state-of-the-art.

- Voyager lost communication with Earth late last year, but engineers recently deployed a software fix and were able to re-establish contact.

- The best AI-enabled chips "should be reconfigurable so we can deploy new algorithms very quickly," Kiruthika Devaraj, VP of avionics and spacecraft technology at satellite company Planet, wrote in an email.

The bottom line: "We have launch vehicles, launch towers, spacecraft, onboard propulsion and antennas, and in the end, it comes down to the damn semiconductor," Goldin says.

3. Memorable images slow time

Illustration: Sarah Grillo/Axios

When we look at memorable images, time appears to slow down, a new study found.

Why it matters: The findings could help inform efforts to develop AI that can sense the passage of time, arguably a key component of human intelligence, and improve how we interact with such systems, the study authors say.

What they found: About 100 study participants were shown images of scenes, which varied across six sizes and six levels of clutter, for between 300 and 900 milliseconds.

- They were then asked to judge whether they saw the image for a long or short time.

- Participants were more likely to judge smaller, cluttered scenes as having been shown for less time than they actually were. Larger, sparser scenes, like an empty warehouse, appeared to be shown longer, Martin Wiener, a professor of psychology at George Mason University, and his colleagues report this week in the journal Nature Human Behaviour.

In another experiment, the researchers asked a different set of participants to look at images whose memorability had been previously rated.

- They found participants believed more memorable images were shown longer than they actually were and were remembered more precisely.

- And the longer an image seemed to be shown, the more likely a participant remembered it the next day.

- Using neural network models of vision, they found that more memorable images were processed faster and that how fast an image was processed was linked to how long it seemed to last.

The big question: "Why?" says Dean Buonomano, a professor of neuroscience at UCLA who wasn't involved in the study.

- "Is that a side effect of how the brain works or does it have a function?"

The intrigue: The finding suggests "we use time to gather information about the world around us," Wiener said in a press briefing.

- "[A]nd when we see things that are more important, more relevant, more memorable, we dilate our sense of time in order to get more information," he said.

- Yes, but: "It's not that everything that's longer must be more memorable," he said, adding that other factors can interfere with time perception.

What's next: Wiener said he hopes the study inspires more work in AI and computer vision fields to "build systems that track and record and measure time."

- "If we want to build AI that is interactive, that interacts with people the way we interact with each other, it needs to have a sense of time similar to ours."

4. Worthy of your time

5. Something wondrous

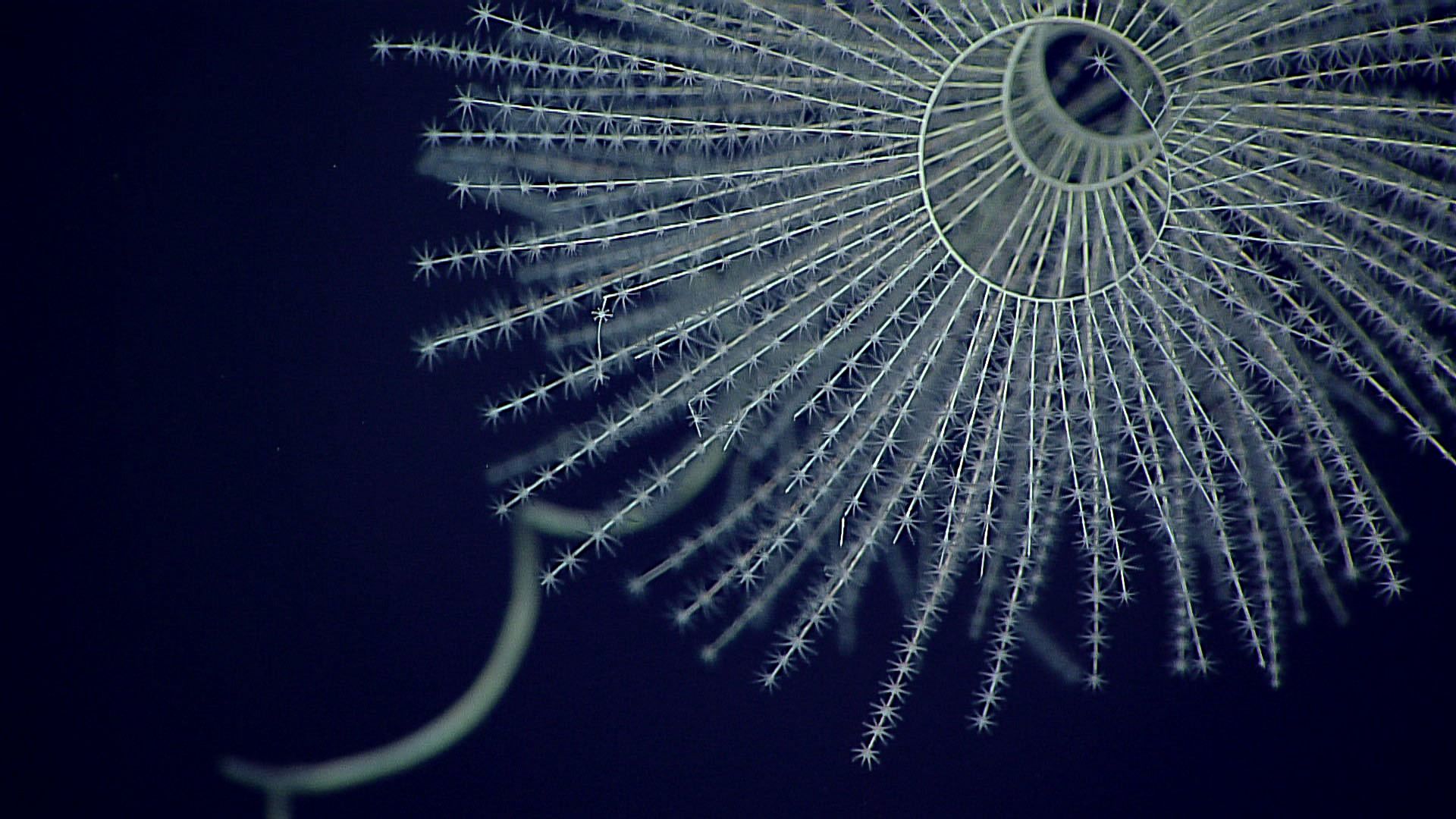

Iridogorgia magnispiralis. Photo: NOAA Office of Ocean Exploration and Research, Deepwater Wonders of Wake

Bioluminescence may have evolved in a group of marine invertebrates 540 million years ago — 300 million years earlier than previously thought, according to a new study.

Why it matters: The findings could help scientists figure out where, when and how the ability to produce light from chemical reactions emerged.

- "Nobody quite knows why it first evolved in animals," Andrea Quattrini, curator of corals at the Smithsonian's National Museum of Natural History and a co-author of the study, said in a statement.

The big picture: Bioluminescence has evolved roughly 100 times in nature, helping to give a range of animals the ability to camouflage themselves, hunt, communicate and court.

- Scientists previously thought it first evolved in ostracods — a class of small marine crustaceans — about 267 million years ago.

What they found: Members of the team had previously used genetic data from 185 species of octocorals (a class of 3,000 species, many of which are bioluminescent) to map their evolutionary relationships.

- In the new study, they placed two octocoral fossils of known age on that tree of life to determine when octocoral lineages diverged, then labeled the branches that include bioluminescent species, the researchers write this week in the journal Proceedings of the Royal Society B.

- Using different statistical models, they determined the probability that a common ancestor on the tree was bioluminescent.

- "The more living species with the shared trait, the higher the probability that as you move back in time that those ancestors likely had that trait as well," Quattrini said.

They found bioluminescence first evolved in a common ancestor of octocorals about 540 million years ago.

What's next: The researchers want to study more octocoral species, using a genetic test to determine whether each is bioluminescent, and pin down when octocoral lineages lost the trait.

- That could help them home in on the environmental factors that contribute to bioluminescence and its function.

Big thanks to managing editor Scott Rosenberg and Carolyn DiPaolo for copy editing this newsletter.
