Axios Science

August 22, 2024

Thanks for reading Axios Science. This week's newsletter is 1,668 words, about a 6.5-minute read.

- Send your feedback to me at [email protected].

1 big thing: Shedding light on AI's black box

Scientists are opening generative AI's black box and beginning to understand the models' inner workings.

Why it matters: The prospect of harnessing generative AI to make decisions and perform tasks is pushing researchers to better understand how AI systems work — and how they might be controlled.

- "We can't base our entire understanding of [large language models] on their inputs and outputs alone," says Marissa Connor, a machine learning researcher at the Software Engineering Institute at Carnegie Mellon University.

- "If you're relying on AI models to work in high-impact situations — like diagnosing medical conditions — then it is important to understand why they have a specific output."

Catch up quick: Unlike computer programs that use a set of rules to produce the same output each time they are given the same input, generative AI models find patterns in vast amounts of data and produce multiple possible answers from the same input.

- The internal mechanics of how an AI model arrives at those answers aren't visible, leading many researchers to describe them as "black box" systems.

Zoom in: One way AI researchers are trying to understand how models work is by looking at the combinations of artificial neurons that are activated in an AI model's neural network when a user enters an input.

- These combinations, referred to as "features," relate to different places, people, objects and concepts.

- Researchers at Anthropic used this method to map a layer of the neural network inside its Claude Sonnet model and identified different features for people (Albert Einstein, for example) or concepts such as "inner conflict."

- They found that some features are located near related terms: For example, the "inner conflict" feature is near features related to relationship breakups, conflicting allegiances and the notion of a catch-22.

- When the researchers manipulated features, the model's responses changed, opening up the possibility of using features to steer a model's behavior.

OpenAI similarly looked at a layer near the end of its GPT-4 network and found 16 million features, which are "akin to the small set of concepts a person might have in mind when reasoning about a situation," the company said in a post about the work.

Yes, but: The papers from OpenAI and Anthropic acknowledge it is early days for the work, especially for how it might apply to AI safety.

- While the research looks at larger language models than previous work, it examines just a slice of these massive models and captures a fraction of the concepts represented in a model's billions of neurons activated across a network's many layers.

The latest: Google DeepMind tried to tackle that limitation in its recent release of Gemma Scope, a tool that looks across all of the layers in a version of the company's Gemma model, covering 30 million features.

The big picture: The unknowns about what happens in a large language model between something being put in and something coming out echo observations in other areas of science where there is an "inexplicable middle," says Peter Lee, president of Microsoft Research.

- In biology, there's an understanding of DNA — including the fundamental physics underlying the molecule's chemistry — and descriptions of the behaviors of animals, microbes, plants and people.

- But in between are some of biology's biggest and most complicated questions, including how genetic, molecular and environmental processes shape a cell's development.

"My claim would be generative AI has created for scientists another example of that kind of problem," Lee says.

2. A call for AI biosecurity rules

A group of experts is calling for the U.S. and other countries to pass legislation and set regulations to try to limit the risks posed by advanced AI models being applied to biology.

Why it matters: AI models trained on biological data have double-edged potential to help scientists design new molecules and vaccines but also to possibly be used to create new or enhanced viruses.

Where it stands: Recent research from OpenAI, RAND and others argues today's large language models don't increase the risk of a bioweapon being created. The data to train them is limited and their predictions have to be experimentally validated.

- There's an impression today that "producing biological weapons with all of the information that AI can provide is easy, straightforward," said Sonia Ben Ouagrham-Gormley, an associate professor and deputy director of the Biodefense Graduate Program at George Mason University, at a Center for a New American Security event yesterday.

- But "producing biological weapons is very complex, very complicated."

Yes, but: Many researchers say the risk is likely to increase as AI models improve.

- "I think we need to listen to the AI developers about the potential capabilities of the next generation," Anita Cicero, deputy director of the Johns Hopkins Center for Health Security, said at the event.

What they're saying: AI developers committing to evaluate models is "important but cannot stand alone," Cicero and her co-authors write today in the journal Science.

- They propose that government regulations start by requiring evaluation, before release, of models trained with large computational resources on large amounts of biological data and of models trained on sensitive biological data.

- That could focus officials on evaluating "the models that pose the greatest risks without unduly hampering academic freedom," they write.

- They also call for legislation requiring the providers of synthetic nucleic acids — which turn genetic sequence information into physical molecules — to screen their customers and their orders. Beginning in October, federally funded researchers will be required to get their synthetic nucleic acids from providers who do just that.

Friction point: Ouagrham-Gormley argued at the CNAS event that before AI biological models are regulated "we absolutely need to better understand the capability itself, and then that will allow us to assess better the risks associated with those."

Between the lines: A lot of work developing AI biological models is done in the private sector.

- "Government AI biosecurity policies should therefore apply to advanced biological models that pose potential high-consequence threats, whether or not they were developed with federal funding assistance," the researchers write in Science.

The big picture: "It's really incumbent on the U.S. and other countries that are in leading positions [in the field] to be setting up their own governance systems," Tom Inglesby, director of the Johns Hopkins Center for Health Security, told me.

3. Congress probes pharma work with Chinese military

A bipartisan group of House lawmakers is scrutinizing hundreds of clinical trials they say U.S. drug companies conducted with medical centers connected to China's military over the last decade, Axios' Maya Goldman writes.

Why it matters: An Aug. 19 letter the group sent to Food and Drug Administration commissioner Robert Califf about the collaborations broadens the scope of congressional inquiries into Beijing's role in drug development ahead of an anticipated vote on banning select Chinese biotech companies from doing work in the U.S.

- Among the concerns is whether Beijing is gaining access to U.S. intellectual property as the nations compete on biotechnology, and whether the FDA is doing enough to look out for national security interests.

State of play: The group pointed to an ongoing trial done with a People's Liberation Army-affiliated hospital on Eli Lilly's Alzheimer's drug donanemab, which is sold under the brand name Kisunla, and a past study of Pfizer's axitinib, sold as Inlyta for treating kidney cancer.

- Drawing from information on a federal clinical trials website, the lawmakers noted research is also taking place in Xinjiang, a region that's home to millions of Uyghurs and other ethnic minorities where the Chinese government has launched a campaign of assimilation that's included human rights abuses.

- "[W]e believe that U.S. biopharmaceutical entities could be unintentionally profiting from the data derived from clinical trials during which the CCP forced victim patients to participate," wrote Reps. John Moolenaar (R-Mich.), Raja Krishnamoorthi (D-Ill.), Anna Eshoo (D-Calif.) and Neal Dunn (R-Fla.).

- The lawmakers asked Califf whether the FDA has reviewed the clinical trials or inspected the facilities in question, and whether the agency sent notices to companies about the collaborations.

What they're saying: The FDA received the letter and will respond directly to the lawmakers, a spokesperson told Axios.

- Eli Lilly said it conducts clinical trials around the world to ensure diversity in research and to increase access to its medicines. A spokesperson added the company is committed to IP protections and screens its research partners.

- Pfizer did not immediately respond to a request for comment.

4. Worthy of your time

First biolab in South America for studying world's deadliest viruses is set to open (Meghie Rodrigues — Nature)

Astrology shown to be no better than random guessing (Tom Leslie — New Scientist)

At the Salton Sea, uncovering the culprit of lung disease (Fletcher Reveley — Undark)

5. Something wondrous

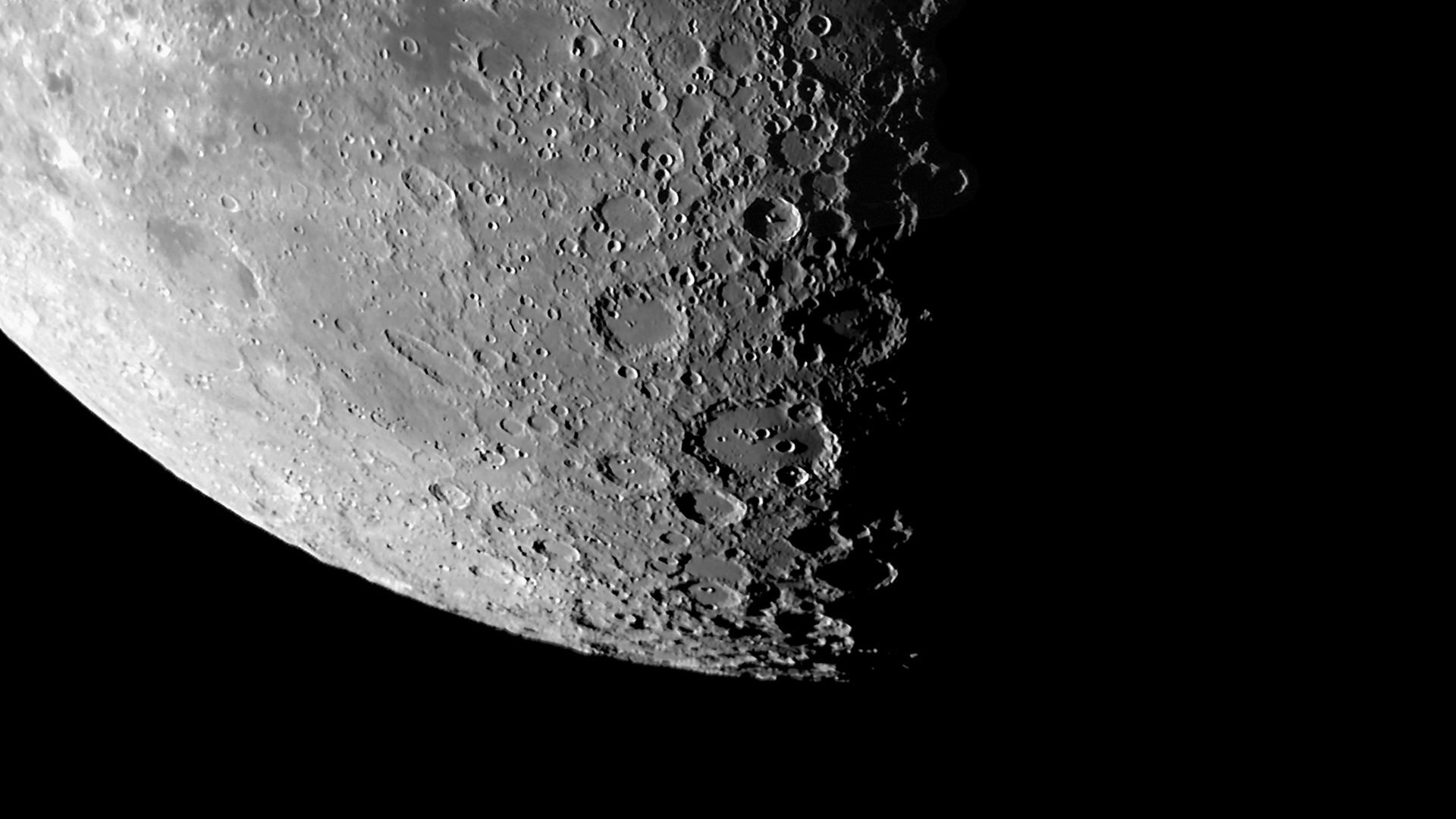

Soil measurements made at the Moon's southern pole are bolstering the hypothesis that the lunar surface was once an ocean of magma, scientists reported this week.

- The data from India's Chandrayaan-3 mission represents the first measurements made at the Moon's southern pole.

What they found: The Pragyan rover traveled 103 meters (about 340 feet) along the lunar south pole, taking scientific measurements at 23 different stops.

- The rover analyzed the lunar regolith, or outermost layer of soil, using an X-ray spectrometer, and the team at the Physical Research Laboratory in Ahmedabad found the "lunar terrain in this region is fairly uniform and primarily composed of ferroan anorthosite (FAN)," they write in Nature.

The big picture: The lunar magma ocean (LMO) hypothesis suggests there was a layer of molten rock on the Moon's surface for tens or hundreds of millions of years after it formed about 4.5 billion years ago.

- The lunar interior then formed when the heavy minerals olivine and pyroxenes sank, leaving lighter minerals — including FAN — that became the Moon's crust.

- The chemical composition of the regolith analyzed at the southern pole region matches that found along the lunar equator and other regions, providing support for the LMO hypothesis.

- The "measurements serve as the first ground truth in the south polar highlands and probably play a key role in the overall understanding of the origin and evolution of the Moon," the researchers write.

Big thanks to managing editor Scott Rosenberg and copy editor Jay Bennett.
