Axios Future

February 21, 2019

Any stories we should be chasing? Hit reply to this email or message me at [email protected]. Kaveh Waddell is at [email protected] and Erica Pandey at [email protected].

Okay, let's start with ...

1 big thing: AI and the possible bad guys

Illustration: Sarah Grillo/Axios

In the U.S. and Europe, Big Tech is under fire — hit with big fines and the threat of stiff regulation — for failing to thwart the profound consequences of its inventions, including distorted elections, divided societies, invaded privacy and sometimes deadly violence.

Kaveh writes: Now, artificial intelligence researchers, facing potentially adverse consequences from their own technology, are seeking to avoid being ensnared by the same "techlash."

Driving the news: AI researchers are working to limit dangerous byproducts of their work, like race- or gender-biased systems and supercharged fake news.

- But the effort has partly backfired into a controversy of its own.

What's happening: As we reported, OpenAI, a prominent research organization, unveiled a computer program last week that can generate prose that sounds human-written.

- OpenAI described the feat and allowed reporters to test it out (as we did), but said it would withhold the computer code.

- The group said it was attempting to establish a new norm around disclosing potentially dangerous inventions. To prevent misuse of these inventions, researchers would continue their work but keep some advances under wraps in the laboratory.

- In the case of its own computer program, OpenAI said it feared that somebody could effectively use it as a weapon for mass-producing fake news.

- This is the first known time a major research outfit has cited safety as a reason to keep AI work secret.

But the move met massive blowback: AI researchers accused the group of pulling off a media stunt, stirring up fear and hype, and unnecessarily holding back an important research advance.

Why it matters: Against the backdrop of the techlash, we're seeing a messy debate play out around an urgent question: What to do with increasingly powerful "dual-use" technologies — AI that can be used for good or for ill.

- The outcome will determine how technology that could cause widespread harm will — or won't — be released into the world.

- "None of us have any consensus on what we're doing when it comes to responsible disclosure, dual use, or how to interact with the media," Stephen Merity, a prominent AI researcher, tweeted. "This should be concerning for us all, in and out of the field."

Details: OpenAI says its partial disclosure was an experiment. In a conversation with two top AI researchers from Facebook, OpenAI's Dario Amodei held up social media companies as a cautionary tale:

"The people designing Twitter, Facebook, and other seemingly innocuous platforms didn't consider that they might be changing the nature of discourse and information in a democracy … and now we're paying the price for that with changes to the world order."

Several researchers praised OpenAI's decision to withhold code as a vital step toward rethinking norms. But other academic researchers came down hard.

- While the new program is often impressive, its creators acknowledge that they simply used a scaled-up version of previous work. It is therefore very likely that someone could replicate the feat at relatively low cost.

- OpenAI says that's why it sounded the alarm. But critics say that makes the delay meaningless.

2. The new clash of the retail titans

Illustration: Sarah Grillo/Axios

Walmart has improbably emerged as a powerful foil to Amazon's retail dominance, as we've reported. The latest front in this clash of the titans is autonomous vehicles — and their potential to upend the future of delivery.

Erica and Axios' autonomous driving reporter Joann Muller write: Shoppers have become accustomed to fast, often free delivery. AVs would be able to deliver goods more quickly and at even lower cost to the shipper, say KPMG researchers.

- That could increase pressure on brick-and-mortar retailers and possibly trigger even more online shopping.

- Key stat: McKinsey predicts that autonomous deliveries will slash retailers’ shipping costs by 40%.

The big picture: We don't know exactly when and how AVs will be deployed, but the country's biggest retailers are making large investments in the field. Griffin Carlborg, an analyst at Gartner L2, says, “They’re getting themselves ready.”

- In Arizona, cars from Waymo, the AV arm of Google parent Alphabet, are picking up shoppers and dropping them off at Walmart stores to collect groceries. Also in Arizona, Udelv is delivering packages for Walmart in driverless cars.

- In Miami, Ford is working with Walmart to courier groceries in AVs.

Amazon is in the battle, too: It formed a small team in 2016 to investigate driverless technology, and early last year it partnered with Toyota to explore AV deliveries. It has also been spotted using self-driving trucks to haul cargo in Arizona.

- In recent weeks, Amazon has doubled down with big investments in two AV companies, Aurora and Rivian.

What they're saying:

- "We don't want to act like everyone is going to be zipping around in autonomous vehicles tomorrow, but we do believe they'll be important in the future of delivery," Molly Blakeman, a Walmart spokesperson, tells Axios. "When that happens we want to be ahead of the curve."

3. The U.K. ups its AI play

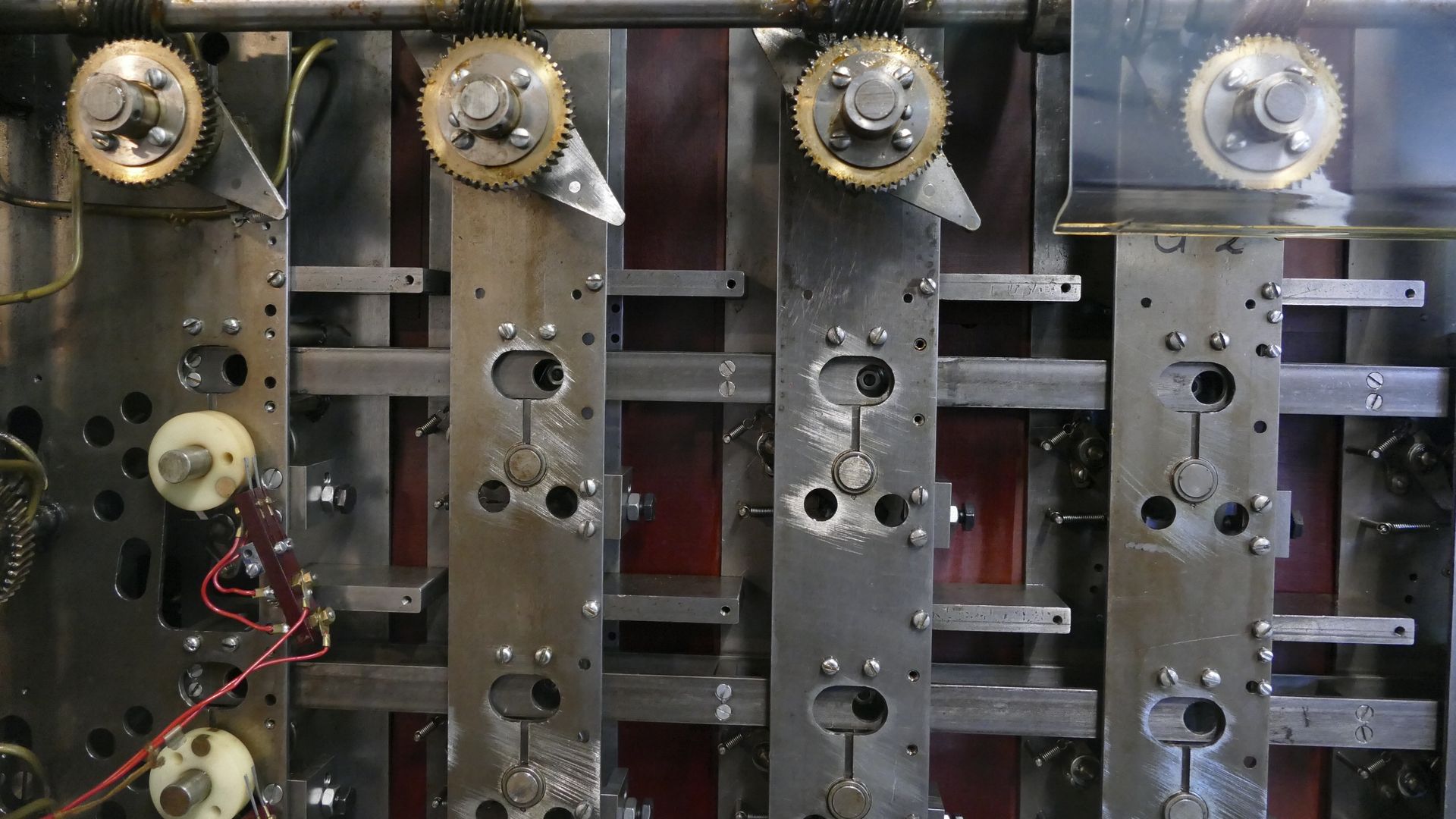

An Enigma codebreaker like the one developed by Alan Turing. Photo: Universal History Archive/UIG/Getty

Seeking to play in the global technology race dominated by the U.S. and China, the U.K. says it will pay for about 1,200 students to earn master's degrees and Ph.D.s in AI at its universities, and will fund the salaries of 3–5 fellows to join the nation's top government AI lab.

Kaveh writes: Given the paucity of AI talent, 1,200 is a highly ambitious target, a number that, if fully realized, could go far toward making the U.K. competitive in the global race.

- The U.K.'s top universities and several high-profile companies have kept it in the running alongside the U.S. and China. But many U.K.-trained researchers end up working for American tech giants, such as Google.

- "Well-known Big Tech companies have been paying high salaries to attract people out of academia," says Adrian Weller, director of the AI program at the Alan Turing Institute, the U.K.'s national institute for data science and AI. "This is an attempt to redress the balance."

By the numbers:

- The U.K. government says it will pay up to £110 million — about $144 million — for the training programs and that private companies will chip in millions more.

- The money will fund the education of 1,000 Ph.D. students and up to 200 master's students.

- The Turing Institute will contribute up to $114,000 a year toward fellows' salaries. This is a far cry from the hundreds of thousands in annual salary paid by big companies and slightly lower than what tenured faculty jobs in academia pay.

Go deeper: Today's announcement is part of the U.K.'s national AI plan, announced last year, which includes the equivalent of $1.3 billion in funding.

4. Worthy of your time

China offers to wipe out its U.S. trade surplus (Gavyn Davies — FT)

Women are better educated, but are working less (Stef Kight — Axios)

The downside of dollar stores (Rachel Siegel — WP)

China ends advanced battery subsidies (Takashi Kawakami — Nikkei Asian Review)

Russians take 19 minutes to hack a network (Patrick Tucker — Defense One)

5. 1 sci-fi thing: 119 years of unnerving stories

French postcard, 1910. Photo: Transcendental Graphics/Getty

Tech hands have often cut their teeth on science fiction, finding inspiration (and sometimes a visionary glance at what's coming next) in paperbacks passed hand to hand. Elon Musk, for instance, famously recommends Isaac Asimov's Foundation series.

Now, a group of AI researchers at Stanford have produced a gourmet selection for the sci-fi fanatic — what they call the Science Fiction Concept Corpus, writes Lauren Murrow at Wired.

- It comprises more than 2,600 works published since 1900, all of them divided into 108 sci-fi themes, including time travel, space travel, genetic enhancement and social issues.

- The program will suggest a title once you identify an area of interest, what the Stanford group calls "intelligent browsing."
