Axios AI+

March 12, 2025

I'm sad my Stanford class with Tara VanDerveer has ended, but we went out with a bang. Last night's guest was Golden State Warriors head coach Steve Kerr. Today's AI+ is 1,180 words, a 4.5-minute read.

1 big thing: Google looks to give AI arms and legs

Google today announced it is bringing the broad knowledge of its Gemini large language models into the world of robotics.

Why it matters: The move could pave the way for robots that are vastly more versatile, but also opens up whole new categories of risks as AI systems take on physical capabilities.

Driving the news: Google announced two new Gemini Robotics models, pairing its Gemini 2.0 AI with robots capable of physical action.

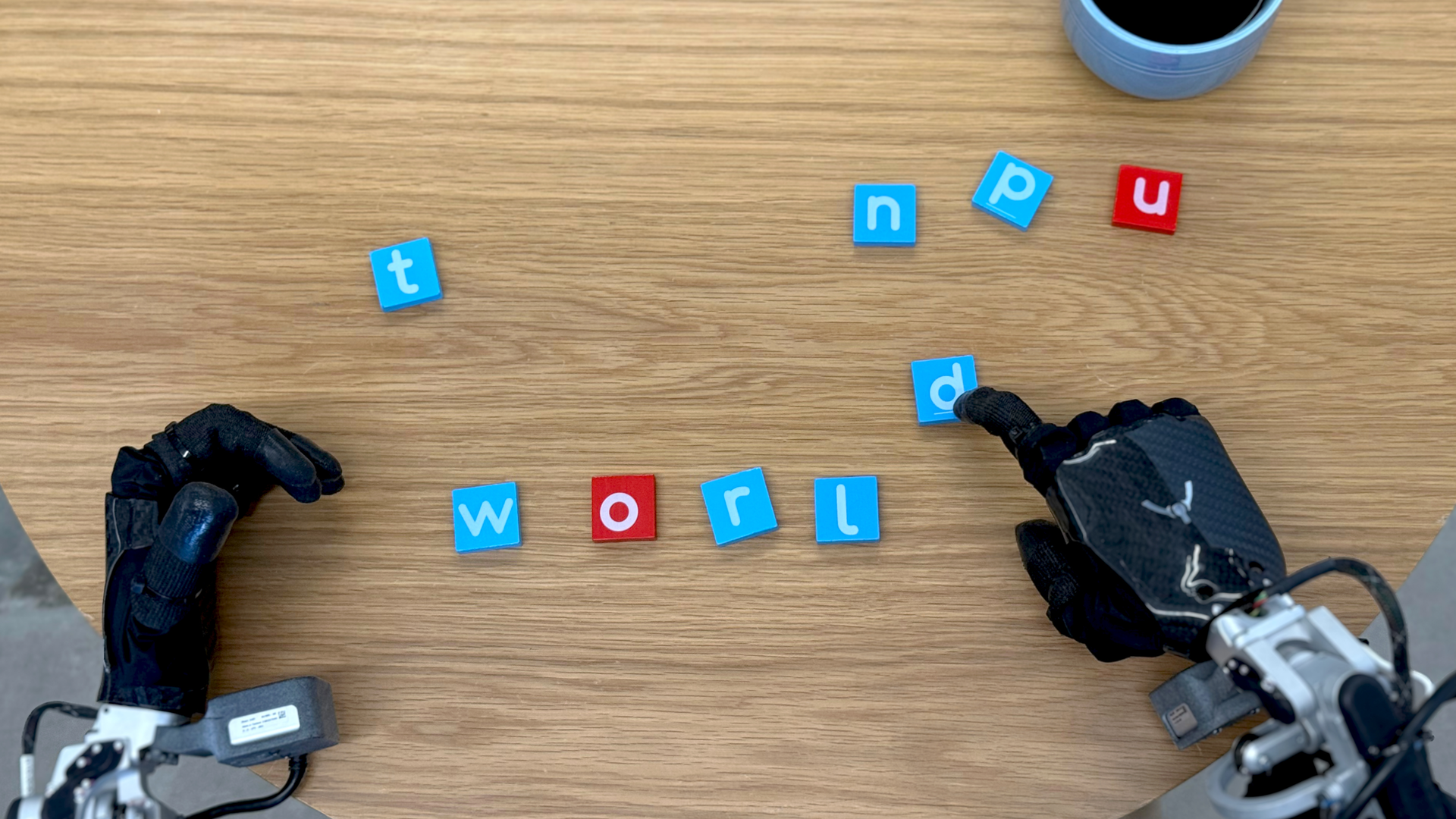

- In video demos, Google showed robots handling an array of tasks.

- In one demo, a robot was able to understand and execute a command to dunk a miniature basketball in a toy hoop — a task for which it had not been trained. In another it was told to put fruit into a clear bowl and continued to revise its approach as a person moved the bowl around.

- Google said it has a partnership with Texas developer Apptronik to bring Gemini to Apptronik's humanoid robots. It's also working with several robotics companies as early "trusted testers," including Agile Robots, Agility Robotics, Enchanted Tools and Boston Dynamics, maker of the Spot robot dog.

- "In order for you to build really useful robots, they need to understand you," Google DeepMind senior director Carolina Parada told reporters during a briefing. "They need to understand the world around them, and then they need to be able to take safe action in a way that is general, interactive and dexterous."

The big picture: Google isn't the first to combine an LLM with robotics, but prior efforts have been far more limited.

- Moxie, the ill-fated kids' robot, for example, paired an LLM with a basic robot, but it couldn't do physical tasks.

- Others are also pursuing the intersection of robotics and AI, including OpenAI and World Labs, the startup run by Stanford professor Fei-Fei Li.

Between the lines: Early chatbots had a built-in safety limit; they could talk, but not act. That protection will vanish as AI gains the ability to make decisions and take physical actions — introducing new risks.

- This transformation has already begun with the move to give AI agentic powers — that is, the ability to take action without human intervention.

- Combining AI with the ability to interact with the physical world means robots could take actions for which they were not specifically programmed. That could be especially concerning if applied to areas like policing and the military.

- When asked about military use, Google stressed it was not building for that market, but rather for general-purpose use.

The other side: Google says it is taking a multi-layered approach to safety, one that includes the content protections already in Gemini, industry-standard rules for physical robots and a "constitutional AI" governing the system's overall behavior.

- Google also says that adding an AI brain makes robots more versatile and useful.

- "One of the big challenges in robotics — and a reason why you don't see useful robots everywhere — is that robots typically perform well in scenarios they've experienced before, but they really fail to generalize in unseen, new, unfamiliar scenarios," Google DeepMind principal scientist Kanishka Rao told reporters.

My thought bubble: This is a dangerous inflection point, and giving a computer limbs is not a step to be taken lightly. If this were "Terminator 2," it would be the moment the heroes go back in time to shut it all down.

2. Interview: John Roese on Dell's federal AI push

John Roese, Dell's global chief technology officer and chief AI officer, told Axios during a Washington visit Tuesday that the federal government's AI adoption is poised to speed up, in part because of success stories in business.

Why it matters: "Nobody in the government wants to be the bleeding-edge, first adopter of a technology — that's pretty risky," he said.

Roese said his message to D.C. is: "Come on in — you're not the first to move. It's early, but you don't have to figure this out by yourself. I can show you with confidence this is actually achievable. How you do it will probably be a bit different; your data will be different."

The big picture: Michael Dell, the company's chair and CEO, told Bruce Mehlman during an event yesterday at the National Press Club that shunning AI tools soon will be like having a phone without WiFi.

- Dell Technologies says in new recommendations for Washington that AI "will require substantial and appropriate investment focus to help address chip resourcing and increased compute power, data storage and energy efficiency needs. Public and private sector collaboration provides the optimum basis from which to move forward."

Looking ahead to agentic AI, Roese told us: "Imagine a world where ... the agent that manages your finances talks to the agent at the IRS and your taxes just happen continuously."

- "The level of efficiency goes up, the effectiveness goes up, the actual outcomes improve, and the friction of humans having to interact with bad processes and complex environments just disappears. ... Compliance probably goes up, too."

Behind the scenes: Roese said Dell decided it needs to be "Customer Zero," since there's no playbook for transforming an organization using generative AI.

- "The only way that we would be able to properly inform the strategy and have any credibility with customers who are also on this journey [was] to be a first and visible aggressive adopter of the technology," he said.

A chief AI officer needs to be "deeply involved in the actual technology evolution that's occurring, and can translate that and understand it is actually a strategic asset to make your company better," Roese said.

- "You're literally changing the way work happens in an organization," he added. "If you try to do that bottom-up through consensus building, good luck! It will take you a thousand years to get to a consensus. You have to be top-down. You have to make decisions."

Roese, who holds about 30 patents, said he has "never met a problem that wasn't interesting and worth solving." He said the Dell approach is: "Do it fast; fail forward faster; have a high-risk profile."

- "I go into a meeting, I don't even have to tell people, anymore. If we're debating something, they all realize: If we don't make the decision this week, John's going to make the decision. So we get moving, because we don't have time to waste."

3. Training data

- As part of its push toward AI agents, OpenAI released a new tool that allows outside developers to make use of web-surfing capabilities. (Ars Technica)

- Google owns about 14% of Anthropic, but holds no board seats or voting rights, per legal filings. (NYT)

- AI systems designed to predict the likelihood of a hospitalized patient dying largely aren't detecting worsening health conditions, a new study found. (Axios)

- The University of South Florida announced the first college dedicated to AI and cybersecurity. (Axios Tampa Bay)

4. + This

I just learned there is an official sport called "roofball" where you throw a ball on a roof and catch it. I played a similar game as a kid, but I just called it "being an only kid with no friends in the neighborhood."

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing it.
