Axios AI+

August 29, 2025

We're off Monday for Labor Day, back in your inbox on Tuesday. Today's AI+ is 1,254 words, a 4.5-minute read.

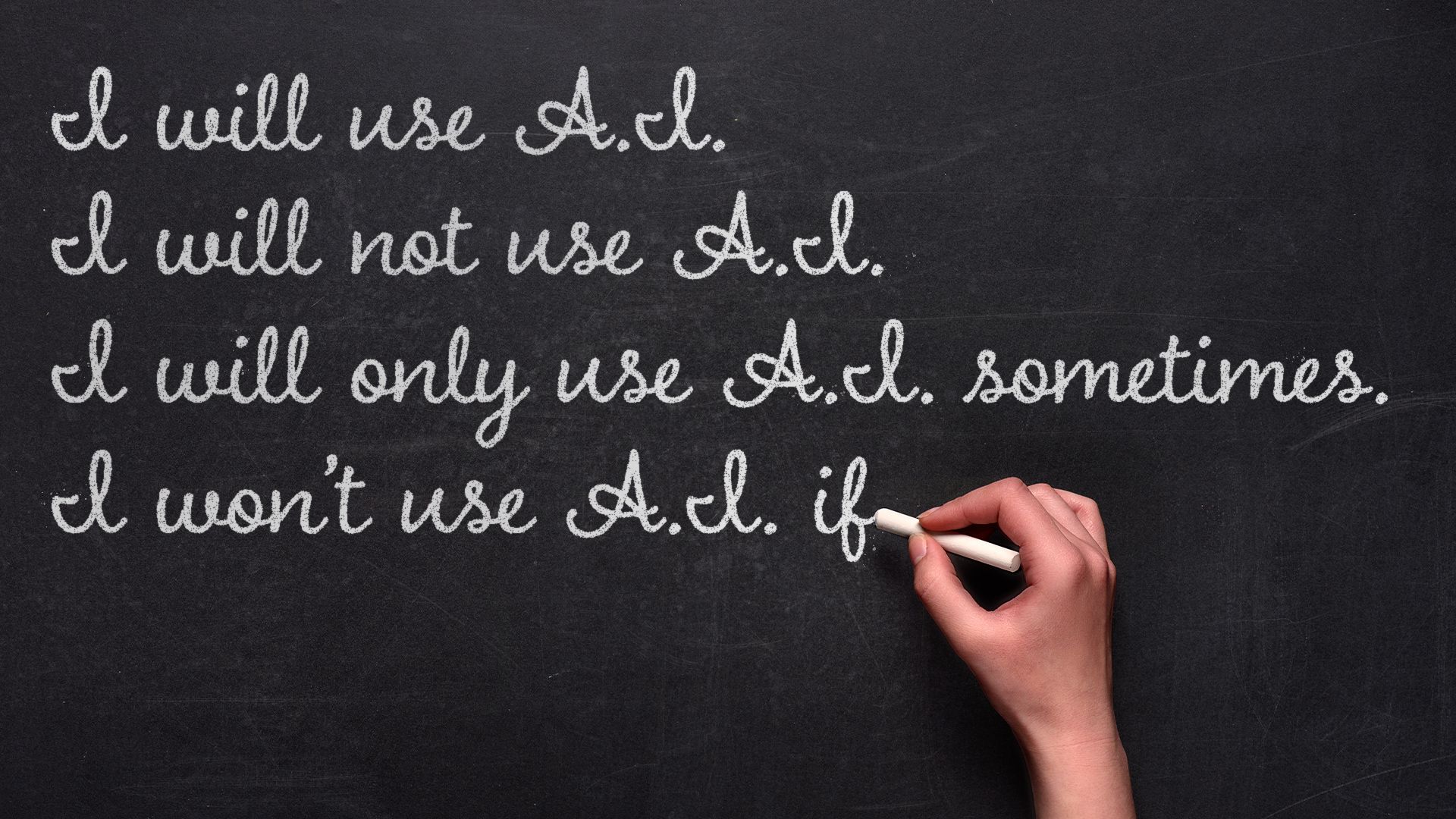

1 big thing: Schools' perplexing AI policies

Educators, students and parents are heading into another year of generative AI trial and error in the classroom.

Why it matters: Murky policies risk widening inequities and undermining trust, even as most educators agree that AI is here to stay.

By the numbers: The Trump administration's push to expand AI in schools, coupled with an ed tech gold rush, has districts revising rules in real time.

- 89% of high school and college students say they use AI technology for school, per recent polling from learning platform Quizlet. That's up from 77% in 2024.

- The average teacher is using around 150 different ed tech tools in a given school year, Arman Jaffer, founder of classroom AI assistant Brisk Teaching, told Axios.

Between the lines: This is the fourth school year that students have had access to ChatGPT on personal devices, but many districts still don't allow it on school devices.

- OpenAI's policies forbid those under 13 from using ChatGPT and require users 13 to 18 to get parent or guardian permission. (Anthropic's Claude chatbot, which is popular among college students and educators, requires users to be 18 or older.)

- Regardless of policies and rules, there's no way to stop kids from using ChatGPT on their personal devices, many educators told Axios.

The big picture: Parents and students still aren't sure whether using AI tools counts as cheating or digital literacy.

- It can be both, says David Touretzky, a Carnegie Mellon computer science professor and founder of AI4K12, an initiative to develop national guidelines for AI education.

- "Schools that are already stressed want to ban ChatGPT because they don't know how to cope with it right now," Touretzky told Axios in an email.

- Districts with more resources can experiment with new tools — but some students everywhere will still try to cheat if they can get away with it, Touretzky said.

The intrigue: Sal Khan, founder of Khan Academy and the AI tutor and teaching assistant Khanmigo, told Axios that teachers falsely accusing students of cheating is a growing problem.

- Khan said a family member was writing assignments and then using AI to introduce errors into their work so that their teacher's AI detector wouldn't flag it as AI-generated.

Reality check: Rachel Yurk, chief information and technology officer at the Pewaukee School District in Wisconsin, told Axios her district blocks AI tools on all school-owned student devices, but it's not pretending the tools don't exist.

- Since 2022 the district has been teaching students about chatbots — including bias, privacy, accuracy, emotional dependency and other problems with generative AI.

What they're saying: Educators know that students are using chatbots to complete homework. "We're not putting our heads in the sand," Katherine Goyette, computer science coordinator at the California Department of Education, told Axios.

- Calling it cheating, Yurk says, misunderstands the problem that her district is trying to solve through conversations about responsible use and academic integrity.

- Cheating is taking someone else's work and calling it your own, Yurk says, which gets complicated when you're talking about AI: "There's no person behind it, right?"

The solution for many schools will be to change the way teachers assess student proficiency, opting for more in-class writing assignments, oral assessments, class discussions and group work.

- These techniques aren't new, Clay Shirky wrote in a recent New York Times op-ed: "They are simply a return to an older, more relational model of higher education."

- "Educators at all levels are having to rethink how we should create assignments and how we can accurately measure student learning," Touretzky says. "We'll figure it out eventually," but "banning LLMs is not a realistic solution."

What we're watching: Administrators say that the tech is moving too fast to cement rules into place.

- Ednovate Schools, a network of independent public charter schools in California, has been honing its AI policy for years, Lanira Murphy, Ednovate's senior director of academics, tells Axios.

- Murphy says the AI policy is flexible, focusing on conversation and learning rather than strict punishment for misusing tools.

- The worst approach, Murphy says, is to have no policy at all. "If my child uses ChatGPT and you didn't have a policy, but then you punish them, we have a problem."

2. Google's new Pixels showcase AI on the phone

Google's new Pixel 10 lineup leans heavily on AI, with tools for sharper photos, real-time translation in your own voice and the beginnings of a truly personal AI assistant.

Why it matters: Smartphones haven't changed much in the last few years, but AI offers the potential to reshape the phones we rely on daily.

The big picture: AI shows up across the Pixel 10 experience and notably in the functions you use most — the camera, phone and messages.

- Apple laid out a similar vision last year for how AI could help the smartphone experience. But it has struggled to deliver and has delayed its upgraded Siri, which is central to its ambitions.

- Google's AI features — significantly more ambitious than last year's enhancements — also seem better suited for everyday use than those served up by other Android phone makers.

Zoom in: I've been using a loaner Pixel 10 Pro for a little while. This review focuses on the AI features, specifically three key areas.

Camera

Google already equipped its phones with an impressive array of lenses and features, but this year it uses AI to make the camera both more capable and easier to use, as I experienced during a local photo safari at the California Academy of Sciences.

- A built-in "camera coach" offers multi-step suggestions, but the fish and butterflies I was trying to capture weren't keen on hanging around. That said, I left with an album full of great photos.

- A super-long zoom lets you get far closer than the 5x optical zoom allows, with AI helping to fill in details that might otherwise be blurry. In practice, the results were mixed. It worked quite well on a long-distance shot of Sutro Tower but struggled with some of my nature shots, though it did allow me to capture really nice bird close-ups.

- Meanwhile, in the Google Photos app, you can now describe the edits you want to make to a photo rather than making the edits yourself. This lets you type or say things like "zoom in and fix the lighting" or even do broader AI transformations, such as making the Moon the background.

- Editing photos with plain requests could set the stage for a radical shift in how we control our software more broadly.

3. Training data

- A tech industry veteran in Greenwich, Connecticut, apparently killed his mother earlier this month in what "appears to be the first documented murder involving a troubled person who had been engaging extensively with an AI chatbot." (Wall Street Journal)

- Microsoft, which relies on OpenAI for its largest AI models, released two of its most capable homegrown models to date, one for voice and the other for text. (Semafor)

- Elon Musk's xAI released a new AI model for agentic coding. (Reuters)

- Here's everything that Meta has gotten from the Trump administration since Mark Zuckerberg's rightward veer. (Platformer)

4. + This

I took a lot of fun pictures at the Academy of Sciences yesterday. But I think my favorite shot so far was this highly zoomed-in picture taken in Pacifica. This is the version with AI enhancements, though in this case it's not that different from the original.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing.
