Axios AI+

July 19, 2023

Ina here. Don't worry, I'm still getting used to the changes. But I'm really glad you are still here. Thanks for reading.

Today's AI+ is 1,176 words, a 4-minute read.

1 big thing: Meta's double-headed Llama

Illustration: Gabriella Turrisi/Axios

Meta policy chief Nick Clegg wants you to be impressed by the powers of its latest open source AI model, known as Llama 2 — but not so impressed that you worry about the havoc it could wreak in the wrong hands, Axios' Ryan Heath reports.

Why it matters: Meta has opened the new model to allow anyone to use it commercially for free — the prior version, released in February, was for research use only.

- Those using Llama 2 have to agree to an acceptable use policy, but once the code is out there, those rules could prove tough to enforce.

Driving the news: Meta sounded its trumpets Tuesday for the Llama 2 release, announcing partnerships with a variety of industry giants including Microsoft, Amazon and Qualcomm.

- Microsoft will be the preferred cloud partner, offering Llama 2 first on Azure, though Amazon also plans to offer it through AWS.

- Qualcomm, meanwhile, is working with Meta to ensure that Llama can run natively on phones and other devices rather than relying on the cloud.

Zoom out: Meta's open-source AI strategy aims to help the social giant catch up to the phenomenal popularity of OpenAI's ChatGPT.

Yes, but: Government experts worry that free, powerful AI models available for re-engineering could hasten the emergence of threats like genetically engineered bioweapons.

What they're saying: Clegg, Meta's global affairs president, used an Axios interview to talk down Llama's capabilities.

- While acknowledging that "you can't predict or litigate for all downstream uses" of AI, he argued Llama 2 could not be categorized alongside "frontier models," which are defined as highly capable models in risky fields.

- Meta is not aiming to create "all-singing, all-dancing artificial general intelligence," Clegg said, calling its Llama models "much, much dumber than that. They're just a textual predictive pattern recognition system."

Between the lines: Misinformation is widely viewed by experts as a top AI risk.

- Because Llama 2 is designed to run on devices as well as in the cloud, Meta may have a hard time holding users to its acceptable use policy.

- And Meta-owned Facebook and Instagram — like the rest of the world — are going to have to deal with whatever Llama spits out.

The big picture: Llama 2 is the latest of a number of projects that Meta has released via something akin to open source.

- Llama 2's release isn't technically open source, per the Open Source Initiative, but the fact that anyone can use it and access much of its code is likely to ensure rapid adoption and innovation around the model.

- Meta has tended to favor open-sourcing key technical projects, including PyTorch and its AI work, with more than 600 of its models now available for use on the Hugging Face platform.

- Clegg noted the company does not automatically open source models, and wrote last week that there are cases where closed models are necessary for safety.

What they're saying: Efforts to test how Llama 2 reacted to demands for sensitive material in fields such as nuclear, biological and chemical weapons led to "very marginal" issues, Clegg said, insisting these "could easily be mitigated."

- Meta will submit its Llama models to the Def Con hackathon in August for further stress testing.

2. Microsoft puts a price tag on AI for business

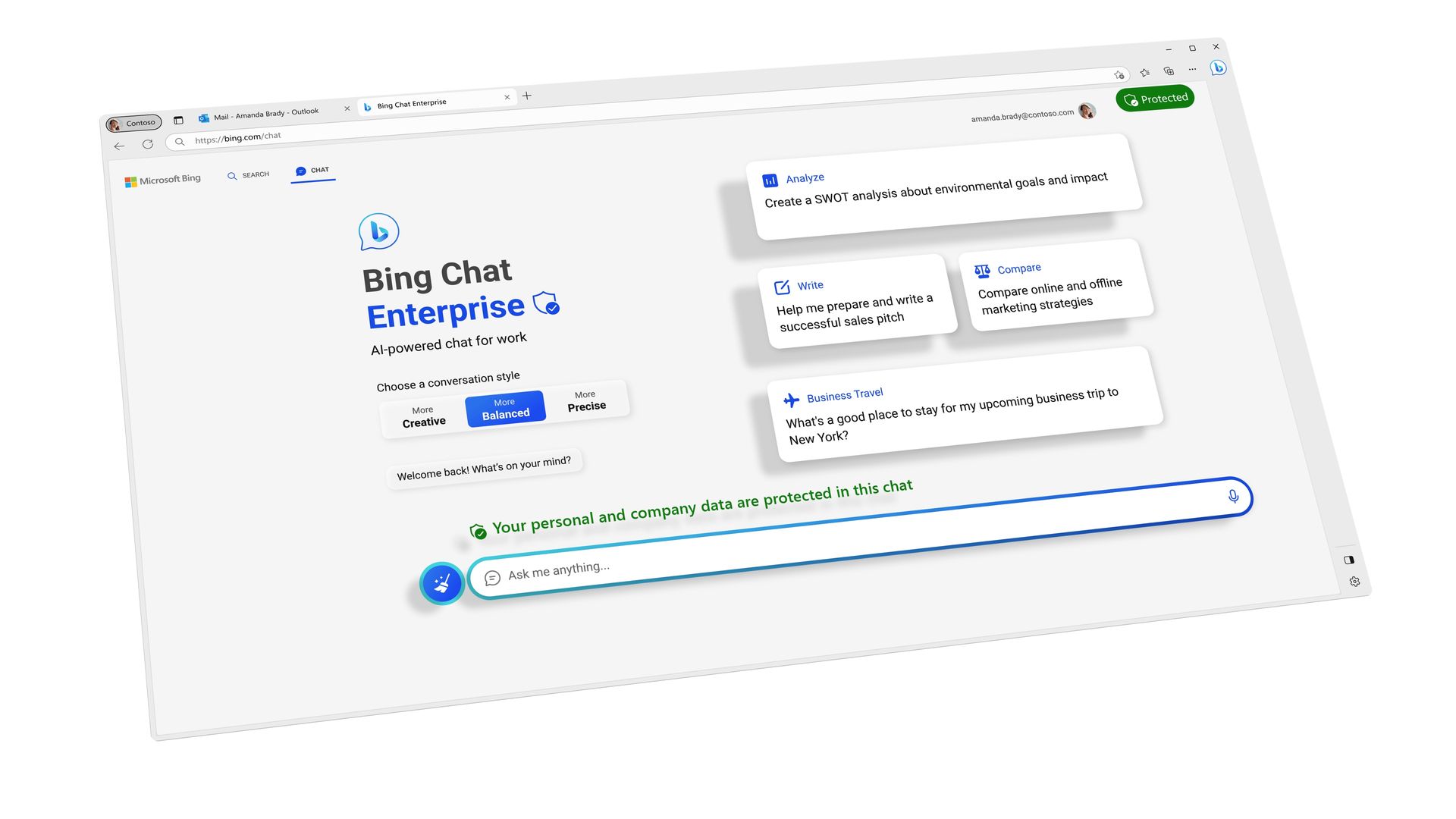

A screenshot of Microsoft's Bing Chat Enterprise. Image: Microsoft

Microsoft announced Tuesday it will charge $30 per user per month for businesses that want to use its AI-infused copilots to automate work in Office products such as Word, PowerPoint and Excel.

Why it matters: That will add up to a hefty chunk of change, representing the most significant new revenue opportunity for Microsoft's Office business since it switched to a subscription model.

Details: Microsoft announced the Microsoft 365 Copilot pricing at its Inspire partner conference on Tuesday, along with a business version of its GPT-4-powered Bing Chat, which will sell for $5 per user per month on its own and will also be included in some of the company's subscription bundles.

- Bing Chat Enterprise adds protections designed to ensure that confidential business data doesn't get leaked out into the world.

Between the lines: The Copilot pricing could bring Microsoft an additional $5 billion to $16 billion in revenue next year, Ivana Delevska, chief investment officer at asset manager Spear Invest, told Axios. Her revenue estimate assumes 5% to 16% of Office 365 users sign up for Copilot.

- The $30 monthly per-user price was higher than the $5 to $20 per user per month many analysts had expected, Delevska said.

- On the flip side, Delevska noted it also costs Microsoft a lot to power its AI copilots: on the order of $2 to $5 per user hour for the compute capacity needed to provide the service.

- "We do believe that this creates an opportunity for Microsoft, but it remains to be seen what value it will provide for its customers," Delevska said.

Yes, but: Generative AI services are pretty compute-heavy today, so there's considerable cost involved as well. Microsoft can draw on its existing Azure cloud computing infrastructure, which already provides AI services for OpenAI and others.

3. UN secretary general calls for global AI agency

UN Secretary-General António Guterres in May. Photo: Noushad Thekkayil/NurPhoto via Getty Images

UN Secretary-General António Guterres has endorsed creating a UN agency to deal with AI threats ranging from how AI might be used in weapons of mass destruction to AI's role in spreading conspiracy theories, Ryan reports.

- Guterres told a U.K.-organized briefing at the UN Security Council — the body's first-ever AI discussion — that the prospect of "malfunctioning AI" in a nuclear or biotech setting is "deeply alarming."

Driving the news: Guterres called for a legally binding global agreement, to be concluded by 2026, banning AI-powered, fully automated weapons of war.

What they're saying: "Look no further than social media. Tools and platforms that were designed to enhance human connection are now used to undermine elections, spread conspiracy theories, and incite hatred and violence," Guterres said.

- U.S. Ambassador to the UN Linda Thomas-Greenfield promised to work towards "consensus around common frameworks," which is notable given U.S.-China AI rivalry.

Context: The UN deploys AI to monitor conflict settings, has plans to use autonomous vehicles to help deliver food aid and has developed guidelines for ethical use of AI.

4. Training data

- The U.K.'s approach to AI regulation is coming under fire from the Ada Lovelace Institute, which offers 18 suggestions for how the government could strengthen its efforts. (TechCrunch)

- The Senate Judiciary Subcommittee on Privacy, Technology and the Law will hold a hearing next Tuesday exploring principles for AI regulation. Witnesses include Anthropic CEO Dario Amodei and computer science professor Yoshua Bengio, considered one of the "godfathers of AI."

- G/O Media, the publisher of Gizmodo, Jezebel and The Onion, plans to publish more AI-generated articles despite errors made during the first batch. (Vox)

- Thousands of authors have signed on to a letter calling for AI companies to get writers' consent and to offer compensation when using copyrighted works to train their engines. (TechCrunch)

- Tempo Automation, an ambitious startup that aimed to do small-batch hardware prototyping in San Francisco, has laid off most of its staff after failing to achieve profitability. (Axios/SFGate)

5. + This

Speaking of llamas spitting, check out this video. (Feel free to fast forward to 1:30 for the smart brevity version.)

Thanks to Scott Rosenberg for editing and Bryan McBournie for copy editing this newsletter.


Scoops on the AI revolution and transformative tech, from Ina Fried, Madison Mills, Ashley Gold and Maria Curi.