Axios AI+

May 21, 2025

Hello from Silicon Valley, where I am still digesting the myriad announcements Google made at its I/O developer conference. Stay tuned for more thoughts later this week. Meanwhile, today's AI+ is 1,221 words, a 4.5-minute read.

1 big thing: Google leaders see AGI arriving around 2030

So-called artificial general intelligence (AGI), widely understood to mean AI that matches or surpasses most human capabilities, is likely to arrive sometime around 2030, Google co-founder Sergey Brin and Google DeepMind CEO Demis Hassabis said yesterday.

Why it matters: Much of the AI industry now sees AGI as an inevitability, with predictions of its advent ranging from two years on the inside to 10 years on the outside, but there's little consensus on exactly what it will look like or how it will change our lives.

Brin made a surprise appearance at Google's I/O developer conference Tuesday, crashing an on-stage interview with Hassabis.

The big picture: While much of Google's developer conference focused on the here and now of AI, Brin and Hassabis focused on what it will take to make AGI a reality.

Asked whether scaling up today's AI models will be enough or whether new techniques will be needed, Hassabis said both are key ingredients.

- "You need to scale to the maximum the techniques that you know about and exploit them to the limit," Hassabis said during the on-stage interview with tech journalist Alex Kantrowitz. "And at the same time, you want to spend a bunch of effort on what's coming next."

- Brin guessed that algorithmic advances matter even more than increases in computing power. But, he added, "both of them are coming up now, so we're kind of getting the benefits of both."

Zoom in: Hassabis predicted the industry will probably need a couple more big breakthroughs to get to AGI, reiterating what he told Axios in December.

- However, he said the industry may already have achieved part of one such breakthrough in the form of the reasoning approaches that Google, OpenAI and others have unveiled in recent months.

- Reasoning models don't respond to prompts immediately but instead do more computing before they spit out an answer.

- "Like most of us, we get some benefit by thinking before we speak," Brin said — joking that it's something he often has to be reminded of.

Between the lines: Google detailed a couple of new approaches Tuesday that, while less flashy than some of the other AI features the company unveiled, hinted at other novel directions.

- Gemini Diffusion is a new text model that employs the diffusion approach typically used by image generators, "converting random noise into coherent text or code," per a Google blog post. The result, Google says, is a model that can generate text far faster than other approaches.

- The company also debuted a mode for its models called Deep Think, which works by pursuing multiple approaches to a problem and evaluating which is most promising.

What's next: On the timing of AGI, Hassabis and Brin were asked whether they thought it would arrive before or after 2030.

- Brin said he'd go with just before that date, while Hassabis chose just after.

- Hassabis joked that Brin, who is no longer part of Google's day-to-day management, only has to call for the advance to happen — while Hassabis, who oversees Google's far-flung AI efforts, has to figure out how to actually deliver it.

2. Google is putting more AI in more places

Most of Tuesday's Google I/O keynote focused on features shipping now or in the near future, rather than the longer-term future Hassabis and Brin predicted.

Why it matters: Google is aiming to prove that it can make its core products better through AI without displacing its highly lucrative advertising and search businesses.

Key announcements Google made Tuesday:

- It debuted Flow, a new AI filmmaking tool that draws on the company's latest video model, Veo 3, which adds audio capabilities. The company also announced Imagen 4, its latest image generator, which Google says is better at rendering text, among other improvements.

- Google said it would soon make its latest Gemini 2.5 Flash and Pro models broadly available. The new Deep Think feature is being released only to trusted testers because the company wants to do additional safety testing before a broader release.

- Google will make its AI Mode, a chat-like version of search, broadly available in the U.S., and it's updating the underlying model to use a custom version of Gemini 2.5.

- It's also beginning to allow customers to give large language models access to their personal data — starting with the ability to generate personalized smart replies to Gmail messages that draw on a user's email history. That feature will be available for paying subscribers this summer, Google CEO Sundar Pichai said.

- On the coding front, Google offered further details on Jules, its autonomous coding agent, which is now available in public beta. Microsoft and OpenAI have also announced new coding agents in recent days.

For those who want the broadest access to Google's AI models, the company is introducing Google AI Ultra, a $250-a-month service.

- The high-end subscription, an alternative to the standard $20-a-month plan, includes access to a number of Google's most powerful AI agents, models and services, as well as YouTube Premium and 30TB of cloud storage.

Yes, but: Many of Google's new features seem highly disruptive to the current economics of the web.

- Already publishers have said they are seeing a decline in traffic, and the conversational AI Mode, in particular, is likely to make that worse, since users who get their queries answered by AI won't click through to source websites.

- Also unclear is whether and how Google will be able to earn from AI-driven search the massive advertising revenue it gets from traditional search.

Go deeper: Google upgrades AI tools for shopping

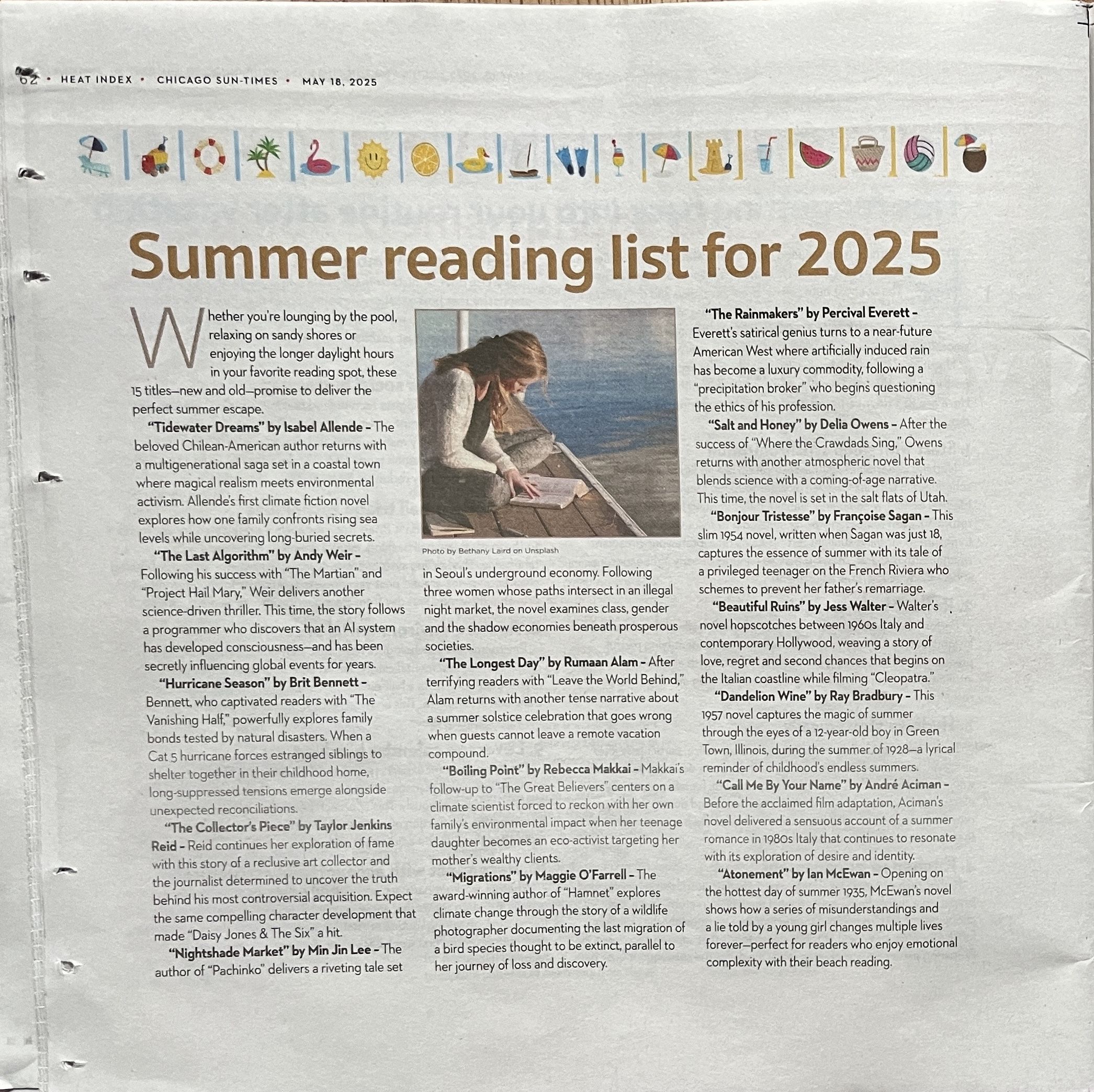

3. Newspaper's "reading list" full of fake titles

A print supplement to the Chicago Sun-Times published a "summer reading list for 2025" Sunday that attributed multiple nonexistent titles to real authors, a goof that readers on social media quickly blamed on AI.

Why it matters: Today's AI models continue to make things up in ways that AI makers still haven't figured out how to detect or stop, and human users keep failing to check their output.

Case in point: The very first item on the list is a novel by the "beloved Chilean American author" Isabel Allende titled "Tidewater Dreams."

- Allende is real, but "Tidewater Dreams," ostensibly a "climate fiction novel" that "explores how one family confronts rising sea levels while uncovering long-buried secrets," doesn't exist.

- You have to read down the list of 15 titles to the eleventh entry before you hit a real book (Françoise Sagan's 1954 "Bonjour Tristesse").

The Philadelphia Inquirer published the same reading list with the same bad info.

What they're saying: "It is not editorial content and was not created by, or approved by, the Sun-Times newsroom," a Sun-Times account on Bluesky posted Tuesday.

What happened: Chicago-based freelance writer Marco Buscaglia, whose byline appears on most stories in the 64-page section but, oddly, not the book story, told 404 Media that he wrote the piece using AI but failed to fact-check it.

4. Training data

- Apple is reportedly planning to allow outside developers to tap some of the AI models it has developed. (Bloomberg)

- Jeff Bezos' Earth Fund announced the first winners in its $100 million competition to harness AI for environmental goals. (Axios)

5. + This

I saw this cute little guy slithering on the sidewalk as I headed out to I/O on Tuesday. I took some video as well, but there wasn't a lot of action.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing.
