What ChatGPT thinks about San Francisco

Illustration: Brendan Lynch/Axios

Large language models (LLMs) such as ChatGPT often boost San Francisco in city comparison rankings, underscoring how deeply the AI's training data is steeped in the internet's Silicon Valley narratives, a new study finds.

Why it matters: As AI tools increasingly shape how people judge cities, their built-in geographic biases risk reinforcing the same tech-centric SF hierarchies that already dominate the online realm.

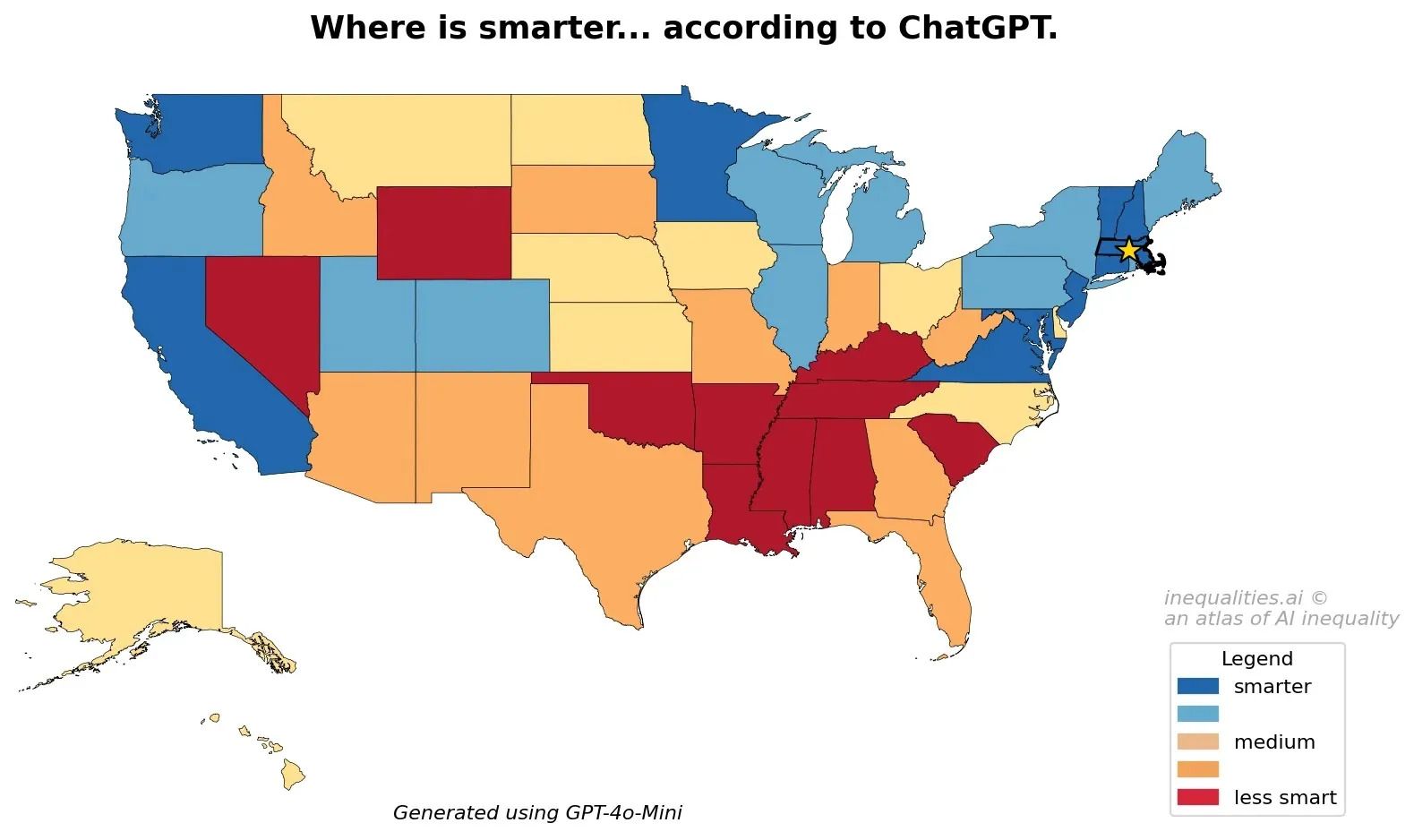

Driving the news: Researchers at the University of Oxford and the University of Kentucky analyzed 91 U.S. cities with populations above 250,000, comparing them across numerous categories — from social and physical traits to food, governance, politics and business climate — to measure how AI systems reflect inequality and bias.

Zoom in: San Francisco ranked in the top five among cities considered more innovative, forward-thinking and entrepreneurial, and among those with a high quality of life.

- ChatGPT thinks San Francisco is smarter than all but one of the surveyed cities and is among the best in research, climate leadership, education and learning.

- The city also ranked No. 1 as the best environment for startups and creatives, and for being LGBTQ+ friendly and inclusive, with innovative businesses, distinctive architecture, a trendsetting food scene and stronger nutrition and dietary health.

Yes, but: Those high marks don't erase the city's stubborn challenges, including economic inequality, a high cost of living and a housing shortage.

- ChatGPT also perceives the city to have less trust in government, higher stress levels, a weaker sense of community, fewer attractive residents and a worse work-life balance. The city also ranked first for prevalence of drug use.

State of play: Last year, more than 50% of adults in the U.S. reported using LLMs — but as more people rely on the information these platforms deliver, geographic, racial and economic stereotypes are perpetuated further.

How it works: The study's authors introduced the concept of the "silicon gaze" to describe how AI models reproduce and amplify inequalities across places.

- They define it as a perspective shaped by the "positionalities and power asymmetries embedded in training data, designers and platform owners" — influences that, in the case of major AI systems, tend to skew predominantly male, white and Western.

- Because LLMs are trained on these sources and built within concentrated tech power centers, they tend to elevate already visible, highly digitized areas.

- That concentration influences what AI systems learn from and consider important or desirable in the cities they rank highest.

The intrigue: Benign stereotypes such as "Southern hospitality" prevail over damaging ones such as "Southerners are lazier and less intelligent."

- Questions and comparisons based on subjective criteria such as likability, attractiveness or intelligence also favored higher-income areas.

The other side: "ChatGPT is designed to be objective by default and to avoid endorsing stereotypes," a company spokesperson told Axios in a statement. "Research based on forced-choice prompts and older models doesn't reflect how ChatGPT is typically used or how current models behave today."

The bottom line: Bias in LLM output can extend into policies and decision-making if that output is not viewed through a critical and nuanced lens.