AI may detect depression just from your voice

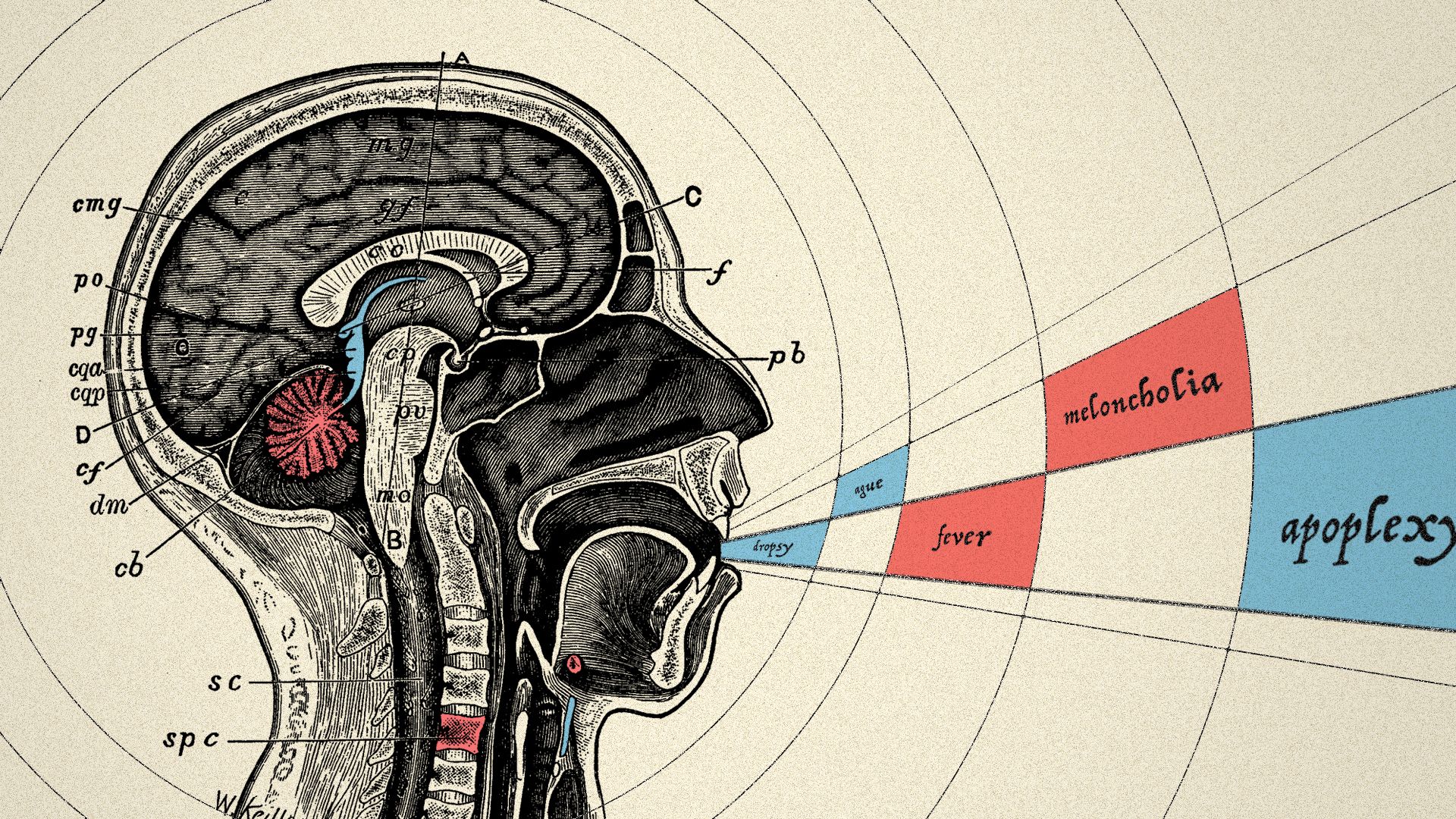

Illustration: Sarah Grillo/Axios

During a conversation, humans can grasp a friend's mood or intent by relying on subtle vocal cues or word choice. Now, researchers at MIT say they have developed an algorithm that can detect whether a speaker is depressed. Depression is one of the most common, and often undiagnosed, conditions in the U.S.

Why it matters: About 1 in 15 adults (roughly 16 million Americans) experience major depressive episodes, but many go untreated.

The background: Given a 3D eye scan, AI can diagnose dozens of diseases as accurately as a human expert. From images of tissue samples, AI can detect breast cancer as well as or better than human pathologists. And it can find signs of disorders like schizophrenia, PTSD and Alzheimer's in speech patterns.

The latest: Tuka Alhanai, a Ph.D. candidate at MIT focused on language understanding, trained an AI system using 142 recorded conversations to assess whether a person is depressed and, if so, how severely. The determinations are based on audio recordings and written transcripts of the person speaking.

How it works: Alhanai used a neural network to find characteristics of speech — like pitch or breathiness — that relate most closely to depression but aren’t correlated with one another. Then she employed another algorithm to surface patterns that most likely point to depression.

- The system was most accurate when it considered responses in context, Alhanai says. She used a neural network that can find patterns across answers rather than analyze each in isolation.

- The best-performing system classified 83% of the test cases correctly.
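
To make the feature-selection idea concrete, here is a minimal sketch, not the researchers' actual method. It assumes a simple greedy rule: rank speech features by how strongly they correlate with a depression label, then skip any feature that nearly duplicates one already kept. The feature names, the toy numbers, and the `max_redundancy` and `top_k` parameters are all invented for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length lists (0.0 if either is constant)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def select_features(features, labels, max_redundancy=0.9, top_k=2):
    """Greedily keep features most related to the label but not to each other."""
    # Strongest relationship to the label first.
    ranked = sorted(
        features,
        key=lambda name: abs(pearson(features[name], labels)),
        reverse=True,
    )
    chosen = []
    for name in ranked:
        # Reject a candidate that is nearly a copy of a feature already kept.
        redundant = any(
            abs(pearson(features[name], features[kept])) >= max_redundancy
            for kept in chosen
        )
        if not redundant:
            chosen.append(name)
        if len(chosen) == top_k:
            break
    return chosen


# Hypothetical toy data: six speakers, label 1 = depressed.
labels = [0, 0, 0, 1, 1, 1]
features = {
    "pitch": [220, 210, 215, 180, 175, 185],
    "pitch_half": [110, 105, 107.5, 90, 87.5, 92.5],  # exact rescaling of pitch
    "breathiness": [0.2, 0.3, 0.1, 0.6, 0.4, 0.7],
    "loudness": [60, 62, 61, 61, 60, 62],  # unrelated to the label
}

chosen = select_features(features, labels)
print(chosen)
```

On this toy data the rule keeps only one of the two pitch measurements, since they carry the same information, and adds breathiness. The real system worked from recorded interviews and their transcripts, which a sketch like this does not attempt to model.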

Advantages of a passive system: Someone who is depressed may lack the motivation to see a professional, says Mohammad Ghassemi, Alhanai’s co-author. Without a weighty medical conversation, AI might detect depression that may otherwise be missed.

But, but, but: There are early concerns about such use of AI:

- Assessments should not be carried out surreptitiously, says Alison Darcy, a former instructor at Stanford University School of Medicine and founder of Woebot, a chatbot for mental-health issues.

- Darcy also questioned the premise behind such systems. "Where is this huge need to diagnose people en masse, over and above what’s already available?"

- In a Washington Post op-ed, Canadian doctor Adam Hofmann argued that algorithms shouldn’t make mental-health diagnoses at all.

Go deeper: Read the paper presenting the research.