OpenAI releases "Strawberry" model with better reasoning


Image: OpenAI

OpenAI on Thursday released a new model, previously code-named Strawberry, that can evaluate its steps before proceeding.

Why it matters: In addition to being better at complex math, science and coding questions, OpenAI says this approach is more explainable and adheres more closely to intended safety guardrails.

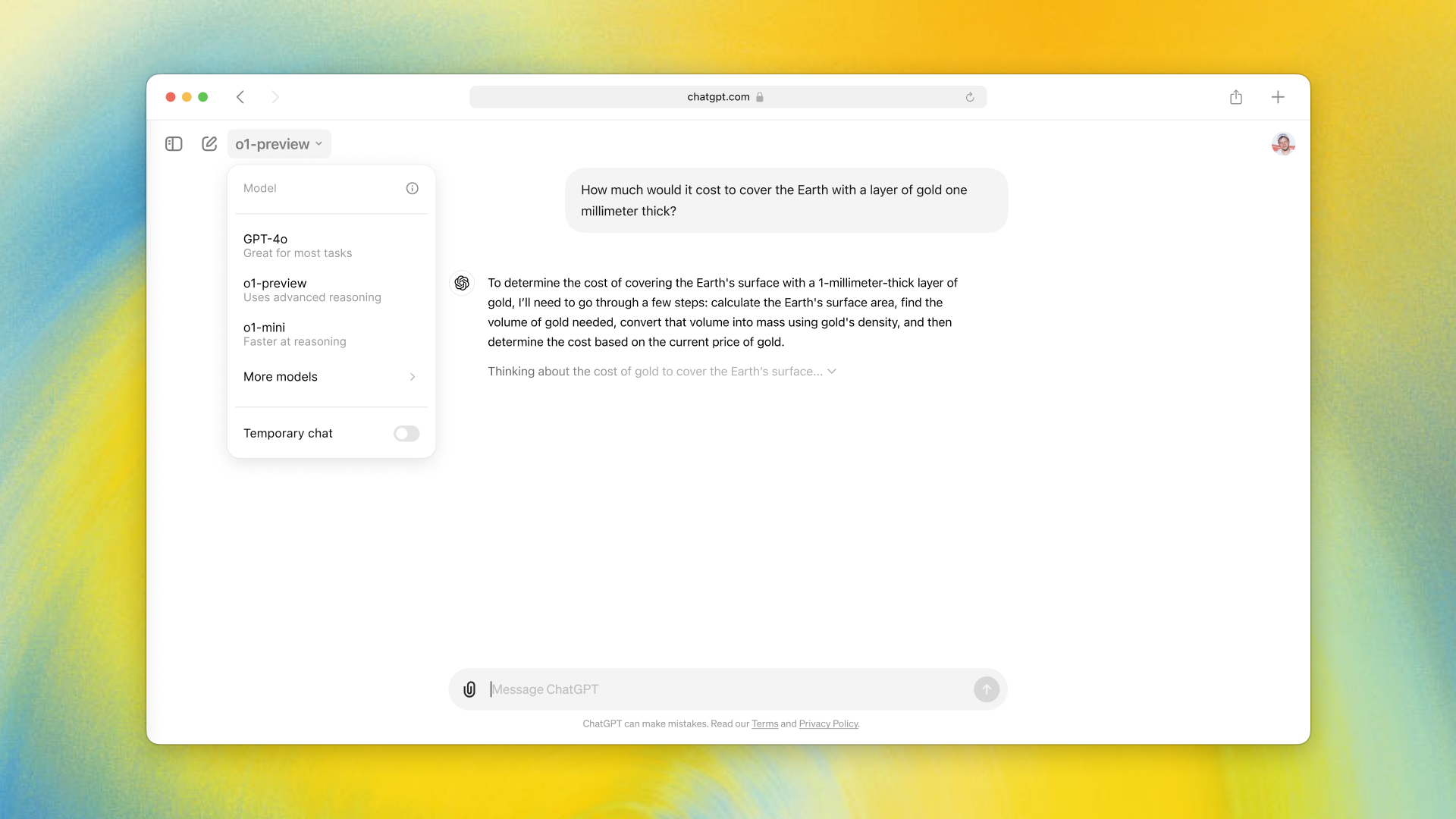

Driving the news: The new model, which also introduces a new naming and numbering system, is called OpenAI o1 (the letter "o" and the number "1").

- It will be added to ChatGPT but will coexist with existing models, including GPT-4o, rather than replace them.

- There is also a lightweight version of the model, known as o1-mini, specifically aimed at code generation.

- OpenAI is rolling out o1 in stages. ChatGPT Plus and Team users gain limited access to both o1-preview and o1-mini starting today. Educational and enterprise customers gain access next week.

Yes, but: While OpenAI o1 has its advantages, it also has a number of limitations.

- It can take longer to answer, it's a text-only model for now and it currently can't reason over a specific document or gather real-time information from the web.

- OpenAI says even those with access will be subject to weekly rate limits of 30 messages for o1-preview and 50 for o1-mini.

How it works: The new model operates differently from prior models in that it considers different paths before responding to a query.

- "We trained these models to spend more time thinking through problems before they respond, much like a person would," OpenAI said in a blog post announcing the new model. "Through training, they learn to refine their thinking process, try different strategies, and recognize their mistakes."

- OpenAI says that in its testing, the new model "performs similarly to PhD students on challenging benchmark tasks in physics, chemistry, and biology" and is more capable than past models in both math and coding.

- "In a qualifying exam for the International Mathematics Olympiad (IMO), GPT-4o correctly solved only 13% of problems, while the reasoning model scored 83%," OpenAI said.

- As for the risks associated with these added capabilities, OpenAI said its evaluation gave o1 a "medium risk" rating on the company's preparedness ratings system, "because it doesn't facilitate evaluated risks beyond what's already possible with existing resources."

- Plus, OpenAI added, its safety testing found o1 better than prior models at adhering to its safety guidelines and more resistant to generating harmful content.

Between the lines: This model is probably the big release that Mira Murati told Axios in May was coming this year.

- However, OpenAI is also working on a new, larger version of GPT-4, as it noted at the end of its blog post: "We also plan to continue developing and releasing models in our GPT series, in addition to the new OpenAI o1 series."

- Microsoft CTO Kevin Scott said at the company's Build conference in May that OpenAI had begun training a more powerful model, likening it to a giant whale, with GPT-4 as an orca and earlier models as sharks and other smaller sea creatures.

- After the Build conference, OpenAI said it had started training its next frontier model, but the company didn't say then, and isn't saying now, when to expect that release.