AI's flawed human yardstick

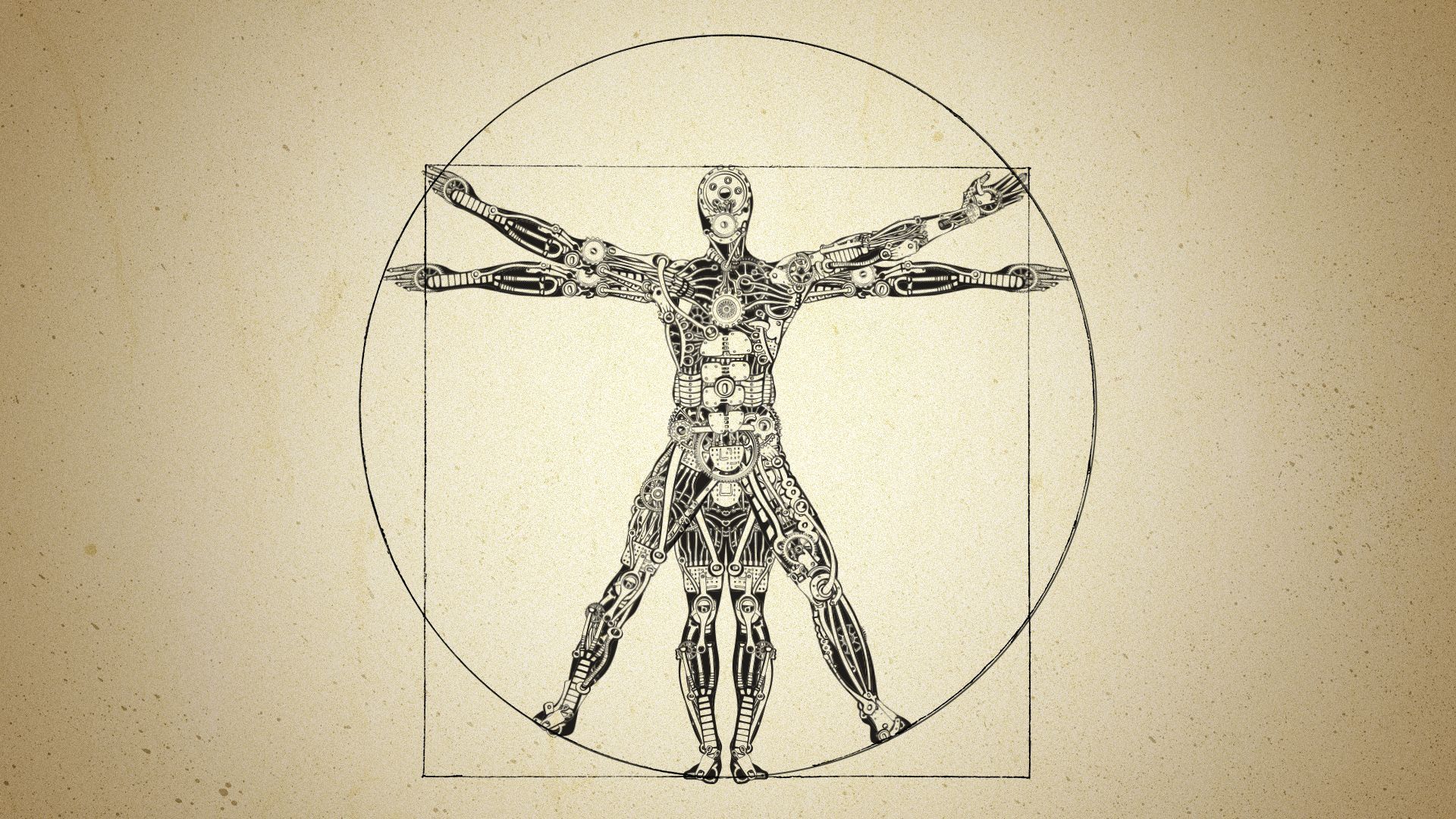

Illustration: Aïda Amer/Axios

Some of the tech industry's loudest voices — most recently Elon Musk — keep aiming for AI to become "smarter than humans," yet there isn't agreement on what that bar is.

Why it matters: If we obsess over this fuzzy human-centric yardstick for AI's abilities, we could miss out on promising — but decidedly non-human — ways machines could meet actual human needs.

- "The real problem is that we view AI systems as similar to humans," Konrad Kording, a neuroscientist at the University of Pennsylvania who studies machine learning, tells Axios.

- "We anthropomorphize them. They are different."

Driving the news: Musk on Monday (again) shared his prediction that "we'll have AI that is smarter than any one human, probably, by the end of next year."

The other side: Meta AI chief Yann LeCun this week (again) said human-level AI is still far in the distance.

The big picture: These conflicting timelines and predictions swirl around decades-old questions about artificial intelligence that are being recast through the lens of ChatGPT and other generative AI tools driving a new AI era.

As the field notches technical advances, experts warn that "smart" isn't a meaningful metric.

- "There is no widely accepted definition of intelligence or 'smart,' so there is no general test that people use," says Blake Richards, a professor of computer science and neuroscience at McGill University.

State of play: For years, AI performance was measured with chess games and the Turing test. Today, AI models can be evaluated with SATs, legal exams and other human tests.

- Their ability can be assessed by benchmarks that combine translating, summarizing, answering questions and dozens or hundreds of other language tasks.

Yes, but: "That's partly because these measures are more meaningful in constrained settings," says Michael Littman, a computer scientist who directs the Division of Information and Intelligent Systems at the National Science Foundation.

- These consistent ways of benchmarking allow comparisons to performances by people, he says. And "there are many such objective evaluation measures where the best software meets or exceeds people."

- But while today's general models have "remarkably broad capabilities" that can be tested with these benchmarks, "I wouldn't exactly use the word 'smart,' that's a bit too human," says Zachary Lipton, a computer science professor at Carnegie Mellon University and CTO and CSO of Abridge, a health care AI company.

- Lipton points to the calculator. It's "much 'smarter' than humans at performing arithmetic calculations, and yet much less capable at higher math," he says.

Reality check: These tests don't capture key aspects of intelligence, including reasoning, planning and the smarts that come from interacting with the surrounding world in order to survive and thrive in it.

- "People make optimal decisions based on their circumstances," says Songyee Yoon, president and chief strategy officer at NCSOFT, one of the world's largest online game developers.

- Human beings have "evolved to optimize resources, physical embodiment, and environment to maximize their species' proliferation," she says.

Zoom in: Human intelligence is tied to our experience of ourselves in the world. "We all face the undeniable truth of death at some point," Yoon says.

- "When young, we tend to explore more and take risks. As we age, we exploit what we've learned or accumulated, paving the way for future prosperity," she says. "Deciding when to transition between these phases gradually is also a trait of 'smartness.'"

- "AI, however, doesn't have a fragile body or limited lifespan like us. Its understanding of life and humility towards death is significantly different. It wouldn't be 'smart' for AI to opt for an exploitative strategy prematurely."

Zoom out: The University of Pennsylvania's Kording suggests one specific way we could identify an AI that's smarter than an individual human: It would have to be "better than humans at dealing with completely new problems that emerge in this world."

- The "key is new," Kording adds. He wrote in an email that "having data from humans solving roughly the same problems does not count."

The intrigue: Humans may not even be the goal to match. "Intelligence is not a one-dimensional quantity," says Kyunghyun Cho, a computer scientist at NYU.

- Humans, primates, mammals, reptiles, fish, insects and bacteria are "all intelligent in their own ways and along different axes," he says.

- "It would be a silly question to ask whether AI, which would be just one dot in this high-dimensional space of intelligence, is smarter or dumber than any other dot (including ourselves)," he says.

The bottom line: "Our conception of intelligence is still quite incomplete, and by creating software that can match certain attributes of it, it brings more clarity to what intelligence actually is and is not," Littman says.