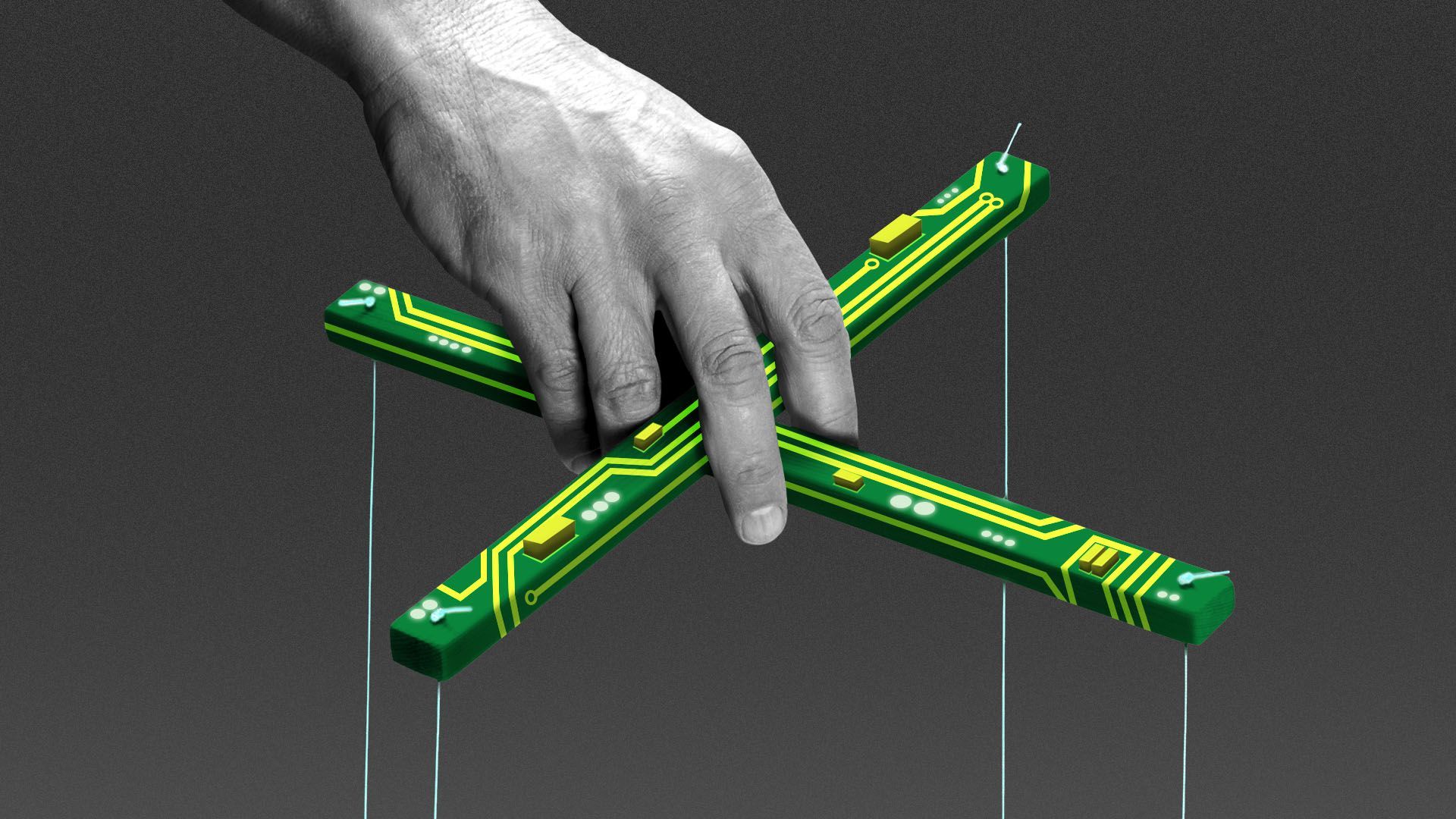

AI's colossal puppet show

Illustration: Sarah Grillo/Axios

Here's an early New Year's resolution for anyone who works with, deals with or writes about artificial intelligence: Stop saying "AI did this" or "AI made that."

Why it matters: AI doesn't do or make anything on its own. It's a software tool that people imagined and invented — the only capabilities and goals it has are those that people give it.

- The more we ascribe independence and autonomy to technology that's actually been designed and directed by specific people, the easier we make it for those people to shirk responsibility for its impacts and errors.

Be smart: Throw away your pictures of AI as a robot — and start imagining the technology as a big puppet instead.

- The strings aren't always visible, and even AI developers can't always find the connections between their intentions and the AI's behavior.

- But everything that an AI program does or says starts with the instructions and data that people have given it.

Driving the news: Many social media experts believe 2024 will see an explosion of generative-AI-produced synthetic media colliding with pivotal elections in the U.S. and around the world.

- AI doesn't drive political conflict, but it can accelerate the production of misinformation and erode public trust.

- Think of the flood of crappy AI-generated images of chainsaw-carved wooden dog sculptures inundating Facebook that 404 Media's Jason Koebler has chronicled — and then imagine a similar tactic deployed on behalf of a political campaign.

- This is where you have to remind yourself not to think, "AI is filling up Facebook with crud!" In every instance, someone is using AI to create and spread that flood.

How it works: The urge to view AI as a human actor is inevitable and reinforced by our species' wiring, which is tuned to identify human faces and personalities even where the world only provides us with hints.

- Users have always eagerly anthropomorphized digital technology in a phenomenon known as the Eliza effect, named after a simple 1960s chatbot that played therapist and prompted users to share personal secrets.

- Then LLMs got good at mimicking human conversation and ChatGPT made that talk available to millions of users for free, setting us up for a mass uncontrolled experiment in the projection of human agency onto software.

The intrigue: AI makers find it convenient to create the impression that AI has a mind of its own because it provides cover for their perplexing inability to fully understand or explain the output of the tools they've invented.

- As long as we're captivated by the cute, clever or unpredictable responses an AI program provides to our prompts, we're less likely to wonder why AI developers haven't done a better job of understanding why and how their tools arrive at their answers.

Between the lines: Arguing that AI has no goals of its own may remind us of the familiar gun-debate argument that "guns don't kill people, people kill people."

- Similarly, AI doesn't tell lies. People use AI to tell lies.

- In both cases, technology speeds up an existing human propensity for harm — and society has a legitimate interest in limiting that harm.

- AI's special twist is that, unlike guns, it's a technology we're inclined to see as autonomous.

The other side: Many advocates believe AI's capacity for good — in the form of universal education and health care delivered by personalized digital tutors and doctors — is so vast and urgently needed that any hesitation in developing the technology is foolish, or even criminal.

- The self-evident social value of such innovation, they argue, should outweigh any qualms we may have about projecting human traits onto the technology.

The bottom line: The AI debate needs less mysticism and magic and more rigor and clarity.