How OpenAI is testing tech’s shibboleths

Illustration: Maura Losch/Axios

With Sam Altman back at OpenAI, one thing has become clear: economic considerations have triumphed over moral qualms.

Why it matters: Technologists in general, and AI researchers in particular, are susceptible to the kind of flummery that tells them they have the power to make or break the world. At some point, however, base financial considerations always seem to trump high-minded ideals.

Driving the news: Altman was fired by his board as CEO amidst a deep culture clash — one in which fiscal imperatives ran straight into founding ideals.

The big picture: OpenAI's founders include billionaire entrepreneurs Elon Musk, Peter Thiel and Reid Hoffman.

- No strangers to hubris, these men were happy to sign their names to portentous statements about how "it's hard to fathom how much human-level AI could benefit society, and it's equally hard to imagine how much it could damage society if built or used incorrectly."

- The hubris extended to the idea that the founders could save the planet by using the U.S. tax code to incorporate as a nonprofit, in explicit contrast to the for-profit model in place at Google.

Between the lines: Johns Hopkins' Henry Farrell has diagnosed "a syncretic religion" that dominates the field of AI. That world "is profoundly shaped by cultish debates among people with some very strange beliefs," he writes.

- "The risks and rewards of AI are seen as largely commensurate with the risks and rewards of creating superhuman intelligences," says Farrell, "modeling how they might behave, and ensuring that we end up in a Good Singularity, where AIs do not destroy or enslave humanity as a species."

- Such dorm-room thought experiments might have little real-world utility, but fealty to them is surprisingly important in the world of AI researchers.

- That's because such beliefs flatter computer scientists into buying into what AI scholar Tim Hwang calls "the illusion that technologists are actually the ones in charge when it comes to what ultimately happens to a technology."

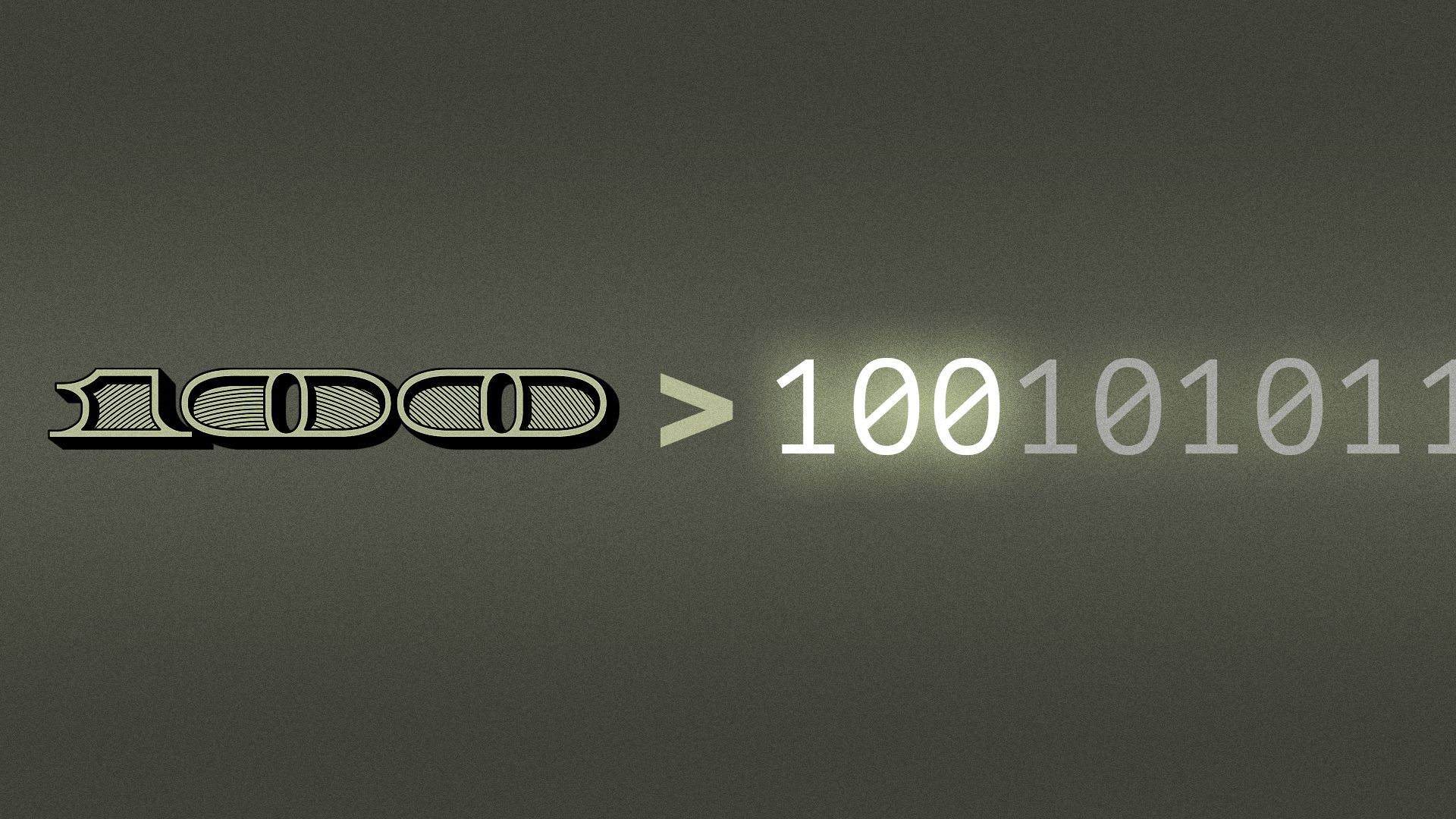

Flashback: As former crypto philosopher-king Sam Bankman-Fried said in his notorious interview with Vox's Kelsey Piper, reputations in Silicon Valley are created when "we say all the right shibboleths and so everyone likes us."

- Core among those shibboleths is the "longtermist" strain of Effective Altruism, a philosophy espoused not only by Bankman-Fried but also by much of the OpenAI board that fired Altman. (Elon Musk has dabbled in it, too.)

- Longtermists believe that avoiding an AI apocalypse is much more important than saving individual lives today — and is certainly more important than making money by turning AI into commercial products.

How it works: Espousing a certain set of beliefs doesn't just cause your peers to like you; it also makes it easier for you to hire them. That's crucial in AI, where the battle for talent is intense.

- The catch: Retaining talent is just as important as attracting talent. That's where money comes in.

- Be smart: Altman created an $86 billion nonprofit while granting substantial equity stakes to employees; he also enjoys the fierce loyalty of nearly all those employees. Those two facts are related.

The bottom line: Follow the money. After all, that's what OpenAI's employees were doing when they made it clear that they'd follow Altman wherever he went, which ultimately forced the board to bring him back.