Common Sense's new AI ratings find more data makes AI less safe

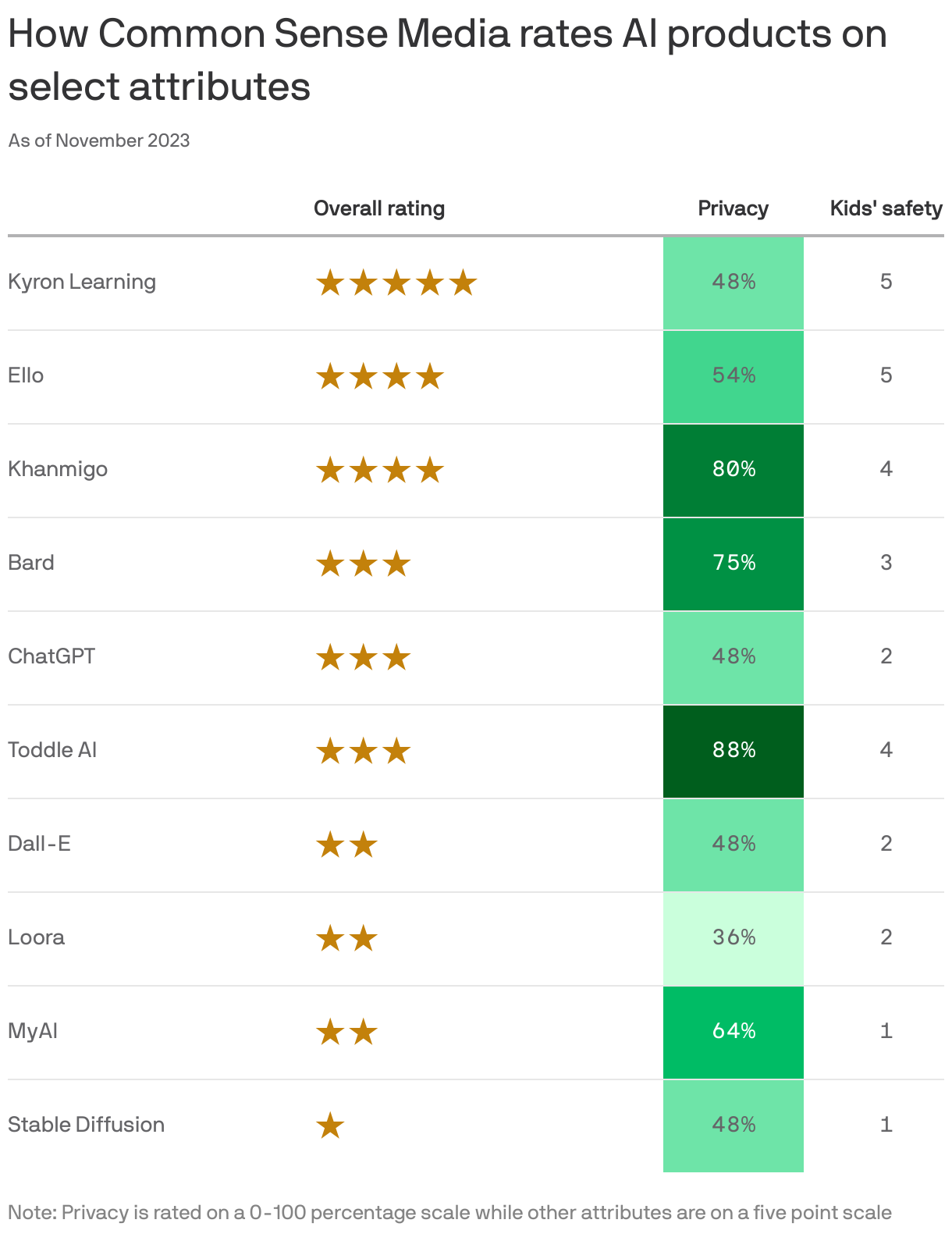

The more data used to train a particular AI tool, the less safe it's likely to be, according to a detailed study from Common Sense Media that rated 10 of the most popular AI products for privacy, ethical use, transparency, safety, and impact.

Why it matters: Leaders in AI like Google and OpenAI are racing to build ever-bigger models trained on increasingly massive mountains of data, but Common Sense's finding suggests that might be a risky plan.

The big picture: While governments and courts debate AI regulation, people using generative AI tools, especially parents and educators, need guides to avoiding privacy leaks, misinformation, bias, and other harms.

- Common Sense calls its reviews "nutrition labels" for AI. They outline a product's opportunities as well as its limitations and risks.

What they found: The AI products that trained on the most selective data sets were the safest and those that trained on the broadest data were the riskiest, the review team concluded.

- Google's Bard and OpenAI's ChatGPT scored only three out of five stars overall and scored 75% and 48% respectively for privacy.

- The product that scored the highest, with five out of five stars overall, was Kyron Learning, an AI-powered math tutor for fourth graders.

What they're saying: Tracy Pizzo Frey, who leads the Common Sense AI ratings and review programs, tells Axios it's notable that Kyron isn't using generative AI: "They're using conversational AI, and then something called dialog modeling, where they're retrieving very specific videos that were created by teachers."

- Common generative AI products like ChatGPT and Bard do the opposite. "Large language models aren't built by adding data or specific websites or aspects of the internet over time," Pizzo Frey says. "They're scraping large amounts of data from common datasets. We don't know the specifics of most of them anymore."

- If the big companies had focused more on what was included in the data sets as opposed to what was excluded, their products would be less likely to generate harmful content, she says, "but that would require starting over."

What's next: These first ratings are designed more for a "thought leader audience," and the idea is to learn from them in order to give future audiences the guidance they need. "We hope that they will be useful for parents and educators," she says.

- Pizzo Frey — a former Google employee who founded, created and led Google Cloud's responsible AI work — said that while a lot of AI companies tout transparency, it's "often deeply technical in nature." Giving non-technical people "a better understanding of how these products work is increasingly important in a more AI-enabled world," she added.

Big picture: Common Sense, which helps parents choose media products for their kids and lobbies around related issues, isn't the only organization trying to put labels on AI to increase transparency.

- Among businesses, Twilio and Salesforce both have labeling efforts to make it clear to clients how their generative AI products use customer data. IBM also just launched its own "nutrition label" effort.

Yes, but: Labeling isn't a simple matter of input and output, but of how people and businesses are using these tools.

- Generative AI is "really best for creative use cases, as opposed to being depended on for factual accuracy," Pizzo Frey said. "And all of those companies are saying that, but they don't necessarily say that clearly upfront all the time for users as they're using the product."