YouTube to require creators to disclose use of generative AI

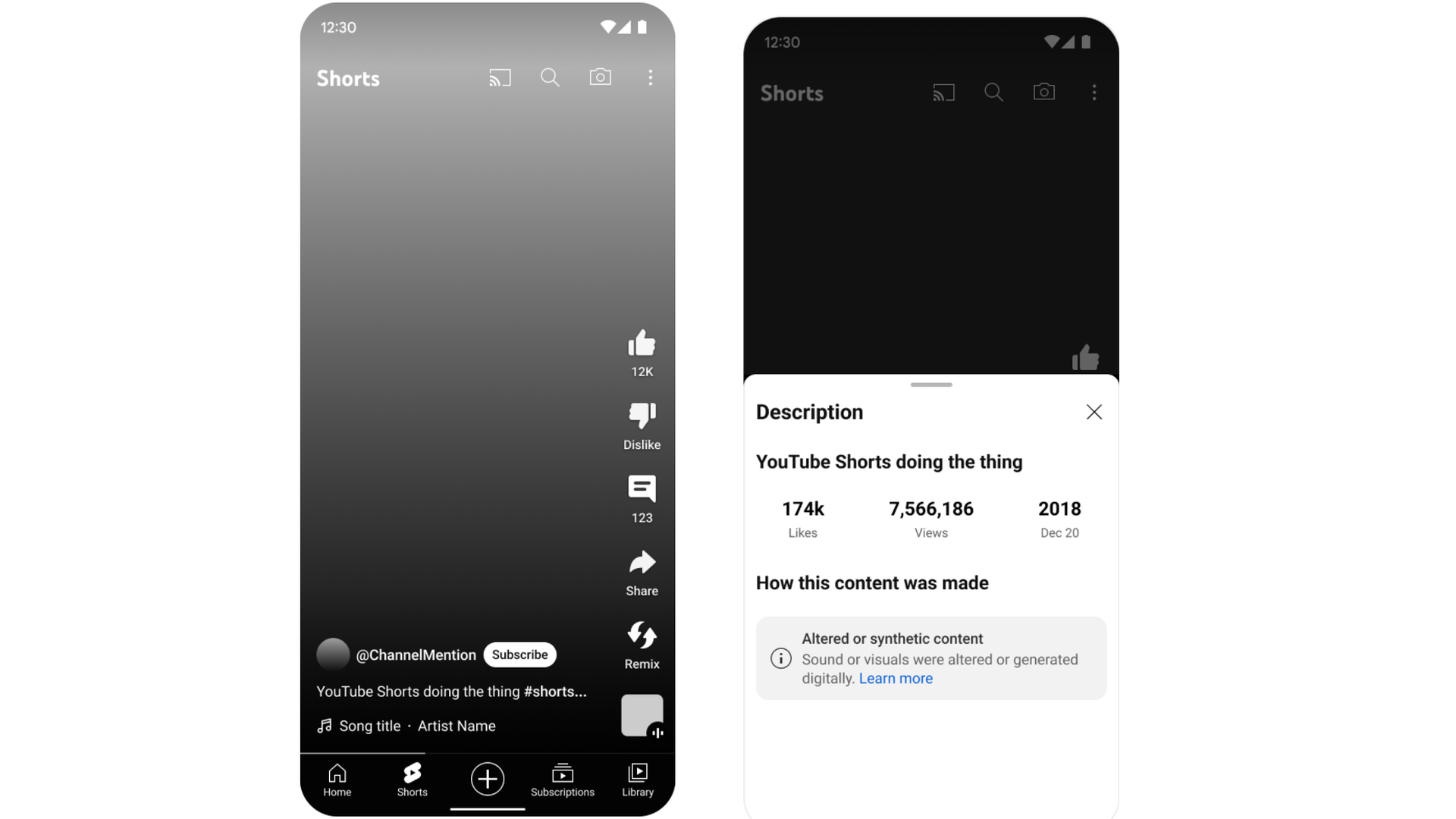

A mock-up of a new YouTube label for video that uses "synthetic content." Image: YouTube

YouTube announced a series of policy changes today that aim to inform viewers when content has been generated by AI.

Why it matters: The changes come as generative AI has made it far easier to create realistic-looking videos showing fictional scenarios.

Driving the news: YouTube's new policies, many of which officially go into effect next year, will:

- Require content creators to disclose when generative AI is used to create realistic-looking scenes that never took place or depict real people saying fictional things.

- Allow people to submit a request for YouTube to take down "content that simulates an identifiable individual, including their face or voice." However, YouTube noted that not all requests will be honored, with higher content moderation thresholds applied to satire or parody and to content imitating public figures.

- Create a separate process for certain music industry partners to request removal of content that "mimics an artist's unique singing or rapping voice."

- Ensure that the use of any of YouTube's own generative AI tools is also fully disclosed to viewers.

Of note: It's up to creators to check the appropriate box when their videos meet these criteria — but YouTube says doing so is not optional and failing to disclose the information could lead to content removal or other penalties.

What they're saying: "We have long-standing policies that prohibit technically manipulated content that misleads viewers and may pose a serious risk of egregious harm," YouTube said in a blog post. "However, AI's powerful new forms of storytelling can also be used to generate content that has the potential to mislead viewers — particularly if they're unaware that the video has been altered or is synthetically created."

Between the lines: While YouTube is requiring the disclosure when AI technology is used in certain ways, how that information is displayed to viewers will vary based on how sensitive YouTube deems the subject to be.

- In many cases the disclosure will only be visible on a video's description screen. The company says it will make labels more prominent for videos on sensitive topics such as politics, military conflicts and health issues.

Be smart: YouTube also says that all its other content standards will apply and that videos generated by AI must still observe standards governing violence and hate speech.