Meta releases tool to detect computer vision bias

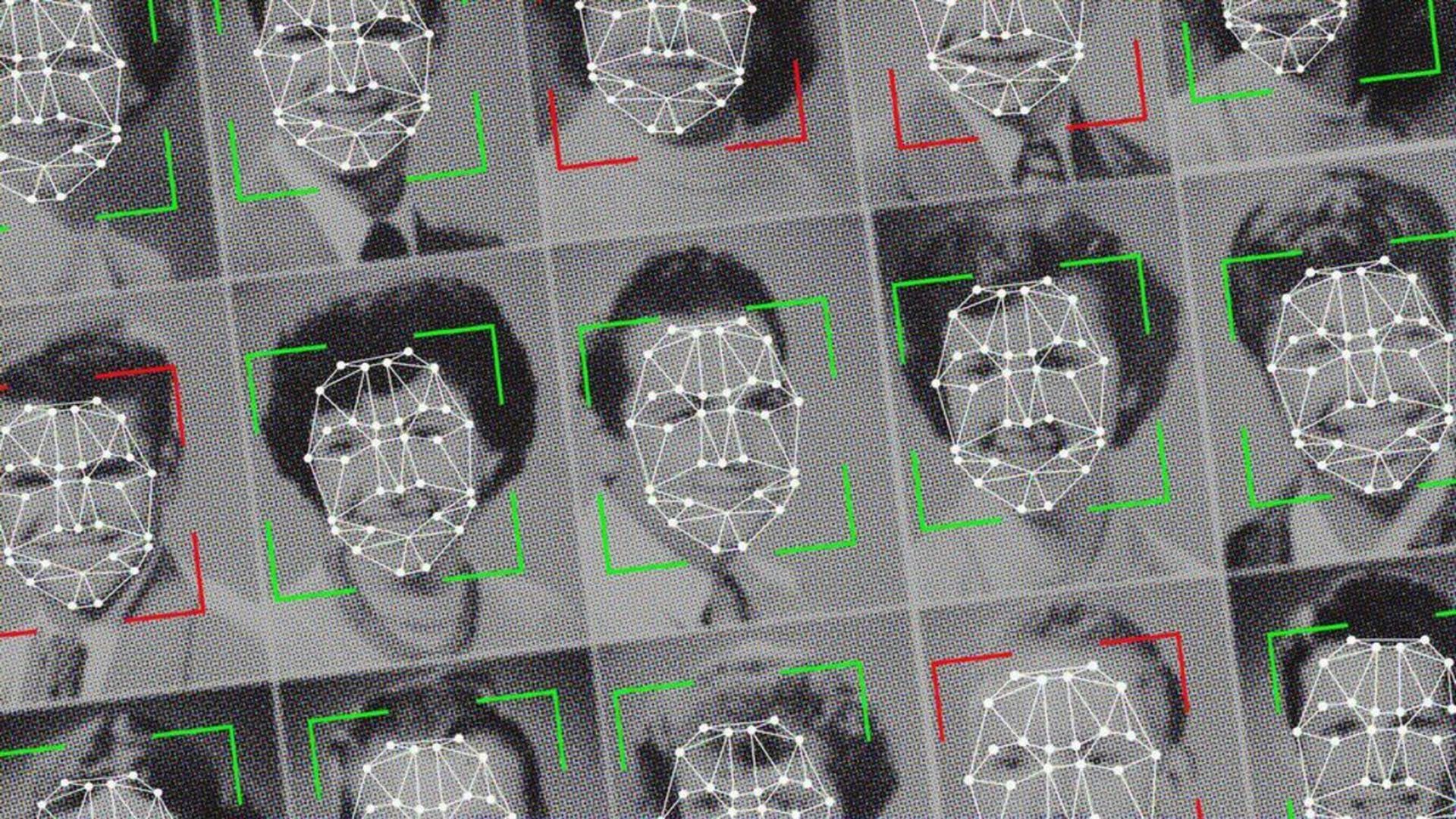

Illustration: Sarah Grillo/Axios

Meta on Thursday released a new tool designed to spot racial and gender bias within computer vision systems.

Why it matters: Many computer vision models have shown systematic bias against women and people of color. The hope is that improved tools will enable developers to better detect shortcomings and address them.

Details: Meta is offering researchers access to FACET (FAirness in Computer Vision EvaluaTion), a tool that evaluates how well computer vision models perform across various characteristics including perceived gender and skin tone.

- FACET was based on more than 30,000 images containing 50,000 people, all of which were tagged by experts across the different categories.

- Meta says FACET can be used to answer questions such as whether an engine is better at identifying skateboarders when their perceived gender is male, whether a system is better at identifying people with light skin than those with dark skin, and whether such problems are magnified when a person has curly rather than straight hair.

- Meta is also re-licensing its DINOv2 computer vision model under an Apache 2 open-source license, allowing for commercial use.
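
To make the kind of question FACET is meant to answer concrete, here is a minimal sketch of a per-group comparison. It is not Meta's code or FACET's API: the function names, the group labels and the toy records are all hypothetical, and recall (the share of people in a class that the model actually finds) is just one plausible measure for such a comparison.

```python
from collections import defaultdict

def recall_by_group(records):
    """Compute recall per demographic group.

    `records` is an iterable of (group, detected) pairs, one per person who
    truly belongs to the target class (e.g. "skateboarder"). `detected` is
    True when the model found that person. This layout is an assumption made
    for illustration, not FACET's actual annotation format.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, detected in records:
        totals[group] += 1
        hits[group] += int(detected)
    return {group: hits[group] / totals[group] for group in totals}

def recall_gap(records, group_a, group_b):
    """Return recall(group_a) - recall(group_b); positive favors group_a."""
    recalls = recall_by_group(records)
    return recalls[group_a] - recalls[group_b]

# Toy, made-up results for people labeled "skateboarder" (hypothetical data).
records = [
    ("perceived_male", True), ("perceived_male", True),
    ("perceived_male", True), ("perceived_male", False),
    ("perceived_female", True), ("perceived_female", False),
    ("perceived_female", True), ("perceived_female", False),
]

if __name__ == "__main__":
    print(recall_by_group(records))
    print(recall_gap(records, "perceived_male", "perceived_female"))
```

A gap near zero would suggest the model finds skateboarders about equally well in both groups on this data; a large gap in either direction is the kind of disparity such a benchmark is meant to surface.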

What they're saying: "Benchmarking for fairness in computer vision is notoriously hard to do," Meta said in a blog post. "The risk of mislabeling is real, and the people who use these AI systems may have a better or worse experience based not on the complexity of the task itself, but rather on their demographics."

- "We want to continue advancing AI systems while acknowledging and addressing potentially harmful impacts of that technological progress on historically marginalized and underrepresented communities, especially," Meta chief ethicist Chloe Bakalar said in a statement to Axios.