Facebook's content-review black hole

Screenshot: Axios

Facebook still isn’t fully reviewing all the content users flag as potentially violating its rules, three years after a pandemic-related shift to rely more heavily — and in some cases exclusively — on automated systems for content moderation.

Why it matters: Civil rights groups and regulators say the practice is dangerous and adding to an already toxic environment for marginalized groups.

Details: In March 2020, Facebook warned that due to a lack of content moderators, it was relying more on AI to triage user reports. Some content flagged for breaking the rules, the company said, wouldn't get reviewed by a human at all.

Today, Facebook continues to show that message for an unspecified number of reports that its automated tools deem lower risk.

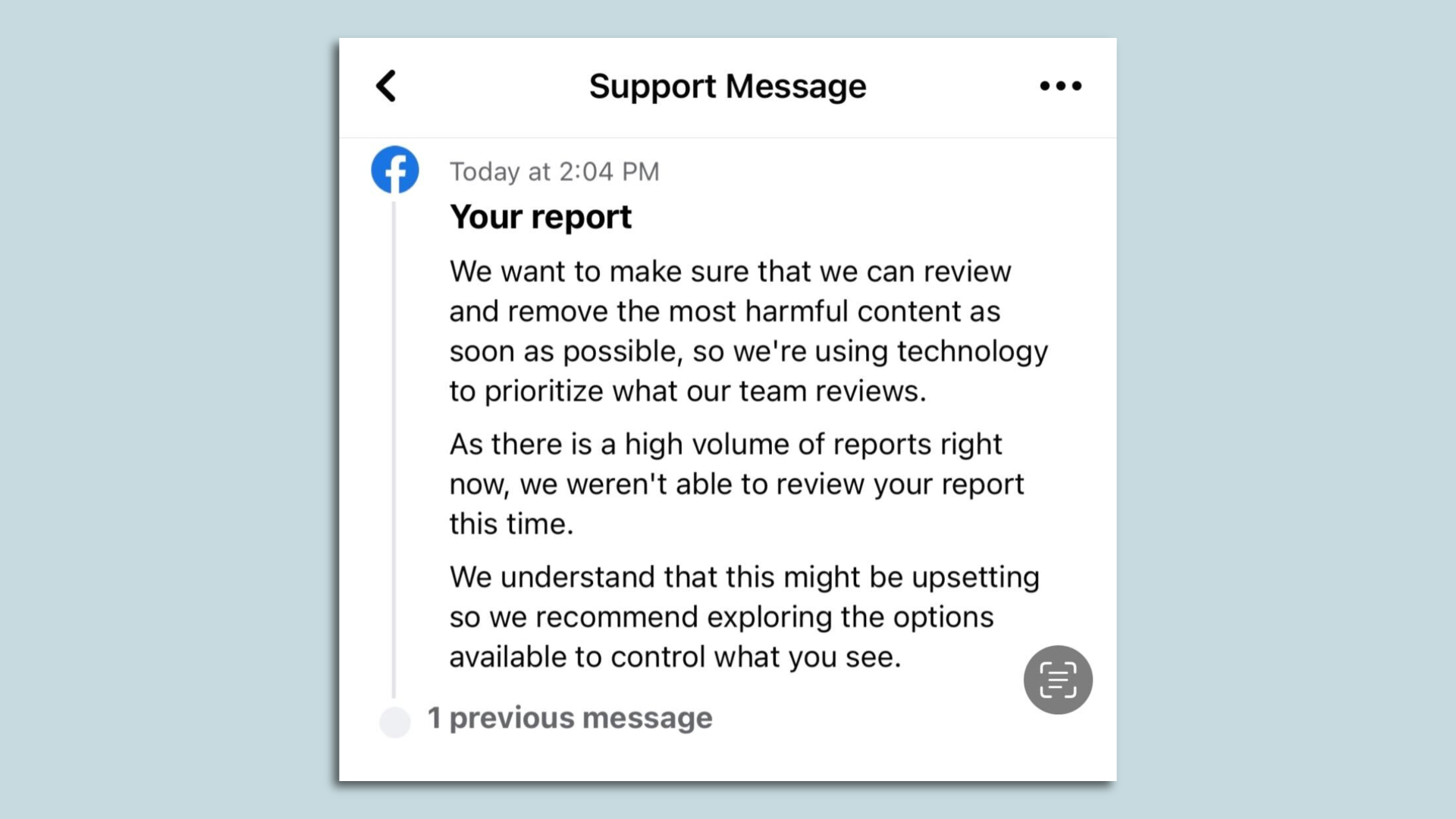

- "As there is a high volume of reports right now, we weren't able to review your report this time," reads a message shown when the company declines to send a report on for human review.

The practice was cited as "gravely concerning" in a recent GLAAD report that gave failing marks to all the major social media platforms, including Instagram and Facebook.

- That report blamed social media companies for directly fostering an environment that has led to a spike in offline hate and harassment and called on the companies to better enforce their existing policies.

- "These kinds of failures — to not even evaluate our reports of hateful content — represent an ongoing active serious public safety concern, not only for LGBTQ people," GLAAD senior director Jenni Olson told Axios.

How it works: Meta, the parent company of both Facebook and Instagram, says it uses a variety of criteria to determine which reported posts don't get human review. These include how likely the content is to represent a serious threat and how likely it is to go viral or be seen by large numbers of people.

- Meta declined to say how many of the reports it receives are not reviewed by a human content moderator.

Between the lines: Pandemic-related staffing issues have presumably eased, but Meta has also made two rounds of significant job cuts over the past seven months.

- The company cut 11,000 workers, or 13% of its staff, in November and then announced in March that it was laying off 10,000 more workers and eliminating 5,000 open positions.

- But much of its content-moderation labor is handled by outsourced contractors.

Zoom in: I recently experienced the impact of Facebook's AI-first approach firsthand. In May I publicly posted about what a challenging time it has been for trans people and how good it was to be with my community at the GLAAD Media Awards in New York.

- The initial replies from friends were supportive, but several weeks later, the post drew a number of anti-transgender memes and comments. I reported the ones that appeared to violate Facebook's standards.

- None of the postings I reported were taken down by Facebook. In some cases, I got a message that the content was deemed to not have violated the rules. In others, I received a message that the content was not being reviewed due to high volumes of reports.

- After I brought my concerns to Facebook PR (an option unavailable to most users), all but three of the comments were taken down for having violated the rules, including at least one that I had been told was not being reviewed and others initially deemed not to have violated any rules.

The big picture: Australia's eSafety commissioner, Julie Inman Grant, told Axios via email that her agency saw a 600% increase in illegal and harmful content appearing on both Facebook and Instagram during COVID.

- "They told us then that whilst they were using AI in place of the outsourced content moderators who couldn’t view this content from home for work, health and safety reasons, that they acknowledged that AI was still very imperfect and that humans needed to be kept in loop," Inman Grant said.

- Levels of harmful content have dropped since the peak of the pandemic, Inman Grant said, but this year has seen an increase across all social media platforms in both child sexual abuse material and specifically in image-based sexual extortion.

Despite these problems, Inman Grant said, Meta continues to rely wholly on AI in some cases and has since cut members of its global trust and safety team, including the regional head for that team.

- "Safety cannot continue to be an afterthought or considered an expendable function," she said. "If they can target advertising with deadly precision, they certainly have the capability to target online hate and known child sexual abuse material the same way."

- Meta is not the only company in the Australian regulator's crosshairs. Earlier Wednesday, Inman Grant's agency sent a legal notice to Twitter demanding it explain the steps it is taking to combat online hate or risk being slapped with fines.

What they're saying: Meta stressed to Axios that the overall prevalence of rule-breaking content, including hate speech, is extremely low.

- "We want our products and platforms to be safe, and we want all users to have a positive experience," a Meta spokesperson told Axios. "That’s why we invest heavily in safety and security."

- "We’re not perfect, and we are always looking for ways to improve our systems and technology," Meta added.