Adobe sees AI helping artists, not eating their lunch

Image: Adobe

AI's magical text-to-image generators, like Dall-E 2, have sparked fears of unemployment among professional illustrators — but Adobe, the leading maker of software tools for designers, sees AI as more of a creative assistant.

Driving the news: At a conference this week, Adobe showed how it could build generative AI tools into Photoshop, Lightroom and other products.

Why it matters: Fanciful images generated by the likes of Dall-E 2 and Stable Diffusion have raised thorny legal and ethical questions, but Adobe's early work provides a glimpse at an alternative vision of humans and AI working together.

Details: At its MAX conference in Los Angeles this week, Adobe showed off a number of ways generative AI could help creative workers without entirely supplanting artists.

- In Photoshop, Adobe showed how AI could offer artists an early look at a number of different creative approaches to a subject, allowing them to explore options in far less time.

- In Lightroom, Adobe showed how generative AI could power a slider that gradually changes the background sky of an image from day to night. (Adobe already has AI-infused neural filters that do specific transformations.)

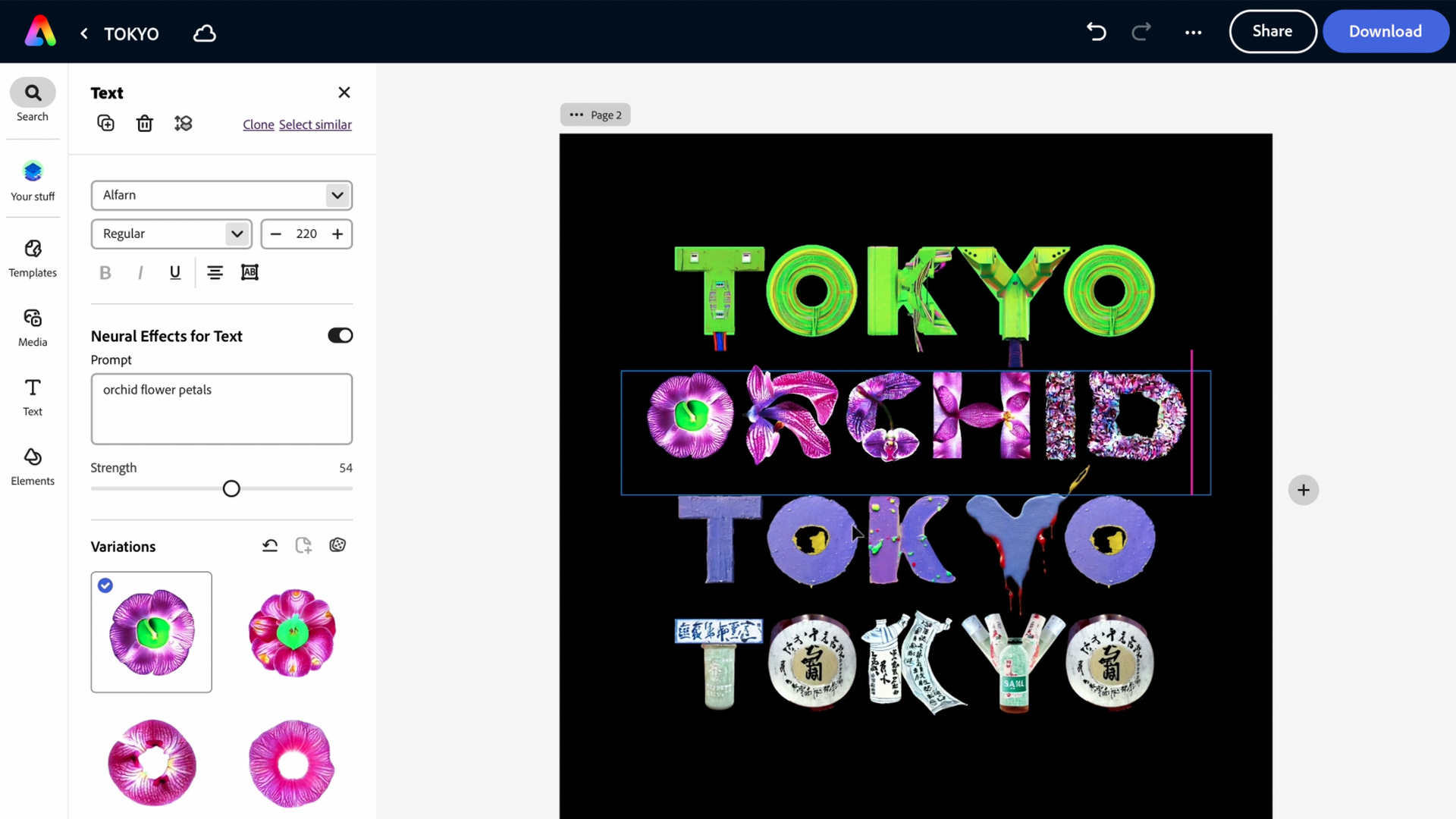

- With its newer web-based Express tool, Adobe showed how a text prompt could be used to transform any of its thousands of fonts to incorporate other objects. In one case, Adobe merged fonts with electrical cables, and in another it infused the type with orchid petals.

The big picture: Adobe's approach offers a contrast to the projects put out by OpenAI, Google, Meta, Stability AI (the company behind Stable Diffusion) and others, which have largely focused on what AI programs can do on their own given text prompts from users.

What they're saying: While some see generative AI as a threat to artists' livelihood, Adobe chief product officer Scott Belsky told Axios he sees it as eliminating mundane chores, similar to the way GitHub's Copilot helps programmers code faster.

- "It helps you achieve more explorations in less time," Belsky said in an interview Thursday.

- Belsky also sees an opportunity for the software to help people know which elements of an image came from an artist and which used AI (and perhaps which AI system), drawing on work from the company's deepfake-fighting Content Authenticity Initiative. "People need to know where this stuff is coming from," he said.

Yes, but: Legal issues remain, particularly around intellectual property and rights to the data used to train these AI programs. Belsky said that Adobe is wrestling with the same questions as others in the industry, and also trying to develop business models that support artists.

- One approach Adobe is weighing: rely on a so-called clean model that is trained only on images to which Adobe has full rights.

- Another path could be to let creators in Adobe's Behance network opt in to having their work used, and potentially collect royalties when another user creates work in their style using the AI tools.

Then there is the question of who owns an AI-generated image.

- While U.S. law doesn't allow fully computer-generated works to be copyrighted, Belsky said he is confident that artists will be able to incorporate the output of generative AI into their own work without sacrificing their rights of ownership.

What's next: While much of the industry's early work has focused on image generation (and simple videos), Adobe sees generative AI also being useful in everything from 3D design to texture creation to making logos.