The perils of AI emotion recognition

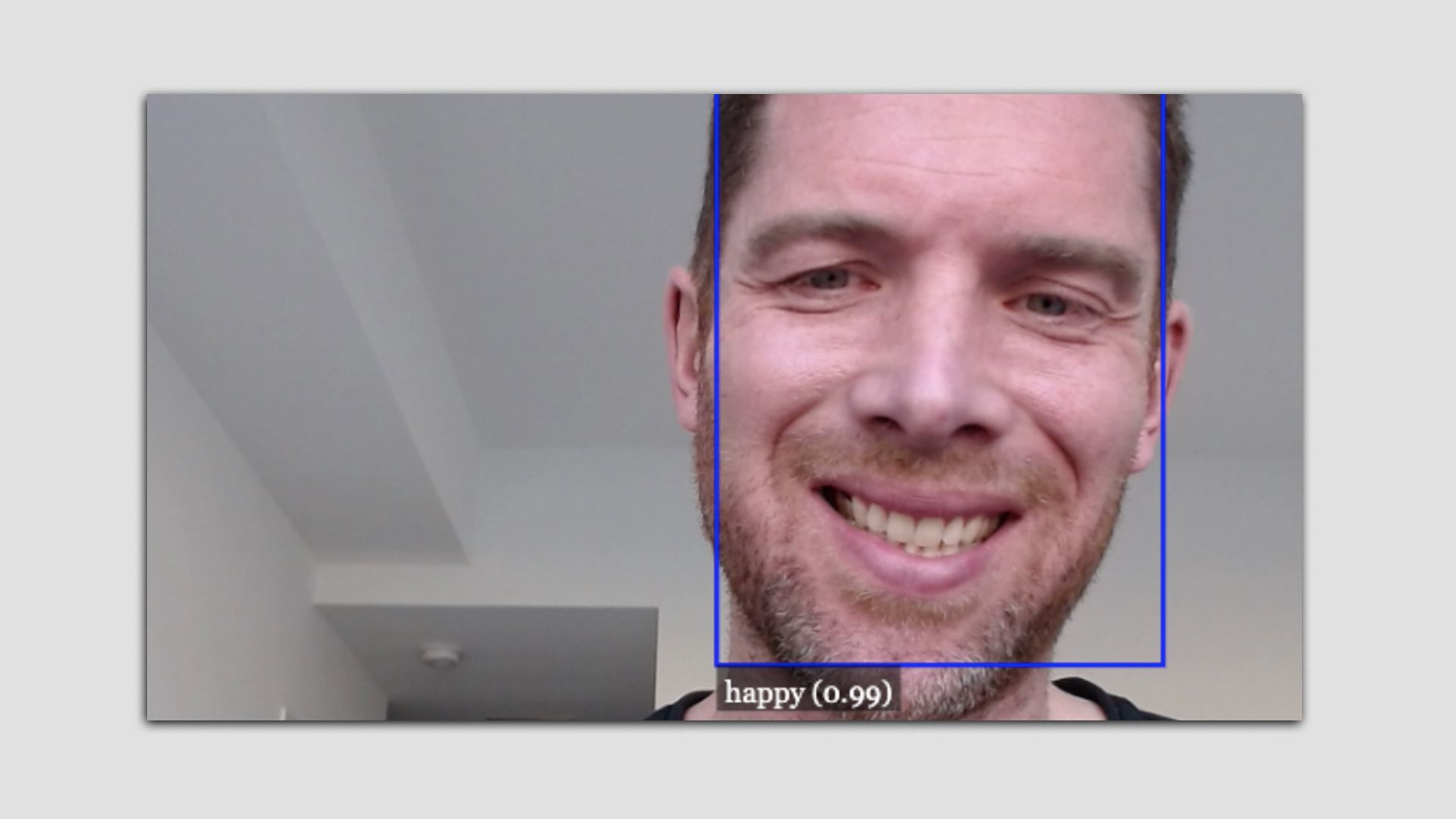

Photo: Screenshot of Bryan Walsh playing the Emojify game

New AI tools purport to be able to identify human emotion in images and speech patterns.

Why it matters: Prompted in part by the push of the pandemic, tech companies have been advertising emotion recognition programs, but experts warn they may not work — and may be misused.

How it works: Emotion recognition software is meant to do just that: apply decades-old psychological research about how humans express emotions to recognize those emotions in images, video or even speech.

- The field is a fast-growing part of the AI industry, with one recent report projecting the market for the technology could grow to $37 billion by 2026.

- The tools are being employed for everything from security to monitoring remote workers to guiding students in a classroom.

The catch: There are serious concerns about how effective emotion recognition AI really is and whether it can even be used ethically.

- A multidisciplinary team led by University of Cambridge professor Alexa Hagerty recently produced the Emojify Project, which allows users on the web to try out emotion recognition tech for themselves.

- It's not hard to "fool" the system by producing a less than real facial expression that corresponds to one of the six supposedly universal emotions conveyed by all human beings — like the "smile" I'm presenting in the picture above this piece.

What they're saying: In a piece published earlier this week in Nature, AI ethicist Kate Crawford argued the technology should be regulated because it can draw "faulty assumptions about internal states and capabilities from external appearances, with the aim of extracting more about a person than they choose to reveal."

- Last week my Axios colleague Ina Fried broke a story about a digital civil rights group asking Spotify to abandon a technology it has patented to detect emotion, gender and age using speech recognition.

The bottom line: The two questions we should ask about emerging technology are: does it work? And should we use it?

Go deeper: ACLU to FOIA information about national security uses of AI