What existential risk can teach us about the coronavirus pandemic


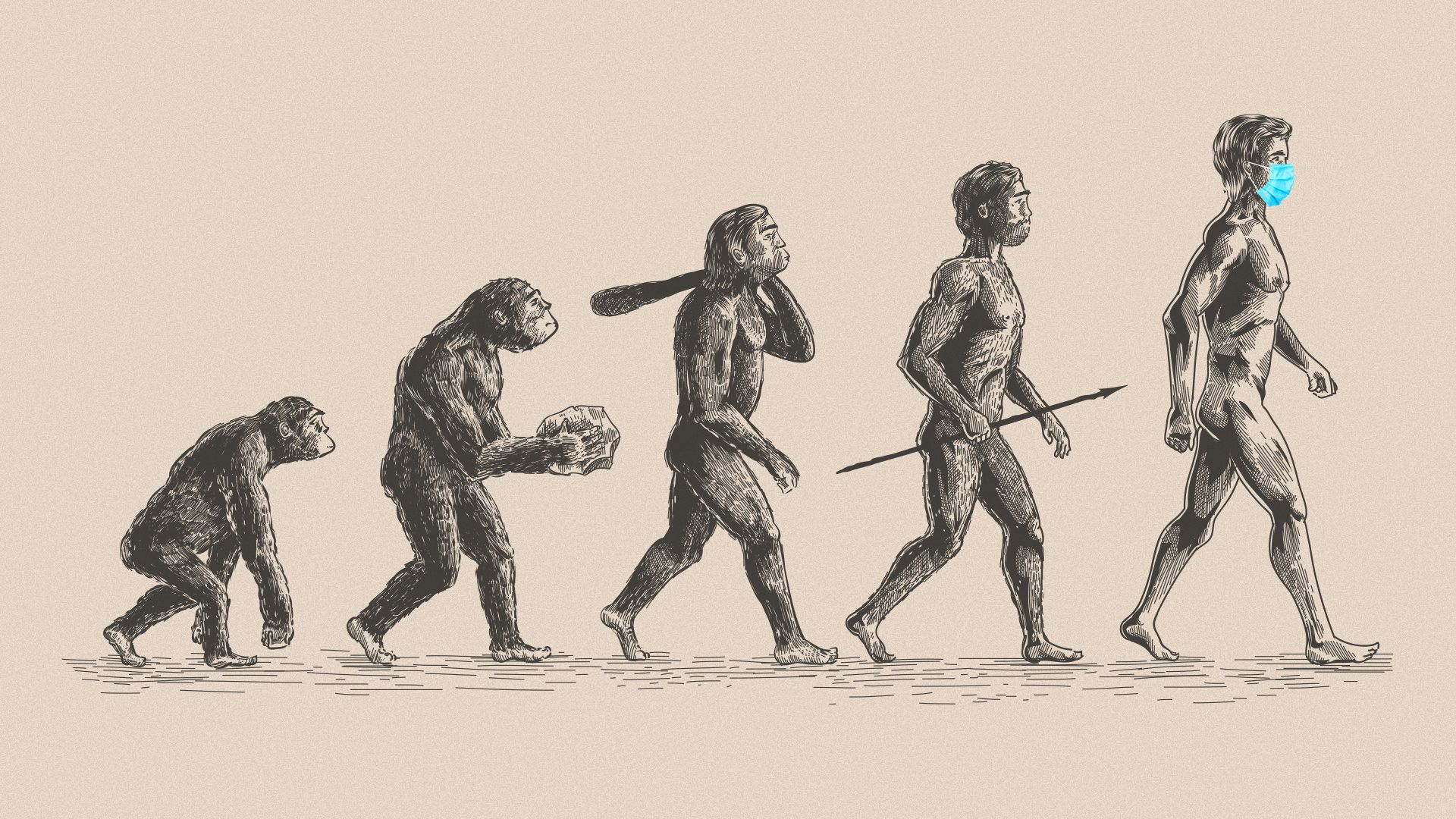

Illustration: Aïda Amer/Axios

Humans face unprecedented peril from new technologies and from our own actions, according to a new book by Toby Ord. By Ord's reckoning, humanity has a 1-in-6 chance of suffering an existential catastrophe this century.

Why it matters: The sheer havoc COVID-19 has wreaked on our globally connected economy and our clear failure to prepare for such a low-probability but high-consequence threat don't bode well for a future where existential risk will intensify.

Toby Ord is a moral philosopher at Oxford University, where he focuses on existential risk — catastrophes so huge they could plausibly threaten the future of humanity. His new book, "The Precipice: Existential Risk and the Future of Humanity," explores why that risk is on the rise and what we can do in this moment of danger to safeguard our future.

What's happening: My initial conversation with Ord took place in early March, just as COVID-19 was beginning to spread in earnest to Europe and the U.S. I checked back with him last week, by which time the outbreak had become a pandemic and humanity was clearly facing something it hadn't experienced in decades or longer.

- COVID-19 isn't a plausible existential threat, according to Ord. As diseases go, it doesn't compare to mass killers like the plague or the 1918 flu — yet we have fumbled the response.

"Humanity mostly learns through trial and error. But this only really works when we can still feel the sting of the last error, when it is within a couple of election cycles, or sometimes generations. When the last time is no longer vivid in our memories it is very hard to maintain the political will to keep investing in our defenses. This amnesia makes it extremely difficult to defend against once-in-a-century events, like COVID-19, despite how important they are, and the fact that they were predicted by experts." (Toby Ord, Oxford University)

The big picture: While we may picture world-ending threats in the form of an incoming asteroid or something similarly cosmic, Ord's view is that the much bigger dangers are the risks humanity introduces into the world: nuclear war, climate change, bioengineered pandemics and out-of-control AI.

- Such human-made, technological risks are new and getting worse, as climate change intensifies, nuclear tensions increase and AI and genetic engineering break new barriers. That means if we want to keep humanity safe, as Ord told me recently, "current and future anthropogenic risks are where most of our efforts should be devoted."

Of note: Those efforts, so far, amount to little — Ord estimates the world spends more on ice cream than it does on preventing existential risks.

- He believes a bioengineered pandemic or out-of-control AI presents the greatest risk of causing not just catastrophe, but plausible human extinction.

"The Precipice" is a fascinating book, one that showcases both the knowledge of its author and his humanity. Writing a book about the plausible end of the world can be depressing — as I can say from my own experience — but Ord makes it clear success means not just surviving for another day, but keeping the door open to a much better future.

- "This is the beginning of one of the most important periods of our history," Ord said. "And I think we will rise to these challenges and we'll get our house in order."

The bottom line: As the stakes of existential risk rise, we won't always get the chance to learn from our mistakes. So we had better take all the lessons we can from the pandemic while we still can.