Axios Tampa Bay

January 20, 2026

Good morning! We're here with a special newsletter sharing how to stay ahead of AI tools our youngest generations are using.

- But first, we'll explore Gov. Ron DeSantis' proposal to rein in AI companies and give parents greater control.

🌧️ Today's weather: Slight chance of rain showers, with a high of 68 and a low of 55.

Sounds like: "Mr. Roboto," Styx.

Today's newsletter is 1,069 words — a 4-minute read.

1 big thing: Inside Florida's push to regulate AI

Gov. Ron DeSantis wants Florida to regulate AI — and his proposal has already drawn criticism from President Trump and Big Tech.

Why it matters: State lawmakers have sought to rein in everything from social media to porn websites, efforts that have resulted in litigation and, in some cases, led companies to block access across the entire state.

Driving the news: Senate Bill 482 would give parents the legal right to supervise, access, limit and control their children's use of artificial intelligence.

- It would also prohibit state or local government agencies from using AI technology, software or products provided by an entity owned by a "government of concern," like China.

- Plus, companies would be required to notify consumers when they are interacting with AI chatbots.

Zoom in: The bill runs afoul of an executive order Trump signed last month that called for AI to be regulated exclusively at the federal level. It also builds on prior legislation passed in Florida.

- In 2024, state lawmakers passed legislation requiring political advertisements that use AI-generated images, video, audio or text to inform viewers of their use.

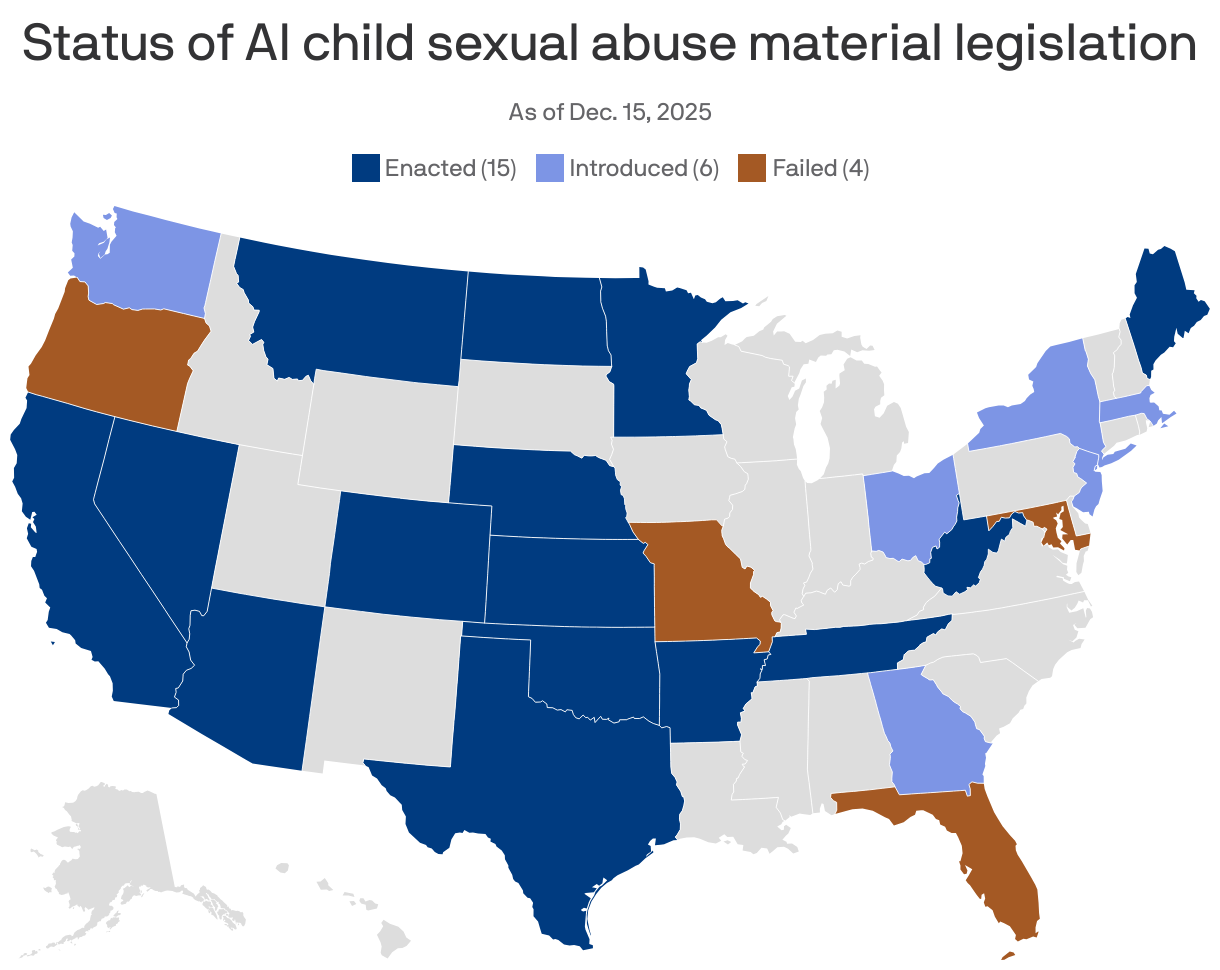

- Then, in 2025, state lawmakers made it a third-degree felony to "willfully and knowingly" create, solicit or possess AI-generated pornographic images or videos of someone without their consent.

Friction point: The Computer and Communications Industry Association, which represents Apple, Google and Meta and has challenged Florida's law that limits social media access for children, opposed the bill.

- "Artificial intelligence systems are developed, trained, and deployed on a national and global scale," the group wrote in a letter.

- "Fragmented state laws make it challenging for a company to deploy more features and services in a particular state, and risk undermining online free expression," it went on.

What's next: The bill has two committee stops before it reaches the Senate floor.

2. "The new imaginary friend"

Screens are winning kids' attention, and now AI companions are stepping in to claim their friendships, too.

Why it matters: The AI interactions kids want are the ones that don't feel like AI, but instead feel human. That's the most dangerous kind, researchers say.

State of play: AI companions can give children and teens the impression that they can not only replace human relationships but are better than them, Pilyoung Kim, director of the Center for Brain, AI and Child, told Axios.

- In a worst-case scenario, a child with suicidal thoughts might choose to talk with an AI companion over a loving human or therapist.

The latest: Aura, the AI-powered online safety platform for families, called AI "the new imaginary friend" in its State of the Youth 2025 report.

- Children reported using AI for companionship 42% of the time.

- Just over a third of those chats included violent themes, and about half of those instances also involved sexual role-play.

Even with safety protocols in place, it's not hard to skirt protections by opening a new account with an older age, Kim found while testing OpenAI's new parental controls with her 15-year-old son.

OpenAI told Axios it's in the early stages of an age prediction model, in addition to its parental controls, that will tailor content for users under 18.

- "We have safeguards in place today, such as surfacing crisis hotlines, guiding how our models respond to sensitive requests, and nudging for breaks during long sessions, and we're continuing to strengthen them," OpenAI spokesperson Gaby Raila told Axios.

The bottom line: The more human AI feels, the easier it is for kids to forget it isn't.

3. Slow-moving policies, fast-moving bots

Kids' AI habits are outpacing adult oversight, raising concerns about privacy, development and online safety.

By the numbers: Seven in 10 teens used generative AI last year, and 83% of parents said schools haven't addressed it, a Common Sense Media survey found.

- A 2025 Pew survey shows that among teens who reported using chatbots, about 3 in 10 do so every day.

State of play: Conversations about children's safety and AI are just now coming to the forefront.

- OpenAI launched parental controls this fall.

- Character.AI launched "parental insights" in March and then tightened them in October, saying users under 18 won't be allowed to have open-ended chats.

What they're saying: "There really does need to be more overarching policy to move the needle towards safer online experiences for kids, including AI," Tiffany Munzer, a developmental behavioral pediatrician, tells Axios.

- Munzer is working on the American Academy of Pediatrics' AI policy. It's expected to be published at the end of 2026.

4. AI fuels faster abuse

The National Center for Missing & Exploited Children's CyberTipline saw a 1,325% increase in reports involving generative AI from 2023 to 2024.

Why it matters: AI tools are being weaponized to accelerate and scale child exploitation tactics.

Catch up quick: In late 2023, NCMEC noticed that blackmailing children was getting a lot faster, Fallon McNulty of NCMEC's exploited children division told Axios.

- Previously, interactions lasted days or even years. Now, financial sextortion (using nude images to coerce someone to send money) happens within hours.

- Children who have never sent photos are being contacted with sexually explicit images created using AI.

- The scammer will say: "No one is going to believe this isn't you. You might as well do what I say." It looks scary real, McNulty says.

Education is critical to protecting families, McNulty says.

5. The Pulp: GOP commissioners skip MLK proclamation

✍️ Hillsborough commissioners Donna Cameron Cepeda, Christine Miller and Joshua Wostal, all Republicans, didn't sign a Martin Luther King Jr. Day proclamation recognizing the Tampa Organization of Black Affairs. (Florida Politics)

🏥 Florida eased regulations on nursing schools in 2009 to address a statewide shortage. The change spawned for-profit institutions that left many graduates unable to pass the exam needed to enter the profession. (Tampa Bay Times)

🏨 ICYMI: The Tampa City Council voted down a proposal last week to turn the historic Mirasol apartments on Davis Islands into a boutique hotel. (Fox News 13)

🥳 Yacob is taking Maya out for an early birthday celebration.

🥶 Kathryn is thawing out from a weekend trip to Tallahassee for a friend's baby shower.

This newsletter was edited by Shane Savitsky and Jeff Weiner.
