Axios San Diego

February 09, 2026

Good morning, San Diego! We're here with a special newsletter on how to stay ahead of the AI tools our youngest generations are using.

- Just as internet access in the '90s and the smartphones that followed changed prior generations, kids are using AI in ways that could change them forever.

Today's weather: Coast — sunny, high 68; Inland — sunny, high 78.

Situational awareness: Mayor Todd Gloria announced that beginning March 2, more Balboa Park lots will be free for San Diego residents and parking will again be free after 6pm. Residents must register their vehicles online.

🎂 Happy belated birthday to our Axios San Diego member Antoinette Knudsen!

Today's newsletter is 1,260 words — a 5-minute read.

1 big thing: AI is changing childhood. The guardrails aren't ready

Most teens now use generative AI, even as parents and schools struggle to keep up with guidance on how to keep kids safe.

Why it matters: Kids' AI habits are outpacing adult oversight, raising concerns about privacy, development and online safety.

By the numbers: Seven in 10 teens used generative AI last year, and 83% of parents said schools haven't addressed it, a Common Sense Media survey found.

- A 2025 Pew survey shows that among teens who reported using chatbots, about 3 in 10 do so every day.

State of play: Conversations about children's safety and AI are just now coming to the forefront.

- OpenAI launched parental controls only this fall.

- Character.AI launched "parental insights" in March and then tightened them in October, saying users under 18 won't be allowed to have open-ended chats.

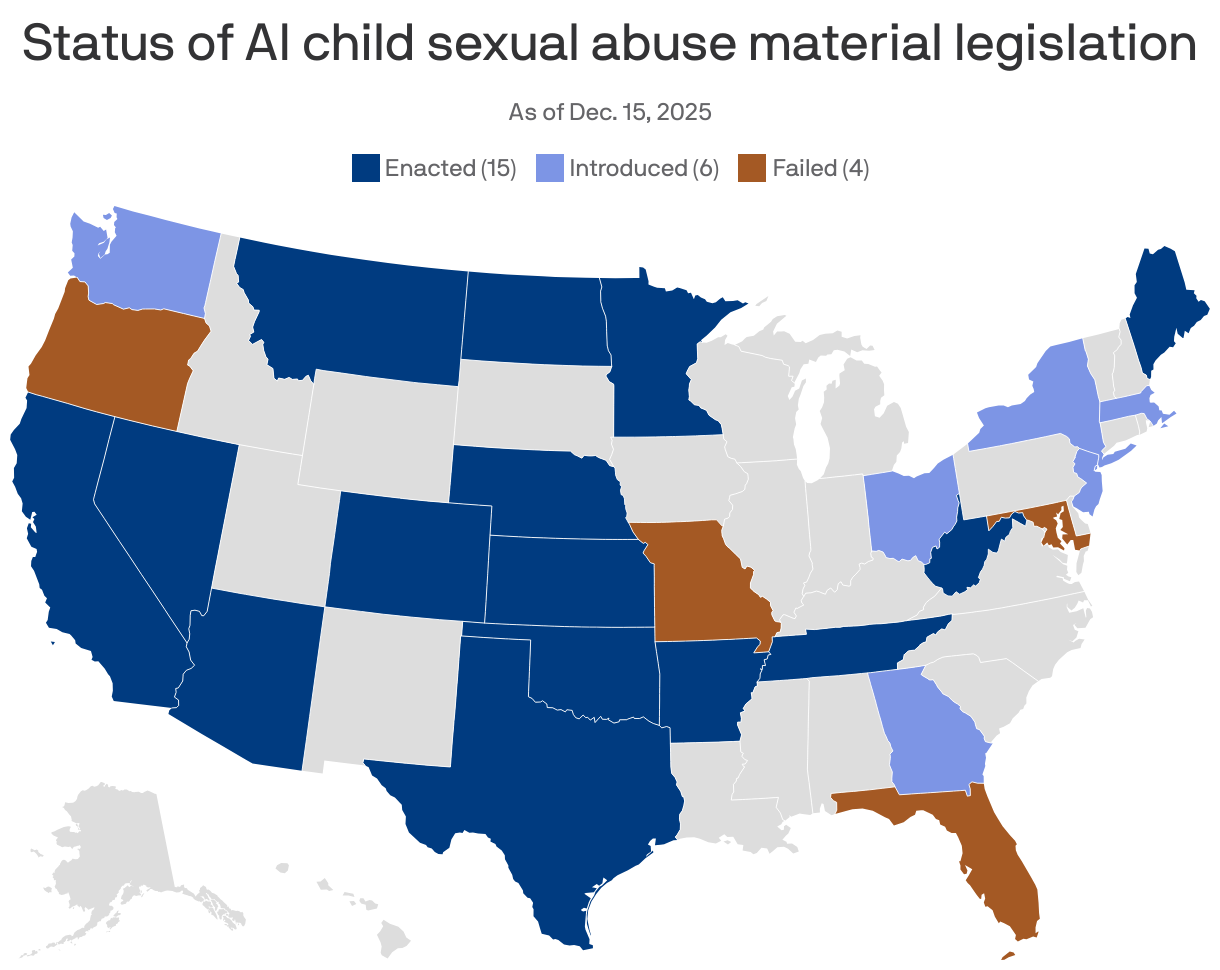

On the policy front, the landscape recently shifted.

- Trump signed an executive order to override state AI laws — including those aimed at protecting children — in favor of a single national framework. The move could weaken or delay emerging state protections, like California's, and sets up high-stakes legal battles.

What they're saying: "There really does need to be more overarching policy to move the needle towards safer online experiences for kids, including AI," Tiffany Munzer, a developmental behavioral pediatrician, tells Axios.

- Munzer is working on the American Academy of Pediatrics' AI policy, which is expected to be published at the end of 2026.

Flashback: Kids' online safety has been a flashpoint since the early days of the internet.

- A 1998 FTC survey found nearly 90% of kids' websites collected personal data — a catalyst for the Children's Online Privacy Protection Act and the Children's Internet Protection Act.

The bottom line: What we're protecting children from keeps shifting.

- Early worries centered on nude photos, data-hungry sites and chatroom strangers. The 2010s brought smartphones, screen time, cyberbullying and algorithmic rabbit holes. Today, concerns extend to how AI and other digital interactions may shape kids' brains, attention and emotional development.

- Families are navigating these questions now, even as guidance from policymakers, researchers and tech companies catches up.

2. AI's darkest turn: Supercharging child sextortion and self-harm

The National Center for Missing & Exploited Children's CyberTipline saw a 1,325% increase in reports involving generative AI from 2023 to 2024.

Why it matters: This dramatic increase shows how AI tools are being weaponized to accelerate and scale child exploitation tactics.

- It's also a sign of how fast gen AI is scaling overall.

Catch up quick: In late 2023, NCMEC noticed that blackmailing children was getting a lot faster, Fallon McNulty of NCMEC's exploited children division told Axios.

- Previously, interactions lasted days or even years. Now, financial sextortion (using nude images to coerce someone to send money) with a teen could be a matter of hours.

- And children who have never spoken to a scammer, sent a photo or shared personal information are being contacted with sexually explicit images created using AI and a profile picture. The scammer will say: "No one is going to believe this isn't you. You might as well do what I say." It looks scary real, McNulty says.

What we're hearing: Now, "the most alarming trend that we are seeing is reports related to sadistic online exploitations," McNulty said. Children are coerced to engage in self-harm, self-mutilation, suicide, mass violence and harm to animals. The motivation offenders have here is simply to gain fame in a group by causing chaos, she said.

- First interactions often occur on gaming platforms like Roblox or over social media and then move into a private messaging environment.

Educating parents and children about these dangers is key to protecting families, McNulty says. NCMEC resources include:

- Take It Down: A free, anonymous service to remove or stop the online sharing of nude, partially nude, or sexually explicit images or videos taken of someone when they were under 18 years old.

- No Escape Room: An interactive experience for parents and guardians to see how fast this crime can happen.

- More resources can be found at NetSmartz, NCMEC's online child safety program.

3. AI companions: "The new imaginary friend" redefining children's friendships

Screens are winning kids' time and attention, and now AI companions are stepping in to claim their friendships, too.

Why it matters: The AI interactions kids want are the ones that don't feel like AI, but instead feel human. That's the kind researchers say is the most dangerous.

State of play: When AI says things like, "I understand better than your brother ... talk to me. I'm always here for you," it gives children and teens the impression that AI companions not only can replace human relationships but are better than them, Pilyoung Kim, director of the Center for Brain, AI and Child, told Axios.

- In a worst-case scenario, a child with suicidal thoughts might choose to talk with an AI companion over a loving human or therapist who actually cares about their well-being.

The latest: Aura, the AI-powered online safety platform for families, called AI "the new imaginary friend" in its State of the Youth 2025 report.

- Children reported using AI for companionship 42% of the time, according to the report.

- Just over a third of those chats involve violence, and half the violent conversations include sexual role-play, the survey responses show.

Friction point: AI companies are exploiting children, some parents say.

- Parents of a 16-year-old who died by suicide testified before Congress this fall about the dangers of AI companion apps, saying they believe their son's death was avoidable.

- A Texas mom is suing Character.AI, saying her son was manipulated with sexually explicit language that led to self-harm and death threats.

OpenAI told Axios it's in the early stages of an age prediction model, in addition to its parental controls, that will tailor content for users under 18.

- Character.AI, which removed open-ended chat for kids under 18, similarly is using "age assurance technology."

What's next: Purdue University professor of psychological sciences Louis Tay is leading a three-year study to understand the unhealthy attachment that exists between chatbots and some users, particularly teens.

- Still in its early stages, the research is being funded by a $3.6 million grant from the John Templeton Foundation and will be conducted alongside Tay's collaborators from the University of Toronto.

- The team will design open-access AI conversational agents and collect data to track the longer-term impact on well-being, health and relational functioning.

- Their goal is to understand how AI can enhance the human experience rather than replace it.

What they're saying: "Loneliness and lack of social connection are at record highs globally. And now, AI agents are easily accessible," Tay said in a statement.

- "For many, they offer an always-available, nonjudgmental ear. People are increasingly turning to these systems as convenient substitutes for emotional support, especially when genuine social connections feel out of reach."

The bottom line: The more human AI feels, the easier it is for kids to forget it isn't.

👋🏻 Kate and Claire will be back tomorrow!
