Axios Future of Cybersecurity

January 13, 2026

Happy Tuesday! Welcome back to Future of Cybersecurity.

🍜 Shoutout to everyone who shared their Bay Area restaurant recommendations last week. Keep them coming!

📬 Have thoughts, feedback or scoops I should be chasing? [email protected].

Today's newsletter is 2,018 words, a 7.5-minute read.

1 big thing: National labs quietly push AI into cyber defense

The secretive national laboratories scattered across the country are now making public breakthroughs in AI-enabled cyber defense.

Why it matters: National laboratories — including Los Alamos, Sandia and Lawrence Livermore — are behind some of the biggest advancements you've never heard of in cyberspace.

- Even hearing about a new project likely indicates that the U.S. government is making more headway in the race to fend off adversarial AI attacks than it's showing.

Driving the news: Scientists at the Pacific Northwest National Laboratory (PNNL) in Washington state built a generative AI-powered system that allows defenders to quickly simulate the cyberattacks targeting their organizations.

- Reconstructing a cyberattack is a key part of investigating and remediating the threat. Defenders need to retrace their adversaries' footsteps to understand how they got in and what vulnerabilities must be addressed.

How it works: Researchers used both Anthropic's Claude and MITRE's open-source Caldera simulation tool to build the new system, known as Aloha.

- A security analyst starts by inputting a plain-language description of a specific attack on their network or a hypothetical attack they want to test, including what happened across their systems.

- The AI system, powered by Claude, then draws on its own knowledge base to generate a detailed representation of the attack sequence, expanding the plain-language description into an executable play-by-play.

- Caldera takes those steps and runs a simulation in a contained environment against a test network, effectively emulating how the attack would play out under different conditions.

- Aloha then observes the simulation in real time, evaluating each step as it runs and determining whether it achieved its intended effect.

- If the simulation hits a wall, Aloha can automatically adjust the next action and keep the simulation moving forward.

- The security analyst can then tweak the conditions — such as what defensive cyber tools are in place — and keep replaying the simulation until they're satisfied with the outcome.

The intrigue: Traditionally, rebuilding an attack chain requires weeks of manual scripting and expert labor. Aloha compresses that timeline dramatically.

- "The technology speeds up the defender's response so that the cybersecurity expert doesn't need to carry out quite as many operations themselves," Loc Truong, who led the PNNL team behind Aloha, said in a statement. "It's click and go."

- Aloha also lowers the barrier for organizations that lack the budget and staff expertise required to run traditional attack-emulation tools.

The big picture: Aloha is arriving as both well-intentioned and malicious hackers embrace AI in their work.

- Anthropic uncovered evidence late last year of Chinese state-sponsored actors using Claude to try to break into about 30 global organizations. Ransomware gangs are slowly but surely automating their entire kill chain.

- Even at last year's DEF CON hacker convention, nearly every team in the conference's Capture the Flag contest was using AI to assist in its attacks, PNNL cybersecurity researcher Kristopher Willis said in a statement.

Between the lines: The national labs' cyber teams have been at the heart of AI cyber research for decades, and they're just as involved in the current wave of generative AI advancements.

- Researchers across several national laboratories, including those that participate in the country's nuclear program, have been using OpenAI's models in their work for years.

- OpenAI and Los Alamos National Laboratory have also been working together to evaluate the safety of multimodal AI systems.

- Anthropic has been working with the National Nuclear Security Administration to distinguish between people using its models for legitimate research and those abusing them to uncover nuclear secrets.

What to watch: The PNNL team is now building on findings from a recent DARPA competition at DEF CON to expand Aloha so it can automatically test newly discovered vulnerabilities in a system and gauge how severe they are, Willis told Axios.

- "We want to take Aloha to understand proof of vulnerabilities and make them into proof of concepts," Willis said. "That way we can also either create a remediation or even test the remediation."

2. Exclusive: WitnessAI nabs $58M to secure AI

WitnessAI has raised $58 million from high-profile investors, including Ashton Kutcher's Sound Ventures, Fin Capital, Qualcomm Ventures and Samsung Ventures, as it expands into securing AI agents, the company first shared with Axios.

Why it matters: Securing agents is the next battleground in cybersecurity, with defenders racing to lock down nonhuman identities before hackers get hold of them.

Zoom in: WitnessAI offers an AI security and governance platform that helps companies control what data flows into internal AI tools, including how AI agents ingest data and move through corporate systems.

- The platform also helps companies monitor and control these activities based on whatever security and data privacy compliance requirements they have to follow.

- "People are using other people's AI, customers are using company chatbots, agents are coming along — all of that problem is going to bleed together," WitnessAI CEO Rick Caccia told Axios. "It's going to be like 'people talking to agents, to apps, to models,' and so we built a product that kind of covers all of it."

Between the lines: The company, which emerged from stealth in 2024, is betting that agent security will soon be unavoidable as enterprises push toward autonomy.

- WitnessAI already works with top global airlines, automakers, financial services firms, utilities and telcos, Caccia said.

- "If we don't enable the safe use of AI and agents, we should expect data loss and manipulation on a scale we've never seen," Nicole Perlroth, founding partner of Silver Buckshot Ventures, which participated in the round, told Axios.

The big picture: Investors are piling into AI security startups focused on agentic threats, as my colleague Chris Metinko wrote over the holidays.

- PitchBook estimates that agentic cybersecurity companies raised nearly $250 million across almost two dozen deals last year, as of Dec. 15.

What they're saying: "If adversaries are going to look at being able to get into your network, your data, your most sensitive information, they're going to come after agents," Gen. Paul Nakasone, former head of the NSA and Cyber Command and a board member at WitnessAI, told Axios.

Reality check: Agentic adoption is still early. Around 1 in 4 respondents in a recent McKinsey study said their organization was scaling an agentic AI system in a meaningful way.

What's next: Caccia is eyeing international expansion, including potential distribution deals with managed service providers and internet providers.

3. Chinese disinformation targets... China

Thirty Chinese companies are tied to a recent operation pushing fake news stories on websites posing as the New York Times, the Guardian, the Wall Street Journal and several other legitimate media outlets, researchers at Graphika said in a report today.

Why it matters: This operation targets a different audience than the typical China-based disinformation operation: customers in China, whom Chinese businesses want to convince that their operations are bigger overseas than they actually are.

- Graphika also noted that "several domains are catered to English-speaking audiences outside of China."

The big picture: Spoofing legitimate Western news sites is an increasingly common tactic for adversarial disinformation operatives — making it even harder for social media users to discern between legitimate and fake news sources.

Zoom in: Researchers at Graphika uncovered a wide-reaching campaign in which Chinese marketing and PR companies sold access to these fake sites, with clients buying stories that could help them land contracts and win new business.

- A significant subset of the websites spoofed English-language media outlets like the NYT and the Los Angeles Times.

- Another group of domains mimicked Chinese state media.

How it worked: Chinese marketing and PR firms placed content on these fake news sites, which mimicked real outlets in name and design.

- Their clients then circulated screenshots and links on Chinese platforms, claiming they'd been covered by influential media outlets.

- In some cases, known Chinese influence operations also shared these stories across their global disinformation networks.

By the numbers: The companies operated across a network of 43 domains and 37 subdomains, according to Graphika.

- The sites hosted content tied to 30 Chinese companies and three China-based PR professionals.

- Each domain had technical links back to two China-based companies — Haixun and Haimai — that Google previously found operating a similar network of sites.

Yes, but: While the fake news sites borrowed logos and web designs from the legitimate sources, they still bore little overall resemblance to the actual websites.

What to watch: Foreign adversaries are already experimenting with generative AI to enhance their campaigns.

- That could make these spoofed news ecosystems faster, cheaper and harder to detect, especially as they blur the line between commercial promotion and state-backed disinformation.

4. U.S. loses access to international cyber orgs

The U.S. is leaving three international cybersecurity organizations following President Trump's executive order last week withdrawing from 66 international organizations, conventions and treaties.

Why it matters: The move is the latest crack in how the U.S. coordinates cybersecurity policy with allies.

- The second Trump administration has been steadily pulling the country back from international cooperation, including by declining to sign the UN cybercrime treaty last fall.

The big picture: Cyber threats routinely cross borders, forcing governments to coordinate policy, norms and enforcement.

Zoom in: Under the executive order, the U.S. is withdrawing from the Global Forum on Cyber Expertise (GFCE), the European Centre of Excellence for Countering Hybrid Threats (Hybrid CoE), and the Freedom Online Coalition (FOC).

- The GFCE has worked on a myriad of issues, including cybercrime, the workforce shortage and critical infrastructure protection.

- Hybrid CoE brings together its member states to tackle threats to democracies, such as cybercrime, online propaganda and digital espionage.

- And the FOC works with dozens of members to support internet freedom, including free expression, association, assembly and digital privacy.

Reality check: None of these organizations coordinates or plays a role in global cyber operations or law enforcement takedowns — which the U.S. is still participating in.

- However, they play an outsize role in aligning policy, norms and best practices among allies.

What they're saying: In a statement, the GFCE said it "respects the decision of the U.S. government" and expressed appreciation for the country's 10 years of "extensive commitment and constructive involvement."

- Kirsi Pere, a spokesperson for Hybrid CoE, said the group is working to clarify what the decision means in practice. It would be "very unfortunate" if the U.S., a founding member, withdrew its cooperation, she said.

- The FOC did not respond to a request for comment.

5. Catch up quick

@ D.C.

🇮🇷 President Trump is being briefed on several options for retaliating against Iran if the regime kills protesters, including offensive cyberattacks. (Axios)

🔌 A look at the signs that make former military officials think a cyberattack was behind the blackouts in Venezuela during the Maduro operation. (Axios)

📲 ICE now has a way to surveil mobile phones in an entire neighborhood and track their movements over time, according to internal documents. (404 Media)

@ Industry

💰 Cyera's $400 million Series F round is just the latest headline-grabbing deal in the data security space. (Axios Pro)

💸 CrowdStrike is buying identity security startup SGNL for $740 million as part of its efforts to help customers fend off AI threats. (Reuters) It's also acquiring browser security company Seraphic Security. (Forbes)

🧽 OpenAI has been asking contractors to upload projects from past jobs to evaluate the performance of AI agents — and leaving it up to the contractors to scrub any confidential information in those documents. (Wired)

@ Hackers and hacks

⚠️ Attackers are exploiting two zero-day flaws found in Trend Micro's Apex One Management Console on Windows systems. (Dark Reading)

👀 The Cambodian government has extradited to China the alleged leader of one of Asia's largest conglomerates, who is also wanted in the U.S. (Wall Street Journal)

🚀 The European Space Agency suffered a second data breach last week, with one cybercriminal gang claiming it stole 500 gigabytes of sensitive data. (The Register)

6. 1 fun thing

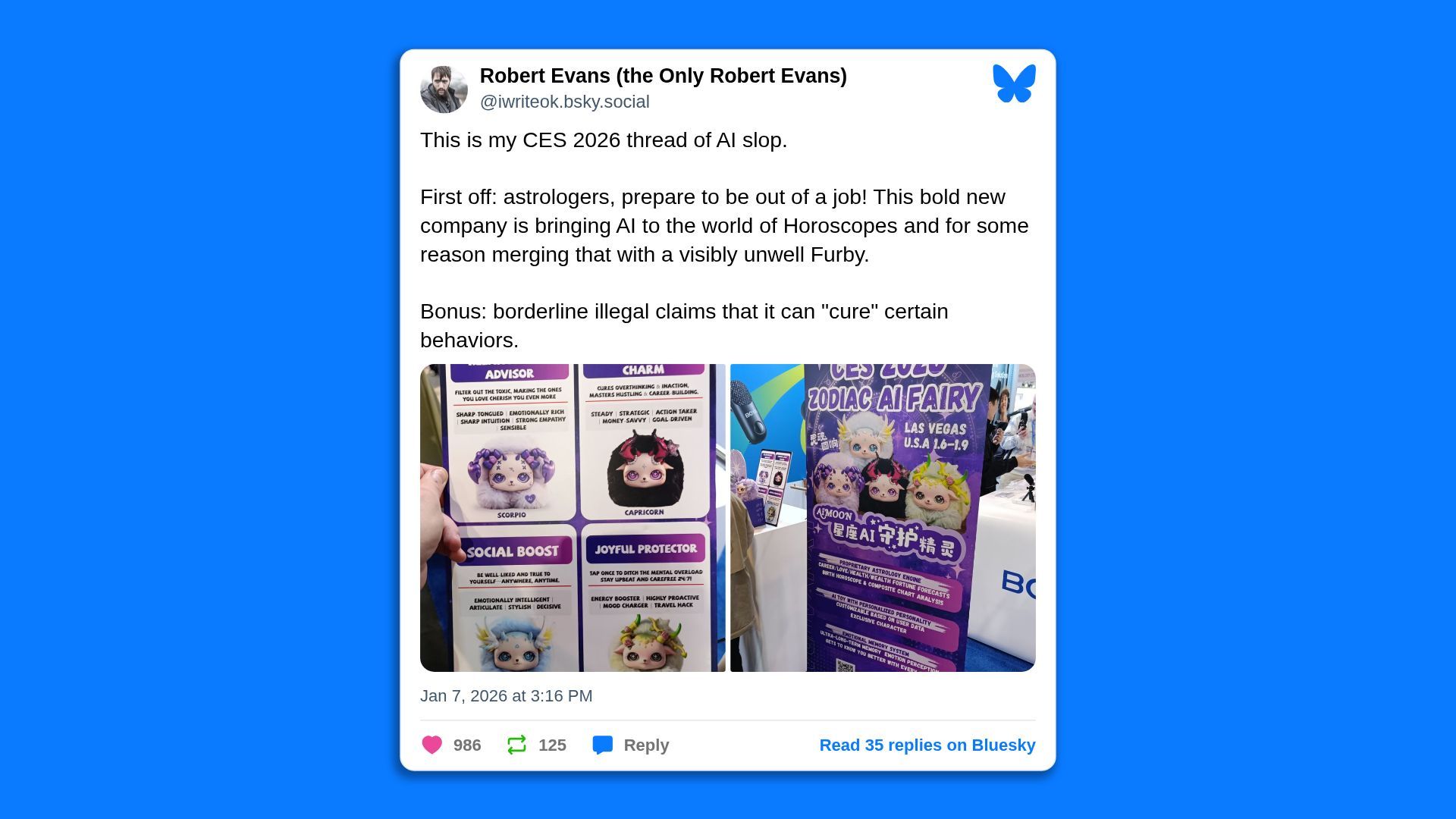

💫 🌌 Seeing a lot of shock that CES featured several astrology AI apps!

- I'm sorry, but if you aren't already using ChatGPT for (fun!) astrology lessons... what's taking you so long?

☀️ See y'all next week!

Thanks to Dave Lawler for editing and Khalid Adad for copy editing this newsletter.

If you like Axios Future of Cybersecurity, spread the word.