Axios Future

November 27, 2018

Welcome back to Future. Thanks for subscribing.

Consider inviting your friends and colleagues to sign up. And if you have any tips or thoughts on what we can do better, just hit reply to this email or shoot me a message at [email protected].

Okay, let's start with ...

1 big thing: The quarrelsome field of AI

Illustration: Sarah Grillo/Axios

Machines as intelligent as humans will be invented by 2029, predicts technologist Ray Kurzweil. "Nonsense," retorts roboticist Rodney Brooks. By that time, he says, machines will only be as smart as a mouse. As for humanlike intelligence — that may arrive by 2200.

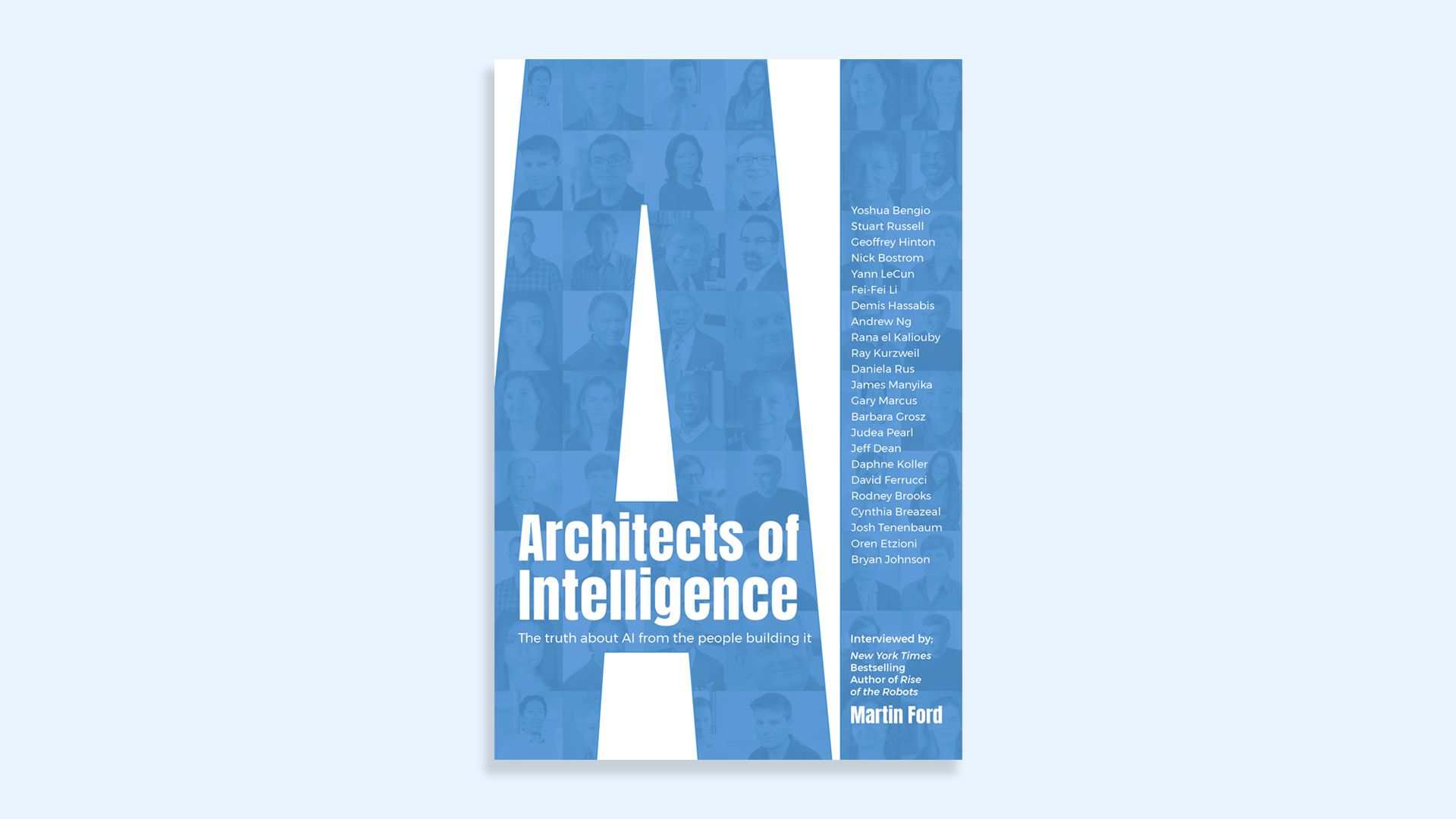

- Between these two forecasts — machines with human intelligence in 11 or 182 years — lies much of the rest of the artificial intelligence community, a disputatious lot who disagree on nearly everything about their field, Martin Ford, author of a new book on AI, tells Axios.

Writes Axios' Kaveh Waddell: Ford is the author of the best-selling and well-reviewed "Rise of the Robots," a dystopian 2015 tract on the sorry coming state of working humans in the new age of automation. Today, he follows it up with "Architects of Intelligence," a collection of interviews he conducted over the last year with almost two dozen of the West's most illustrious artificial intelligence hands.

His main takeaway: "This is an unsettled field," Ford told Kaveh yesterday. "It's not like physics."

- AI may seem to be a smooth-running assembly line of startups, products and research projects.

- The reality, however, is a landscape clouded by uncertainty, Ford says. His interviewees could not agree on where their field stands, how to push it forward or when it will reach its ultimate goal: a machine with humanlike intelligence.

Why it matters: The embryonic state in which Ford found AI — so early in its development more than a half-century after its birth that the basics are still up for grabs — suggests how far it has to go before reaching maturity. On his blog, Brooks has said that AI is only 1% of the way toward human intelligence.

The big picture: Research in the field has progressed in fits and starts since the term "artificial intelligence" was coined in the 1950s by American computer scientist John McCarthy, alternating between periods of hibernation and feverish activity.

- The current frenzy is propelled by the wild success of deep learning, an AI technique that excels at finding patterns and identifying objects in photographs.

- Few of Ford's subjects said deep learning will arrive at humanlike intelligence on its own — they said something new must be developed to get there. But deep learning aficionados ridicule the alternatives suggested by others.

Kurzweil, a director of engineering at Google, stands out on many fronts, Ford says. For one, he has very little patience for colleagues suffering from "engineer’s pessimism."

- Humanlike intelligence will emerge in an exponential burst of innovation, Kurzweil said, not in linear fashion, as so many others seem to think.

- "What Ray says is correct," says Ford. "Engineer's pessimism is what's at play; the question is who's right" — the pessimist or the optimist.

What's next: Not all was discord. There was a remarkable confluence, for instance, on the most promising coming step.

- Many called for an exploration of "unsupervised learning," in which an AI, like a child, can wander around, poking and prodding, getting into trouble, and meanwhile learning a lot of important stuff about the world.

- That’s a stark departure from current AI training methods, which require reams of labeled data: think photos of cats that are explicitly labeled as cats.

"Today, in order to teach a computer what a coffee mug is, we show it thousands of coffee mugs. But no child’s parents, no matter how patient and loving, ever pointed out thousands of coffee mugs to that child," says Andrew Ng, former AI lead at Google and Baidu.

2. Annals of the new global fiefdoms

Illustration: Sarah Grillo/Axios

As we've been reporting, the U.S. and China seem to be barreling toward a division of the world into two giant, competing fiefdoms, each with largely isolated trade and communications.

Driving the news: In the latest development, reported by the WSJ, the U.S. has been urging carriers in allied countries to stop using communications equipment produced by China's Huawei, which American officials say could contain spying mechanisms.

- But the WSJ reports in a follow-up today that at least one target of this lobbying effort, Papua New Guinea, has rejected the U.S. campaign and is proceeding with a 3,400-mile internet network from Huawei.

Janice Gross Stein, a professor at the University of Toronto, says the U.S.-Australian effort to keep allied countries from using Huawei and ZTE equipment is part of what she calls "rebordering."

- "The U.S. and Australian push to prevent Huawei from gaining access to 5G networks is driven by some genuine security concerns, but they are also consistent with what I described as the 'rebordering of the West,'" Stein tells Axios.

Go deeper: What the fiefdoms may look like

3. AI research goes radio silent

Illustration: Sarah Grillo/Axios

Amid rising worries regarding the development of human-level machine intelligence, a prominent Berkeley research organization has become the first to stop openly publishing all of its findings.

Why it matters: The move by the Machine Intelligence Research Institute is a break from a traditional standard of openness in computer-science research.

We’ve reported before on researchers’ questions about the right amount of openness and transparency when discussing potentially dangerous work. This is the most extreme reaction we’ve seen yet.

- It comes as AI researchers are quietly deliberating how to react to the potential malicious use of AI.

- MIRI worries that open publishing could aid progress toward an unchecked super-intelligent machine.

- Today, AI researchers routinely post their papers first on arXiv, an entirely free and open, non-peer-reviewed repository for scientific papers.

Details: MIRI — which has received funding from AI dystopians like Peter Thiel and Elon Musk’s Future of Life Institute — posted a strategy document on Thanksgiving outlining its new policy of "nondisclosed-by-default research."

- "Most results discovered within MIRI will remain internal-only unless there is an explicit decision to release those results, based usually on a specific anticipated safety upside from their release," wrote MIRI executive director Nate Soares.

"It does seem to me to be useful that an AI research organization has taken this step, if only so that it generates data for the community about what the consequences are of taking such a step," says Jack Clark, OpenAI policy director.

OpenAI, another prominent AI research nonprofit, wrote in its charter that it expects that "safety and security concerns will reduce our traditional publishing in the future."

- Jack Clark, OpenAI’s policy director, said the organization is still in the early stages of fulfilling the goal, but that the questions MIRI is grappling with — when it's best to keep research private — are worth debating.

4. Worthy of your time

Illustration: Rebecca Zisser/Axios

The savior palm oil has unleashed catastrophe (Abrahm Lustgarten — ProPublica & NYT)

The ethics of genetically edited babies (Sam Baker — Axios)

Texas to unleash OPEC's worst nightmare (Javier Blas — Bloomberg)

Cheap labor drives China's AI (Li Yuan — NYT)

Unions in the new age of automation (Sarah O'Connor — FT)

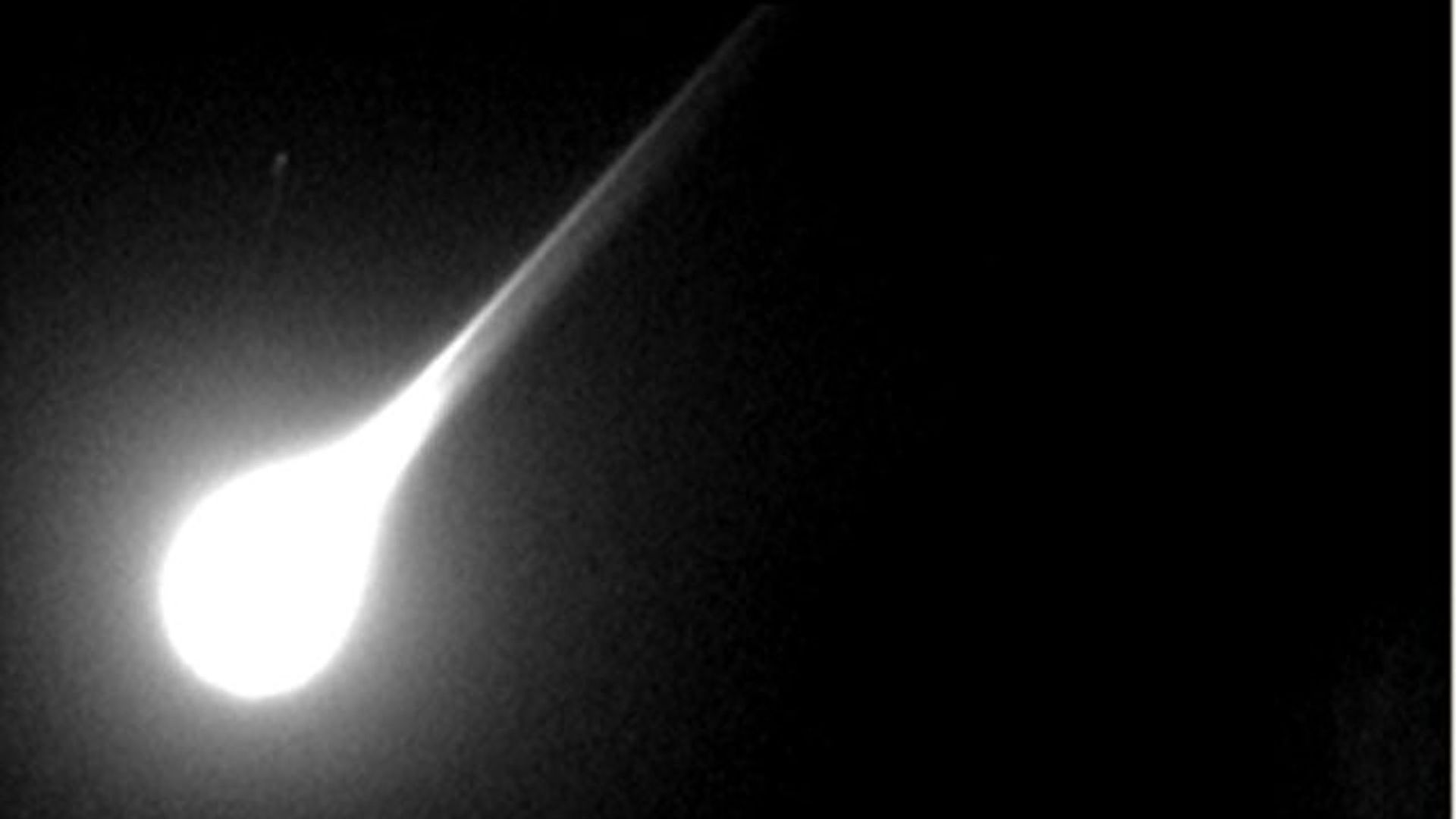

5. 1 crash from space: Death by meteorite

Photo: George Varros and Dr. Peter Jenniskens/NASA/Getty

Some 3,700 years ago, a vibrant collection of farming settlements dotted fertile lands currently known as Jordan. Then, a massive meteorite appears to have struck, destroying everything in a 15-mile-wide circle. So traumatic was the catastrophe that no one resettled there for six or seven centuries, according to a new study.

- Until the suspected meteorite strike, some 40,000–65,000 people lived in the area north of the Dead Sea, called Middle Ghor, Phillip Silvia, a professor at Trinity Southwest University in Albuquerque, tells Science News' Bruce Bower.

- The collapse of the Middle Ghor civilization happened suddenly in the late Bronze Age.

- For instance, pottery that Silvia unearthed seems to have melted into zircon crystals, which "formed within one second at extremely high temperature," Bower reported.
