Axios Codebook

February 28, 2025

😎 TGIF, everyone. Welcome to the last edition of Codebook!

- 📣 Starting Tuesday, Codebook will become Axios Future of Cybersecurity, and it will arrive in your inbox weekly. I'll have more details on our new vibe in the first edition.

- 📬 Until then, have thoughts, feedback or scoops to share? [email protected].

- 📲 If you have a sensitive tip, find me on Signal: @SamSabin.01.

Today's newsletter is 1,417 words, a 5.5-minute read.

1 big thing: Untangling safety from AI security is tough, experts say

Recent moves by the U.S. and the U.K. to frame AI safety primarily as a security issue could be risky, depending on how leaders ultimately define "safety," experts tell Axios.

Why it matters: A broad definition of AI safety could encompass issues like AI models generating dangerous content, such as instructions for building weapons, or giving inaccurate technical guidance.

- But a narrower approach might leave out ethical concerns, like bias in AI decision-making.

Driving the news: The U.S. and the U.K. declined to sign an international AI declaration at the Paris summit this month that emphasized an "open," "inclusive" and "ethical" approach to AI development.

- Vice President JD Vance said at the summit that "pro-growth AI policies" should be prioritized over AI safety regulations.

- The U.K. recently rebranded its AI Safety Institute as the AI Security Institute.

- And the U.S. AI Safety Institute could soon face workforce cuts.

The big picture: AI safety and security often overlap, but where exactly they intersect depends on perspective.

- Experts universally agree that AI security focuses on protecting models from external threats like hacks, data breaches and model poisoning.

- AI safety, however, is more loosely defined. Some argue it should ensure models function reliably — like a self-driving car stopping at red lights or an AI-powered medical tool correctly identifying disease symptoms.

- Others take a broader view, incorporating ethical concerns such as AI-generated deepfakes, biased decision-making, and jailbreaking attempts that bypass safeguards.

Yes, but: Overly rigid definitions could backfire, Chris Sestito, founder and CEO of AI security company HiddenLayer, tells Axios.

- "We can't be flippant and just say, 'Hey, this is just on the bias side and this is on the content side,'" Sestito says. "It can get very out of control very quickly."

Between the lines: It's unclear which AI safety initiatives may be deprioritized as the U.S. shifts its approach.

- In the U.K., some safety-related work — such as preventing AI from generating child sexual abuse materials — appears to be continuing, says Dane Sherrets, AI researcher and staff solutions architect at HackerOne.

- Sestito says he's concerned that AI safety will be seen as a censorship issue, mirroring the current debate on social platforms.

- But he says AI safety encompasses much more, including keeping nuclear secrets out of models.

Reality check: These policy rebrands may not meaningfully change AI regulation.

- "Frankly, everything that we have done up to this point has been largely ineffective anyway," Sestito says.

What we're watching: AI researchers and ethical hackers have already been integrating safety concerns into security testing — work that is unlikely to slow down, especially given recent criticisms of AI red teaming in a DEF CON paper.

- But the biggest signals may come from AI companies themselves, as they refine policies on whom they sell to and what security issues they prioritize in bug bounty programs.

2. Developers evading AI guardrails, Microsoft says

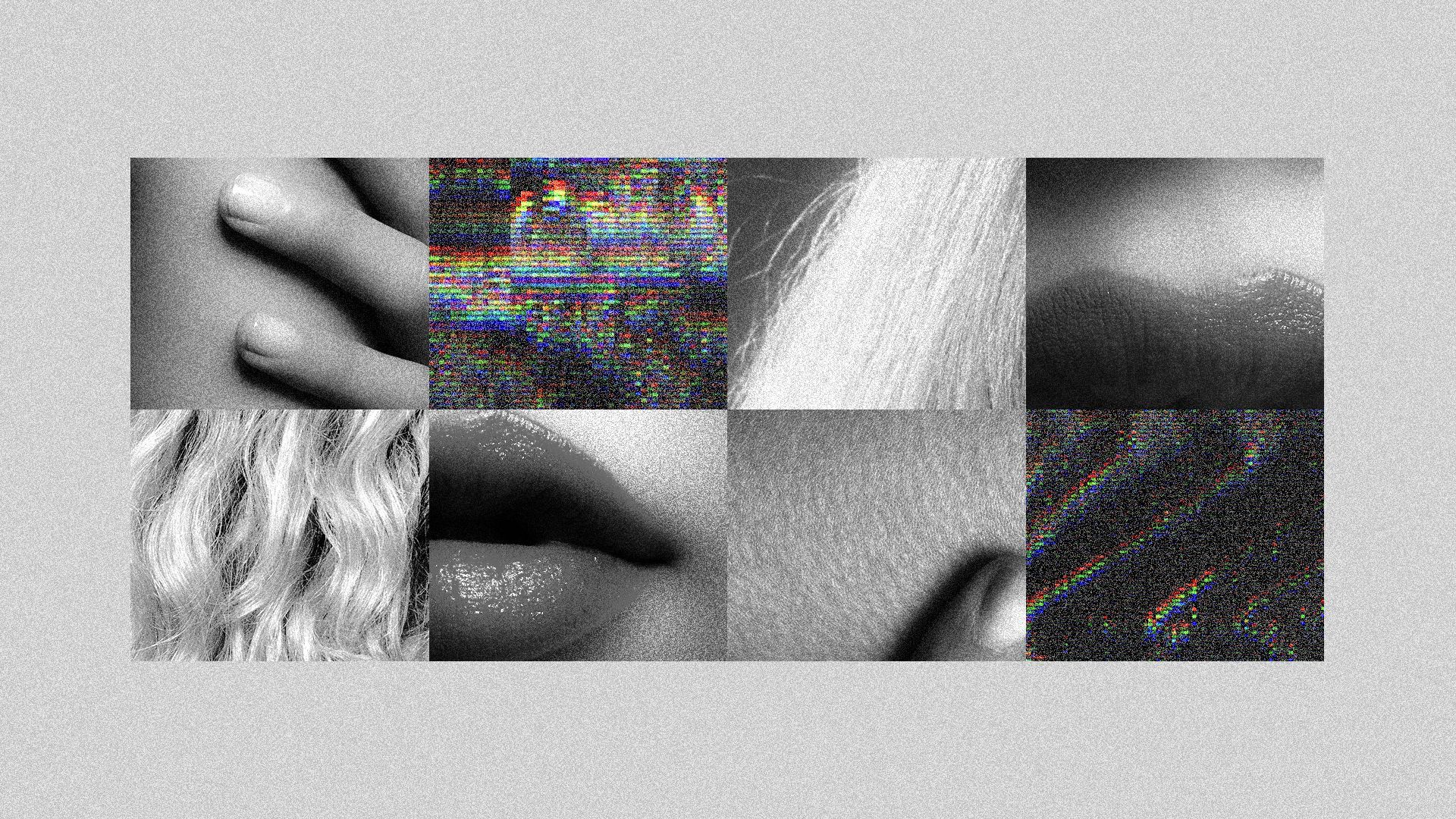

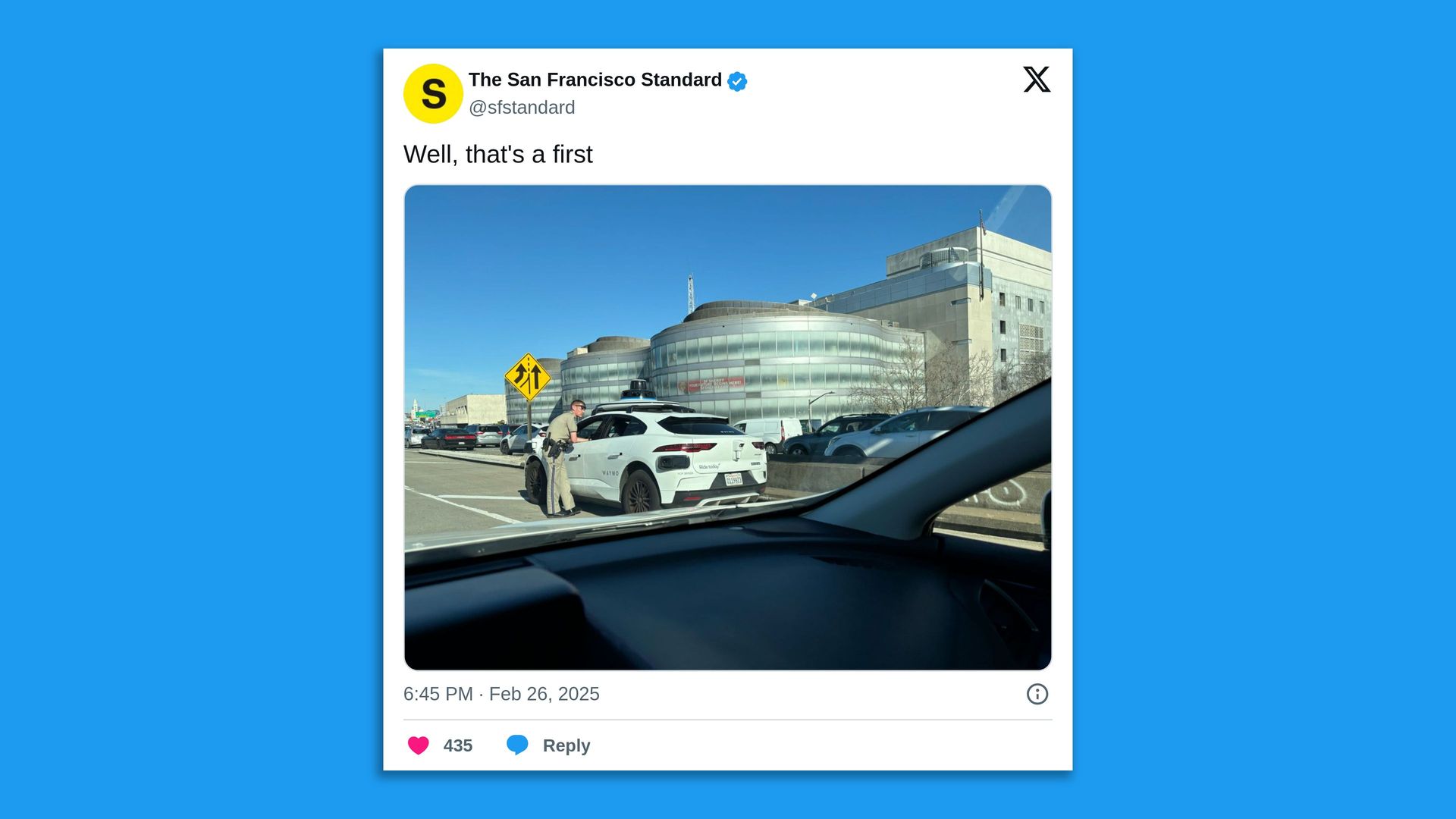

Microsoft has updated a lawsuit to name four developers it says were part of an effort to evade its generative AI guardrails and enable the creation of celebrity deepfakes, among other things.

Why it matters: While Microsoft and others have established systems designed to prevent misuse of generative AI, those protections only work when the technological and legal systems can effectively enforce them.

Driving the news: Microsoft named four developers it says are part of the Storm-2139 global cybercrime network: Arian Yadegarnia, aka "Fiz," of Iran; Alan Krysiak, aka "Drago," of the U.K.; Ricky Yuen, aka "cg-dot," of Hong Kong; and Phát Phùng Tấn, aka "Asakuri," of Vietnam.

- Microsoft said that members of Storm-2139 used compromised customer credentials to hack accounts with access to generative AI services and then bypassed the tools' safety guardrails.

- The individuals then sold access to the accounts, along with "detailed instructions on how to generate harmful and illicit content, including non-consensual intimate images of celebrities and other sexually explicit content."

- Microsoft initially filed suit in December, listing the defendants as John Does. The lawsuit was unsealed in January, at which time Microsoft spoke out publicly.

What they're saying: "We are pursuing this legal action now against identified defendants to stop their conduct, to continue to dismantle their illicit operation, and to deter others intent on weaponizing our AI technology," Microsoft said in a blog post yesterday.

The intrigue: Microsoft said the four named defendants aren't the only participants in the scheme it has identified.

- "While we have identified two actors located in the United States — specifically, in Illinois and Florida — those identities remain undisclosed to avoid interfering with potential criminal investigations," the company said. "Microsoft is preparing criminal referrals to United States and foreign law enforcement representatives."

Between the lines: Microsoft said a court order allowing the company to seize a "website instrumental to the criminal operation" helped both disrupt the scheme and uncover its participants.

- "The seizure of this website and subsequent unsealing of the legal filings in January generated an immediate reaction from actors, in some cases causing group members to turn on and point fingers at one another."

Yes, but: Microsoft said it also led to the doxxing of its lawyers, including the posting of names, personal information and, in some instances, their photographs.

3. The long-lasting impact of cyber job cuts

Cuts to the federal cyber workforce are likely to have long-lasting effects on the government's efforts to recruit and retain future generations of talent, experts told Axios at an event Wednesday.

Why it matters: The cybersecurity industry as a whole already had only enough cyber workers to fill 83% of available jobs, according to federal data.

- The federal government wasn't immune to the shortage.

What they're saying: "What [the administration] is doing to undermine future generations of people, younger or not, who might be interested in working in a space like the government, it's really scary," Rep. Chrissy Houlahan (D-Pa.), a member of the House Intelligence and Armed Services committees, told me on stage.

- "We've just discouraged them completely from ever being involved."

Driving the news: The Cybersecurity and Infrastructure Security Agency cut more than 130 positions this month during broad government cuts of probationary workers.

- The cuts came after the agency placed six employees who worked on countering election disinformation on leave.

The big picture: People have typically come to the government because of its mission and the promise of a stable job with decent benefits. Now, that promise is being turned upside down, Houlahan said.

Zoom in: Chris Krebs, the director of CISA during the first Trump administration, also said on stage that the cuts at his former agency "resonate with me personally."

- Krebs noted that while it's natural for any new administration to come in and review the posture of each department and its agencies, CISA needs to be as strong as possible to battle intensifying cyber threats.

- "The [cyber] threats we're going to face tomorrow are tenfold what the threats were yesterday," he warned.

- Jen Easterly, former President Biden's CISA director, spearheaded an effort on LinkedIn this week to help match ex-CISA employees with interested employers.

Reality check: Federal cyber defenders told Krebs they're sticking around because of their dedication to the mission.

- "There are folks who work in the government that I have tried to hire into the private sector, and they're like, 'You know what? Money's great. This mission is better,'" he said.

What we're watching: The White House has asked agencies to draw up plans for massive reductions in force, with those plans due in about two weeks.

Catch the rest of my interviews, as well as a panel with the creators and showrunners of Netflix's new "Zero Day" series, here.

4. Catch up quick

@ D.C.

💼 Karen Evans is officially the executive assistant director for cybersecurity at CISA, putting her in charge of the agency's cyber mission. (CyberScoop)

📲 The Biden administration assured Congress that there were no significant disputes around company information sharing, even though officials were aware of the U.K.'s forthcoming demand that Apple create a back door in its iCloud encryption. (Washington Post)

@ Industry

🤖 DeepSeek is expanding across various products in China, including TVs, fridges and vacuum cleaners, as the country quickly adopts the AI model's technology. (Reuters)

@ Hackers and hacks

🇰🇵 The FBI has formally attributed the $1.5 billion crypto theft at Bybit to North Korea. (Associated Press)

📡 Several scam compounds around the Myanmar-Thailand border appear to be relying on services from Elon Musk's Starlink to stay online and continue running their prolific "pig-butchering" scams. (Wired)

👀 Last year's hack-and-leak at Disney was the result of just one employee accidentally downloading a malware-laced AI tool. (Wall Street Journal)

☀️ See y'all Tuesday!

Thanks to Megan Morrone for editing and Khalid Adad for copy editing this newsletter.

Decode key cybersecurity news and insights. With Sam Sabin.