Axios AI+

September 17, 2025

It's AI+ DC Summit day! I hope to see lots of you either in person or via the livestream starting at 2pm ET. We'll have convos with Sen. Mark Kelly (D-Ariz.), Sen. Ted Cruz (R-Texas), Scale AI CEO Jason Droege, Anthropic CEO and co-founder Dario Amodei, AMD chair and CEO Lisa Su and more.

Today's AI+ is 1,189 words, a 4.5-minute read.

1 big thing: What Americans don't want AI to do

Americans say they're comfortable with AI helping develop new medicines or forecast the weather, but most don't think it should play a role in relationships, religion or creative tasks, according to a new report released today from Pew Research Center.

Why it matters: Americans are starting to set boundaries for what they want to turn over to AI — and what they don't.

By the numbers: Americans see AI as a way to boost efficiency, but they remain wary of handing over decisions tied to values, relationships or democracy, Pew found.

- 74% of Pew respondents said AI should play a role in weather forecasting and 70% said it should help with detecting financial crime.

The other side: 66% said AI should not judge whether two people could fall in love.

- 60% oppose AI having a role in making decisions about how to govern the country.

The big picture: Since 2021, Pew has been asking participants how they feel about the increased use of AI in daily life.

- In 2023, 2024 and 2025, about half of Americans said they were more concerned than excited about it.

- That's up from 2021 and 2022 (before the launch of ChatGPT), when only 37% and 38%, respectively, said they were more concerned than excited.

- Only a quarter of Americans now say the benefits of AI are "high" or "very high." Of that 25%, "efficiency gains" was the most commonly cited benefit.

Between the lines: Participants in the study also said AI will weaken core human skills.

- 53% of Americans believe AI will erode people's creativity.

- 50% say it will hurt users' ability to form meaningful relationships.

Stunning stat: Although Americans told Pew they want to put boundaries on AI's role in our lives, more than half said they're "not at all confident" or "not too confident" they can detect whether content was created by a human or a bot.

- Most Americans say it's "extremely" or "very" important to be able to determine if content is AI-generated.

The intrigue: Chatbot interfaces are so open-ended that users who turn to the tools for research may be drawn into using them for more personal, creative or emotional questions.

- Anthropic studied how people use its Claude chatbot and found that "in longer conversations, counseling or coaching conversations occasionally morph into companionship — despite that not being the original reason someone reached out," per a report released in June.

What they're saying: Physicians and educators tell Axios that there are real risks in inviting chatbots deep into our personal, creative and religious lives.

- AI is "going to fundamentally change us as a human species," pediatric physician Dana Suskind told Axios in a July interview. "I think we need to be incredibly intentional, because we may end up in a place that we never imagined."

State of play: Americans are cautiously experimenting with AI, but the Pew data mirrors a growing skepticism of chatbots used for creative pursuits, therapy and intimate companionship, especially when it comes to teens and children.

- AI makers say their tools are "great for brainstorming," but new studies find that chatbots produce a more limited range of ideas than a group of humans would.

- The FTC is investigating AI chatbot safety, demanding details from seven companies — including OpenAI, Meta, Google and xAI — over potential harms to kids.

- After repeated reports of chatbots causing or intensifying delusional behaviors and suicidal thoughts, OpenAI says it's working to improve how its models recognize and respond to signs of mental and emotional distress.

2. Kelly's AI plan calls for tech firms to pay up

Sen. Mark Kelly is releasing an "AI for America" plan today that would require tech companies to contribute to a fund intended to help society adjust to the technology's impact on jobs, infrastructure and the environment.

Why it matters: The proposals are a sharp departure from the Trump White House's pro-growth AI Action Plan — but there's no clear path to see them enacted any time soon.

Driving the news: Kelly's boldest proposal calls for the establishment of a trust fund that could be used to, among other things, provide training to workers whose jobs are threatened by AI and invest further in infrastructure.

- "As a nation, we must seize this moment to build an AI boom for all, not another tech bubble for the few," Kelly says in the plan. "AI companies must be forces for strengthening, not straining, our workforce, energy infrastructure, and public resources."

- On the safety front, the plan also calls for "red team" tests of major models.

Zoom in: Kelly suggests companies could be required to pay for their use of public resources (like power, water and land), or pay additional taxes on profits from digital ad tools powered by AI, or contribute from "AI-based revenue windfalls."

Yes, but: As long as Republicans control both houses of Congress as well as the White House, Kelly's plans seem unlikely to gain traction.

What's next: Kelly is set to appear on stage later today at our AI+ Summit, where he will discuss the plan.

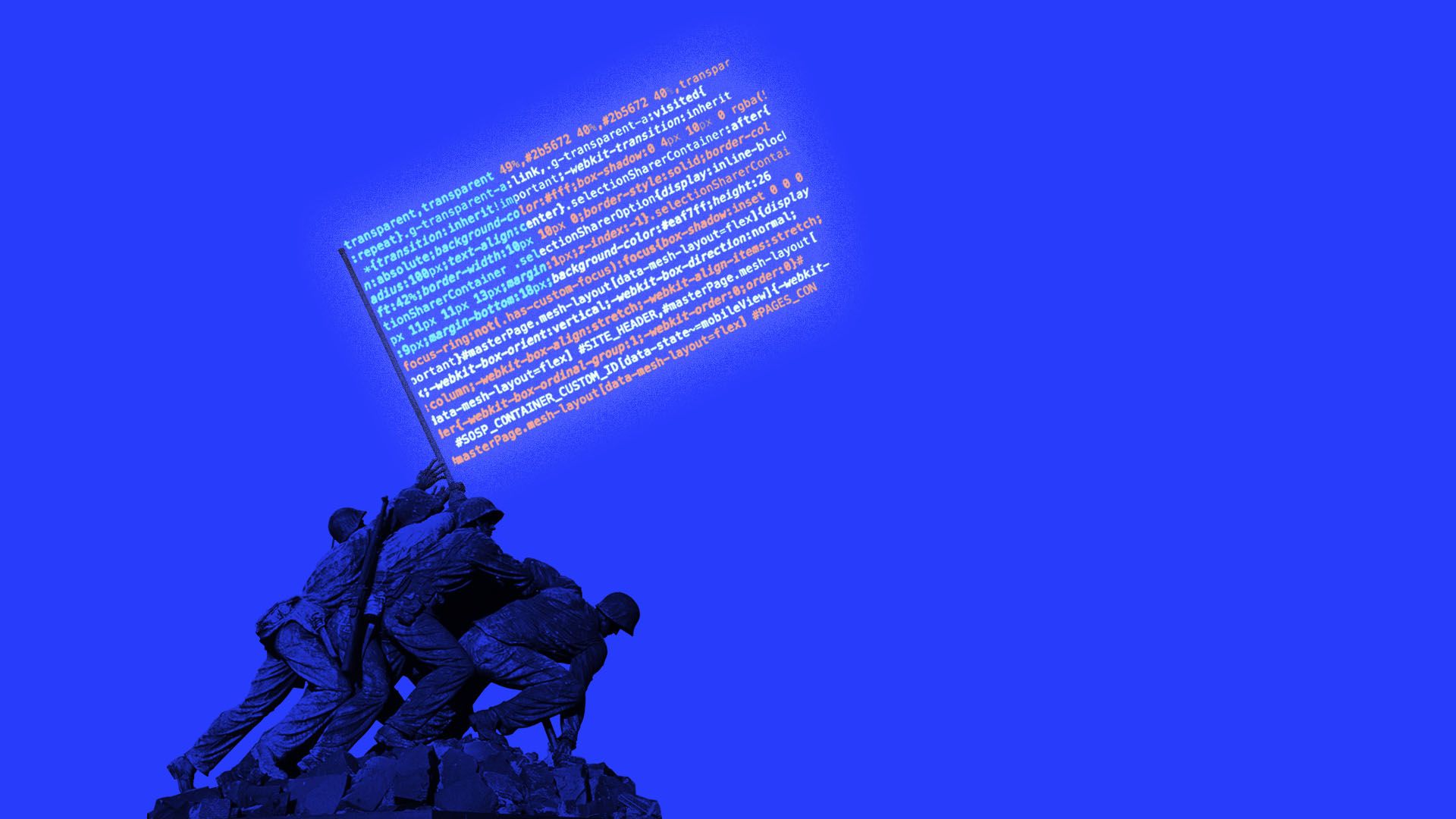

3. Public warms to AI weapons development

Americans are wary of the U.S. military developing AI weapons — until foreign rivals do it first, new polling from Gallup and the nonprofit, nonpartisan Special Competitive Studies Project finds.

Why it matters: The poll echoes the way U.S. defense officials frame the AI race with China and others: as a competition where not keeping up could put America at risk.

By the numbers: 48% of Americans say they oppose the development of AI-enabled weapons for use in military conflicts, compared with 39% who support it.

- But their opinions change once another country is believed to be working on those weapons: In that scenario, support for the U.S. building AI-enabled weapons jumps to 53%.

- 25% of those Americans say they "strongly support" such activities, up 13 points from the original share who said the same.

The big picture: The U.S. Department of Defense has been embracing AI in its future procurement plans. In July, the department awarded up to $200 million in contracts for AI work to OpenAI, Anthropic, Google and xAI.

4. Training data

- Parents of children who died by suicide or harmed themselves after using AI chatbots urged Congress to take action yesterday at a Senate Judiciary Committee hearing. (Axios)

- Exclusive: Scale AI has a new contract for up to $100 million with the Defense Department. (Axios)

- OpenAI has hired former xAI CFO Mike Liberatore to manage its spending on compute capacity. He will report to OpenAI CFO Sarah Friar. (CNBC)

- AI poses a new existential threat to photography, forcing photographers to adapt or lose their livelihoods. (Axios)

- Business Insider will start allowing staff to use AI to write the first drafts of articles. (Status)

5. + This

An engineer managed to turn a vape into a web server.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing.
