Axios AI+

November 01, 2023

Ina here, hoping you found the candy you really wanted in your kid's stash. (Really, just me?) Today's AI+ is 1,312 words, a 5-minute read.

1 big thing: What's in Biden's order — and what's not

Illustration: Maura Losch/Axios

The Biden administration's AI executive order has injected a degree of certainty into a chaotic year of debate about what legal guardrails are needed for powerful AI systems, Ryan Heath reports.

Why it matters: The U.S. will have some measure of government oversight of the most advanced AI projects. It won't have licensing requirements or rules requiring that companies disclose training data sources, model size and other important details.

The big picture: Biden's approach is more carrot than stick, but it could be enough to move the U.S. ahead of overseas rivals in the race to regulate AI.

- The EU is set to finalize comprehensive AI regulations this year, including fines to enforce compliance, but they won't be in effect until 2025.

Yes, but: Executive orders are less durable than legislation because future administrations can reverse them, and this one depends in large part on the goodwill of tech companies.

Between the lines: Any effective global governance will require constructive dialogue with China.

- That challenge is now in the lap of Vice President Kamala Harris, Biden's point person on AI policy, starting at an AI safety summit outside London that begins Wednesday.

Biden's plan tasks government agencies with examining the application of AI to their areas of responsibility and leaves them to work out the details.

- Its provisions will apply not just to the generative AI programs that captured the public imagination over the past year, but to "any machine-based system that makes predictions, recommendations or decisions," per an EY analysis.

Testing requirements are the most significant and most stringent provisions of the executive order.

- Developers of new "dual-use foundation models" that could pose risks to "national security, national economic security, or national public health and safety" will need to provide updates to the federal government before and after deployment — including testing that is "robust, reliable, repeatable and standardized."

- The National Institute of Standards and Technology will develop standards for red-team testing of these models by August 2024, while the Defense Production Act will be used to compel AI developers to share the results.

- The testing rules will apply to AI models whose training used "a quantity of computing power greater than 10 to the power of 26 integer or floating-point operations." Experts say that will exclude nearly all AI services that are currently available.

Yes, but: It's not clear what action, if any, the government could take if it's not happy with the test results a company provides.

Other key provisions of the order:

- The Department of Commerce will develop standards for detecting and labeling AI-generated content.

- Every federal agency will designate a Chief AI Officer within 60 days, and an interagency AI Council will coordinate federal action.

- The order promises to enforce consumer protection laws to prevent discrimination through AI and to enact unspecified "appropriate safeguards" in fields such as housing and financial services.

- The order commits to "ease AI professionals' path into the Federal Government" and offer expanded AI training to bureaucrats. It also asks the secretaries of state and homeland security to make it easier for AI talent to apply for and renew visas.

The order leaves out a number of rules that have featured in this year's public debates.

- There's no licensing regime for the most advanced models, a proposal embraced by OpenAI CEO Sam Altman — and there are no bans on the highest-risk uses of the technology.

- The order does not mandate the release of details about training data and model size, which many experts and critics argue is essential for understanding the technology and anticipating its potential harms.

- There's no guidance around how copyright or other forms of intellectual property law will apply to works created with or by AI — that is now left to courts to decide.

The bottom line: Unless a fractious Congress can somehow unite on this contentious issue — perhaps around a narrowly defined problem, like AI use in elections and campaign materials — Biden's order is likely to be the only AI law of the land in the U.S. for a long time.

2. What relying on AI red teaming leaves out

Illustration: Annelise Capossela/Axios

Policymakers are increasingly turning to ethical hackers to find flaws in artificial intelligence tools. But some security experts fear they're leaning too hard on these red-team hackers to address all AI safety and security problems, Axios' Sam Sabin reports.

Why it matters: Red teaming — where ethical hackers try breaking into a company or organization — is a major touchstone of the Biden administration's new AI executive order.

- Among other things, the order calls on the National Institute of Standards and Technology to develop rules for red-team testing and calls on certain AI developers to submit all red-team safety results for government review before releasing a new model.

- The order is likely to become a policy roadmap for regulators and lawmakers looking for ways to properly safety-test new AI tools.

Catch up quick: Policymakers and industry leaders have been rallying around red teaming as the go-to practice for securing and finding flaws in AI systems.

- The White House and several tech companies backed a DEF CON red-teaming challenge in August, when thousands of hackers tried to get AI chatbots to spit out things like credit card information and instructions on how to stalk someone.

- The Frontier Model Forum, a relatively new industry group backed by Microsoft, OpenAI, Google and Anthropic, also released a paper in October laying out its own standard approach to AI red teaming.

- Last week, OpenAI established a preparedness team that will use internal red teaming and capability assessments to mitigate so-called catastrophic risks.

What they're saying: "The emergence of AI red teaming, as a policy solution, has also had the effect of side-lining other necessary AI accountability mechanisms," Brian J. Chen, director of policy at Data & Society, tells Axios.

- "Those other mechanisms — things like algorithmic impact assessment, participatory governance — are critical to addressing the more complex, sociotechnical harms of AI," he added.

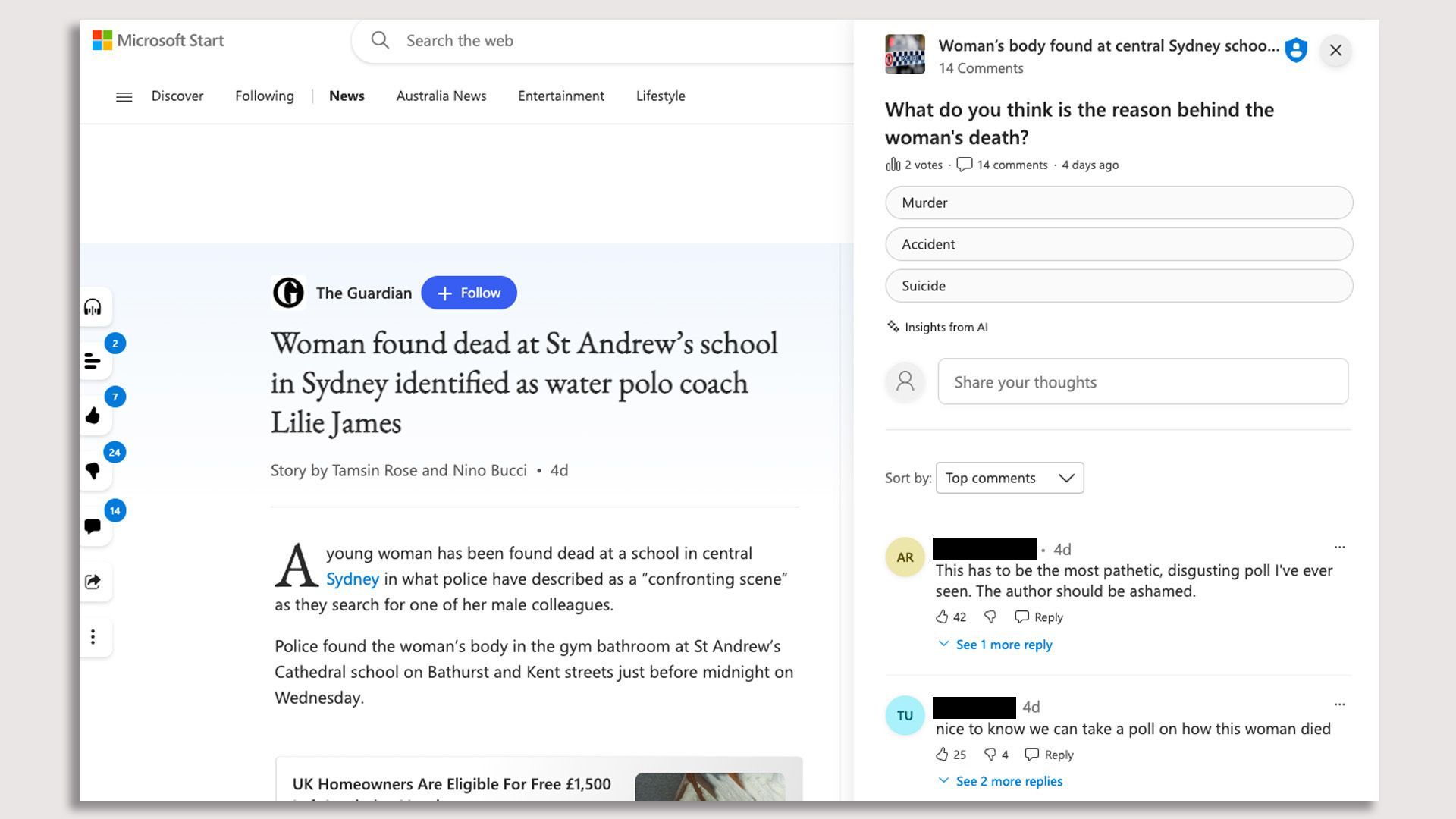

3. Guardian rips Microsoft for AI-generated poll

Screenshot of the poll, which was removed Monday, Oct. 31.

The Guardian Media Group is demanding that Microsoft take public responsibility for running a distasteful AI-generated poll alongside a Guardian article about a woman found dead at a school in Australia, according to a letter from Guardian CEO Anna Bateson to Microsoft president Brad Smith obtained by Axios' Sara Fischer.

- The poll, which ran within Microsoft's curated news aggregator platform, Microsoft Start, asked readers to choose the cause of death for the woman featured in the article.

Why it matters: Microsoft did remove the poll, but the damage was already done.

- In the poll's comments section, readers slammed The Guardian and the article's author, who they assumed were responsible for the blunder.

Details: "This is clearly an inappropriate use of genAI by Microsoft on a potentially distressing public interest story, originally written and published by Guardian journalists," Bateson wrote.

Between the lines: Bateson urged Microsoft to add a note to the poll, arguing that there's a strong case for Microsoft to take "full responsibility for it."

- She also asked for assurance from Microsoft that it will not apply "experimental technologies on or alongside Guardian licensed journalism" without the Guardian's explicit approval.

- Microsoft didn't immediately respond to a request for comment.

What to watch: Following an embarrassing publishing experiment from CNET earlier this year, more media companies are including disclosures of the use of AI in their editorial products.

4. Training data

- Senate Majority Leader Chuck Schumer will convene AI experts later today to brainstorm how to address the technology's social impacts, Axios Pro's Maria Curi reports.

- AI pioneer and Google Brain founder Andrew Ng says Big Tech is exaggerating the extinction danger AI poses to get the kind of regulation it favors. (Australian Financial Review)

- Meanwhile, the head of Google DeepMind pushed back on the idea that his company, OpenAI and others are fear-mongering. (CNBC)

- A news media trade group says generative AI models are relying heavily on news content for training. (NYT)

- Google reached a settlement with Match Group over the dating app company's complaints about Google Play app store practices. (TechCrunch)

5. + This

The folks at security firm Vivint took urban legends from all 50 states and then used Midjourney to bring each hometown Halloween myth to life.

Thanks to Megan Morrone and Scott Rosenberg for editing this newsletter.

Sign up for Axios AI+

Scoops on the AI revolution and transformative tech, from Ina Fried, Madison Mills, Ashley Gold and Maria Curi.