Axios AI+

May 14, 2026

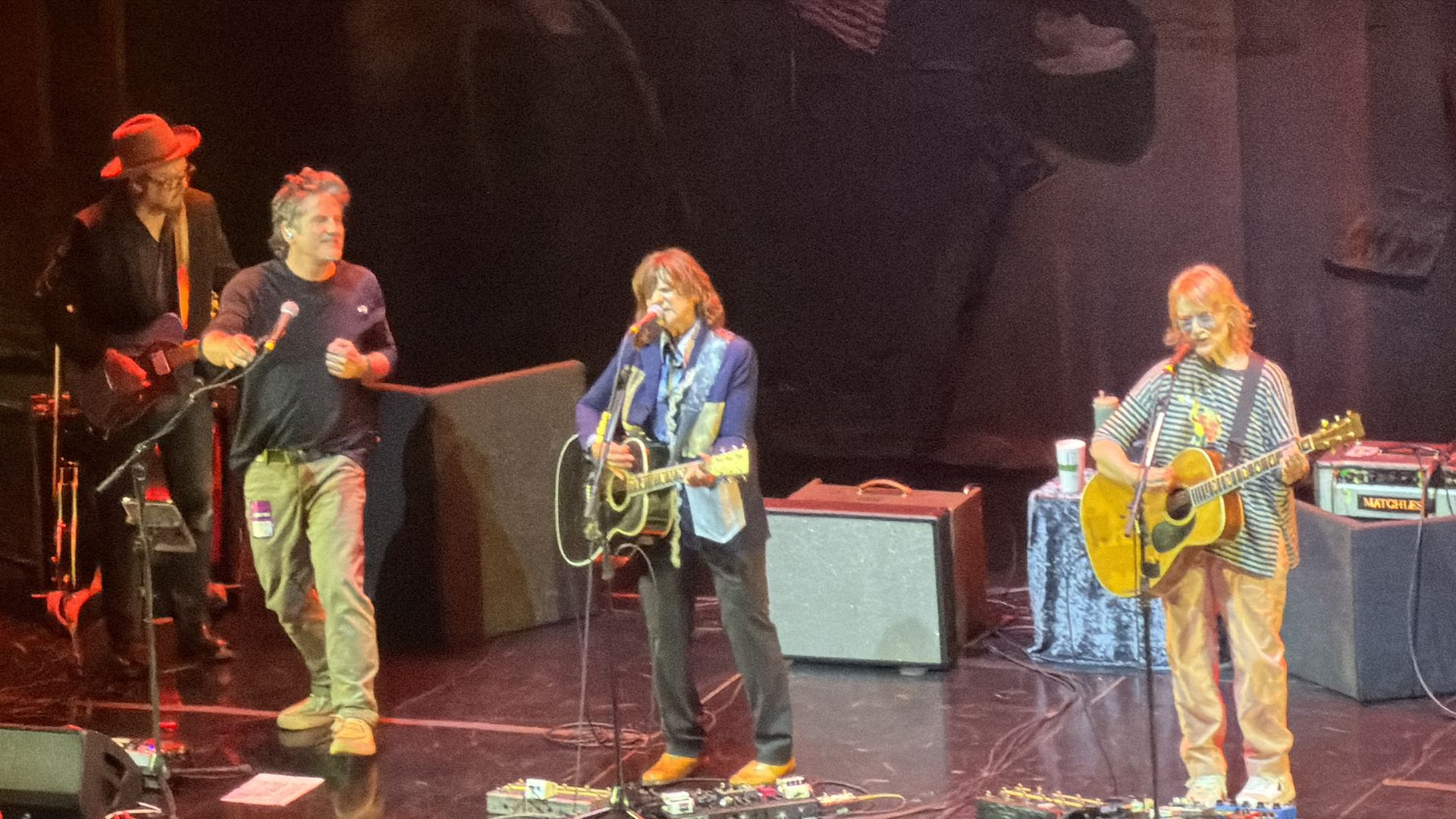

Ina here. It's been such a treat to see the Indigo Girls the last two nights at the newly refurbished Castro Theatre, though I may be a little sleepy in court today for closing arguments in Musk v. Altman. Today's AI+ is 1,093 words, a 4-minute read.

1 big thing: AI hacking boom still needs humans

Anthropic and OpenAI's cyber-capable AI models may still require significant human expertise to operate effectively, according to new findings from users testing the systems in real-world environments.

Why it matters: The next phase of AI-powered cybersecurity may depend less on fully autonomous hacking than on how well humans can direct, validate and operationalize increasingly powerful models.

The big picture: When Anthropic unveiled details about Mythos Preview, it warned that the model was so powerful that it found tens of thousands of bugs spanning nearly every operating system.

- Third-party testing suggests that OpenAI's GPT-5.5-Cyber is just as powerful as Mythos at finding bugs and writing exploits.

- Major companies and governments around the world have been clamoring to get their hands on these models to understand what they'll be up against once similar capabilities fall into the hands of attackers.

Driving the news: Several early adopters of Mythos and GPT-5.5-Cyber shared this week what they found while testing the seemingly revolutionary models.

- Palo Alto Networks told Axios it found 75 bugs using both the Anthropic and OpenAI models, vs. the 5-10 bugs it usually discovers each month. Researchers also found the models were increasingly capable of linking seemingly low-severity vulnerabilities into workable attack chains.

- Microsoft said Tuesday its new agentic security system, which runs on several frontier and distilled models, found 16 new vulnerabilities in the Windows networking and authentication stack. Microsoft also warned that AI tools are likely to increase the overall volume of discovered vulnerabilities over time, creating additional pressure on defenders to triage and patch flaws more quickly.

- Cisco this week released "Foundry Security Spec," an open-source blueprint for how organizations should think about using advanced AI models.

- XBOW, an AI-powered penetration testing startup, said Mythos is "extremely powerful for source code audits" in a blog post Tuesday detailing its internal tests.

Reality check: Vendors consistently found that the models performed best when paired with experienced security researchers who could validate findings, guide workflows and distinguish exploitable vulnerabilities from noise.

- XBOW found that Mythos was "good, but less powerful, at validating exploits" and that the model could be "too literal and conservative," sometimes overstating the practical significance of its findings.

- Palo Alto Networks, which has been working with Mythos, Opus 4.7 and GPT-5.5-Cyber, saw a false positive rate of about 30% across its products — although that rate dropped as the company trained the model on the environment it was searching.

2. Anthropic tightens Claude limits

Anthropic is putting new limits on what paying customers can do with their subscriptions, giving rival OpenAI an opening to lure power users to Codex.

Why it matters: The fight shows that "all-you-can-eat" AI subscriptions may not survive the agent era, where software can burn through computing resources far faster than humans ever could.

Driving the news: Anthropic announced that it's bringing back support for outside agent tools on paid Claude plans. But it is putting that usage behind a separate credit meter.

- Subscribers will now get a new monthly credit that they can use with third-party harnesses like OpenClaw.

- Anthropic says the new changes should support the ways most people use Claude.

What they're saying: Anthropic's changes didn't go over well.

- Claude Code product manager Noah Zweben's X post about the new rules drew a wave of critical replies, with respondents calling the changes "gaslighting" and claiming to be switching to Codex.

The intrigue: OpenAI is taking the opposite tack, at least for now. CEO Sam Altman announced on X that OpenAI is giving new business customers two months of free Codex usage.

Zoom in: The industry appears to be rediscovering a lesson from earlier eras of computing: humans have built-in limits to how much data they can consume, while automated workloads can explode usage.

- A human might send dozens, or perhaps hundreds, of prompts a day, while an autonomous coding agent can generate thousands of requests, run tests continuously, browse the web, and recursively call models.

The other side: Businesses are finding that AI agents can lead to hefty bills.

- ServiceNow and Uber are among the companies that have already burned through their AI token budgets for the entire year, per The Information's Laura Bratton.

What we're watching: Every AI provider faces the same economics and will eventually need to move away from unlimited use.

- Anthropic has been among the more aggressive in restricting use because it has been the top choice for coders, who use agents the most, and it has struggled to maintain enough compute resources.

3. Leo sets Catholics on collision course with AI

Pope Leo XIV is expected to sign his first encyclical as soon as tomorrow, positioning AI as the defining moral and labor challenge of a new industrial revolution.

Why it matters: The document, reportedly titled "Magnifica Humanitas" ("magnificent humanity"), would become the Catholic Church's clearest attempt yet to place human dignity, labor rights and ethics at the center of the AI race.

- Catholic and European outlets are reporting that Leo is poised to sign the AI encyclical on the anniversary of "Rerum Novarum" (1891), Pope Leo XIII's foundational industrial-era labor encyclical.

- The encyclical will focus specifically on AI's impact on "people and working conditions," framing it as Leo XIV's effort to modernize Catholic social teaching for the AI era, per the French newspaper Le Monde.

- Other reports suggest "Magnifica Humanitas" will argue technology must remain subordinate to the human person — not the reverse — and that AI systems should protect workers, creativity and moral agency.

The Vatican has not commented, but it has implemented formal AI guidelines and monitoring structures inside Vatican City.

4. Training data

- Scoop: A bipartisan group of House lawmakers is pressing the White House to move faster on AI cyber threats. (Axios)

- Elon Musk went to China with President Trump, even though the judge did not excuse him from his trial against Sam Altman. (NBC News)

- The U.S. has approved around 10 Chinese companies to buy Nvidia's H200 chips, but no deliveries have been made yet. (Reuters)

5. + This

It was incredible to see Joan Baez read poetry and sing with the Indigo Girls. Another highlight was Matt Nathanson joining in "Kid Fears" for the part once performed by Michael Stipe. (Bonus: Matt took the same bus we did going home and was super friendly with his many admirers.)

Thanks to Megan Morrone for editing this newsletter and Matt Piper for copy editing.
