Axios AI+

May 24, 2024

We're taking Monday off for Memorial Day — enjoy the long weekend and unofficial start of summer, and we'll see you again Tuesday! In the meantime, today's newsletter is 1,128 words, a 4-minute read.

1 big thing: Google AI summaries go off rails

The blowback to Google's AI Overviews is growing now that they are showing up for all U.S. users — and sometimes getting things glaringly wrong.

Why it matters: The search giant's addition of AI-generated summaries to the top of search results could fundamentally reshape what's available on the internet and who profits from it.

- Many users habitually use Google searches to check facts, particularly now that AI chatbots deliver sometimes suspect answers. Now these search results are subject to the same mistakes and "hallucinations" that plague the chatbots.

Driving the news: Google last week announced that it was making AI-generated summaries the default experience in the U.S. for many search queries.

- People quickly began highlighting scores of glaring errors.

- There was dangerously incorrect advice on what to do if you're bitten by a rattlesnake.

- In one summary, Google's AI suggested it had kids and served up their favorite recipe.

- Google seemed to have particular trouble with data about U.S. presidents — and, more troublingly, repeated the baseless conspiracy theory that former President Obama is Muslim.

- In another well-publicized example, Google's summary suggested using glue to keep cheese on pizza (apparently drawing inspiration from a Reddit post).

- Since most AI can't distinguish fact from satire, Google keeps reporting stories from The Onion at face value.

Context: Google has spent 25 years defending its reputation for informational integrity, devoting enormous resources to fine-tuning the accuracy of its search results.

- Its push into AI-generated results puts that reputational reserve at risk.

The big picture: Google's AI Overviews pose broad challenges for the business of publishing information online.

- Meanwhile, Google's shift to letting AI write its own answers to search queries means that the company could find itself treated more like a publisher, and no longer protected by Section 230 from lawsuits over its content.

Between the lines: While Google may work to clean up individual results, or choose to turn off AI summaries for various categories of results, that doesn't mean the product will get better.

- The web is filling up with AI-generated content, which itself is prone to inaccuracy, meaning any AI-generated summary could easily sweep up and elevate such content.

- At the same time, Google's changes could lessen the incentive to publish accurate information on the web.

The other side: In an interview with The Verge, Google CEO Sundar Pichai said, "If you put content and links within AI Overviews, they get higher clickthrough rates than if you put it outside of AI Overviews."

- "The examples we've seen are generally very uncommon queries, and aren't representative of most people's experiences," Google said in a statement to Axios. "The vast majority of AI Overviews provide high quality information, with links to dig deeper on the web."

Yes, but: For those of you worried about the accuracy of the AI summaries, you can turn them off — at least for now.

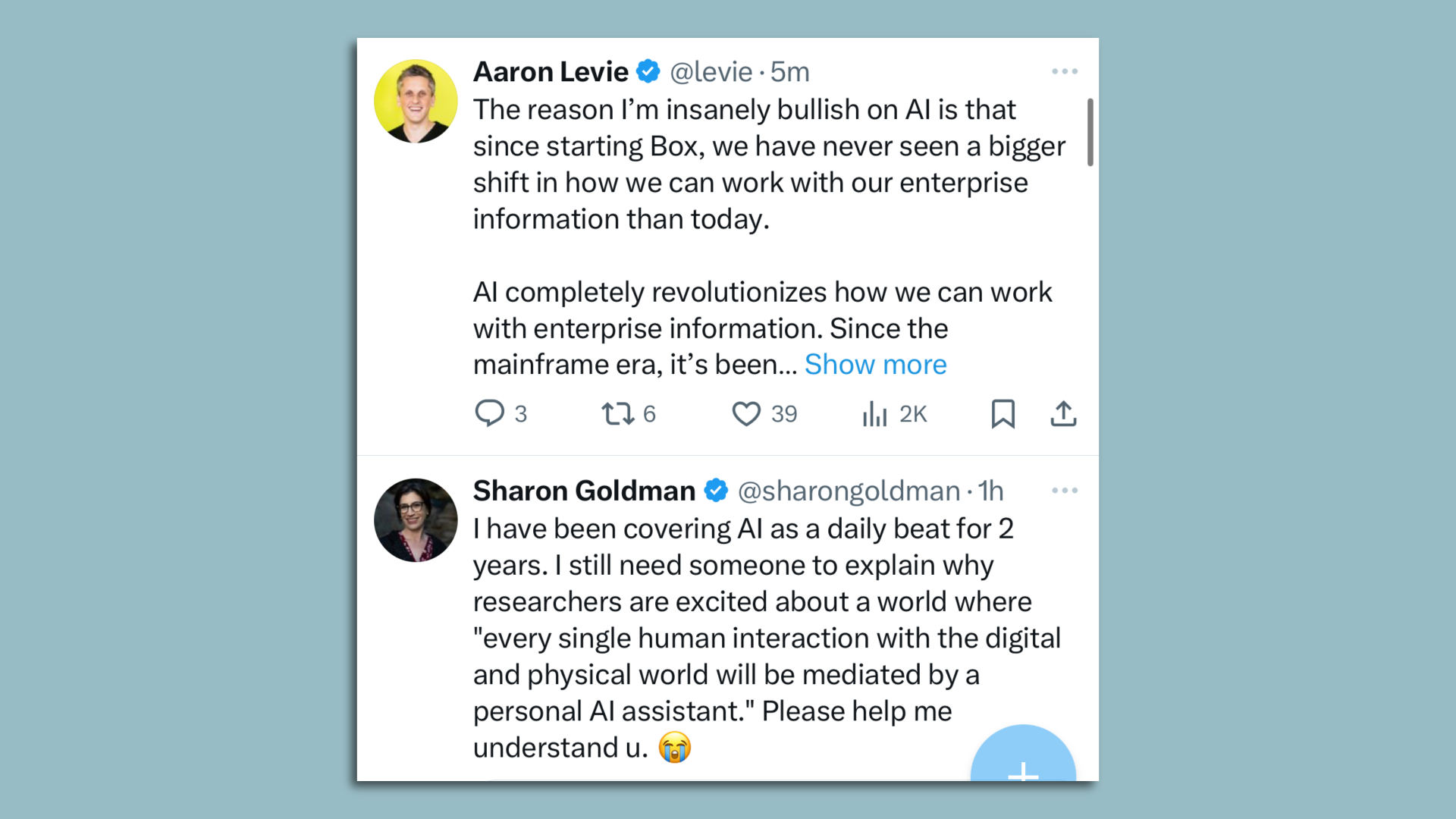

2. The peril and promise of AI in two tweets

Much of my work life is spent bouncing back and forth between those expressing optimism that generative AI will lead to a new golden era of human productivity and those who believe we are headed into a dystopian hellscape.

Zoom in: These two tweets, which appeared right next to each other in my feed on Wednesday, encapsulated that dichotomy perfectly.

- In the first one, Box CEO Aaron Levie lays out a compelling case for why he is "insanely bullish" on how generative AI will let companies make far better use of their data.

- The second post, an unrelated tweet from Fortune journalist Sharon Goldman, takes issue with the oft-touted notion that inserting AI into so many formerly human interactions is actually a worthwhile goal.

My thought bubble: I struggle myself, being both tantalized by the potential of generative AI and horrified by some of the ways it could be — and often is being — used.

3. Anthropic opens AI's black box

Researchers at Anthropic have mapped portions of the "mind" of one of their AIs, the company reported this week, in what it called "the first ever detailed look inside a modern, production-grade large language model."

Why it matters: Even the scientists who build advanced LLMs like Anthropic's Claude or OpenAI's GPT-4 can't say exactly how they work or why they provide a particular response — they're inscrutable "black boxes."

- The new work from Anthropic raises the prospect that generative AI programs like ChatGPT might some day be much easier to understand and control — making them both more useful and, with luck, less dangerous.

How it works: Using a technique called dictionary learning, Anthropic's team found a way to identify sets of neuron-like "nodes" in their LLM that the program associated with specific "features."

- The features were places, things, concepts — "a vast range of entities like cities (San Francisco), people (Rosalind Franklin), atomic elements (Lithium), scientific fields (immunology), and programming syntax (function calls)," Anthropic said in a post about the project.

- Features could be located near related terms and ideas, they found: "Looking near a 'Golden Gate Bridge' feature, we found features for Alcatraz Island, Ghirardelli Square, the Golden State Warriors, California Governor Gavin Newsom, the 1906 earthquake, and the San Francisco-set Alfred Hitchcock film 'Vertigo.'"

Between the lines: Once particular features had been identified, the researchers could directly manipulate them, "artificially amplifying or suppressing them to see how Claude's responses change," per Anthropic.

- Instead of having to teach or retrain the model or give it feedback, they could directly adjust its dials.

- Anthropic, which aims "to ensure transformative AI helps people and society flourish," sees this work as a foundation for building safer AI.
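
For the curious: a toy Python sketch of what "adjusting the dials" might look like in miniature. This is not Anthropic's code or method. The feature names, the four-number "activation," and the helper functions `reconstruct` and `steer` are all made up for illustration, and the sketch skips the dictionary-learning step that discovers the features in the first place.

```python
# Toy illustration only. Real models have far larger activations, and their
# features are learned from data rather than written by hand (assumption:
# each feature is a direction, and an activation is a weighted sum of a few).
FEATURES = {
    "golden_gate_bridge": [1.0, 0.0, 0.0, 0.0],
    "immunology": [0.0, 1.0, 0.0, 0.0],
    "function_call": [0.0, 0.0, 1.0, 0.0],
}


def reconstruct(coeffs):
    """Rebuild an activation vector as a weighted sum of feature directions."""
    dim = len(next(iter(FEATURES.values())))
    activation = [0.0] * dim
    for name, weight in coeffs.items():
        for i, value in enumerate(FEATURES[name]):
            activation[i] += weight * value
    return activation


def steer(coeffs, name, scale):
    """Return a copy of coeffs with one feature amplified or suppressed."""
    steered = dict(coeffs)
    steered[name] = steered.get(name, 0.0) * scale
    return steered


# A hypothetical activation in which two features are active.
coeffs = {"golden_gate_bridge": 0.5, "immunology": 2.0}
amplified = reconstruct(steer(coeffs, "golden_gate_bridge", 10.0))
suppressed = reconstruct(steer(coeffs, "golden_gate_bridge", 0.0))
```

Turning one coefficient up or down changes only that feature's contribution to the activation, which is the rough intuition behind amplifying or suppressing a feature without retraining the model.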

Yes, but: The research is expensive — and each LLM may need to have its features catalogued independently.

- The Anthropic project identified "millions" of features in the Claude Sonnet model they studied, but the researchers write that's just a fraction of the whole model.

The bottom line: As generative AI becomes easier to directly program, its guardrails might also become more reliable.

- Then again, in the wrong hands, the same dials that make the models safer could be used to amp up their capacity for harm.

4. Training data

- Facebook parent Meta and Google parent Alphabet have held talks about licensing major studio content to train AI video models, sources tell Bloomberg, noting that both Netflix and Disney have said no.

- Meta is considering a paid version of its AI service; Microsoft, Google, OpenAI and Anthropic all have $20-per-month subscription options for consumers. (The Information)

- The White House is asking tech companies to voluntarily help stop sexually explicit AI deepfakes. (AP)

- OpenAI says it will release most of its former employees from the non-disparagement agreements tied to equity that they previously signed. (Bloomberg)

5. + This

This is both an amazing way to win a Scrabble tournament and a fascinating analogy to how large language models work.

Thanks to Megan Morrone and Scott Rosenberg for editing this newsletter and to Bryan McBournie for copy editing it.

