Axios AI+

March 27, 2025

Hello from LA, where I am in town for tonight's GLAAD Media Awards. Among the nominees is an MSNBC segment I did with Jonathan Capehart from the Paris Olympics, decoding the controversy around Algerian boxer Imane Khelif. Today's AI+ is 1,131 words, a 4.5-minute read.

1 big thing: Security teams embrace agentic AI

Cybersecurity teams are leaning into the new agentic world, experts say.

Why it matters: Agentic AI can reduce workload and boost response times, but if it misfires, it could expose systems to serious threats.

The big picture: While chatbots respond to prompts, agentic AI goes a step further and takes approved actions based on its own findings.

- As with any technological evolution, getting security teams to adopt AI takes time and education.

- Relying on new AI-enabled security tools also carries a unique risk: If an AI tool gets something wrong, it leaves an opening for spies and cybercriminals to break in.

Driving the news: Microsoft unveiled plans Monday to start previewing 11 new AI agents in Security Copilot next month.

- CrowdStrike added agentic AI to its security tools last month, and Trend Micro rolled out autonomous agents and its own AI brain to customers last year.

Flashback: Just two years ago, major corporations were blocking employees from even opening ChatGPT for fears of data leaks.

Yes, but: The tide has turned, and security is one of the clearest use cases for generative AI, especially since the industry has long faced a shortage of available workers and high burnout rates.

- More than 70% of CISOs said in a survey last summer that their organizations are considered either "innovators," "early adopters" or "early majority" adopters of new AI technologies, which could be influencing their newfound trust in AI tools.

- Half of the CISOs in that same survey also said they had developed some AI use cases or were piloting potential new AI projects for their teams.

Between the lines: Many security teams just want agentic AI to help sort through the thousands of threat notifications they receive daily and determine which ones are legitimate threats to their organizations.

- When Microsoft customers first started playing around with its Security Copilot, they would stick to prescriptive use cases, like summarizing a recent incident, Dorothy Li, corporate VP of Microsoft Security Copilot, told Axios.

- As they've become more comfortable, some users now let Copilot automate as much of their workflow as possible, she added, which inspired Microsoft to bring autonomous agents into the mix.

- Many of those use cases involved responding to phishing alerts and notifications about vulnerabilities across the various tools in their stacks.

Zoom in: Last month, CrowdStrike added an agentic capability to its security-focused large language model that automatically triages notifications for customers' security operations teams.

- Once implemented, the new tool can eliminate more than 40 hours of manual work per week, CrowdStrike estimates.

- CrowdStrike tests its new agentic capabilities internally against its own analysts' findings to ensure the tools are accurate and don't take inappropriate actions before they're deployed.

- That testing is key to building trust with customers, who include security teams in major corporations, Elia Zaitsev, chief technology officer at CrowdStrike, told Axios.

- "Everything in the generative AI space, in particular, by pretty much every measurement I've seen, is being adopted quicker than any technology out there," Zaitsev said.

Reality check: A healthy amount of skepticism about AI's promise for security teams still remains, Zaitsev added.

- "People need to see those hard, quantifiable metrics," he said. "They need to see there's real ROI."

What we're watching: Now that companies are giving AI the green light in their systems, expect even more cyber vendors to make splashy announcements about their own agentic capabilities.

2. ChatGPT opens flood of Ghibli-style portraits

Social media users spent a good chunk of yesterday creating Studio Ghibli-style portraits using ChatGPT's new native image-generation capabilities.

The big picture: The Ghibli fest is dividing admirers of the Japanese animation studio, with some awed by the visuals and others dismissing them as "AI slop."

Driving the news: OpenAI on Tuesday rolled out the images feature in GPT-4o, making it available to users on the Plus, Pro and Team subscription tiers. An initial plan to provide it to free users was put on hold, per CEO Sam Altman, because of higher-than-expected demand.

- "We trained our models on the joint distribution of online images and text, learning not just how images relate to language, but how they relate to each other," OpenAI said in a blog post.

- OpenAI stated that it will "block requests for generated images that may violate our content policies, such as child sexual abuse materials and sexual deepfakes."

Between the lines: The Ghibli-styled images are possible because OpenAI has loosened rules that previously limited the creation of images using distinctive styles, along with some celebrity likenesses, company names and logos.

- ChatGPT still won't "generate an image of Taylor Swift," but it will offer to match a celebrity's "vibe" or "aesthetic."

- "Our goal is to give users as much creative freedom as possible," OpenAI said in a statement to Axios. "We continue to prevent generations in the style of individual living artists, but we do permit broader studio styles — which fans have used to generate and share some truly delightful and inspired fan creations. We're always learning from real-world use and feedback, and we'll keep refining our policies as we go."

State of play: Users across social media platforms are using the native image feature to convert old photos to Studio Ghibli-style images.

- One person on X said: "tremendous alpha right now in sending your wife photos of yall converted to studio ghibli anime."

- A person on Threads said that he was in "love with ChatGPT's new image editing feature" and that he "can turn all my family photos into Ghibli portraits."

- Another on Bluesky recreated stills from the "Star Wars: The Last Jedi" movie.

Yes, but: Not everyone is pleased.

- One Threads poster said "shame on you" if you're using AI to generate "Studio Ghibli looking slop."

- Another person said: "Some of you all ain't ever got a Studio Ghibli copyright strike and it shows. Also Ai Art is SLOP."

Flashback: Shown a demo of an early AI system back in 2016, Studio Ghibli founder Hayao Miyazaki called technology that can draw like humans "an awful insult to life."

3. Training data

- Sources say OpenAI is "close" to finalizing its latest funding round, a $40 billion raise led by SoftBank. Others in talks to participate include Magnetar Capital, Coatue Management, Founders Fund and Altimeter. (Bloomberg)

- OpenAI will adopt a protocol developed by rival Anthropic that is designed to standardize how AI systems connect to data sources. (TechCrunch)

- A federal judge is allowing the New York Times' copyright suit against OpenAI to proceed. (NPR)

- The military is trying to figure out the right balance of software vs. hardware for modern warfighting. (Axios)

4. + This

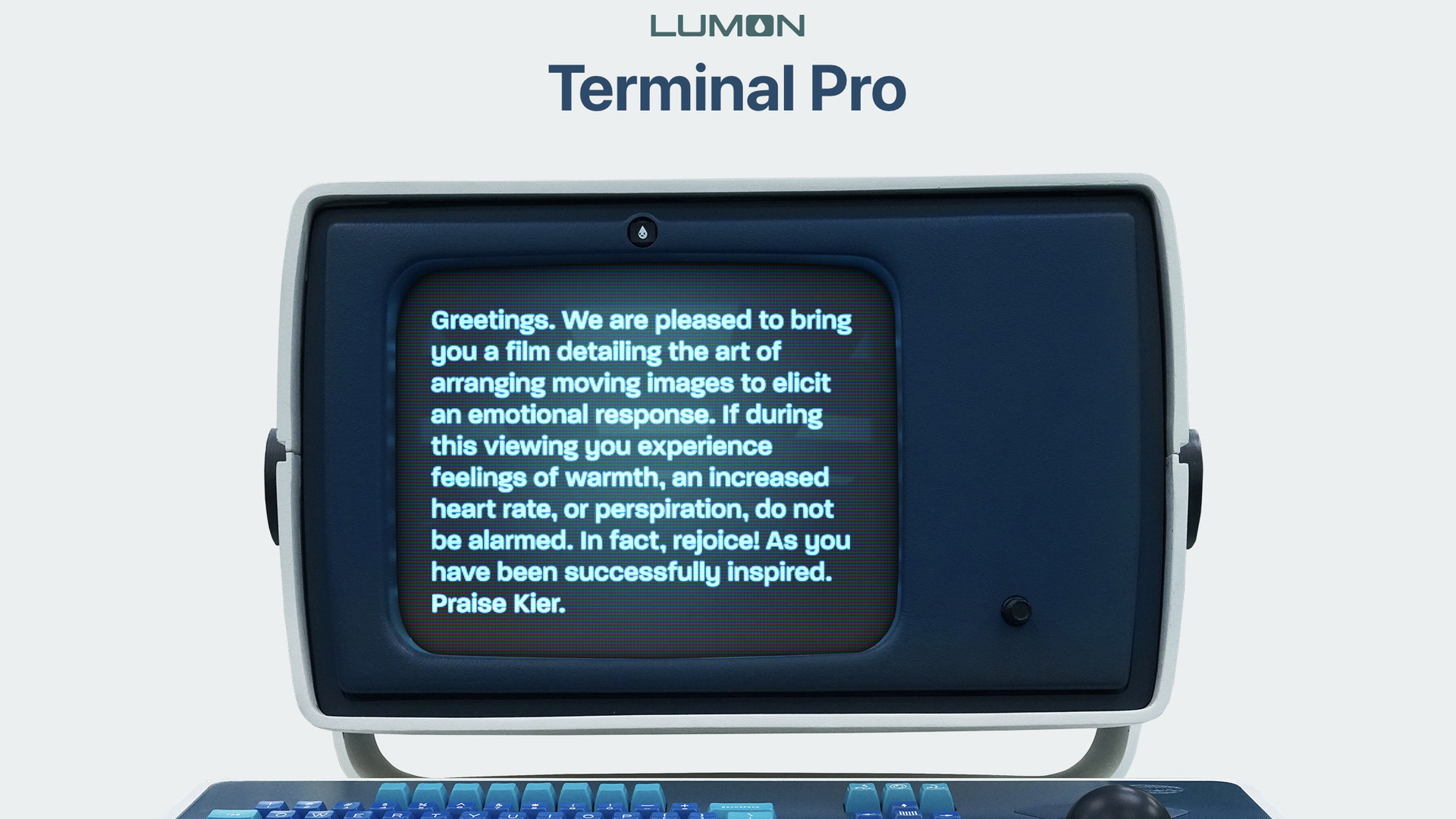

Apple added the fictional Lumon Terminal Pro to its online store, but sadly for "Severance" fans, it's not actually for sale, just a plug for the Apple TV+ show.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing.
