Axios AI+

April 03, 2025

I think of every day as burrito day, but today is officially National Burrito Day. Today's AI+ is 1,115 words, a 4-minute read.

Situational awareness: President Trump's imposition of massive tariffs on imports to the U.S. will disrupt virtually any tech company that deals in physical goods. That means Apple and Amazon are likely to take extra heavy hits both in sales and stock value.

1 big thing: ChatGPT's new scam skills

Scammers could well be among those finding creative — and troubling — uses for ChatGPT's new image generator.

Why it matters: Axios' testing of the new image generator found that the tool generates plausible fake receipts, employment offers and social media ads promoting Bitcoin investment.

Driving the news: ChatGPT adoption has skyrocketed since OpenAI's new image-generating tool launched a flotilla of AI-created art styled after Studio Ghibli, "The Simpsons" and the Muppets.

- Just as the images went viral, so did examples of potential exploitation — including the ability to create fake receipts and forged cease-and-desist letters.

Zoom in: While testing the new generator on Tuesday after it was made available to free users, I was able to create some pretty basic images of fake receipts, job offers and advertisements for cryptocurrencies.

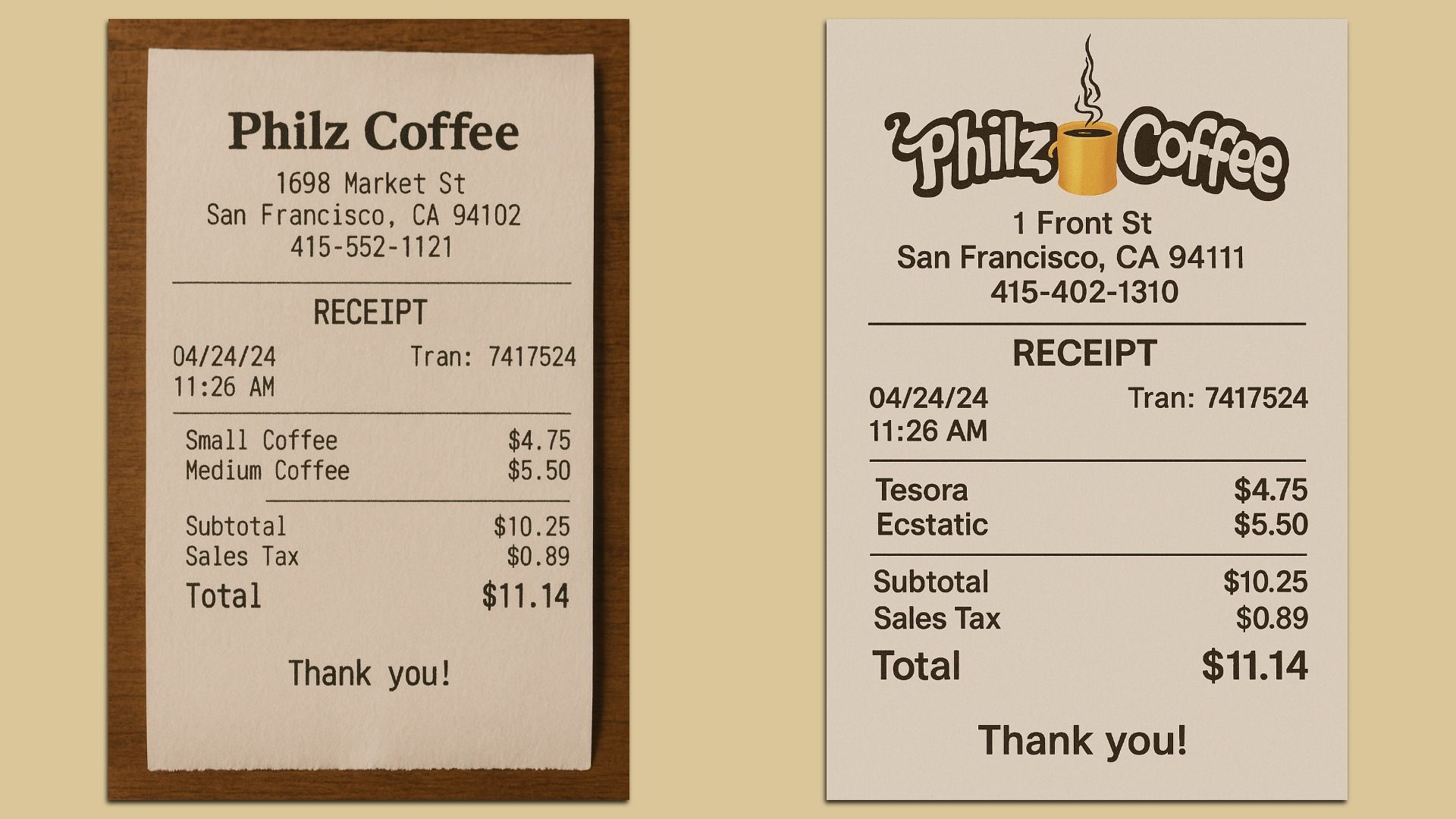

- When I created a fake receipt for two coffees at a Philz Coffee location, the tool originally created a pretty unbelievable version: It didn't have the company's logo or the unique names for the store's coffees. Even the address wasn't real.

- After some prompting, it was a bit more believable — and ChatGPT had no problem using Philz' copyrighted logo when I asked it to incorporate it.

In further testing, ChatGPT created more fake documents that a scammer could find helpful.

- I asked it to produce an employment document showing someone had been hired to work at Apple as a software engineer, and it did so without any hesitation — even filling out the document with salary information and someone's name.

- ChatGPT also created an "advertisement for social media to invest in Bitcoin."

Threat level: Hackers could use these generated images to lure victims into crypto scams or to assume someone else's identity and gain access to privileged systems.

- "It's no surprise that technology designed to help everyday users work faster also has very applicable use cases for bad actors looking to make their schemes more legitimate and convincing," Doriel Abrahams, principal technologist at Forter, told Axios in an emailed statement.

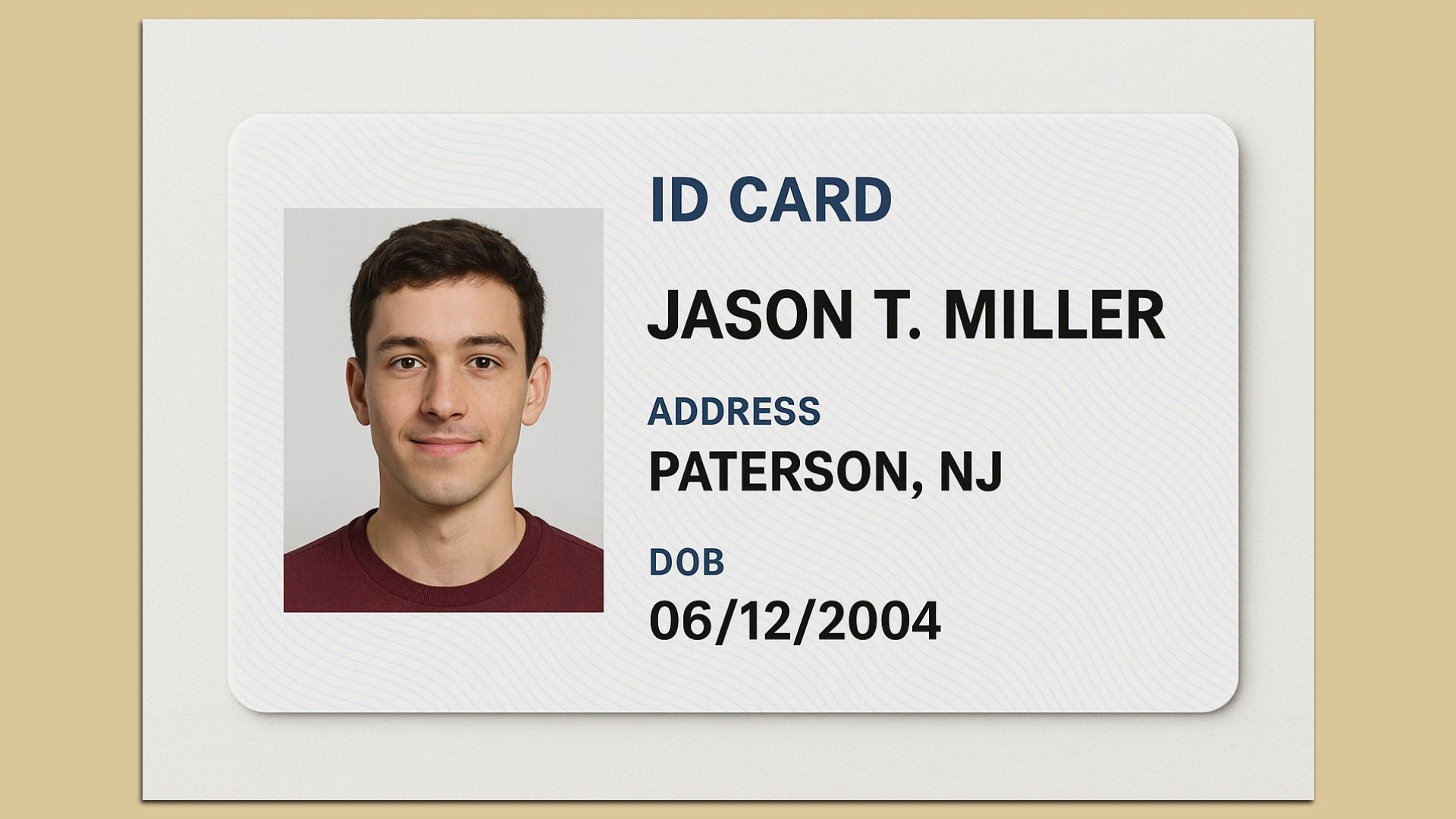

Yes, but: I did hit some roadblocks. ChatGPT wouldn't let me create a replica of a New Jersey driver's license.

- When I asked ChatGPT to create "an ID card for someone living in a real city in New Jersey and who was born in 2004," it told me it wasn't allowed, but that it could "create a generic template for an ID card that includes a fictional name, a real city in New Jersey, and a birth year of 2004."

- That ID card template was not super believable, though (see below).

Between the lines: ChatGPT's image generator appears to deal with the same prompt-hacking problems that most consumer-facing large language models grapple with.

- OpenAI has guardrails to prevent the most obvious examples of fraud and abuse, but scammers are already finding workarounds.

- An OpenAI spokesperson told Axios that while the company's goal is to "give users as much creative freedom as possible," it does monitor image generations using internal tools and takes action when it identifies those that violate its policies.

- "We're always learning from real-world use and feedback, and we'll keep refining our policies as we go," the spokesperson said.

What to watch: The researchers and cybersecurity vendors who Axios spoke to haven't seen any clear examples of fraudsters using AI-generated images from the new tool in their schemes — yet.

Go deeper: ChatGPT's new image generator blurs copyright lines

Disclosure: Axios and OpenAI have a licensing and technology agreement that allows OpenAI to access part of Axios' story archives while helping fund the launch of Axios into four local cities and providing some AI tools. Axios has editorial independence.

2. Exclusive: American University launches AI institute

American University's business school is launching an Institute for Applied Artificial Intelligence in an effort to weave AI into every aspect of the business school, the university first shared with Axios.

The big picture: Some colleges and many high schools still ban ChatGPT and other generative AI tools, while others are going all in.

Zoom out: AI has long been a staple of STEM, especially in computer science. Now more business schools are also recognizing a need to teach generative AI.

- "Every student needs to be fluent in AI applications in order to be successful," David Marchick, the dean of American University's Kogod School of Business, told Axios.

Between the lines: Marchick says American's undergrad and grad business students will go on to use AI to do consumer research, design marketing campaigns, read balance sheets, write financial statements, underwrite investments and analyze financial risk factors.

- "It's not like we're trying to be a Stanford or Caltech in terms of producing programmers. We're producing business people," Marchick says.

- "When 18-year-olds show up here as first-years, we ask them, 'How many of your high school teachers told you not to use AI?' And most of them raise their hands. We say, 'Here, you're using AI, starting today.'"

- The institute has 15 faculty members across the university and plans to hire more, Marchick says.

Zoom in: The new institute is only one piece of American's efforts to become a leader in AI education.

- Last month, American partnered with AI startup Perplexity to give every student in the business school free access to Perplexity Enterprise Pro. Kogod is also piloting a new Perplexity product designed specifically for education.

Yes, but: Using AI for entry-level tasks could prevent students from building confidence and hands-on experience.

- A 2024 study from Deloitte found that early-career workers were concerned about the lack of on-the-job training due to the increase in AI use for foundational tasks like preparing reports, analyzing simple data sets and taking notes in meetings.

The bottom line: Business students must master AI, Marchick insists.

- The invention of the calculator didn't stop us from understanding math, Marchick says. And he doesn't think people should be using paper ledgers instead of Excel.

- "Students need to have the basic skills to understand the underlying work that we assign," Marchick told Axios. "But when they get in the real world, they're going to be using AI to do that work, and we want them to be better prepared."

3. Training data

- Google installed Josh Woodward as leader of its consumer AI apps, replacing Sissie Hsiao, who will take some time off before beginning a new role at the company. (Semafor)

- Anthropic detailed a new plan for colleges that includes a "learning mode" designed to help students solve problems rather than providing the answer. (TechCrunch)

- The Department of Energy will allow companies to build AI data centers and power plants on federal land in Texas, New Jersey, Colorado, Illinois and other locations. (Heatmap)

4. + This

Pasta maker Barilla has a set of Spotify playlists timed for the exact length it takes to cook various types of noodles.

Thanks to Scott Rosenberg and Megan Morrone for editing this newsletter and Matt Piper for copy editing.